$ cat post/a-shell-i-once-loved-/-a-system-i-built-by-hand-/-we-were-on-call-then.md

a shell I once loved / a system I built by hand / we were on call then

Title: Y2K Redux: A Night of Sleepless Troubleshooting

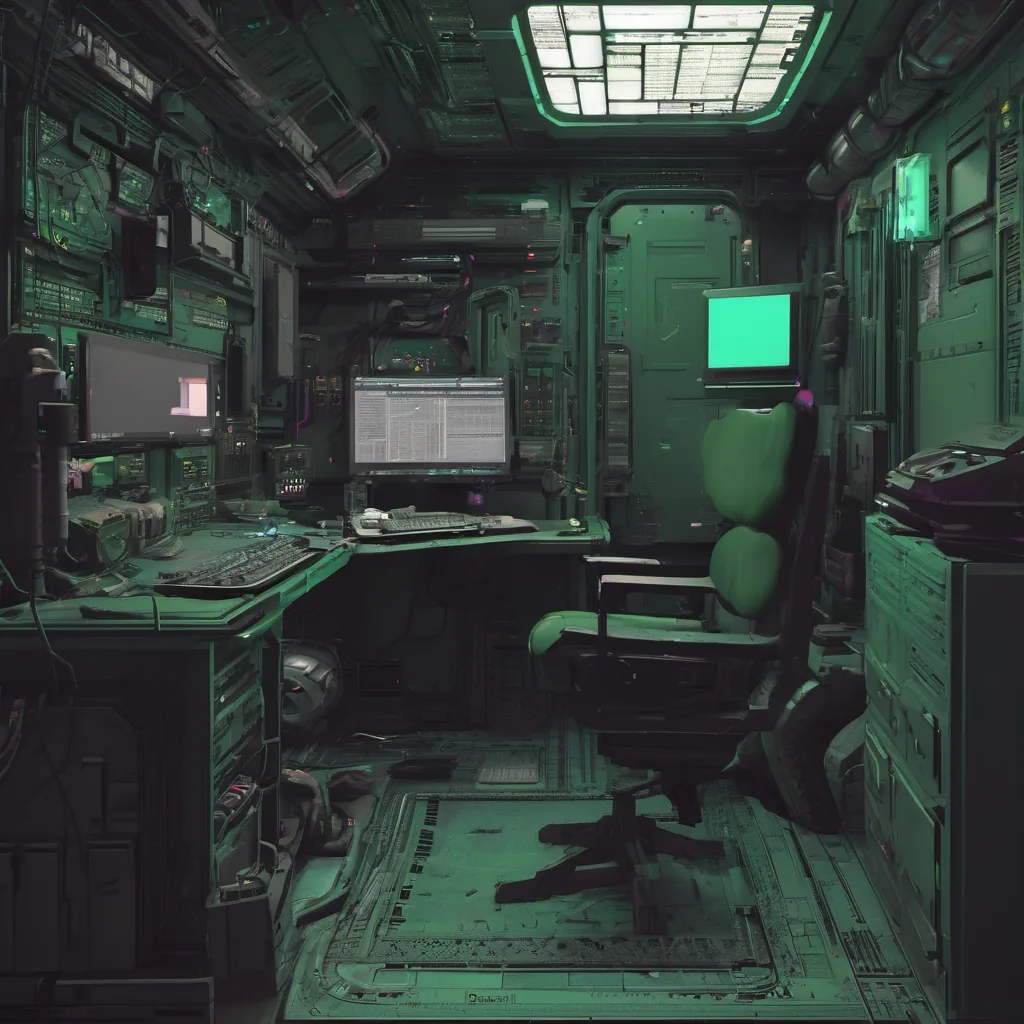

December 31st, 1999. The air was thick with anticipation and stress as the countdown to the new year entered its final hour. I had been working on a small Linux-based file server for our company’s financial department, but this evening felt more like an all-nighter at a tech event than just another day in ops.

The server handled critical files that would need to be accessible 24/7—no pressure, right? My colleagues and I had been testing it thoroughly over the past few weeks, running performance benchmarks and stress tests. But we knew that no matter how well-prepared you are for a disaster, there’s always an unexpected issue lurking around.

As midnight approached, I was on my third cup of coffee, eyes glued to the server logs, making sure everything stayed green. The clock ticked past 12:00 AM, and with it came the ominous feeling that something might go wrong. Just as I started to relax a bit, an alert popped up: “Server load is spiking.” Panic set in.

I quickly logged into the server via SSH and saw that the load average was hitting 15. That’s far too high for what we were running, like trying to watch Netflix on a dial-up connection while also playing a video game. Something on the file server was clearly misbehaving, so I began digging through the logs.
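As an illustration only, that first check can be sketched in a few lines of Python today. The helper name `load_is_high` and the rule of thumb (load above the number of CPUs) are assumptions for this sketch, not anything from the original setup.

```python
import os


def load_is_high(load_1min: float, cpu_count: int, factor: float = 1.0) -> bool:
    """Return True when the 1-minute load average exceeds factor * cpu_count."""
    return load_1min > factor * cpu_count


if __name__ == "__main__":
    # os.getloadavg() is available on Unix-like systems only.
    one, five, fifteen = os.getloadavg()
    cpus = os.cpu_count() or 1
    print(f"load averages: {one:.2f} {five:.2f} {fifteen:.2f} on {cpus} CPU(s)")
    if load_is_high(one, cpus):
        print("load is above the CPU count, worth a look at the logs")
```

By this rule of thumb, a load average of 15 on a small single-CPU box is well past the point of concern.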

The logs showed repeated attempts from multiple machines to access a non-existent directory: /files/y2k. It turned out that some of our less tech-savvy users were still worried about Y2K bugs and were running scripts that checked for directories tied to 1900 dates. These scripts were causing the load spike, and I had no quick way to stop the client machines from hitting the non-existent path.
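Spotting that kind of pattern comes down to counting requests per client for one path. Here is a minimal sketch in Python, assuming a hypothetical whitespace-separated log format of `timestamp client path status`; the real log format is not shown in this post, so the sample lines and the `count_hits` helper are invented for illustration.

```python
from collections import Counter


def count_hits(lines, path):
    """Count requests per client for `path` or anything beneath it."""
    prefix = path.rstrip("/") + "/"
    hits = Counter()
    for line in lines:
        fields = line.split()
        if len(fields) < 4:
            continue  # skip lines that do not match the assumed format
        _timestamp, client, requested, _status = fields[:4]
        if requested == path or requested.startswith(prefix):
            hits[client] += 1
    return hits


# Hypothetical sample lines in the assumed format.
sample = [
    "2000-01-01T00:01:02 10.0.0.5 /files/y2k 404",
    "2000-01-01T00:01:03 10.0.0.5 /files/y2k 404",
    "2000-01-01T00:01:03 10.0.0.9 /files/reports 200",
    "2000-01-01T00:01:04 10.0.0.7 /files/y2k/check 404",
]

for client, count in count_hits(sample, "/files/y2k").most_common():
    print(client, count)
```

Matching on the exact path or a `/`-terminated prefix keeps a sibling directory such as /files/y2k_backup out of the count.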

I patched the server to redirect such requests to a directory that actually existed (/files/y2k_backup), and the load dropped back down. But just as the stress began to ease, another issue popped up. The /var/mail directory had filled up with emails generated from our server logs. Our setup wasn’t designed for this volume of logging, and now the server was choking on its own output.
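A full spool like this is easy to catch early with a periodic disk check. The following is a small sketch using Python's standard `shutil.disk_usage`; the 90% threshold and the helper names are assumptions chosen for illustration.

```python
import shutil


def percent_used(total: int, used: int) -> float:
    """Return used space as a percentage of total (0.0 for an empty total)."""
    return 100.0 * used / total if total else 0.0


def is_nearly_full(path: str, threshold: float = 90.0) -> bool:
    """Return True when the filesystem holding `path` is at or above threshold."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return percent_used(usage.total, usage.used) >= threshold


if __name__ == "__main__":
    # "/" is used here so the sketch runs anywhere; point it at the
    # mail spool's mount point (for example /var/mail) on a real system.
    usage = shutil.disk_usage("/")
    print(f"/ is {percent_used(usage.total, usage.used):.1f}% full")
```

Run from cron or a monitoring agent, a check like this turns a surprise outage into a routine warning.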

I resized the partition and cleared out some old log files to free up space. With that taken care of, I turned my attention back to the original issue: why were users still hitting a non-existent directory? It turned out that our firewall rules needed an update. The old rules allowed traffic from certain IP addresses to hit any path on the server. After tightening those rules and adding more granular controls, the server seemed stable again.

As dawn began to break, I stepped away from the console for a quick stretch. The night had been intense, but everything was working now. Looking at the clock, it was just after 6 AM, time to call it quits. I packed up my gear and headed home. Thinking back on that morning now, I can’t help but reflect on how much has changed since Y2K.

In the old days of 1999, everyone was talking about bugs in date handling causing major disruptions. Today, we have matured as a community—awareness is higher, tools are better, and our systems can handle more complex issues without breaking down so easily. But that doesn’t mean the nightmarish bugs go away; they just evolve into new forms.

Looking back at the year 2000, it’s hard to believe we were so focused on date handling issues. Now, in 2023, I’m more concerned about data breaches and cybersecurity threats than any date-related issues. But hey, that’s progress for you!

That night was a reminder of how important it is to stay vigilant and keep an eye out for new challenges. It may seem like the Y2K bug was just a moment in time, but it taught us valuable lessons about preparedness and adaptability—lessons that are still relevant today.