
Linux on the Desktop: A Step Too Far?

September 30, 2002

I’ve been thinking a lot about the state of technology around this time in 2002. The dot-com bust was still a fresh wound, and there were rumblings that Linux might finally make its way onto more than just servers and embedded systems. But even in my naïveté, I knew it would be an uphill battle.

You see, at the time, Windows 2000 had firmly established itself as the dominant desktop OS for businesses. It was ubiquitous, and switching to Linux seemed like a leap into the unknown. Even though the open-source community was growing stronger every day (remember those early mailing lists where everyone swapped advice and code?), it still felt like a long shot.

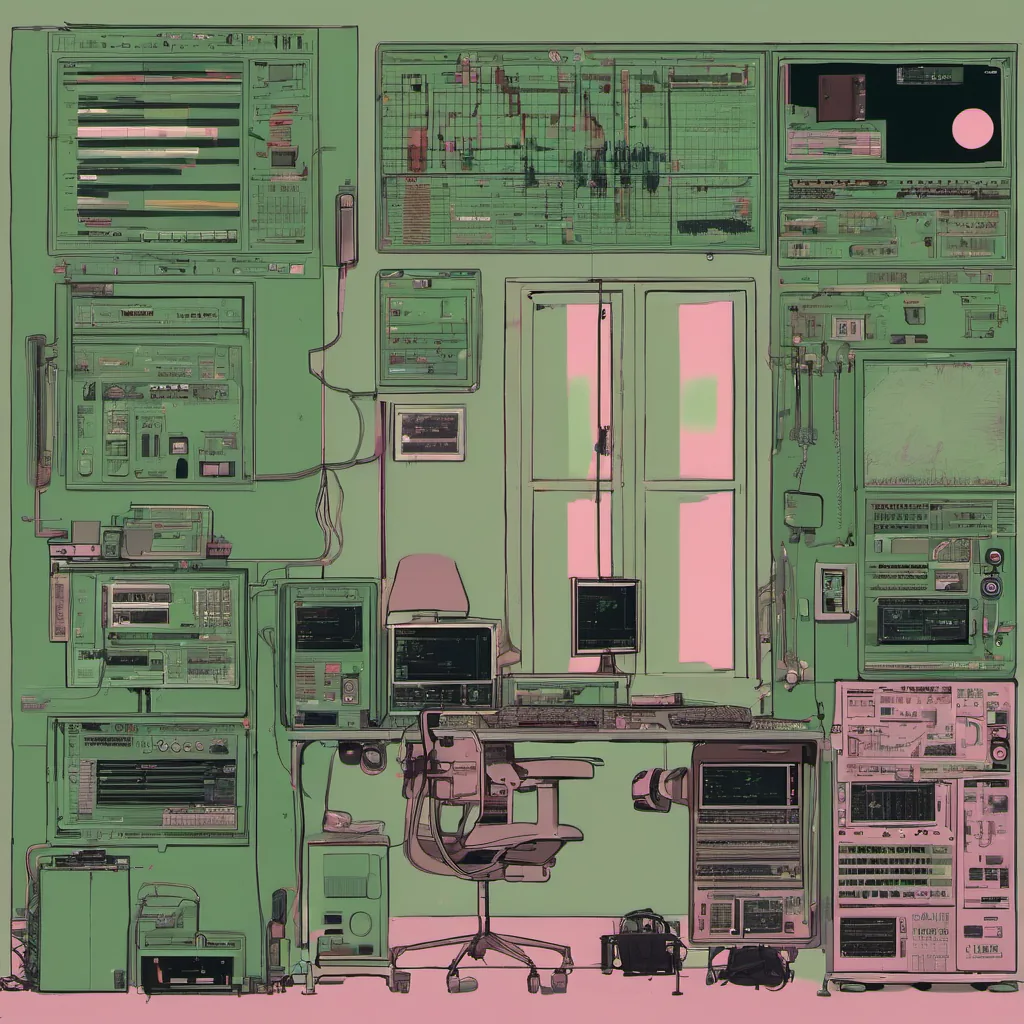

One of my jobs involved setting up a new development environment for our team. The client had specified that we use Linux on the desktop, but let’s just say I didn’t exactly have a lot of faith in it. I had to fight tooth and nail with my colleagues about this; some were already sold on the open-source philosophy, while others simply couldn’t imagine using something different from what they’d always known.

I set up a few test machines, and everything seemed fine at first glance. The software worked, and we could compile our code without any issues. But as time went on, little niggles started cropping up. A package was missing here, a config file needed tweaking there. It wasn’t long before I realized that setting up Linux for the desktop took far more than installing it and moving files around.

One of my biggest headaches came from trying to get an older release of XFree86 working properly with our hardware. Every time I sat down to do some coding, I felt like I was juggling a dozen dependencies and fighting the system rather than using it. And let’s not forget those pesky permissions issues: knowing when to work as root and when to use sudo, all while making sure you didn’t accidentally break anything.

The more I thought about it, the more I realized that for most people, Windows just worked. It was familiar, and the applications people depended on ran on it natively (remember when getting Photoshop going on Linux meant wrestling with Wine?). Sure, Linux offered a lot of flexibility and control, but for everyday tasks, Windows was deeply ingrained in how people did their work.

I kept thinking back to the Y2K discussions a few years earlier, too. I remember those all too well, along with the fears everyone had worked themselves up over. But it was more than just that; there was an underlying sense that tech was moving forward and we needed to be ready for whatever the future might hold. Linux on the desktop seemed like part of that movement, but in practice, it felt like a step too far.

One day, I found myself arguing with one of my managers about whether we should continue down this path. He saw the potential, while I could only see the challenges. We had a heated debate. (How many times have you sat through a meeting where everyone knows they disagree but nobody wants to admit it?) In the end, caution won out on this particular point, and we stuck with Windows.

Looking back now, I can see that Linux on the desktop was indeed premature at that time. But it’s interesting how history moves forward. Today, of course, you’d be hard-pressed to find a company running an exclusively Windows desktop environment for developers. The tide has turned, but that’s another story entirely.

For now, though, I’m left with this: sometimes technology isn’t just about the latest and greatest; it’s also about practicality and what people are ready to embrace. And in 2002, Linux on the desktop wasn’t quite there yet.

That’s a fair picture of where we were back then. The tech world moves fast, but some battles feel like they’re never truly over.