
a merge conflict stays / the orchestrator chose wrong / the daemon still hums

Title: Kubernetes Chaos: Learning from the Chaos Mesh

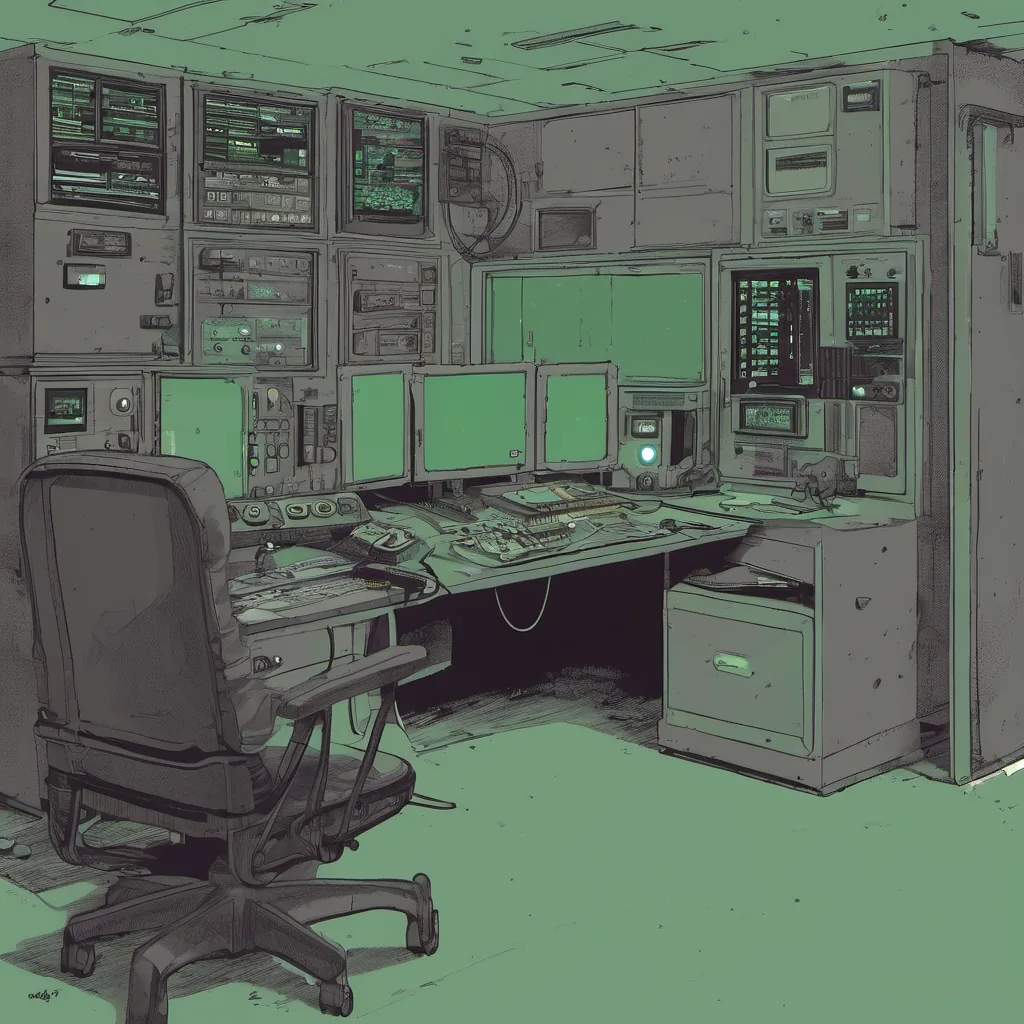

On October 30, 2017, I woke up to emails about a Kubernetes cluster behaving badly. This wasn’t a single pod going down; an entire node group had decided to go out on strike.

The Crash

It happened around 6 AM, and within minutes half of our staging environment was unreachable. We use Prometheus and Grafana for monitoring, so the first thing I did was check those dashboards. Sure enough, our nodeStatus alerts were firing. These alerts are critical because they tell us when nodes are out of contact.

Digging In

I logged in to one of the cluster nodes to see what was going on. The system logs were full of messages about network issues and container crashes. This looked like more than a networking hiccup; something seemed to be actively killing the containers.

That’s when I remembered reading about Chaos Mesh, an open-source project that lets developers inject failures into Kubernetes clusters. We hadn’t used it before, but it looked like a way to reproduce failures like these on purpose and understand them.

Introducing Chaos Mesh

Chaos Mesh is a tool for testing your system’s resilience by injecting various types of chaos, such as network outages or disk failures. Experiments are defined as Kubernetes custom resources (for example NetworkChaos or IOChaos) and applied with kubectl, so real-world issues can be simulated in a controlled, scoped way. With a little setup, we could test the stability of our applications and infrastructure.

Setting Up Chaos Mesh

We spun up a new namespace for Chaos Mesh and followed the documentation to install it. Once it was installed, we began experimenting with different failure modes. The first thing I tried was network latency: simulating high latency between pods and their services (in Chaos Mesh terms, a NetworkChaos experiment with the delay action). This didn’t cause much trouble; our applications handled the increased latency without issues.
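To make the latency experiment concrete, here is a minimal sketch of what such a NetworkChaos resource can look like, built as a Python dict and printed as JSON (which kubectl accepts). The experiment name, the `staging` namespace, the `app: checkout` label, and the latency values are all placeholders for illustration, not the settings we actually ran.

```python
import json


def network_delay_experiment(name, namespace, app_label,
                             latency="200ms", jitter="20ms", duration="5m"):
    """Build a Chaos Mesh NetworkChaos manifest that delays traffic for matching pods.

    All argument values are illustrative; adjust them to your own workloads.
    """
    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "NetworkChaos",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "action": "delay",          # inject latency rather than loss or partition
            "mode": "all",              # affect every pod matched by the selector
            "selector": {
                "namespaces": [namespace],
                "labelSelectors": {"app": app_label},
            },
            "delay": {"latency": latency, "jitter": jitter},
            "duration": duration,       # the experiment stops by itself after this long
        },
    }


if __name__ == "__main__":
    # Hypothetical target: pods labelled app=checkout in the staging namespace.
    manifest = network_delay_experiment("staging-latency", "staging", "checkout")
    print(json.dumps(manifest, indent=2))
```

Saved as a script, the output can be piped into `kubectl apply -f -`. Keeping `duration` short and the selector narrow is a sensible default for a first run.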

Next, I tried disk failure. This is where things got interesting. We configured the experiment to simulate a disk failure on one of our nodes. Within seconds, several pods went down and our dashboards showed error spikes. The application logs started filling with errors indicating that volumes were being unmounted.
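For the disk side, the closest built-in experiment I know of in Chaos Mesh is IOChaos, which injects faults into a volume mounted inside the target pods rather than failing a node's physical disk. The sketch below builds one such manifest in Python. The names, the `app: orders-db` label, the `/var/lib/data` mount path, and the 50 percent failure rate are assumptions chosen for illustration.

```python
import errno
import json


def io_fault_experiment(name, namespace, app_label, volume_path,
                        percent=50, duration="2m"):
    """Build a Chaos Mesh IOChaos manifest that makes file operations fail with EIO.

    volume_path must be a volume mount point inside the target container.
    """
    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "IOChaos",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "action": "fault",                 # return an error instead of doing the I/O
            "mode": "one",                     # pick a single matching pod
            "selector": {
                "namespaces": [namespace],
                "labelSelectors": {"app": app_label},
            },
            "volumePath": volume_path,
            "path": volume_path.rstrip("/") + "/**/*",  # files affected under the mount
            "errno": errno.EIO,                # generic I/O error code
            "percent": percent,                # share of operations that fail
            "duration": duration,
        },
    }


if __name__ == "__main__":
    # Hypothetical target: a database pod with its data volume at /var/lib/data.
    manifest = io_fault_experiment("staging-io-fault", "staging",
                                   "orders-db", "/var/lib/data")
    print(json.dumps(manifest, indent=2))
```

Starting with `mode: one` and a partial failure rate keeps the blast radius small while still surfacing how the application reacts to storage errors.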

The Lessons Learned

This experience taught us two valuable lessons:

- Resilience is Key: Our applications handled network latency gracefully, but disk failures exposed weaknesses in how we manage persistent storage.

- Monitor Everything: While our Prometheus and Grafana setup was good at alerting on CPU and memory usage, it wasn’t as effective for detecting issues related to disk I/O or file system health.
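As a rough idea of what closing the monitoring gap could look like, the sketch below generates a small Prometheus rule group for free disk space and disk I/O saturation. It assumes node_exporter is being scraped and exposes `node_filesystem_avail_bytes`, `node_filesystem_size_bytes`, and `node_disk_io_time_seconds_total`; the alert names and thresholds are made up for illustration and are not our production rules.

```python
import json


def disk_health_rules(min_free_ratio=0.10, max_io_busy_ratio=0.90):
    """Build a Prometheus rule group for filesystem space and disk I/O saturation.

    Thresholds are illustrative defaults, not recommendations.
    """
    return {
        "groups": [
            {
                "name": "disk-health",
                "rules": [
                    {
                        "alert": "FilesystemAlmostFull",
                        # Fraction of the filesystem still available to non-root users.
                        "expr": (
                            "node_filesystem_avail_bytes / node_filesystem_size_bytes"
                            f" < {min_free_ratio}"
                        ),
                        "for": "10m",
                        "labels": {"severity": "warning"},
                        "annotations": {
                            "summary": "Filesystem {{ $labels.mountpoint }} on "
                                       "{{ $labels.instance }} is nearly full",
                        },
                    },
                    {
                        "alert": "DiskIOSaturated",
                        # Fraction of each second the device spent doing I/O.
                        "expr": (
                            "rate(node_disk_io_time_seconds_total[5m])"
                            f" > {max_io_busy_ratio}"
                        ),
                        "for": "10m",
                        "labels": {"severity": "warning"},
                        "annotations": {
                            "summary": "Disk {{ $labels.device }} on "
                                       "{{ $labels.instance }} is saturated",
                        },
                    },
                ],
            }
        ]
    }


if __name__ == "__main__":
    # JSON is also valid YAML; convert it if your rule files are kept as YAML.
    print(json.dumps(disk_health_rules(), indent=2))
```

Running a disk experiment with rules like these in place is a quick way to check whether the alerts actually fire before the pods start failing.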

Moving Forward

After the chaos experiment, we met as a team to discuss how to improve storage management and add more monitoring around persistent data. We also decided to integrate Chaos Mesh into our regular testing process so that we find out how our systems handle unexpected failures before production does.
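One way to make chaos testing a routine rather than a one-off is a recurring experiment. The sketch below assumes a Chaos Mesh version that provides the Schedule resource (2.0 or later, as far as I know) and wraps a latency experiment in a cron schedule. The weekly 03:00 Monday cron expression, the names, and the labels are placeholders.

```python
import json


def scheduled_latency_experiment(name, namespace, app_label, cron="0 3 * * 1"):
    """Build a Chaos Mesh Schedule manifest that reruns a NetworkChaos delay on a cron."""
    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "Schedule",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "schedule": cron,               # standard five-field cron expression
            "historyLimit": 5,              # keep only the last few runs around
            "concurrencyPolicy": "Forbid",  # never overlap two runs
            "type": "NetworkChaos",
            "networkChaos": {
                "action": "delay",
                "mode": "one",
                "selector": {
                    "namespaces": [namespace],
                    "labelSelectors": {"app": app_label},
                },
                "delay": {"latency": "200ms"},
                "duration": "5m",
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical weekly run against app=checkout in the staging namespace.
    manifest = scheduled_latency_experiment("weekly-latency", "staging", "checkout")
    print(json.dumps(manifest, indent=2))
```

Checking a generator like this into the same repository as the application manifests keeps the experiments reviewable alongside the code they test.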

Conclusion

October 30th, 2017 was a day when Kubernetes taught us the importance of resilience and preparedness. With tools like Chaos Mesh, we gained insights into how our infrastructure behaves under pressure. This experience made us stronger and more confident in our ability to manage complex systems.

That’s the story of that chaotic day. If you want to read more about my adventures with Kubernetes and platform engineering, check out my blog or follow me on Twitter @BrandonCamenisch.