
Debugging DNS: A Y2K Aftermath Tale

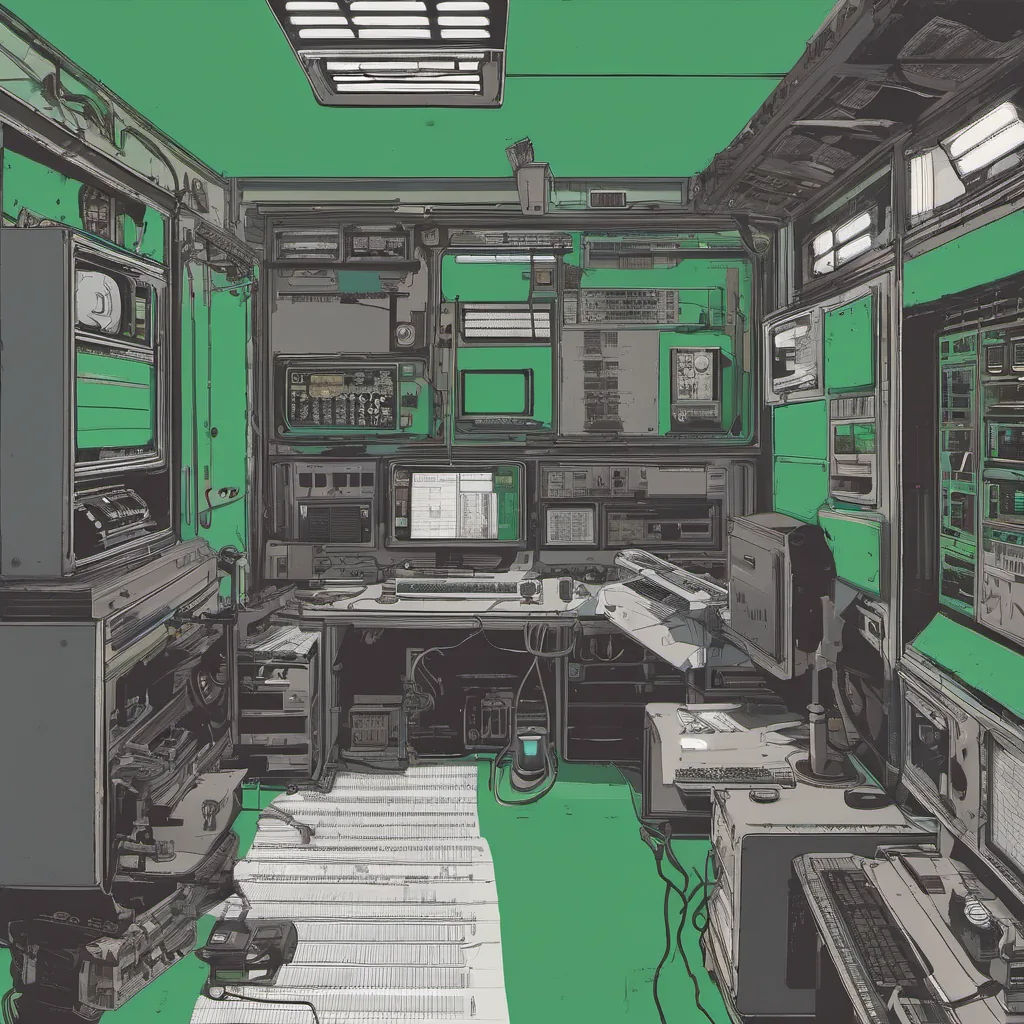

October 30, 2000. The air was still thick with the aftermath of Y2K, and I was knee-deep in a debugging session that would come to define my early years as an engineer. It wasn’t glamorous—just another day dealing with DNS servers and sendmail configurations. But there’s something to be said for the raw, unfiltered challenges that come with managing infrastructure.

You see, it all started on a routine Saturday afternoon when our production environment began throwing some pretty gnarly errors. Our web applications were failing to resolve domain names correctly, leading to a cascade of 502 Bad Gateway errors across our network. It wasn’t long before the alarms went off and I found myself in the thick of it.

I had to dig into the logs, which were anything but helpful at first glance. The named process from BIND was spitting out cryptic error messages like “zone: refresh failed” and “invalid flags.” Sound familiar? It’s always these things that bite you just when everything seems fine.

The first thing I did was check our DNS zone files for obvious errors, but they all looked clean. Maybe it was something more subtle, like a configuration issue or a problem with the network itself. I fired up Ethereal (the packet sniffer now known as Wireshark) and started capturing traffic between our servers and our DNS resolvers. It didn’t take long to spot some odd behavior: requests were being sent to the wrong IP addresses, and responses weren’t coming back as expected.
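Both symptoms in that capture, queries going to the wrong addresses and replies that never arrive, can also be checked without a sniffer. You aim a single query at one specific server, which is essentially what `dig @server name` does. Below is a minimal standard-library sketch built on the RFC 1035 wire format. The function names, the query ID, and the 2-second timeout are illustrative choices and do not come from the original session.

```python
import socket
import struct


def build_query(name: str, qid: int, qtype: int = 1) -> bytes:
    """Build a minimal RFC 1035 query: a 12-byte header plus one IN-class question."""
    # Header fields: ID, flags (only RD, "recursion desired", set), QDCOUNT=1, AN/NS/ARCOUNT=0
    header = struct.pack("!HHHHHH", qid, 0x0100, 1, 0, 0, 0)
    # QNAME: each label is prefixed by its length; a zero byte marks the root
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    ) + b"\x00"
    # QTYPE (1 = A record by default) and QCLASS (1 = IN)
    return header + qname + struct.pack("!HH", qtype, 1)


def parse_header(packet: bytes) -> dict:
    """Decode the fixed 12-byte header at the start of a DNS response."""
    qid, flags, _qd, ancount, _ns, _ar = struct.unpack("!HHHHHH", packet[:12])
    return {
        "id": qid,
        "is_response": bool(flags & 0x8000),    # QR bit
        "authoritative": bool(flags & 0x0400),  # AA bit
        "rcode": flags & 0x000F,                # 0 = NOERROR, 3 = NXDOMAIN, ...
        "answers": ancount,
    }


def ask(server: str, name: str, qid: int = 0x1234, timeout: float = 2.0):
    """Send one UDP query straight to `server`; return the parsed header or None."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_query(name, qid), (server, 53))
        try:
            data, _ = sock.recvfrom(512)
        except socket.timeout:
            return None  # the "responses weren't coming back" case
    header = parse_header(data)
    # A reply whose ID doesn't match ours is not an answer to this query
    return header if header["id"] == qid else None
```

Consider `ask("192.0.2.53", "example.com")`, where the address is a documentation placeholder. If it returns `None`, the problem lies with the path or the server rather than the zone data. If a box that should be authoritative answers with `authoritative` set to `False`, that hints it isn't actually loading the zone.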

After a few hours of sifting through logs and packet captures, it dawned on me that perhaps the issue wasn’t with BIND at all but rather our internal network configuration. I checked the subnet masks and routing tables—everything seemed correct. But then I stumbled upon something in the sendmail logs that hinted at a more sinister problem: there were messages indicating that sendmail was dropping mail from certain domains.
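Checking routes by eye is error-prone. A router uses the most specific matching prefix, not the first line in the table, and a single mistyped subnet mask can change which hosts a machine believes are directly reachable. The sketch below makes both checks explicit with Python's standard `ipaddress` module. The routing table, addresses, and helper names are all hypothetical and are not the author's actual network.

```python
import ipaddress

# Hypothetical routing table: (destination prefix, next hop)
ROUTES = [
    ("0.0.0.0/0", "10.0.0.1"),    # default route
    ("10.1.0.0/16", "10.0.0.2"),
    ("10.1.5.0/24", "10.0.0.3"),
]


def next_hop(dest: str, routes=ROUTES) -> str:
    """Return the next hop for `dest` using longest-prefix match."""
    addr = ipaddress.ip_address(dest)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in routes
        if addr in ipaddress.ip_network(prefix)
    ]
    if not matches:
        raise LookupError(f"no route to {dest}")
    # The most specific (longest) prefix wins, regardless of table order
    _net, hop = max(matches, key=lambda m: m[0].prefixlen)
    return hop


def on_link(host: str, iface_addr: str, netmask: str) -> bool:
    """True if `host` falls inside the subnet implied by an interface's address and mask."""
    network = ipaddress.ip_interface(f"{iface_addr}/{netmask}").network
    return ipaddress.ip_address(host) in network
```

In this made-up example, a mask typo is enough to flip the second check. An interface configured as `10.1.5.10` with `255.255.0.0` instead of `255.255.255.0` treats a host on `10.1.6.0` as on-link. It would then try to reach that host directly instead of handing the traffic to a router.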

It hit me like a ton of bricks. One of our DNS servers had been serving stale data for months, and it wasn’t just failing to update its zone files; its stale records were also misrouting traffic through our internal network. The old named process hadn’t been restarted in ages, and the new one I had set up was somehow not kicking in.
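The post doesn't say how the staleness was confirmed. One standard check is to compare each server's SOA serial. A secondary ("slave" in BIND's configuration vocabulary of the era) polls the primary every REFRESH seconds. It transfers the zone only when the primary's serial is greater under RFC 1982 serial-number arithmetic. If it can't reach the primary for longer than the SOA EXPIRE value, it is supposed to stop answering for the zone. Below is a minimal sketch of both checks. The helper names and the serial and timer values in the tests are hypothetical.

```python
SERIAL_BITS = 32                 # SOA serials are 32-bit unsigned integers
HALF = 2 ** (SERIAL_BITS - 1)


def serial_lt(s1: int, s2: int) -> bool:
    """True if serial s1 is older than s2 under RFC 1982 sequence-space arithmetic.

    RFC 1982 leaves the comparison undefined when the two serials differ by
    exactly 2**31; this sketch simply returns False in that case.
    """
    return s1 != s2 and (
        (s1 < s2 and s2 - s1 < HALF) or (s1 > s2 and s1 - s2 > HALF)
    )


def secondary_is_stale(primary_serial: int, secondary_serial: int) -> bool:
    """A secondary is behind when its serial is 'less than' the primary's."""
    return serial_lt(secondary_serial, primary_serial)


def zone_expired(seconds_since_last_refresh: int, soa_expire: int) -> bool:
    """Past SOA EXPIRE without a successful refresh, the secondary should stop answering."""
    return seconds_since_last_refresh > soa_expire
```

Date-style serials (YYYYMMDDnn) are a common convention. With them, a secondary still reporting `2000070101` while the primary reports `2000103001` is flagged as stale. The wraparound rule also means `serial_lt(4294967295, 5)` is `True`: the serial that wrapped past 2^32 counts as newer.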

I decided to take a step back and rebuild the DNS server from scratch. It wasn’t the most elegant solution, but it was effective. Once I had verified every configuration and restarted both sendmail and named, everything seemed to fall into place.

But as I sat there watching the servers stabilize, I couldn’t help but feel a twinge of self-doubt. How did this happen in the first place? Wasn’t maintaining infrastructure supposed to be more straightforward with modern tools like Linux and Apache taking over the world?

The Y2K scare had left its mark on everyone, making us more cautious about dependencies and backups. But sometimes, it’s the simple things that slip through the cracks. As I typed out the final commit message, “Fixed DNS resolution and sendmail routing issues,” I couldn’t shake the feeling of relief mixed with a healthy dose of humility.

In the end, it was just another day dealing with the quirks and complexities of running an operations environment. The tech world might have been buzzing about IPv6 and early versions of VMware, but for me, it was all about getting back to basics: keeping our DNS servers up to date and making sure mail was actually getting delivered.

As I closed out my terminal session and headed home, I couldn’t help but think: there’s always more to learn in this field. And sometimes, the hardest bugs are the ones hiding in plain sight, waiting for us to look a little closer.

That’s it from me. Just another day in ops.