
A Year in Review: When the Platform Was the Problem

January 30, 2023. I find myself looking back on a year that was packed with platform issues, AI experiments, and the ongoing struggle to keep our services humming while everyone around me was talking about the next big thing.

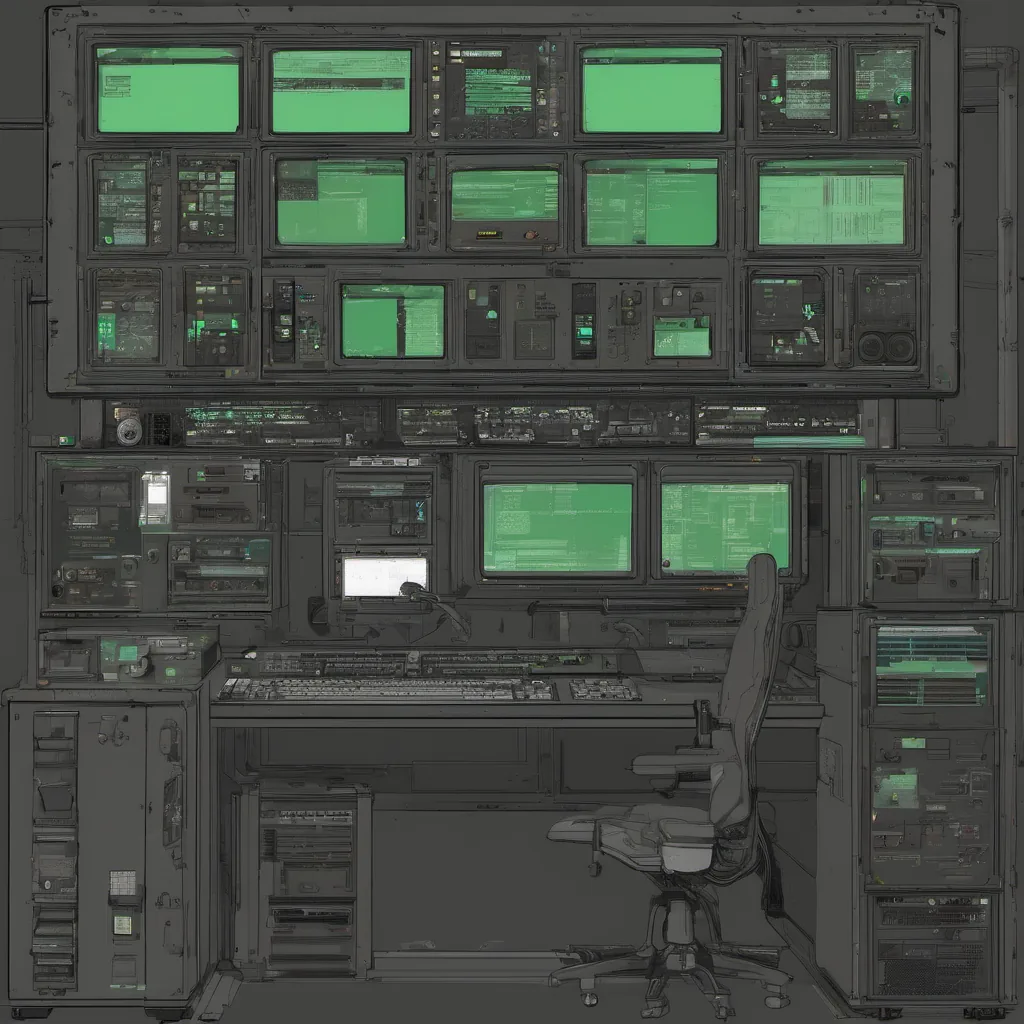

We started the year with high hopes for our internal platform. We’d invested heavily in serverless architectures, Kubernetes clusters, and managed databases—basically everything under the umbrella of modern cloud-native technologies. But by January 2023, it felt like we were fighting a losing battle against our own infrastructure. Every day was a new issue: slow API calls, database timeouts, or random outages that left us grasping for answers.

One particularly frustrating week in February saw our primary PostgreSQL cluster go down hard. The root cause turned out to be a memory leak in one of the microservices—a bug that had somehow slipped through the cracks despite multiple code reviews and automated tests. It was embarrassing, but it taught me an important lesson: no matter how advanced your tools are, they can’t catch every issue.
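In hindsight, a memory-growth check in the test suite might have flagged that kind of leak earlier. What follows is only a sketch using Python's standard `tracemalloc` module. The handlers and the `memory_growth` helper are hypothetical names made up for illustration, not code from the service that actually failed.

```python
import tracemalloc

_cache = []  # hypothetical module-level list that is never cleared


def leaky_handler(payload: str) -> int:
    """Illustrative bug: every request leaves a large string behind."""
    _cache.append(payload * 100)
    return len(payload)


def clean_handler(payload: str) -> int:
    """Same work, but nothing is retained after the call returns."""
    return len(payload)


def memory_growth(handler, calls: int = 1000) -> int:
    """Return how many bytes remain allocated after `calls` requests."""
    tracemalloc.start()
    before, _peak = tracemalloc.get_traced_memory()
    for i in range(calls):
        handler(f"request-{i}")
    after, _peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return after - before


if __name__ == "__main__":
    print("leaky growth:", memory_growth(leaky_handler))
    print("clean growth:", memory_growth(clean_handler))
```

A test can then assert that growth stays under some budget. The right budget depends on the service, so any threshold is an assumption to tune rather than a universal number.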

Around this time, the AI/ML landscape was exploding post-ChatGPT. Our team started experimenting with large language models (LLMs) in various ways, hoping to integrate some of them into our products. We built a simple chatbot using one of the open-source models from Hugging Face and deployed it on a separate server. In theory, we could scale it out as needed. In practice, we hit scaling issues almost immediately: our server was overwhelmed by traffic, and every request pushed API latency through the roof.
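One common way to keep a single model server from being swamped is to cap how many requests it works on at once and let the rest wait their turn. The sketch below shows that idea with `asyncio.Semaphore`. It is not the setup we actually ran: `fake_generate` is a stand-in for a slow model call (not a Hugging Face API), and `MAX_CONCURRENT`, `handle`, and `serve` are hypothetical names.

```python
import asyncio

MAX_CONCURRENT = 2  # assumed capacity of the single model server


async def fake_generate(prompt: str) -> str:
    """Stand-in for a slow model call; replace with real inference."""
    await asyncio.sleep(0.01)
    return f"echo: {prompt}"


async def handle(prompt: str, limiter: asyncio.Semaphore, stats: dict) -> str:
    # Requests beyond the limit wait here instead of piling onto the model.
    async with limiter:
        stats["in_flight"] += 1
        stats["peak"] = max(stats["peak"], stats["in_flight"])
        try:
            return await fake_generate(prompt)
        finally:
            stats["in_flight"] -= 1


async def serve(prompts, max_concurrent: int = MAX_CONCURRENT):
    """Answer all prompts while never running more than max_concurrent at once."""
    limiter = asyncio.Semaphore(max_concurrent)
    stats = {"in_flight": 0, "peak": 0}
    replies = await asyncio.gather(*(handle(p, limiter, stats) for p in prompts))
    return replies, stats["peak"]


if __name__ == "__main__":
    replies, peak = asyncio.run(serve([f"q{i}" for i in range(6)]))
    print(replies, "peak concurrency:", peak)
```

A cap like this does not add capacity. It trades unbounded latency for queueing, which at least keeps the server responsive while more replicas are brought up.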

Meanwhile, the Platform Engineering team was under immense pressure to keep up with the demands of our growing user base. We had a mix of managed services from AWS, Google Cloud, and Azure, all integrated into a monolithic platform that was becoming increasingly unwieldy. Every new feature or bug fix required coordination across multiple teams: infrastructure, DevOps, and developers alike.

One day, we faced a particularly grueling discussion about FinOps and cloud cost pressure. Our CFO had noticed our AWS bill was climbing steeply, and he wanted to see some serious action taken. We argued back and forth on how best to manage costs without compromising performance or user experience. The conversation quickly devolved into finger-pointing between different stakeholders—IT operations blamed developers for not optimizing their code, while the engineering team accused ops of being too conservative with resources.

In March, we finally landed a big win: a new FinOps tool that gave us real-time visibility into our cloud spend and automatically adjusted how our services were provisioned based on usage. It was like turning a corner. Suddenly, we had a handle on our costs without sacrificing performance. The platform started feeling more manageable again.
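To make "provisioned based on usage" concrete, here is a minimal sketch of a proportional scaling rule. It follows the same formula the Kubernetes Horizontal Pod Autoscaler documents: the desired replica count is the current count scaled by the ratio of observed to target utilization, rounded up. I am not claiming our FinOps tool works this way internally. The function name, parameters, and default bounds are assumptions for illustration.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_utilization: float,  # observed utilization, e.g. CPU percent
    target_utilization: float,   # utilization we want each replica to run at
    min_replicas: int = 1,       # assumed floor
    max_replicas: int = 10,      # assumed ceiling, which also caps spend
) -> int:
    """Scale replicas by observed/target utilization, clamped to bounds."""
    if target_utilization <= 0:
        raise ValueError("target_utilization must be positive")
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # Four replicas running at 90% against a 60% target: scale out.
    print(desired_replicas(4, 90, 60))
    # Four replicas running at 30% against a 60% target: scale in.
    print(desired_replicas(4, 30, 60))
```

The upper bound is where cost control comes in: it limits how far a traffic spike can push the bill, at the price of degraded performance once the cap is reached.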

April brought the excitement of WebAssembly (Wasm) on the server side. We were part of a small group exploring how Wasm could be used to offload some of our heavy computation tasks from the main application servers. It was an intriguing idea, but as we dug deeper into the implementation, we hit wall after wall: compatibility issues with certain libraries and limited tooling support.

Despite all these challenges, there were moments of pure joy too. One such moment came when a colleague showed me nanoGPT, a tiny GPT setup trained in just 256MB of memory. It was a stunning reminder that even in the age of giant models like ChatGPT, simplicity and efficiency still mattered.

As I write this, we’re about to embark on another round of code reviews and infrastructure updates. The platform is shaping up nicely, but there’s always more to do. We’ve learned from our struggles and are better equipped for what lies ahead. The journey continues, and with each challenge, the team grows stronger.

That was the year: full of lessons, both hard-won and humbling. I’m looking forward to seeing where 2023 takes us.