the kernel panicked / I pivoted the table wrong / the deploy receipt

Debugging a Kafka Cluster with Prometheus

January 30, 2017. Another day in the life of an ops engineer at a growing startup. The world is buzzing with Kubernetes and Helm debates, but I’m still knee-deep in Kafka and ZooKeeper.

Today started like any other morning: I walked into the office and sat down at my desk, greeted by the familiar smell of coffee and the clack of keyboards. My task for the day was to dig into a production issue with our Kafka cluster. The logs were full of errors, but they didn’t tell us much. It’s always the most obscure problems that sneak up on you.

The team had been running this Kafka cluster for a few months, and it was a key component of our real-time data pipeline. Suddenly, we started seeing intermittent failures and slow performance: messages weren’t being delivered as expected. We needed to figure out what was going wrong, fast, before things got worse.

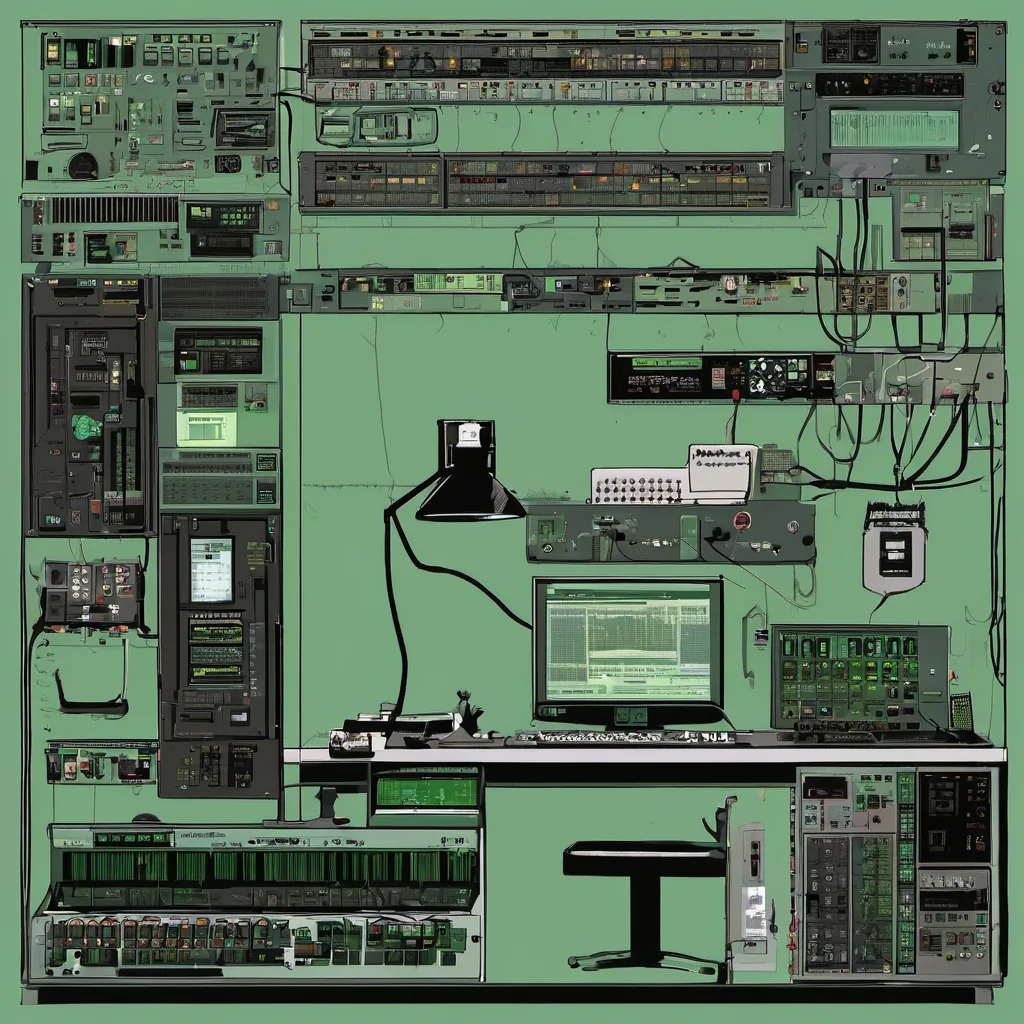

The Setup

We were running Kafka 0.9, with ZooKeeper as the coordination service. We deployed with Docker Compose for simplicity, on a handful of nodes spread across our environments (dev, staging, prod). Each node ran multiple Kafka brokers, forming a single cluster per environment.

Initial Investigation

I ran kafka-topics.sh --describe to inspect the topic partitions, their leaders, and their in-sync replicas, and checked the consumer offsets, but didn’t see any obvious issues. The logs were noisy with warnings, but nothing screamed “crash.” That’s when I turned to our monitoring stack; Prometheus + Grafana was our savior in situations like this.
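For reference, `kafka-topics.sh --describe` prints one line per partition with its leader, replica list, and in-sync replica (ISR) set, and scanning that by eye gets old fast on a big topic. Here is a minimal sketch of a parser that flags under-replicated partitions (ISR smaller than the replica list). It assumes the tab-separated `Topic: … Partition: … Leader: … Replicas: … Isr: …` layout; the sample text is illustrative, not output from our cluster.

```python
import re
from dataclasses import dataclass
from typing import List

# Matches per-partition lines of `kafka-topics.sh --describe`, e.g.
# "Topic: events  Partition: 0  Leader: 1  Replicas: 1,2,3  Isr: 1,2".
# Summary lines ("Topic:events  PartitionCount:2 ...") do not match.
PARTITION_LINE = re.compile(
    r"Topic:\s*(?P<topic>\S+)\s+Partition:\s*(?P<partition>\d+)\s+"
    r"Leader:\s*(?P<leader>-?\d+)\s+Replicas:\s*(?P<replicas>[\d,]+)\s+"
    r"Isr:\s*(?P<isr>[\d,]*)"
)


@dataclass
class PartitionState:
    topic: str
    partition: int
    leader: int          # -1 means the partition currently has no leader
    replicas: List[int]
    isr: List[int]

    @property
    def under_replicated(self) -> bool:
        # Some assigned replica has dropped out of the in-sync set.
        return len(self.isr) < len(self.replicas)


def _broker_ids(field: str) -> List[int]:
    return [int(x) for x in field.split(",") if x]


def parse_describe(output: str) -> List[PartitionState]:
    """Parse the per-partition lines of `kafka-topics.sh --describe` output."""
    states = []
    for line in output.splitlines():
        m = PARTITION_LINE.search(line)
        if m:
            states.append(PartitionState(
                topic=m["topic"],
                partition=int(m["partition"]),
                leader=int(m["leader"]),
                replicas=_broker_ids(m["replicas"]),
                isr=_broker_ids(m["isr"]),
            ))
    return states


if __name__ == "__main__":
    # Illustrative sample only.
    sample = (
        "Topic:events\tPartitionCount:2\tReplicationFactor:3\tConfigs:\n"
        "\tTopic: events\tPartition: 0\tLeader: 1\tReplicas: 1,2,3\tIsr: 1,2,3\n"
        "\tTopic: events\tPartition: 1\tLeader: 2\tReplicas: 2,3,1\tIsr: 2\n"
    )
    for p in parse_describe(sample):
        if p.under_replicated:
            print(p.topic, p.partition, "ISR", p.isr, "of", p.replicas)
            # prints: events 1 ISR [2] of [2, 3, 1]
```

Piping the real command’s output into `parse_describe` gives the same per-partition view without squinting at columns.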

Prometheus & Grafana

Prometheus had been a game-changer since we adopted it last year. It allowed us to capture and visualize metrics from various services, including Kafka. We’d set up exporters for Kafka metadata like broker health, topic replication status, and partition lag. These metrics were great, but they didn’t give us the full picture.
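Partition lag is simply how far a consumer group’s committed offset trails the partition’s log end offset. Our exporters reported it directly, but the arithmetic is worth spelling out. This sketch takes both offset maps as plain dictionaries keyed by (topic, partition); how you fetch them (kafka-consumer-groups.sh, an exporter, or a client library) is deliberately left out, and the sample offsets are made up.

```python
from typing import Dict, Tuple

# A (topic, partition) pair, e.g. ("events", 0).
TopicPartition = Tuple[str, int]


def consumer_lag(log_end_offsets: Dict[TopicPartition, int],
                 committed_offsets: Dict[TopicPartition, int]) -> Dict[TopicPartition, int]:
    """Per-partition lag: log end offset minus the group's committed offset.

    Partitions the group has never committed for are skipped rather than guessed.
    """
    lag = {}
    for tp, end in log_end_offsets.items():
        if tp not in committed_offsets:
            continue
        # Clamp at zero: a committed offset can end up ahead of the log end
        # offset, e.g. after an unclean leader election truncates the log.
        lag[tp] = max(0, end - committed_offsets[tp])
    return lag


def total_lag(lag: Dict[TopicPartition, int]) -> int:
    """Sum of per-partition lag, a common per-group summary number."""
    return sum(lag.values())


if __name__ == "__main__":
    # Illustrative offsets, not data from the incident.
    ends = {("events", 0): 1500, ("events", 1): 900}
    committed = {("events", 0): 1200, ("events", 1): 900}
    lag = consumer_lag(ends, committed)
    print(lag, total_lag(lag))  # {('events', 0): 300, ('events', 1): 0} 300
```

Lag that keeps growing on every partition points at slow consumers; lag concentrated on partitions led by one broker points back at that broker.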

I spent an hour poking around Grafana dashboards, trying to find a pattern in the data. The network latency graphs showed some spikes, which could be due to our load balancer or external network issues, but nothing conclusive. I decided to write a custom query to pull more granular broker metrics—CPU usage, memory usage, and disk I/O.
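A custom query like that can also be run straight against Prometheus’s HTTP API instead of through a Grafana panel. The sketch below sends an instant query to `/api/v1/query` and flattens the result into a per-broker map. The server URL, the `job="kafka"` label, and the use of `process_cpu_seconds_total` (a standard counter from Prometheus’s Java client, which the JMX exporter agent typically exposes) are assumptions about the setup, not details from our cluster.

```python
import json
import urllib.parse
import urllib.request
from typing import Dict

# Hypothetical values: point these at your own Prometheus server and scrape job.
PROMETHEUS_URL = "http://prometheus:9090"
# CPU seconds consumed per second over 5 minutes, i.e. cores in use per broker.
CPU_QUERY = 'rate(process_cpu_seconds_total{job="kafka"}[5m])'


def instant_query(base_url: str, promql: str, timeout: float = 10.0) -> dict:
    """Run a PromQL instant query via GET /api/v1/query and return the decoded JSON."""
    url = base_url.rstrip("/") + "/api/v1/query?" + urllib.parse.urlencode({"query": promql})
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def vector_by_instance(response: dict) -> Dict[str, float]:
    """Flatten an instant-vector response into {instance label: value}."""
    if response.get("status") != "success":
        raise RuntimeError(f"query failed: {response.get('error')}")
    data = response["data"]
    if data["resultType"] != "vector":
        raise ValueError(f"expected a vector, got {data['resultType']}")
    # Each sample's "value" is [unix_timestamp, "value as a string"].
    return {
        sample["metric"].get("instance", "?"): float(sample["value"][1])
        for sample in data["result"]
    }
```

With a reachable server, `vector_by_instance(instant_query(PROMETHEUS_URL, CPU_QUERY))` returns one entry per scraped broker, keyed by its `instance` label. Swapping in a memory or disk I/O expression only changes the query string, provided the result is still an instant vector.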

Digging Deeper

After running the new queries, I noticed something odd: one of the brokers was consistently using significantly more CPU than the others. That shouldn’t happen in a healthy Kafka cluster; as long as partition leadership is spread evenly, you’d expect the brokers to carry roughly equal load. I logged into that broker and started digging through its logs.
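Spotting the hot broker on a graph works, but the same check can be made mechanical: compare each broker’s CPU against the cluster median. The median is used rather than the mean because a single hot broker drags the mean up toward itself. This is a minimal sketch; the input would come from a per-broker CPU query in Prometheus, and the 1.5× threshold is an arbitrary starting point, not a Kafka rule.

```python
from statistics import median
from typing import Dict, List


def cpu_outliers(cpu_by_broker: Dict[str, float], factor: float = 1.5) -> List[str]:
    """Return brokers whose CPU exceeds `factor` times the cluster median, sorted by name.

    The default factor of 1.5 is an assumed threshold; tune it for your cluster.
    """
    if len(cpu_by_broker) < 2:
        return []  # nothing to compare against
    mid = median(cpu_by_broker.values())
    return sorted(b for b, cpu in cpu_by_broker.items() if cpu > factor * mid)


if __name__ == "__main__":
    # Hypothetical readings in CPU cores per broker.
    print(cpu_outliers({"kafka-1": 0.40, "kafka-2": 0.45, "kafka-3": 1.90}))  # ['kafka-3']
```

Run on a schedule, a check like this flags an imbalanced broker before anyone has to go looking for it.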

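The logs revealed a series of exceptions related to thread pool exhaustion. A Kafka broker splits its work across several thread pools: network threads that read requests from and write responses to sockets (num.network.threads), I/O threads that process requests such as consumer fetches and metadata lookups (num.io.threads), and replica fetcher threads that copy data from partition leaders (num.replica.fetchers). It seemed one of these pools was being handed more work than it could keep up with.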
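What does “overwhelmed” mean for a fixed-size pool? A rough queueing check makes it concrete: on average a pool needs arrival rate × mean service time busy threads (the offered load), and once that exceeds the thread count, work arrives faster than it drains and the queue grows without bound. This is a generic back-of-the-envelope sketch, not something Kafka computes; the rates in the example are made up.

```python
import math


def offered_load(arrival_rate: float, mean_service_time: float) -> float:
    """Average number of busy threads needed: tasks/second x seconds/task."""
    return arrival_rate * mean_service_time


def utilization(arrival_rate: float, mean_service_time: float, threads: int) -> float:
    """Average fraction of the pool that is busy; >= 1.0 means the backlog keeps growing."""
    return offered_load(arrival_rate, mean_service_time) / threads


def min_threads(arrival_rate: float, mean_service_time: float,
                target_utilization: float = 0.7) -> int:
    """Smallest pool that keeps average utilization at or below the target (assumed 70%)."""
    return max(1, math.ceil(offered_load(arrival_rate, mean_service_time) / target_utilization))


if __name__ == "__main__":
    # Hypothetical workload: 2,000 requests/second at 5 ms each.
    print(utilization(2000, 0.005, 8))  # 1.25
    print(min_threads(2000, 0.005))     # 15
```

In that made-up example the offered load is 10 threads, so an 8-thread pool runs at 125% and falls further behind every second, while keeping utilization near 70% takes 15 threads. On a real broker, the `RequestHandlerAvgIdlePercent` metric for the I/O thread pool is a more direct signal than this estimate.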

The Fix

I decided to increase the number of threads in the overloaded pool, so the broker could work through its backlog in parallel instead of queuing requests behind a few busy threads. I updated the broker config in our Docker Compose setup and redeployed to staging first. After confirming no issues there, we rolled it out to production.
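As an illustration, suppose the pool in question was the broker’s request-handler pool, set by `num.io.threads` in `server.properties`; that key and the value 16 below are assumptions, not the exact change from this incident. A small helper like this bumps a key in the file before rebuilding or redeploying. How the edited file reaches the containers (a mounted volume, a rebuilt image, or environment variables, depending on the Docker image) is left open.

```python
import re


def set_property(properties_text: str, key: str, value: str) -> str:
    """Set `key=value` in .properties text, replacing existing lines or appending one.

    Handles the simple `key=value` / `key: value` layout used by Kafka's
    server.properties; it does not implement full .properties escaping or
    line continuations. Commented-out lines (`#key=...`) are left alone.
    """
    pattern = re.compile(rf"^[ \t]*{re.escape(key)}[ \t]*[=:].*$", re.MULTILINE)
    line = f"{key}={value}"
    if pattern.search(properties_text):
        # A function replacement avoids backslash escapes in `value` being interpreted.
        return pattern.sub(lambda _m: line, properties_text)
    suffix = "" if not properties_text or properties_text.endswith("\n") else "\n"
    return f"{properties_text}{suffix}{line}\n"


if __name__ == "__main__":
    # Hypothetical example: the key and value are illustrative only.
    before = "broker.id=1\nnum.io.threads=8\nlog.dirs=/var/lib/kafka\n"
    print(set_property(before, "num.io.threads", "16"), end="")
```

Keeping the change as a scripted edit makes it easy to apply the same value to staging and production in turn.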

Once the change was live, the broker metrics showed stable CPU usage, and network latency and the other performance metrics improved significantly. The team breathed a collective sigh of relief as our Kafka cluster went back to running smoothly.

Lessons Learned

This experience taught me two key lessons:

- Custom Metrics Matter: While out-of-the-box metrics are useful, sometimes you need to dive deeper with custom queries to uncover the root cause.

- Thread Pool Configuration is Critical: Properly configuring thread pools can make or break your Kafka setup.

Looking back, I remember the frustration and sleepless nights as we worked through this issue. But it was also a reminder of why I love what I do—solving complex problems and making systems more reliable for our users.

As I closed my laptop, I knew there would be another challenge waiting tomorrow, but that’s the beauty of ops engineering. Keep learning, keep debugging, and never stop shipping code.