$ cat post/the-old-datacenter-/-we-merged-without-a-review-/-the-merge-was-final.md

the old datacenter / we merged without a review / the merge was final

Title: Kubernetes Complexity Fatigue Hits Home

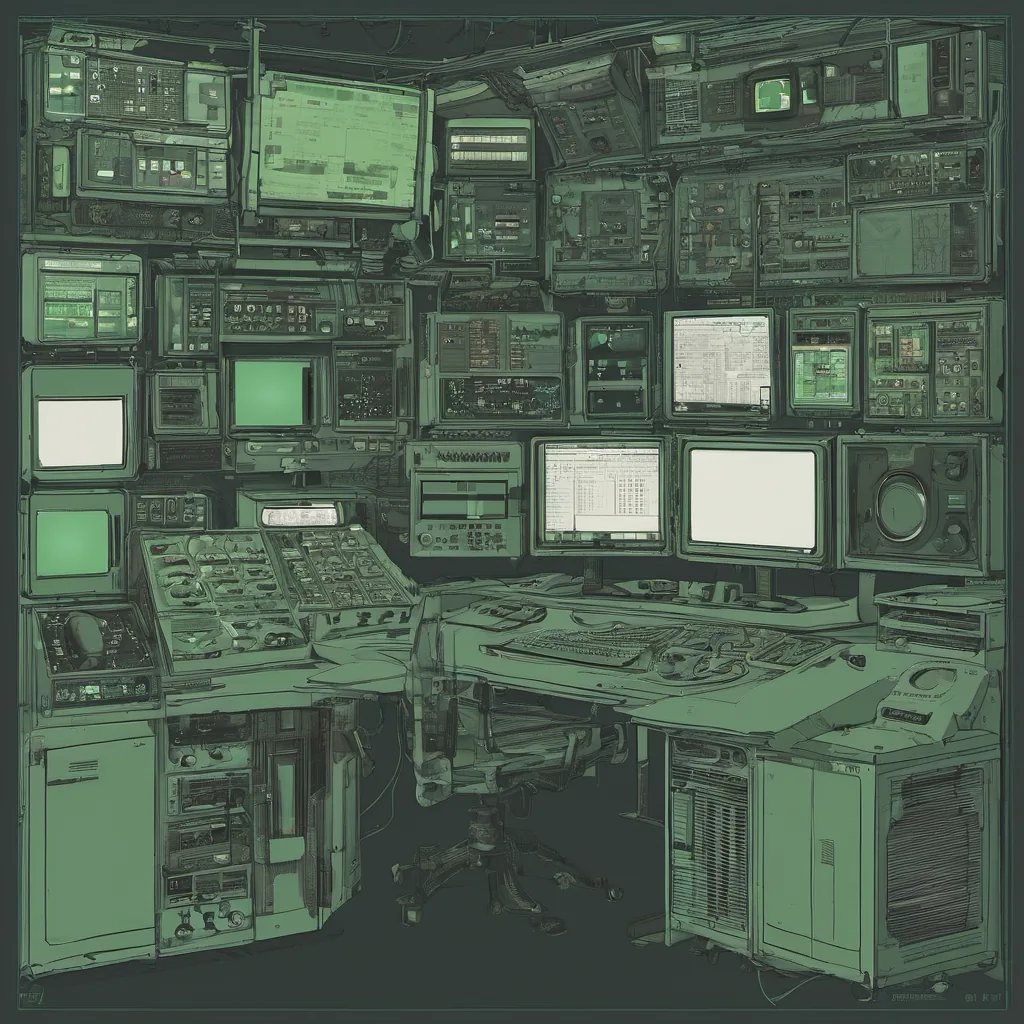

November 29, 2021. I wake up to the usual morning routine of checking email and Slack threads, but something feels different today. The complexity of our Kubernetes cluster has reached a breaking point. I’ve been feeling the weight of it for months: manual deployments, tangled Helm charts, and endless rolling updates that leave my heart racing every time. It’s time to address this head-on.

A few weeks ago, our team shared an update about the latest Kubernetes version. They were excited about the improvements in the eBPF-based tooling around it, but I couldn’t help thinking about all the old issues we’d been ignoring. New features are nice, but each one adds another layer of complexity to our setup. Every time a new version comes out, it feels like we’re adding more threads to an already tangled ball of yarn.

The internal developer portal was introduced as part of our effort to tame this complexity. Backstage is supposed to simplify development and infrastructure management, but with all the customizations and integrations we’ve built up over the years, the results are mixed. Some parts are smooth; others feel clunky. The documentation is sparse at best, and when you run into issues, there’s a good chance they won’t be addressed anytime soon.

We’re also dealing with SRE roles proliferating across teams. I’ve been working closely with our new DevOps engineer, Alex, to streamline the deployment process. We’ve made some progress—automated tests and better logging—but it’s still not enough. The system is too complex for anyone to fully understand, let alone maintain.

Remote work has only exacerbated these issues. Our infrastructure needs have grown with the number of remote workers, but scaling out physical resources is just one part of the problem. Managing software dependencies, ensuring security, and maintaining performance all become more daunting when the team isn’t physically co-located.

Today, I’m working through a deployment issue with my team. We’re trying to upgrade our main application to a new version. The plan is straightforward: update Helm charts, run some tests, and push it live. But as we go through the motions, things start going south. A sidecar container goes into a crash loop, and it takes us hours to track down the root cause: a misconfigured Kubernetes Service.
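One common way a Service ends up misconfigured is a label selector that doesn’t match the pods it is supposed to front. The snippet below is a minimal sketch of that check, assuming a selector mismatch is the kind of problem in play; the post doesn’t say what the actual misconfiguration was, and the selector and label values here are made up for illustration. It follows the equality-based rule Services use: a pod matches only if every key/value pair in the selector appears in its labels.

```python
from typing import Dict, List


def selector_matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """Return True if every key/value pair in the selector appears in the labels."""
    # A Service with no selector does not select any pods on its own.
    if not selector:
        return False
    return all(labels.get(key) == value for key, value in selector.items())


def unmatched_keys(selector: Dict[str, str], labels: Dict[str, str]) -> List[str]:
    """List selector keys whose value is missing or different in the pod labels."""
    return sorted(key for key, value in selector.items() if labels.get(key) != value)


# Hypothetical values for illustration only; not taken from the incident.
service_selector = {"app": "web", "tier": "frontend"}
pod_labels = {"app": "web", "tier": "front-end", "version": "2.0"}

print(selector_matches(service_selector, pod_labels))  # False
print(unmatched_keys(service_selector, pod_labels))    # ['tier']
```

In practice the two dictionaries would come from the Service’s `spec.selector` and the pod’s `metadata.labels`; comparing them by hand like this is tedious at 2 a.m., which is rather the point.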

As we sit here in our respective remote offices, debugging this issue, I can’t help but feel a sense of frustration. We’ve invested so much time and effort into setting up our infrastructure, only for it to become a source of headaches and wasted productivity. The temptation is there to simply stick with what we have, but deep down, I know that’s not the right path.

Maybe eBPF will save us. Maybe Argo CD or Flux can help us manage our deployments better. But at this point, it feels like every solution comes with its own set of challenges. The complexity fatigue is real, and it’s starting to affect morale. We need a radical change, something that simplifies everything without sacrificing the benefits we’ve gained from Kubernetes.

In the end, maybe it’s about finding the right balance between automation and manual intervention. Maybe it’s about investing in better tooling and documentation. Or perhaps it’s as simple as taking more time to understand our own infrastructure before making changes.

For now, I’ll keep plugging away at these issues, one deployment at a time. The road ahead is long, but we’ll get there. After all, that’s what engineers do—solve problems, even when they seem insurmountable.

Until next time, Brandon