$ cat post/devops-drama-and-the-chaos-of-ops.md

DevOps Drama and the Chaos of Ops

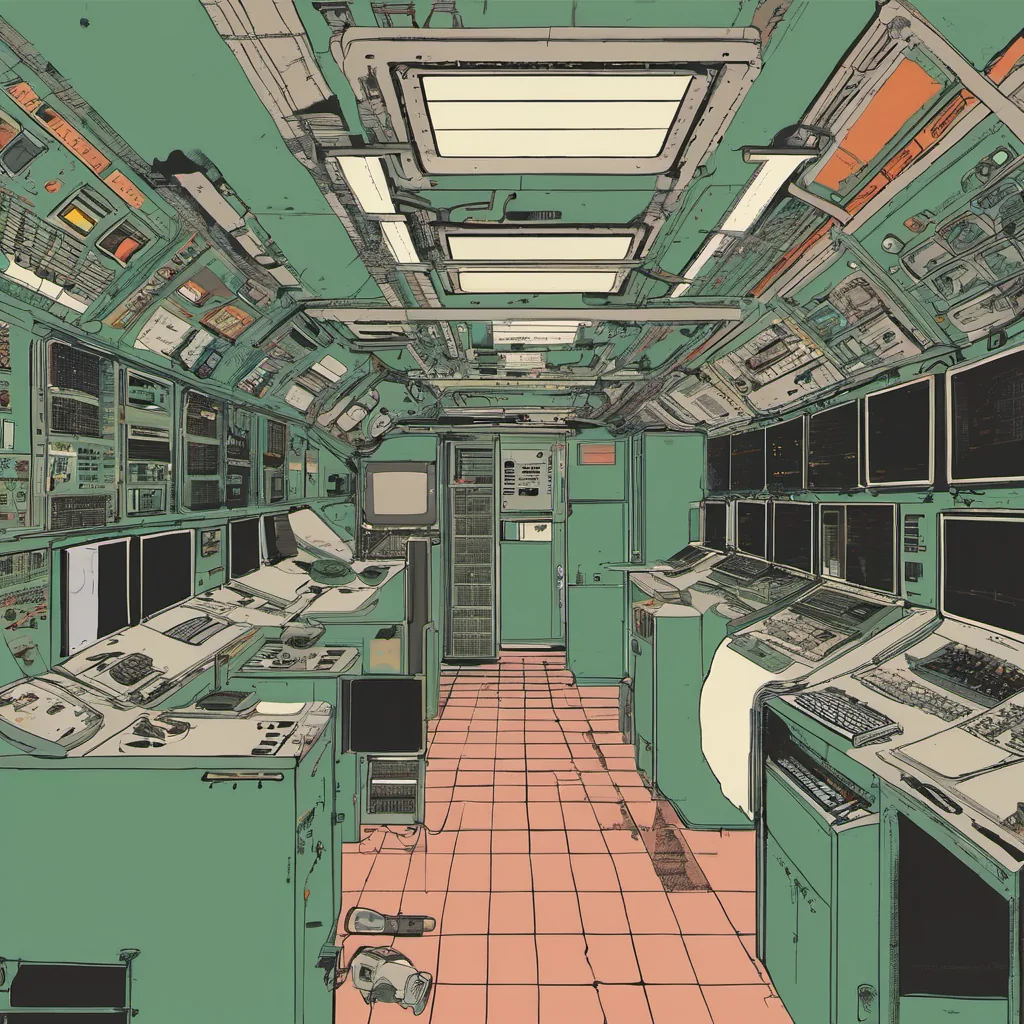

November 29, 2010, was a day when I felt like I was on the cusp of something big in ops. Back then, we were still navigating the chaos of DevOps and the new world order it promised. Chef and Puppet were duking it out for our hearts and minds, and Netflix’s Chaos Monkey was just starting to make waves with its ruthless approach to system resilience.

I was working on a project where we were trying to refactor our infrastructure using Chef. We had been running everything manually until then, and the prospect of automation was exhilarating but terrifying. The first step was always the hardest: deciding how much to do at once. My team and I ended up doing it in small chunks, testing each change carefully before moving on to the next.

One particularly frustrating day, an update we expected to be straightforward ended up taking hours. It turned out that our old scripts were littered with hard-coded values where environment variables should have been. As soon as Chef ran and replaced those hard-coded values with variables from our configuration management database, everything went south. We had to roll back the changes, identify which variable was causing the problem, fix it, and then try again.
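A pre-flight check is one way to catch this kind of failure before anything is applied. What follows is a minimal Python sketch, not the tooling from this story: the `${NAME}` placeholder syntax and the `missing_variables` and `render` helpers are hypothetical, and the configuration database is stood in for by a plain dictionary.

```python
import re
from typing import Mapping, Set

# Assumption: templates mark variables as ${NAME}. Chef's own templates
# use ERB, so this syntax is only for illustration.
PLACEHOLDER = re.compile(r"\$\{(\w+)\}")


def missing_variables(template: str, values: Mapping[str, str]) -> Set[str]:
    """Return placeholder names that have no non-empty value."""
    names = set(PLACEHOLDER.findall(template))
    return {name for name in names if not values.get(name)}


def render(template: str, values: Mapping[str, str]) -> str:
    """Substitute every placeholder, refusing to run if any is unresolved."""
    missing = missing_variables(template, values)
    if missing:
        # Fail before writing anything, so there is nothing to roll back.
        raise KeyError(f"unresolved variables: {sorted(missing)}")
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)
```

Calling `missing_variables` across every template before a run names the offending variable up front, instead of leaving it to be found after a rollback.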

It’s funny how much you can learn when things go wrong. In this case, I learned that cleaning up old code is never a one-time task. Each change, each refactoring session, might reveal more issues. And sometimes, the simplest of changes can have the biggest impact. The experience left me with a newfound respect for the importance of having clean, modular code in your infrastructure.

Meanwhile, out in the wild, Steve Jobs was making headlines again, but this time it was for something different. I remember reading his comments on mobile devices and feeling like he was just trying to stoke some drama. The tech world can be a bit like that sometimes: full of ups and downs, with big personalities dictating trends.

Salesforce’s acquisition of Heroku, announced that December, was another major event that year. We were all curious whether it would change the landscape of cloud hosting for good or just be a temporary blip. Cloud computing was still in its infancy, but it felt like we were on the verge of something revolutionary. AWS kept shipping new services, and everyone was excited to see what new toys it would bring to the table.

Back at work, I found myself in the thick of another argument about whether Chef or Puppet was the way forward. Both tools had their strengths, but neither was a perfect fit for our environment. We needed something that could handle complex systems while still being easy enough for junior engineers to understand and modify. After much debate (and a few heated discussions), we decided to stick with Chef, mainly because it seemed more approachable to new members of the team.

The NoSQL hype was in full swing, and I couldn’t help but feel a bit skeptical about how many of these databases were just trying to solve problems that SQL had already solved well. But hey, if you’re working on something big, sometimes you need to be open to new ideas.

As for DevOps, the term was still evolving, and we were trying to figure out exactly what it meant in practice. Chaos Monkey was starting to gain traction as a way of ensuring our systems could handle failure gracefully. We didn’t have that kind of tool yet, but I knew that one day, we would need something like it.

So there you have it: a snapshot of November 29, 2010, from the perspective of an ops engineer in the middle of all this chaos. It was a time when every decision felt significant and every problem seemed monumental. But even amid the drama, I couldn’t wait to see what new tools and techniques would emerge next.

Cheers, Brandon