Kubernetes Wars: A Battle of the Beast

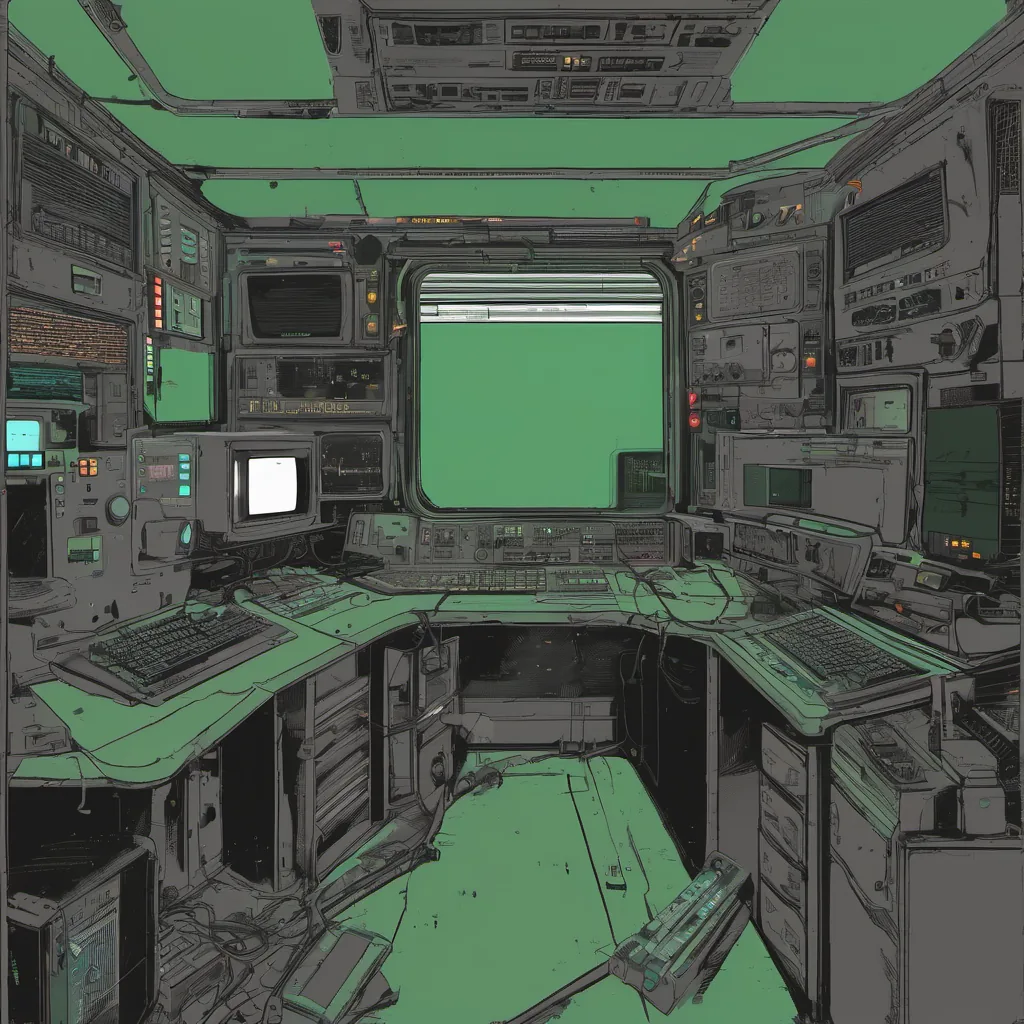

May 29, 2017. Kubernetes was taking off. Helm and Istio were still in their early days, but momentum in the container orchestration wars had shifted from Docker Swarm to Kubernetes. It felt like a new era in platform engineering was dawning. I found myself in the thick of it at work, wrangling Kubernetes clusters and trying to make sense of this beast.

It wasn’t just about the technology; it was about managing chaos. Our team had been tasked with setting up a Kubernetes cluster for our development environments. We were excited to embrace the promise of self-healing, automated deployments, and rolling updates. But as soon as we started digging into the details, reality hit hard.

We quickly ran into some issues:

- Node Management: Managing nodes was no small feat. Keeping their configurations consistent and their Kubernetes versions up to date was a full-time job in itself.

- Networking: Networking inside a Kubernetes cluster is complex. We had to wire up Services and ingress controllers on top of our Deployments just to make our applications reachable from outside the cluster.

- Security: Security on a Kubernetes cluster can be a nightmare. Managing secrets, RBAC (Role-Based Access Control), and network policies was a steep learning curve for us.

To complicate matters, we were using Terraform 0.x for infrastructure as code. Back then it was still pre-1.0, and there were few established best practices or mature modules for Kubernetes clusters. It felt like every time I thought I had everything figured out, something would change, leaving me scrambling to adapt.

During one particularly frustrating debugging session, we were having issues with pods failing to start due to network policies. The logs didn’t provide much insight, so I spent hours tracing the issue back to our configuration. Finally, after a combination of kubectl commands and some trial-and-error, I found the culprit—a misconfigured NetworkPolicy that was blocking the necessary ports.

That’s when I realized just how deep Kubernetes could go. It wasn’t enough to know how to deploy containers; you needed to understand networking at a low level, handle security with care, and manage a distributed system with reliability in mind.
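To make that NetworkPolicy failure mode concrete, here is a minimal Python sketch of how ingress port matching behaves. It is a deliberate simplification, not how Kubernetes implements it: it ignores `from` peers, `policyTypes`, named ports, and port ranges. The policy name `api-ingress`, the `app: api` label, and ports 8080 and 9090 are hypothetical values for illustration, not our actual configuration.

```python
def selects(policy, pod_labels):
    """Return True if the policy's podSelector matches the pod's labels.

    An empty or missing matchLabels selects every pod in the namespace.
    """
    match = policy["spec"].get("podSelector", {}).get("matchLabels", {})
    return all(pod_labels.get(key) == value for key, value in match.items())


def ingress_allowed(policies, pod_labels, port, protocol="TCP"):
    """Simplified check: may traffic reach `port` on a pod with `pod_labels`?"""
    selecting = [p for p in policies if selects(p, pod_labels)]
    if not selecting:
        # No policy selects the pod, so it is not isolated: all traffic allowed.
        return True
    for policy in selecting:
        for rule in policy["spec"].get("ingress", []):
            ports = rule.get("ports")
            if not ports:
                # A rule without a ports list allows every port.
                return True
            for entry in ports:
                same_port = entry.get("port") == port
                same_protocol = entry.get("protocol", "TCP") == protocol
                if same_port and same_protocol:
                    return True
    # The pod is selected by at least one policy, but no rule matched.
    return False


# Hypothetical policy: only TCP 8080 is opened for pods labeled app=api.
policy = {
    "metadata": {"name": "api-ingress"},
    "spec": {
        "podSelector": {"matchLabels": {"app": "api"}},
        "ingress": [{"ports": [{"protocol": "TCP", "port": 8080}]}],
    },
}

print(ingress_allowed([policy], {"app": "api"}, 8080))  # listed port
print(ingress_allowed([policy], {"app": "api"}, 9090))  # port missing from the policy
print(ingress_allowed([policy], {"app": "web"}, 9090))  # pod not selected by any policy
```

The behavior worth noticing is that once any policy selects a pod, every port not explicitly listed is closed. A single missing port entry is enough to cut off traffic without any obvious error in the application logs.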

Another memorable moment was arguing about where to host our cluster—on-premises or on AWS. The decision wasn’t just technical but also tied into budget constraints and long-term strategy. We debated the pros and cons of each option, weighing factors like cost, support, and scalability.

One day, we decided to take a step back and evaluate what we had built so far. I remember looking at our cluster setup in Grafana, marveling at how much data it could visualize—CPU usage, memory metrics, pod states—and wondering how we could leverage this information better.

As the days went by, Kubernetes seemed to get more complex with every new release. But amidst all the challenges, there was a sense of excitement and possibility. The community was growing rapidly, and the ecosystem was evolving at breakneck speed.

That evening, as I sat in front of my laptop, troubleshooting yet another issue, I felt a mix of frustration and anticipation. Frustration because Kubernetes was still a work in progress, but anticipation for what it would bring next. The future of container orchestration seemed bright, and I was eager to see where this journey would take us.

Kubernetes Wars: A Battle of the Beast. That’s how I think about our experience with Kubernetes back then—a challenging yet exhilarating battle that taught us a lot about resilience and adaptability in tech.