memory leak found / I read the RFC again / it boots from the past

Title: The Year of Overheads

January 29, 2007

Today I’m reflecting on the early days of my career in tech, when overheads were a nightmare and cloud computing was still just a whisper. 2006-2007 was a fascinating time to be an engineer. Companies like GitHub were about to launch, AWS EC2 and S3 had already begun their journey, and the iPhone was about to change the world, though for those of us on the backend it felt more like a series of endless debugging sessions.

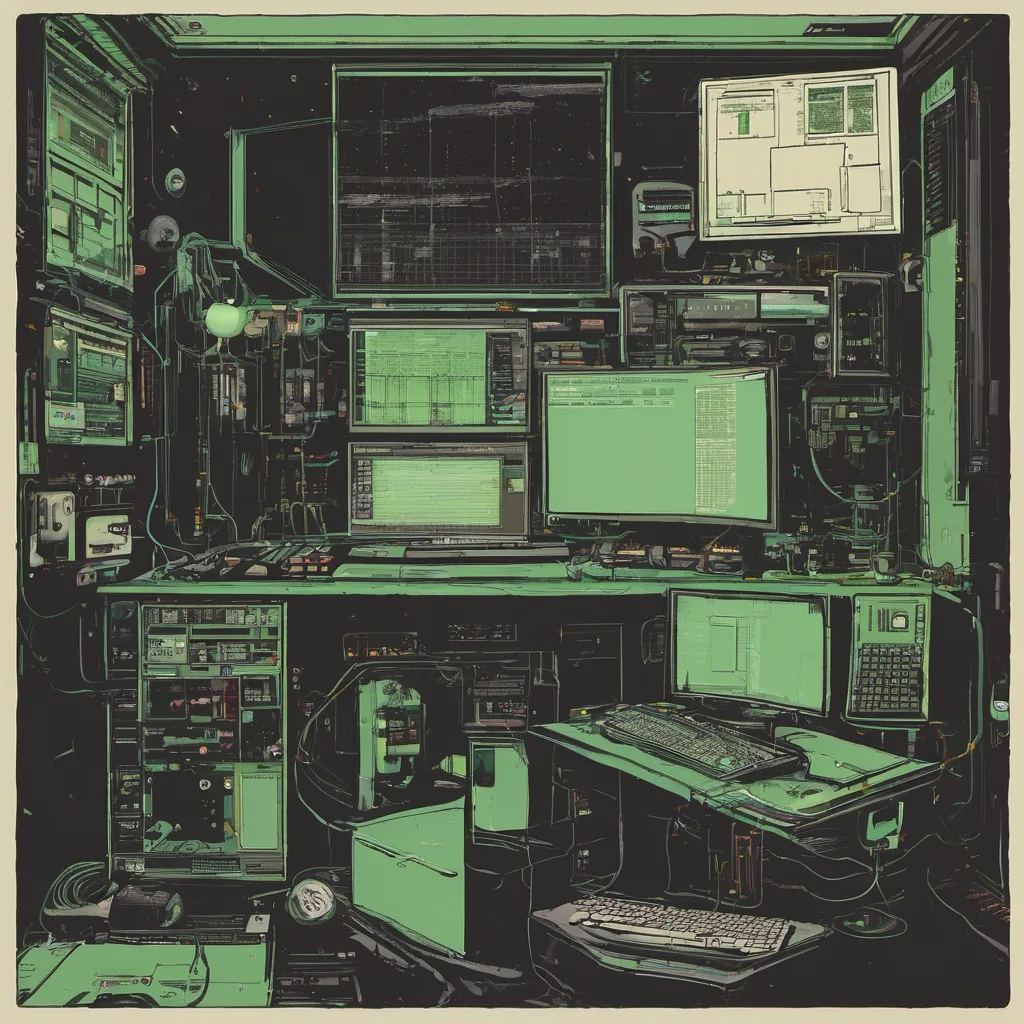

Back then, we operated in the realm of colocation centers. I was part of a small team managing our servers in one of these giant data centers, full of humming racks packed with enough hardware to fill an entire living room. It was 2006, and we were just starting to wrap our heads around virtualization. The idea that you could run multiple virtual machines on the same physical server seemed like science fiction. But here we were, trying to make it work in a live production environment.

One day, I found myself deep in a debugging session. Our custom app servers kept crashing at the most inconvenient times: usually just before a big client demo, or right when the boss was leaving on vacation (because let’s be real, there’s no such thing as a non-critical bug). The crashes happened so often that we jokingly blamed “the Murphy effect,” but in reality they came down to poorly written code and fragile infrastructure.

We spent countless nights trying to figure out what was causing these crashes. It wasn’t just the application itself; it was all the moving parts around it. Load balancers, network configurations, firewalls: each had its own quirks and could cause chaos if misconfigured. We were constantly juggling these components while keeping a close eye on our logs, which we watched by SSHing into each server.

One particularly frustrating day, I spent hours combing through the logs only to find that the culprit was a single line of code in one of our scripts that caused a resource leak. When I finally fixed it and saw the stability improve, it felt like a small victory. But these were just minor skirmishes compared to what we faced when trying to scale our infrastructure.

As we grew, so did the complexity of our setup. We started using tools like Nagios for monitoring and Puppet for configuration management, but they added their own overhead. The more layers you add, the harder it becomes to manage everything cohesively. I remember feeling overwhelmed by the sheer number of moving parts and how difficult it was to keep them all in sync.

That’s when I realized we needed something better than what we had. Enter AWS EC2 and S3: technologies that promised a new era of scalability and reliability. They seemed like the answer to our prayers, but adoption was slower in practice; at first, we only dipped our toes into the cloud with a few small proof-of-concept projects. But as more companies started talking about moving their workloads to the cloud, it became clear that this was where things were headed.

Looking back, I can see now that 2007 was really the year of overheads—both in terms of our code and our infrastructure. We were fighting a constant battle against complexity, trying to keep everything running smoothly despite all the moving pieces. It was exhausting, but it also pushed us to innovate and find better ways to manage our systems.

Today, those battles look different with Docker and Kubernetes, but they’re still there. The main difference is that we now have more tools at our disposal to make these challenges a bit less daunting.

So here’s to 2007—the year of overheads. It was a time when things were harder, but also full of potential. We learned a lot, and the lessons from those days still resonate with me today as I navigate the ever-evolving landscape of tech infrastructure.