$ cat post/root-prompt-long-ago-/-the-terminal-remembers-me-/-the-secret-rotated.md

root prompt long ago / the terminal remembers me / the secret rotated

Title: Kubernetes Complexity Fatigue: A Personal Perspective

As I sit down to write this blog post on April 29, 2019, the tech world is buzzing with a mix of excitement and frustration. On one hand, there’s the first-ever image of a black hole, released earlier this month, a reminder that while we build our digital castles, nature keeps outdoing us with its grand designs. On the other hand, a widely shared post titled “Google Is Eating Our Mail,” about Google’s grip on email, is making the rounds and highlighting the shifting sands under our feet.

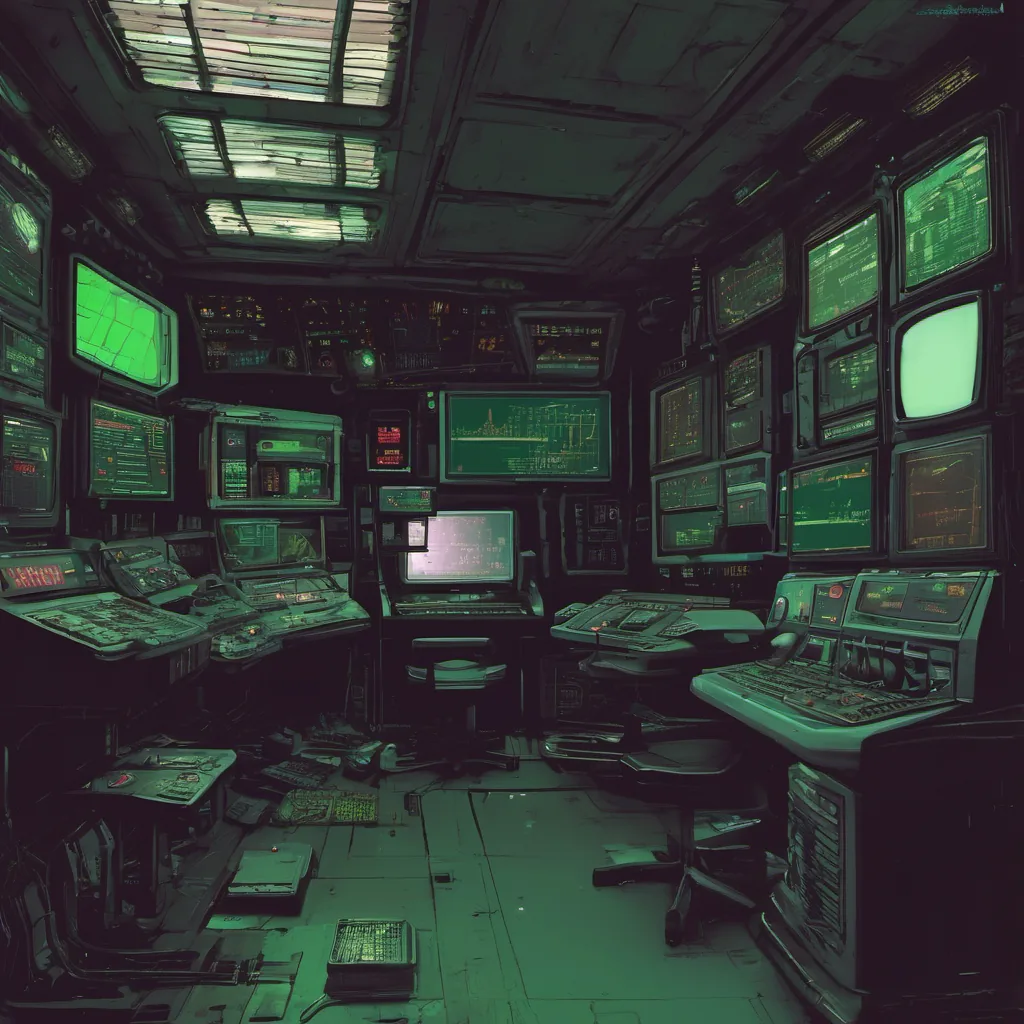

But for me, as an engineering manager and platform engineer at the height of Kubernetes complexity fatigue, my thoughts are focused on a different set of challenges: managing the intricate web of pods, services, and configurations that make up our cluster. It’s 2019, and we’re still grappling with the same issues that plagued us in 2018, only now it feels like the battle is even more complex.

The Complexity Conundrum

Every day, I find myself arguing about whether to stick with Kubernetes as it is or embrace other tools for managing our infrastructure. GitOps tools such as Argo CD and Flux are growing in popularity, offering a more declarative way of managing our clusters: the desired state lives in Git, and an agent keeps reconciling the cluster toward it. Yet these tools come with their own set of challenges: learning curves, integration issues, and the ever-present fear of breaking something.
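
To make the "declarative" part concrete, here is a toy sketch of the reconcile idea behind GitOps: compare what Git says should exist with what the cluster reports, and derive the actions that close the gap. This is not how Argo CD or Flux are implemented. The `plan_sync` function, the `Manifest` type, and the resource names are all made up for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical stand-in for real manifests: resource name -> version tag.
Manifest = Dict[str, str]


def plan_sync(desired: Manifest, actual: Manifest) -> List[Tuple[str, str]]:
    """Return (action, name) pairs that would make `actual` match `desired`."""
    actions: List[Tuple[str, str]] = []
    # Anything declared in Git but missing or different in the cluster.
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != desired[name]:
            actions.append(("update", name))
    # Anything running in the cluster that Git no longer declares.
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


if __name__ == "__main__":
    desired = {"web": "v2", "worker": "v1"}  # what the Git repo declares
    actual = {"web": "v1", "cron": "v1"}     # what the cluster reports
    for action, name in plan_sync(desired, actual):
        print(action, name)
```

The appeal is that nobody writes the steps by hand. The fear of breaking something comes from the same place: the `delete` branch runs whenever a resource disappears from Git, whether or not that was intentional.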

One recent debug session stands out vividly. Our Kubernetes cluster had been acting up again, and as I dug into the logs, it became clear that a custom resource definition (CRD) we’d implemented was misbehaving. The custom resource was supposed to drive our database backups, but its controller wasn’t triggering the expected events. After hours of tracing through the code, I finally found the issue: a subtle race condition in how our controller was handling notifications.
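
I can’t share the actual controller code, so what follows is a generic illustration rather than our bug. It shows one common shape this kind of race takes: notifications arrive concurrently and out of order, and a handler that checks and then acts on shared state without a lock can let a stale notification win. The sketch assumes each notification carries a monotonically increasing generation number. The class and resource names are hypothetical.

```python
import threading
from typing import Dict, List, Tuple


class BackupEventHandler:
    """Hypothetical handler; illustrates the pattern, not a real controller."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._last_seen: Dict[str, int] = {}        # resource name -> generation
        self.triggered: List[Tuple[str, int]] = []  # backups actually started

    def on_notification(self, name: str, generation: int) -> bool:
        """Trigger a backup only if this notification is newer than any seen."""
        # The check and the update happen under one lock, so two threads
        # cannot both pass the check with the same stale view of _last_seen.
        with self._lock:
            if generation <= self._last_seen.get(name, -1):
                return False  # stale or duplicate notification, ignore it
            self._last_seen[name] = generation
            self.triggered.append((name, generation))
            return True


if __name__ == "__main__":
    handler = BackupEventHandler()
    threads = [
        threading.Thread(target=handler.on_notification, args=("nightly-db", g))
        for g in (3, 1, 2, 5, 4)  # notifications delivered out of order
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # Which generations get through depends on thread timing, but the
    # accepted ones are always strictly increasing.
    print([g for _, g in handler.triggered])
```

Without the lock, two threads can both read the old generation, both decide they are newer, and both trigger a backup, or a late stale notification can overwrite a fresh one. That kind of bug hides well in logs because each individual line looks reasonable.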

It’s moments like these that make me wonder if we’re overcomplicating things. Is the complexity necessary? Or are we just adding layers upon layers without really solving our core problems?

The Case for Simplicity

In my journal, I’ve been wrestling with this question: when do we draw the line and say enough is enough? Should we continue to layer on new abstractions, or should we step back and reassess if there’s a simpler way to achieve our goals?

One idea that has gained traction recently is eBPF. It’s a fascinating technology that lets us run small, sandboxed programs at hook points inside the Linux kernel without changing kernel source code or loading kernel modules. While I haven’t had much chance to work with eBPF in my current role, its potential for simplifying and optimizing complex systems makes me hopeful that there are better ways to handle some of our pain points.

Remote Work’s Impact

On a more practical note, the rise of remote-first teams is pushing us to rethink how we manage our clusters. With many developers now working from home or co-working spaces, ensuring consistent access and performance becomes even more challenging. Internal developer portals such as Backstage are helping us centralize our knowledge and make it easier for team members to onboard and collaborate effectively.

Reflections

As I look back on this month, it feels like the tech world is at a crossroads. We’re making strides with new tools and technologies, but we’re also facing growing pains as these systems mature. The challenges of managing Kubernetes clusters, along with the constant need for innovation, are keeping us busy and engaged.

For now, I’ll keep debugging, experimenting, and questioning whether there’s a simpler path forward. After all, in tech, the journey is just as important as the destination.

That’s where I’m at on this April day. How about you? What challenges are you facing in your tech journey?