$ cat post/compile-errors-clear-/-i-rm-minus-rf-once-/-we-were-on-call-then.md

compile errors clear / I rm minus rf once / we were on call then

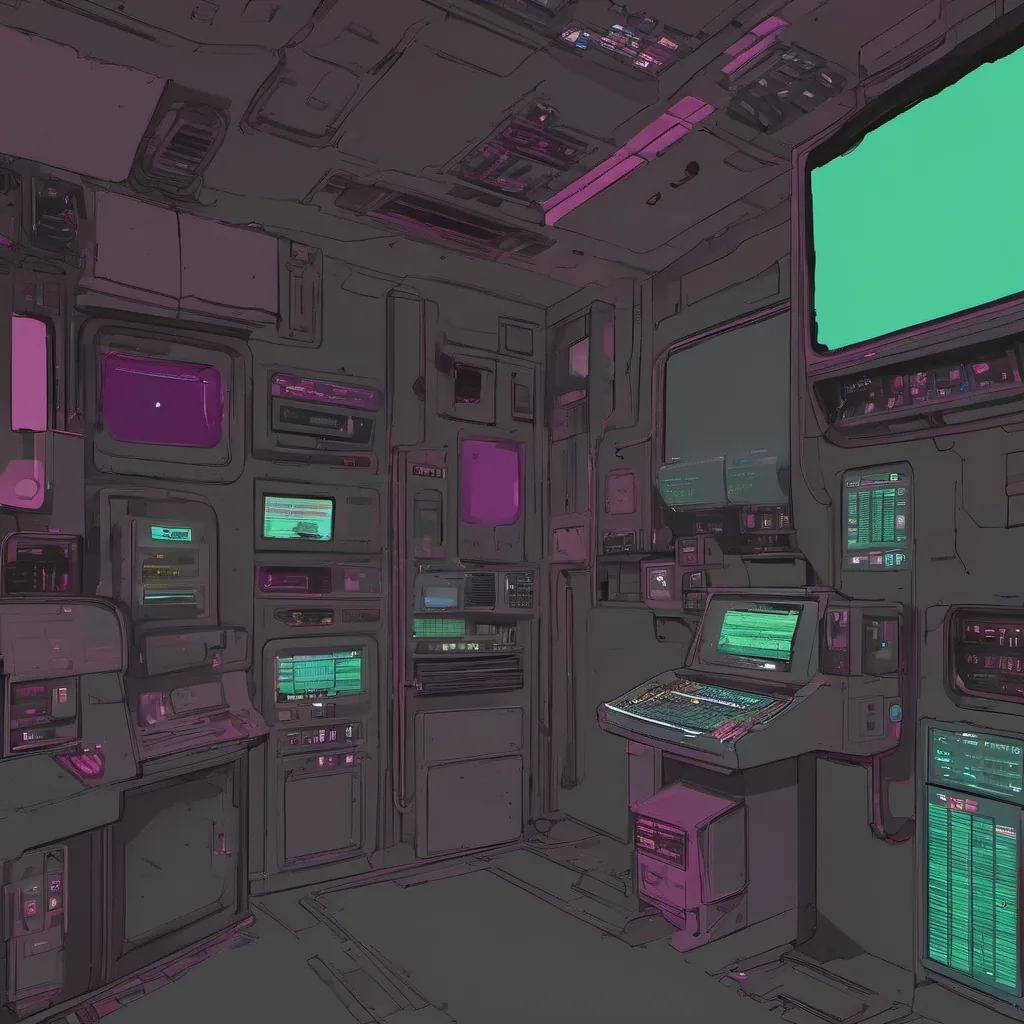

Title: Debugging AI Copilot Nightmares

July 28, 2025. Another day in the life of an engineering manager trying to stay sane amidst the chaos that is modern tech.

Today, I spent most of my morning dealing with yet another instance where our LLM copilots went off the rails. It’s become common enough that “copilot nightmares” is now a recurring part of our team’s lingo. Let me take you through one such incident and share some thoughts on how we’re trying to tame these wild beasts.

The Incident

It was 9 AM, and our ops team had just flagged an issue: our chatbot, which was supposed to help engineers with code snippets and documentation searches, started suggesting some seriously off-the-wall stuff. One developer was getting suggestions like “add a function called ‘flying_sheep’ that prints ‘Hello, Universe!’ 7 times.” This wasn’t exactly helpful. And then there were more concerning ones: “implement the ‘find_primes’ function using recursion” when they were working on a backend service with no prime number logic.

The copilot was clearly confused and generating random code suggestions. I pulled up the logs from our AI-assisted ops pipeline. The error messages looked like gibberish to me at first glance: something about “context mismatch” and “token overflow.” But then I spotted a pattern: all of these issues traced back to recent changes in how the copilot was handling context.

The Diagnosis

After some digging, we realized that there had been an unexpected change in our eBPF (extended Berkeley Packet Filter) instrumentation, which we use to monitor and optimize application performance. This latest update had inadvertently altered the way the copilot interacted with the application’s context. Specifically, the context data was now being pruned too aggressively, so the copilot was working from too little information about what the developer was actually doing, and that led to these bizarre suggestions.

I spent a good hour arguing with the AI model itself. Well, trying to figure out what exactly it thought was happening. At that point it seemed like the copilot’s internal state had been corrupted during its training phase and then carried over into production, which would have made it much harder for us to debug using traditional methods. This led me to wonder about the long-term stability of these AI-native tools as they become more deeply integrated into our ops pipelines.

The Fix

I worked with my team on a fix that involved reverting some recent changes in our eBPF instrumentation and implementing a more robust context management system for the copilot. We added some logging to capture more granular data about how the copilot was interacting with different parts of the application. This helped us quickly identify where things were going wrong and make targeted adjustments.
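
To make the logging idea concrete, here is a minimal sketch of context pruning that records what it drops and warns when too little context is left. It is illustrative only: `ContextChunk`, `prune_context`, the word-count token estimate, and the `max_tokens`/`min_tokens` parameters are all assumptions made up for this example, not our production setup.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("copilot.context")


@dataclass
class ContextChunk:
    source: str    # hypothetical label, e.g. "open_file" or "recent_edits"
    text: str
    priority: int  # higher means more important to keep


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def prune_context(chunks, max_tokens, min_tokens):
    """Keep the highest-priority chunks that fit in max_tokens, logging every drop.

    Returns (kept_chunks, tokens_used). Logs a warning when the surviving
    context is smaller than min_tokens, which is the situation we want to
    notice early instead of discovering it through odd suggestions.
    """
    kept, used = [], 0
    for chunk in sorted(chunks, key=lambda c: c.priority, reverse=True):
        cost = estimate_tokens(chunk.text)
        if used + cost <= max_tokens:
            kept.append(chunk)
            used += cost
        else:
            logger.info("dropped context source=%s tokens=%d", chunk.source, cost)
    if used < min_tokens:
        logger.warning(
            "context below floor: used=%d min=%d kept=%d of %d chunks",
            used, min_tokens, len(kept), len(chunks),
        )
    return kept, used
```

With log lines like these, a sudden jump in dropped chunks or floor warnings after a deploy points straight at the pruning step rather than at the model.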

We also decided to implement a fallback mechanism where, if the copilot’s suggestions start getting too weird, it would default back to a more conservative mode that relies less on context and more on predefined rules. It’s not perfect, but it buys us some time to figure out the root cause of these issues without totally breaking our workflow.
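
A fallback like this can be sketched in a few lines. The heuristic below is an assumption chosen for illustration, not the exact check we run: it treats a suggestion as "weird" when most of its identifiers never appear in the surrounding context, and flips to conservative mode after enough weird suggestions in a short window. `unfamiliar_ratio`, `FallbackGuard`, and the default thresholds are all hypothetical.

```python
import re
from collections import deque

IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def unfamiliar_ratio(suggestion: str, context: str) -> float:
    """Fraction of identifiers in the suggestion that never appear in the context.

    Deliberately crude: language keywords and words inside string literals
    count as identifiers too.
    """
    suggested = set(IDENTIFIER.findall(suggestion))
    if not suggested:
        return 0.0
    known = set(IDENTIFIER.findall(context))
    return len(suggested - known) / len(suggested)


class FallbackGuard:
    """Switches to conservative mode after too many weird suggestions."""

    def __init__(self, threshold=0.5, window=5, max_strikes=3):
        self.threshold = threshold          # ratio above which a suggestion is "weird"
        self.recent = deque(maxlen=window)  # rolling record of weird / not weird
        self.max_strikes = max_strikes      # weird suggestions tolerated per window
        self.conservative = False

    def observe(self, suggestion: str, context: str) -> bool:
        """Record one suggestion and return True if conservative mode is active."""
        self.recent.append(unfamiliar_ratio(suggestion, context) > self.threshold)
        if sum(self.recent) >= self.max_strikes:
            self.conservative = True
        return self.conservative

    def reset(self) -> None:
        """Return to normal mode, e.g. after a human has reviewed the incident."""
        self.recent.clear()
        self.conservative = False
```

Conservative mode is sticky on purpose in this sketch: once tripped, it stays on until someone calls `reset()`, so the copilot does not flap between modes while we are still investigating.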

The Future

This incident made me reflect on how far we’ve come with AI in ops, yet how much further we have to go. While tools like copilots can be incredibly powerful, they also introduce new kinds of challenges that require a different set of skills and tools to manage. We’re now living in an era where engineers must both wield these powerful assistants and remain vigilant against their occasional missteps.

As I finish up today’s debugging session and head home, I’m left wondering what other surprises AI-native tooling might bring. But for now, I’ll focus on making sure our copilots are more reliable and less prone to generating flying sheep code. Until next time, happy coding!

This is just another day in the life of managing infrastructure with all its quirks and challenges. Hope this gives you a glimpse into what it’s like to work through these issues!