$ cat post/a-ticket-unopened-/-the-abstraction-leaked-everywhere-/-the-stack-still-traces.md

a ticket unopened / the abstraction leaked everywhere / the stack still traces

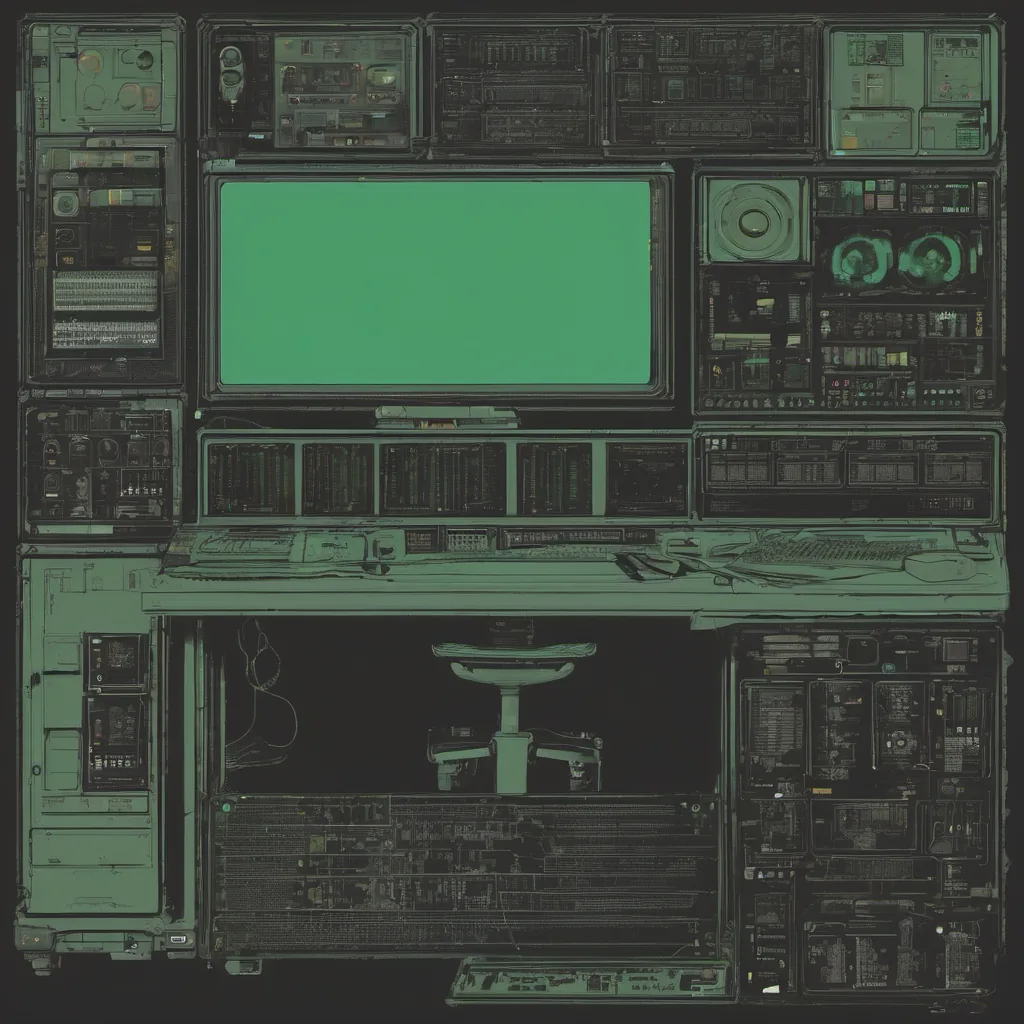

Title: Kubernetes Complexity Fatigue? I’ll Take That as a Compliment

December 28, 2020. The calendar says it’s the holidays, but my mind is still on work. I mean, how can you not think about Kubernetes when you’re dealing with a system that’s so complex it needs its own ecosystem to manage? It’s like needing a Swiss Army knife just to look after each tool in your garage.

Today, I’ve been wrestling with an issue that has really driven home the complexity of Kubernetes and the ecosystem around it. We had a service that had started to lag, and as usual, we dove into the logs to figure out what was going on.

The Setup

Our infrastructure is built around Kubernetes, but we also use Backstage for our internal developer portal and ArgoCD for GitOps. Flux is doing its thing behind the scenes, keeping everything in sync with our Git repository. It’s a beautiful system, but it can be a nightmare when you need to troubleshoot.

The Issue

We noticed that one of our microservices was lagging under load. At first we assumed it was just a scaling issue, but as we dug deeper into the logs and metrics, things got more interesting: there were intermittent spikes in CPU usage that didn’t match what we saw on the live dashboard.
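One common reason a spike shows up in raw metrics but not on a dashboard is downsampling: many dashboards plot an aggregate (often an average) per time bucket, which flattens short bursts. The sketch below uses made-up per-second CPU samples (hypothetical data, not from this incident) to show how a 2-second burst to 95% almost disappears in a 30-second average while a max-per-bucket view keeps it visible.

```python
from statistics import mean

def downsample(samples, bucket, agg):
    """Collapse per-second samples into fixed-size buckets using `agg` (e.g. mean or max)."""
    return [agg(samples[i:i + bucket]) for i in range(0, len(samples), bucket)]

# Hypothetical per-second CPU utilization (%): a steady 20% baseline
# with a 2-second burst to 95% in the second half of the minute.
cpu = [20.0] * 60
cpu[40] = cpu[41] = 95.0

avg_view = downsample(cpu, 30, mean)  # what an averaging dashboard would plot
max_view = downsample(cpu, 30, max)   # what a max-per-bucket view would plot

print("mean per 30s:", avg_view)  # mean per 30s: [20.0, 25.0]
print("max per 30s: ", max_view)  # max per 30s:  [20.0, 95.0]
```

The averaged view only nudges from 20% to 25%, which is easy to read as noise; plotting the max (or a high percentile) per bucket alongside the mean is one way to keep short bursts from hiding.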

After some troubleshooting, I realized that we had a few eBPF programs running to gather network and system-level insights. These are fantastic for deep visibility into performance issues, but they can be tricky to set up and debug when something goes wrong.

The Debugging Process

I spent hours looking through the eBPF program code, trying to spot any inconsistencies or bugs. The more I looked, the more I suspected that the issue wasn’t with the microservice at all; it might be related to how we were handling traffic between services.

That’s when it hit me: the real problem was in our service mesh. We were using Istio for service discovery and traffic management, but apparently, there had been some recent changes or misconfigurations that were causing these spikes in CPU usage.

The Fix

Armed with this knowledge, I started digging into Istio’s configuration. It wasn’t pretty: multiple YAML files spread across different directories. But after a few hours of tweaking and redeploying through ArgoCD, we finally hit the sweet spot.

The service’s lag dropped drastically, the logs cleared up, and our monitoring dashboard showed smoother lines. It was satisfying to get it working again.
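With Istio configuration scattered across several YAML files and directories, one thing worth checking is whether more than one VirtualService claims the same host; Istio’s traffic-management best-practices documentation discusses caveats around splitting a single host’s routing across multiple resources. The sketch below is a hypothetical lint, not part of the fix described above: it assumes the manifests have already been parsed into plain Python dicts (for example with a YAML loader) and reports any host listed by more than one VirtualService. The manifest names are invented for illustration.

```python
from collections import defaultdict

def find_shared_hosts(manifests):
    """Map each host to the VirtualServices that list it, keeping only hosts claimed more than once.

    `manifests` is a list of already-parsed Kubernetes objects (plain dicts).
    Hosts are compared exactly as written; short names are NOT expanded to
    fully qualified names, so treat this as a rough first pass only.
    """
    owners = defaultdict(list)
    for m in manifests:
        if m.get("kind") != "VirtualService":
            continue
        meta = m.get("metadata", {})
        name = f'{meta.get("namespace", "default")}/{meta.get("name", "?")}'
        for host in m.get("spec", {}).get("hosts", []):
            owners[host].append(name)
    return {host: names for host, names in owners.items() if len(names) > 1}


# Hypothetical manifests, as they might look after loading YAML from two directories.
manifests = [
    {"kind": "VirtualService",
     "metadata": {"name": "orders", "namespace": "shop"},
     "spec": {"hosts": ["orders.shop.svc.cluster.local"]}},
    {"kind": "VirtualService",
     "metadata": {"name": "orders-canary", "namespace": "shop"},
     "spec": {"hosts": ["orders.shop.svc.cluster.local"]}},
    {"kind": "DestinationRule",  # ignored: only VirtualServices are checked
     "metadata": {"name": "orders", "namespace": "shop"},
     "spec": {"host": "orders.shop.svc.cluster.local"}},
]

for host, names in find_shared_hosts(manifests).items():
    print(f"{host} is claimed by: {', '.join(names)}")
```

A check like this could run in CI before ArgoCD syncs anything, so overlapping routing rules get a human look before they reach the cluster.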

Reflections

This incident has really brought home the complexity of Kubernetes and its ecosystem. While eBPF and GitOps are powerful tools, they can also make debugging a nightmare when things go wrong. I’ve been thinking about how we can streamline our process to handle these issues more effectively moving forward.

We might need to revisit some of our automation scripts and build better logging mechanisms. Maybe it’s also time to reconsider the balance between giving engineers freedom and providing enough guidance on best practices.
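On the logging side, one possible direction (a sketch under our own assumptions, not something we run today) is structured logs: one JSON object per line, carrying a request ID so a single call can be followed across services and through the mesh. The example uses only Python’s standard `logging` and `json` modules; the `request_id` field and the `orders` logger name are hypothetical choices for illustration.

```python
import json
import logging

class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON line with a few fixed fields."""

    def format(self, record):
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            # `request_id` is a hypothetical field supplied via `extra=`;
            # it falls back to None when a call site doesn't pass one.
            "request_id": getattr(record, "request_id", None),
        }
        return json.dumps(payload)

handler = logging.StreamHandler()  # writes to stderr by default
handler.setFormatter(JsonFormatter())

log = logging.getLogger("orders")
log.addHandler(handler)
log.setLevel(logging.INFO)

# Emits: {"level": "INFO", "logger": "orders", "message": "upstream call slow", "request_id": "abc123"}
log.info("upstream call slow", extra={"request_id": "abc123"})
```

Because every line is valid JSON, a log pipeline can filter on `request_id` directly instead of grepping free-form text, which is the kind of correlation that was painful during this incident.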

The Broader Context

As I reflect on this experience, I can’t help but think about the broader tech landscape right now. Platform engineering is formalizing as a discipline, and internal developer portals like Backstage are becoming more common. SRE roles are proliferating as companies realize the importance of reliability engineering. Meanwhile, the pandemic has pushed all of us to scale our remote infrastructure.

One article that stood out to me this month was “No Cookie for You,” which dealt with privacy concerns around web cookies. It’s a reminder of how user data and security are becoming increasingly important considerations in tech.

Another story about Microsoft Teams vulnerabilities highlighted the importance of security in communication tools, something we definitely have to keep an eye on as we rely more on these platforms for collaboration.

Conclusion

As I wrap up this blog post, it feels like we’ve reached another milestone. Kubernetes and its ecosystem are here to stay, but they’re also a constant reminder that even with all the automation and tools at our disposal, we still need to stay vigilant about performance and security.

So, if anyone out there is feeling the “Kubernetes complexity fatigue” (as it seems some are), I’ll take that as a compliment. It means we’re doing something right.

Happy holidays!