
Kubernetes Complexity Fatigue: A Real Engineer's Perspective

September 27, 2021 felt like the culmination of months of hard work and frustration. The tech world was buzzing with talk of platform engineering, internal developer portals, and SRE roles, all while teams grappled with scaling infrastructure for remote-first work during the ongoing pandemic. At the same time, eBPF was drawing interest, and GitOps tools like ArgoCD and Flux were starting to gain traction.

I was working on a project where we had been experimenting heavily with Kubernetes for application deployment. Managing stateful applications across multiple namespaces while keeping them loosely coupled was becoming increasingly cumbersome. We had hit several roadblocks, and the “Kubernetes complexity fatigue” meme was starting to resonate with me.

One incident stood out. Our monitoring stack, built on Prometheus and Grafana, had started to slow down during peak load. The initial diagnosis pointed to CPU utilization on our Kubernetes nodes as the bottleneck. But as I dug deeper into the logs and metrics, it became clear that the real problem was how we were handling stateful workloads: our StatefulSets weren’t reconciling properly, which left stale data in our monitoring system.
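Whether a StatefulSet has actually caught up with its spec can be read from the fields in its `status`. The sketch below is a minimal heuristic, assuming the object has been fetched with `kubectl get statefulset <name> -o json` and parsed into a dict. It also assumes the `RollingUpdate` strategy without a `partition`, where differing `currentRevision` and `updateRevision` values mean a rollout is still in progress. The object name and revision hashes are hypothetical.

```python
from __future__ import annotations

# Hypothetical StatefulSet, shaped like `kubectl get statefulset -o json` output.
example = {
    "metadata": {"name": "prometheus-db", "generation": 5},
    "spec": {"replicas": 3},
    "status": {
        "observedGeneration": 4,
        "readyReplicas": 2,
        "currentRevision": "prometheus-db-6b9f",
        "updateRevision": "prometheus-db-7c2d",
    },
}


def reconcile_problems(sts: dict) -> list[str]:
    """Return reasons a StatefulSet looks unreconciled (empty list = looks settled)."""
    meta = sts.get("metadata", {})
    spec = sts.get("spec", {})
    status = sts.get("status", {})
    problems = []

    # The controller has not yet acted on the latest spec change.
    generation = meta.get("generation", 0)
    observed = status.get("observedGeneration", 0)
    if observed < generation:
        problems.append(f"observedGeneration {observed} < generation {generation}")

    # spec.replicas defaults to 1; zero-valued status fields are omitted from JSON.
    desired = spec.get("replicas", 1)
    ready = status.get("readyReplicas", 0)
    if ready < desired:
        problems.append(f"only {ready}/{desired} replicas ready")

    # Assumes RollingUpdate with no partition: differing revisions mean a rollout is mid-flight.
    current = status.get("currentRevision")
    update = status.get("updateRevision")
    if current != update:
        problems.append(f"rollout in progress: {current} -> {update}")

    return problems


if __name__ == "__main__":
    for reason in reconcile_problems(example):
        print(reason)
```

A non-empty list doesn't explain *why* reconciliation stalled, but it narrows where to look (pending pods, failing readiness probes, a stuck rollout) before blaming node CPU.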

I found myself wrestling with a combination of tools: Helm for application deployments, Prometheus for monitoring, and Jenkins X for CI/CD pipelines. Each tool had its own set of configuration files that required constant tweaking. It felt like we were drowning in YAML while trying to make sense of the Kubernetes API.

To add fuel to the fire, a Hacker News thread about “a search engine that favors text-heavy sites” sparked some interesting discussion of web design trends and their impact on SEO. For someone who deals with containerized applications deployed via CI/CD pipelines, this was somewhat tangential, but it did make me reflect on how much we rely on tooling that comes with its own quirks.

One of the more exciting developments during this period was the growing attention around eBPF. I had read about it in a few articles and was curious whether it could help with some of our network monitoring tasks. However, the learning curve seemed steep, and there weren’t many concrete examples that addressed our use case directly.

Despite these challenges, I found myself increasingly drawn to tools like Backstage for internal developer portals and ArgoCD for GitOps. These platforms offered a way to centralize our documentation, monitoring, and deployment processes, which promised to ease some of the pain points we were experiencing.
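The core idea behind GitOps tools like ArgoCD and Flux is a reconcile loop: compare the desired state stored in Git with the live state in the cluster, then compute what must change. The following is a toy sketch of that comparison only, not how either tool is actually implemented. It assumes manifests are already parsed into dicts, identifies objects by `(kind, namespace, name)`, and ignores `metadata` and `status` when diffing. The sample resources are hypothetical.

```python
def identity(manifest: dict) -> tuple:
    """Identify an object by (kind, namespace, name).

    Namespace falls back to 'default', a simplification that ignores cluster-scoped kinds.
    """
    meta = manifest["metadata"]
    return (manifest["kind"], meta.get("namespace", "default"), meta["name"])


def comparable(manifest: dict) -> dict:
    """Drop metadata and status, which the cluster mutates on its own."""
    return {k: v for k, v in manifest.items() if k not in ("metadata", "status")}


def plan_sync(desired: list, live: list) -> list:
    """Return (action, identity) pairs that would make live match desired."""
    want = {identity(m): m for m in desired}
    have = {identity(m): m for m in live}
    actions = [("create", key) for key in sorted(want.keys() - have.keys())]
    actions += [
        ("update", key)
        for key in sorted(want.keys() & have.keys())
        if comparable(want[key]) != comparable(have[key])
    ]
    actions += [("prune", key) for key in sorted(have.keys() - want.keys())]
    return actions


# Hypothetical desired state (from Git) and live state (from the cluster).
desired = [
    {"kind": "Deployment", "metadata": {"name": "api", "namespace": "web"}, "spec": {"replicas": 3}},
    {"kind": "Service", "metadata": {"name": "api", "namespace": "web"}, "spec": {"ports": [{"port": 80}]}},
]
live = [
    {"kind": "Deployment", "metadata": {"name": "api", "namespace": "web", "uid": "1234"}, "spec": {"replicas": 2}},
    {"kind": "ConfigMap", "metadata": {"name": "old-flags", "namespace": "web"}, "data": {"beta": "true"}},
]

if __name__ == "__main__":
    for action, key in plan_sync(desired, live):
        print(action, "/".join(key))
```

Real tools have to cope with fields the API server fills in by default, which this naive comparison would flag as drift, and they typically make pruning of objects missing from Git opt-in.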

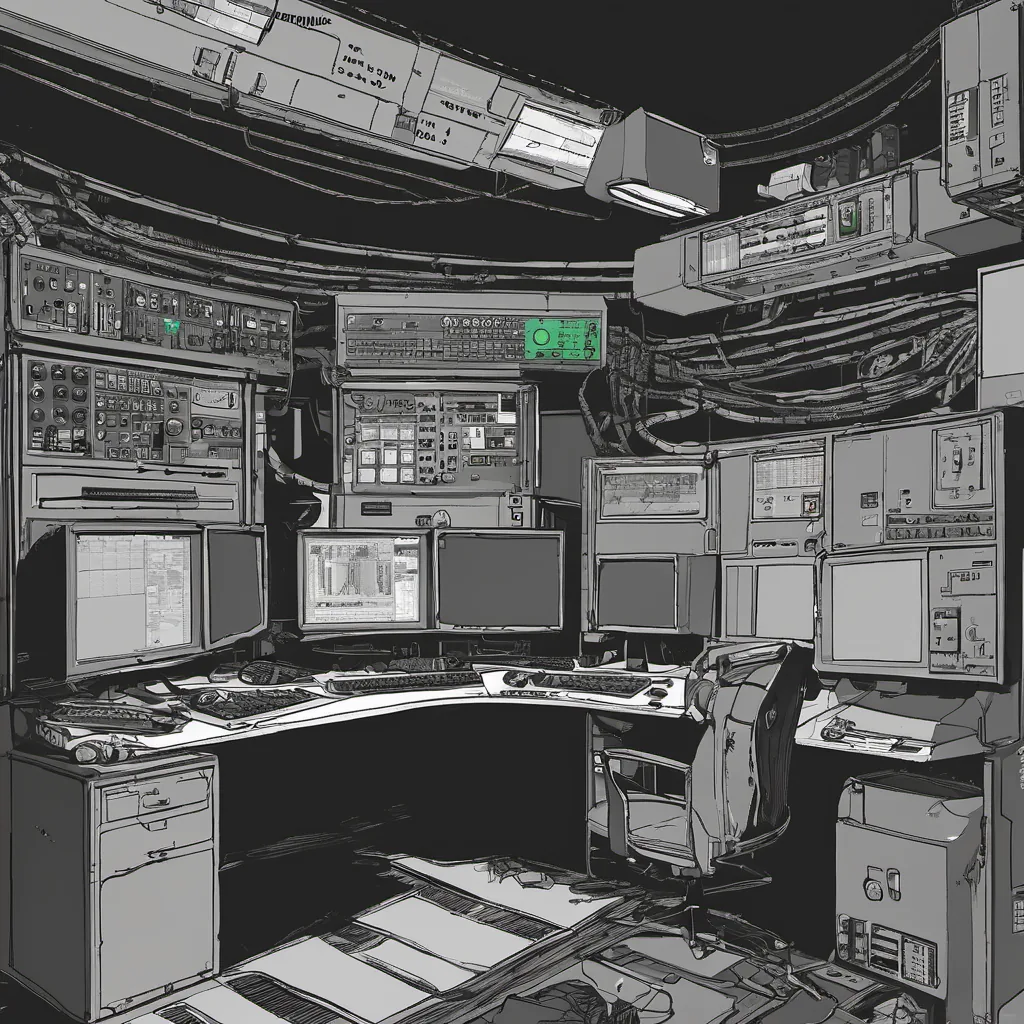

As I sat there in my home office, trying to debug an issue with one of our stateful applications, I couldn’t help but feel like I was in a battle against Kubernetes complexity. The codebase was growing, and so was the number of YAML files needed to manage it all. It was a frustrating yet enlightening experience that highlighted both the power and the pitfalls of using such a complex platform.

In the end, what brought me some solace was focusing on small, incremental improvements to our processes. I started by cleaning up old Helm charts and refactoring our StatefulSet configurations to make them more modular. This didn’t solve everything, but it certainly made the pain more manageable.
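One way to make StatefulSet configuration more modular is to keep a single shared base and layer small per-application overrides on top, the same idea behind Helm values files and Kustomize overlays. This minimal Python sketch shows only the merge step. The base fields, the `redis-cache` name, and the assumption that each headless Service shares its app's name are all hypothetical, and the output is an incomplete manifest (no `selector` or pod `template`).

```python
import copy

# Hypothetical shared defaults for every StatefulSet we run.
BASE_STATEFULSET = {
    "apiVersion": "apps/v1",
    "kind": "StatefulSet",
    "spec": {
        "replicas": 1,
        "podManagementPolicy": "OrderedReady",
        "updateStrategy": {"type": "RollingUpdate"},
    },
}


def deep_merge(base: dict, override: dict) -> dict:
    """Return a new dict where override wins; nested dicts merge, lists are replaced."""
    result = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = deep_merge(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


def render(name: str, overrides: dict) -> dict:
    """Render a (partial) StatefulSet for one app from the base plus its overrides."""
    manifest = deep_merge(BASE_STATEFULSET, overrides)
    manifest.setdefault("metadata", {})["name"] = name
    # Assumption: each app's headless Service shares the app's name.
    manifest["spec"]["serviceName"] = name
    return manifest


if __name__ == "__main__":
    redis = render("redis-cache", {"spec": {"replicas": 3}})
    print(redis["spec"])
```

Nested dictionaries merge key by key while lists are replaced wholesale, so an override can change `replicas` without restating the update strategy. Because `deep_merge` copies its inputs, rendering one app never mutates the shared base.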

Looking back, September 27, 2021 marked a turning point in my approach to managing Kubernetes clusters. While there’s still plenty of work to be done, I’m cautiously optimistic about what eBPF and GitOps tools can bring to the table. The journey continues, but for now, it feels like we’re moving forward.

This personal reflection captures both the struggles and the progress in managing a complex Kubernetes environment during a period where platform engineering and GitOps were gaining traction.