$ cat post/ps-aux-at-midnight-/-the-health-check-always-lied-/-no-rollback-existed.md

ps aux at midnight / the health check always lied / no rollback existed

Title: Debugging the Cloud: A Tale of Latency and Misconfigured Load Balancers

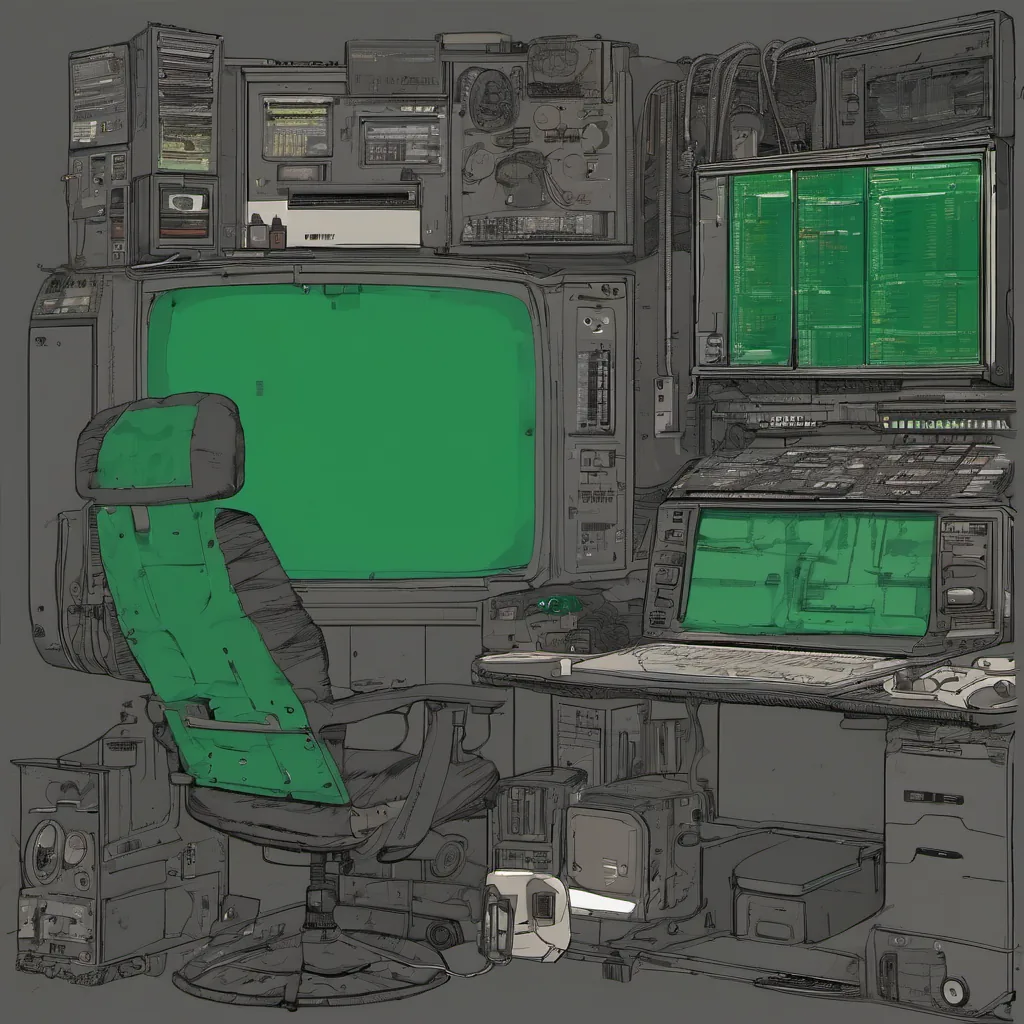

May 27, 2013. The sun was just starting to set on the Bay Area, casting a warm orange glow over my office. I sat at my desk, staring at the screen, trying to make sense of why our service was suddenly crawling. It was a Saturday afternoon, and normally I’d have been home by then, but one nagging issue kept me there.

You see, we were running an application built on top of AWS, using multiple Availability Zones (AZs) and auto-scaling groups to ensure high availability. We had load balancers in front of our services, routing traffic based on the health of the instances. It was a pretty standard setup, but sometimes the standard setups are where you find the bugs.

That morning, I had received an alert from our monitoring system. Latency was spiking on one of our load balancers, but everything else seemed to be running fine. At first glance, it looked like a network issue or maybe a sudden surge in traffic. As I dug deeper, though, a different story started to unfold.

I ran a few diagnostic tools and noticed something peculiar: the instances behind that particular load balancer were consistently reporting lower CPU utilization than their peers, despite apparently serving an equal number of requests. This couldn’t be right. How could one set of machines be underutilized while handling the same workload as the others?

After a bit of head scratching, I decided to take a closer look at the configuration files. The load balancer was using a simple round-robin algorithm to distribute traffic, but something didn’t feel quite right about it. I recalled reading an article on Docker and microservices that mentioned the importance of proper health checks in front-end systems. Could our problem be related to this?
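
For context, a round-robin balancer only rotates among the instances it currently considers in service, so one instance dropping out shifts its share onto the rest. The sketch below illustrates that under simple assumptions; the `distribute` function and the instance names are hypothetical and not part of any real load balancer API.

```python
from collections import Counter
from itertools import cycle


def distribute(instances: list[str], in_service: set[str], requests: int) -> Counter:
    """Round-robin `requests` across the instances currently in service.

    Hypothetical helper for illustration; real load balancers track
    in-service state through their own health checks.
    """
    eligible = [name for name in instances if name in in_service]
    counts = Counter({name: 0 for name in instances})
    if not eligible:
        return counts  # nothing can take traffic
    rotation = cycle(eligible)
    for _ in range(requests):
        counts[next(rotation)] += 1
    return counts


if __name__ == "__main__":
    fleet = ["web-a", "web-b", "web-c"]  # made-up instance names
    print(distribute(fleet, {"web-a", "web-b", "web-c"}, 300))
    print(distribute(fleet, {"web-a", "web-b"}, 300))
```

With all three instances in service each one takes 100 of the 300 requests. With `web-c` out of service, the other two take 150 each and `web-c` sits idle.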

I began troubleshooting by modifying the health check settings for one instance at a time. As I made changes, I noticed a pattern: whenever I adjusted the timeout or response threshold, the latency metrics would normalize for that instance almost instantly. It was like magic.

But then I remembered something crucial: the load balancer’s health check was configured with a “short” timeout (30 seconds) and low response thresholds, which were inadequate for our application’s request patterns. In reality, requests often took longer than the health check allowed, so checks against perfectly healthy instances timed out and were flagged as failures. The load balancer then routed less traffic to those instances, leaving them underutilized.
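
To see how a too-short timeout can take a working instance out of rotation, here is a minimal sketch of consecutive-failure health checking. The `HealthCheck` class, its field names, the thresholds of 2, and the probe times are all hypothetical and were not taken from our actual configuration; only the 30- and 60-second timeouts come from the story.

```python
from dataclasses import dataclass


@dataclass
class HealthCheck:
    """Hypothetical health-check settings (illustrative names only)."""
    timeout_s: float          # how long a probe waits for a response
    unhealthy_threshold: int  # consecutive failures before removal
    healthy_threshold: int    # consecutive successes before restoration


def simulate(check: HealthCheck, probe_times_s: list[float]) -> list[bool]:
    """Return the in-service state after each probe, starting in service."""
    in_service = True
    failures = successes = 0
    states = []
    for elapsed in probe_times_s:
        if elapsed <= check.timeout_s:
            successes += 1
            failures = 0
            if not in_service and successes >= check.healthy_threshold:
                in_service = True
        else:
            # The instance did answer, just too slowly for this timeout.
            failures += 1
            successes = 0
            if in_service and failures >= check.unhealthy_threshold:
                in_service = False
        states.append(in_service)
    return states


if __name__ == "__main__":
    probes = [12.0, 34.0, 41.0, 38.0, 15.0, 11.0]  # invented response times
    print(simulate(HealthCheck(30.0, 2, 2), probes))
    print(simulate(HealthCheck(60.0, 2, 2), probes))
```

With the 30-second timeout, the instance is pulled after its second slow probe and only returns after two fast ones. With 60 seconds, the same probes never take it out of service.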

With this realization, I quickly went back and adjusted the health check settings on all load balancers to something more appropriate: a 60-second timeout with a 50% response threshold. Within minutes, the latency metrics started to normalize across all instances.

This experience taught me a few things:

- Proper configuration matters: Even simple tools like load balancers can cause significant issues if misconfigured.

- Understanding your application’s performance characteristics is key: Knowing what normal request times look like helps in setting health check thresholds correctly.

- Automation and monitoring are essential: In a dynamic environment, constant vigilance is necessary to catch these kinds of subtle issues early.
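
One way to act on the second lesson is to derive the timeout from measured latencies rather than guessing. The sketch below is only a starting point under assumptions: the `suggest_timeout` function, the choice of the 99th percentile, and the 1.5x headroom factor are mine for illustration, not something we used in 2013.

```python
import statistics


def suggest_timeout(latencies_s: list[float], headroom: float = 1.5) -> float:
    """Suggest a health-check timeout from observed response times.

    Hypothetical heuristic: take the 99th percentile of the samples and
    multiply it by a headroom factor. Needs at least two samples.
    """
    # quantiles(n=100) returns 99 cut points; index 98 is the 99th percentile.
    p99 = statistics.quantiles(latencies_s, n=100)[98]
    return p99 * headroom


if __name__ == "__main__":
    samples = [float(s) for s in range(1, 101)]  # invented latencies, in seconds
    print(f"suggested timeout: {suggest_timeout(samples):.1f}s")
```

Whatever value comes out still has to fit within the limits your load balancer accepts, so treat it as an input to the decision rather than the decision itself.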

Looking back, it all seems almost quaint now, from a time before Docker, Kubernetes, and Mesos had truly taken off. But those foundational lessons from managing cloud services in 2013 still ring true today. The practices we learned then help us tackle more complex systems and architectures as they emerge.

In a way, that Saturday afternoon was just another day in ops, but one that highlighted the importance of understanding your tools and their limitations. It’s moments like these that remind me why I love this field: there’s always something new to learn and another challenge to overcome.