$ cat post/root-prompt-long-ago-/-i-watched-the-memory-climb-slow-/-the-patch-is-still-live.md

root prompt long ago / I watched the memory climb slow / the patch is still live

Title: A Laptop From Scratch and Other Oddities

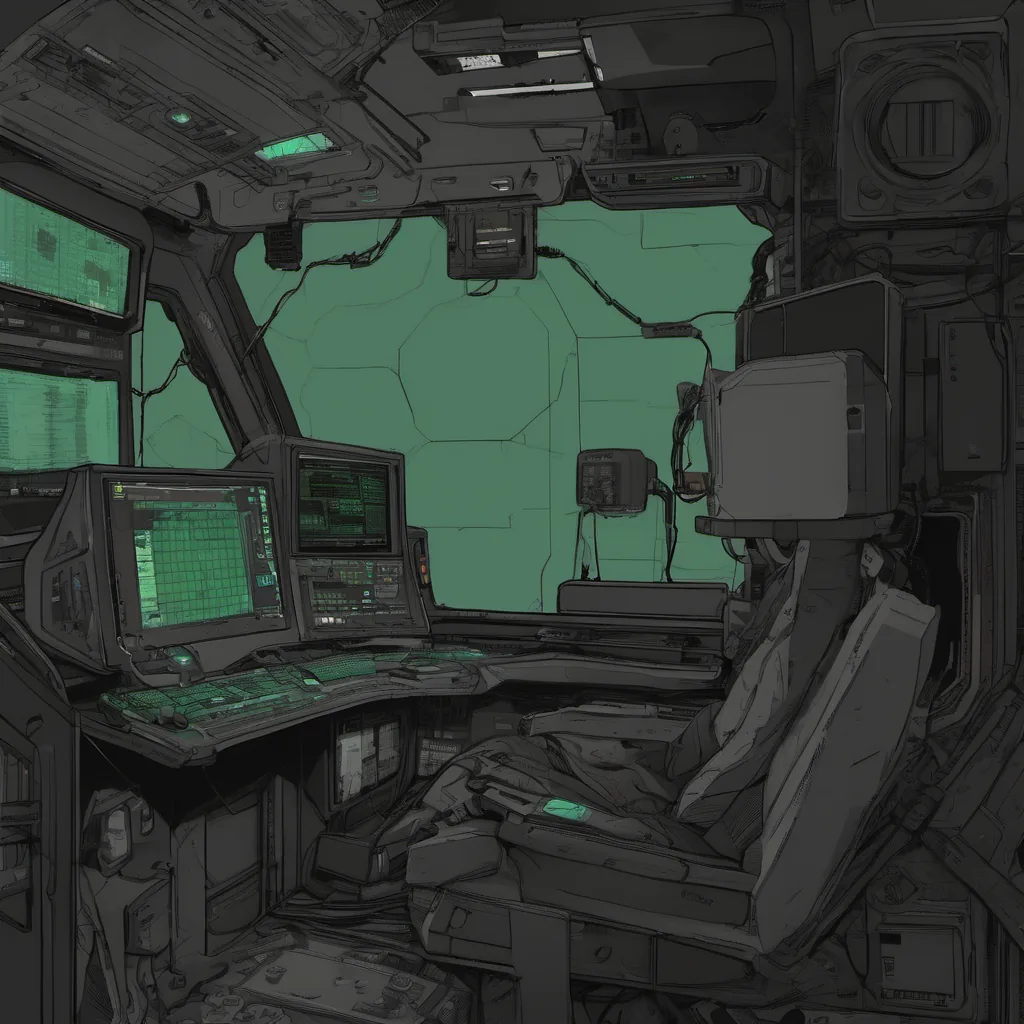

January 27, 2025. Another Monday in the world of AI-native tooling and multi-cloud defaults. I woke up to a new laptop on my desk, a custom build with all hardware sourced from the “Open Build” project. It’s an interesting setup: ARM-based for energy efficiency, running a fresh Linux distro, with eBPF support enabled in the kernel and a Wasm runtime preinstalled. I feel like Neo today, except the choice is between two laptops.

First order of business: setting up my development environment. The new version of kubebuilder is out—post-hype, post-maintenance, but still essential for managing our multi-cloud workloads. It feels reassuring to know that Kubernetes is boring again, and that’s how it should be.

I spent some time wrestling with the new stimulation-clicker project. It’s a tool that can trigger hardware events on your device based on software inputs, which is pretty cool but also a bit unsettling. I had to make sure my build server wasn’t susceptible to accidental clicks that could bring down our pipelines. Robustness against spurious or malicious input events like these is becoming increasingly important in the age of AI copilots and agents.

On a different front, there’s been some chatter about the return of Pebble. Yes, the smartwatch company from back in the day is coming back with a new wave of devices that promise to integrate seamlessly with modern infrastructure. I’m skeptical but curious. If it’s anything like the previous version, though, it will probably just be a glorified fitness tracker with some clever APIs.

Today, I debugged an issue on our platform where eBPF programs were misbehaving on Kubernetes nodes running Wasm modules. It turned out to be a subtle interaction between the two technologies that needed careful tuning. The problem wasn’t security or performance; it was getting eBPF to coexist cleanly with Wasm’s sandboxed environment.

I also shipped a small feature for our AI-native ops tooling. We’ve been using a new open-source LLM-assisted copilot to help with configuration management, and I had to make some adjustments to support multi-cloud environments more effectively. It’s amazing how much these tools have improved over the years—what used to be clunky is now almost intuitive.

An interesting piece of news hit Hacker News today: someone built a 32-bit ARM-based laptop from scratch. While it’s not exactly practical for our day-to-day work, it’s an impressive feat of engineering and a reminder that technology is always evolving in unexpected ways.

On a different note, I had a discussion with my team about the ethics of deepfake technology. With DeepSeek-R1 making waves, there’s been a renewed focus on how generative AI tools can be used for good or ill. It’s not just about tech; it’s about responsibility and intent. We’re working on integrating more robust ethical checks into our AI models to ensure they’re used in beneficial ways.

Finally, I received an email from the team that acquired my startup a while back. They’ve come a long way since then, and seeing their progress is both humbling and inspiring. It’s been a whirlwind of changes, but it’s clear that we’re moving towards a future where AI is more integrated into everything we do.

As I close out another day, the tech landscape seems as dynamic as ever. From building custom laptops to defending against clickers and deepfakes, there’s always something new to grapple with. But for now, I’ll wrap up my work and look forward to whatever tomorrow brings.