$ cat post/the-swap-filled-at-last-/-we-patched-it-and-moved-along-/-we-were-on-call-then.md

the swap filled at last / we patched it and moved along / we were on call then

Title: Marching in Step with Chaos

February 27, 2012 felt like a day plucked from the future, and I was living it. The DevOps buzz was everywhere: people were clamoring for ways to make infrastructure more reliable and resilient, but few knew exactly how to do it. The Chef and Puppet skirmishes raged on, each camp claiming its tool was the better way to manage configuration. Netflix was already laying the groundwork for chaos engineering, while OpenStack was still early in its journey toward becoming a full-blown cloud platform.

I remember that day vividly. We were still using basic shell scripts and cron jobs for our deployment processes, but the idea of continuous delivery was slowly seeping into my consciousness. I was working on a project where we had to scale up an application from 10 instances to over 50 in response to growing user traffic. The old manual scaling methods weren’t cutting it anymore.

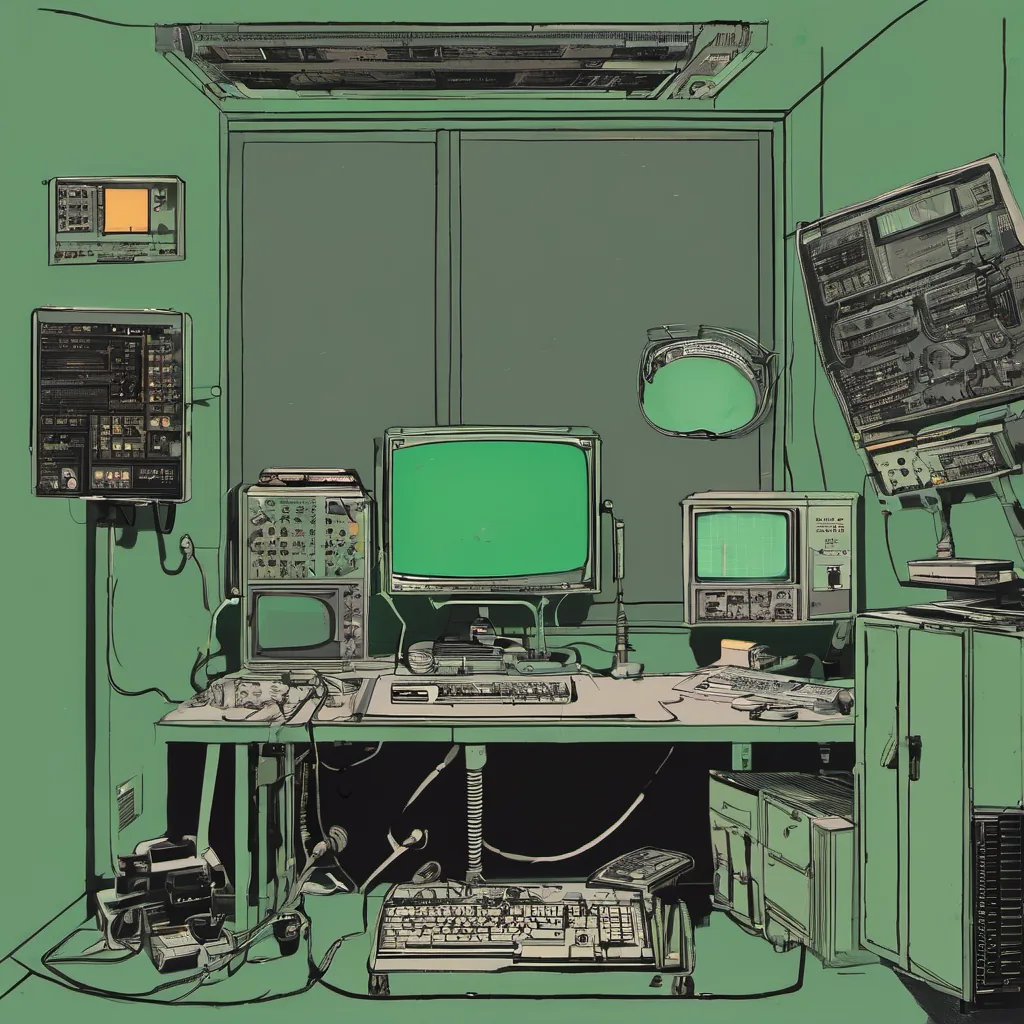

As I sat at my desk, staring at the endless loop of curl commands and top outputs, something dawned on me: I needed more than just scripts. I needed a way to ensure that our application remained robust under stress. That’s when I stumbled upon an article about Netflix’s Chaos Monkey—a tool designed to randomly take down instances in your cluster to test the resilience of your system.

The thought was both exhilarating and terrifying. Exhilarating because it meant we could actually test what happens when something fails, which is exactly what you want to know before it happens for real. Terrifying because it meant I would be putting our application through a series of painful but necessary tests.

I decided to implement the Chaos Monkey concept in our environment. It wasn’t an easy task; we didn’t have a lot of automation at the time, and we certainly weren’t used to injecting failures into our systems. But after days of coding and debugging, I finally had something that would randomly kill off instances, simulating real-world failure scenarios.
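For readers who want to picture the idea, here is a minimal sketch of a "randomly kill an instance" round. It is not the script from back then and not Netflix's Chaos Monkey. The instance names, the `terminate` callback, and the `kill_probability` knob are all assumptions made for illustration, and it defaults to a dry run so nothing is actually terminated.

```python
import random
from typing import Callable, Optional, Sequence


def pick_victim(
    instances: Sequence[str],
    kill_probability: float,
    rng: random.Random,
) -> Optional[str]:
    """Return one instance to terminate, or None if this round is skipped."""
    if not instances:
        return None
    # Only act on a fraction of rounds so failures arrive unpredictably.
    if rng.random() >= kill_probability:
        return None
    return rng.choice(list(instances))


def run_round(
    instances: Sequence[str],
    terminate: Callable[[str], None],
    kill_probability: float = 0.2,
    rng: Optional[random.Random] = None,
    dry_run: bool = True,
) -> Optional[str]:
    """Run one chaos round and return the chosen instance, if any."""
    rng = rng or random.Random()
    victim = pick_victim(instances, kill_probability, rng)
    if victim is None:
        return None
    if dry_run:
        # Safe default: report what would happen without doing it.
        print(f"[dry-run] would terminate {victim}")
    else:
        # `terminate` is a hypothetical hook; plug in whatever stops an instance.
        terminate(victim)
    return victim


if __name__ == "__main__":
    fleet = [f"app-{n:02d}" for n in range(1, 11)]  # hypothetical instance names
    run_round(fleet, terminate=lambda name: None, kill_probability=1.0)
```

Keeping the "who gets picked" decision separate from the "actually kill it" step makes the random part easy to test with a seeded `random.Random`, and the dry-run default means a typo in a cron entry cannot take anything down.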

The first run was a disaster. The application sank like a stone, and panic set in as I scrambled to restore the environment. But when we ran our homegrown Chaos Monkey again, this time at a slower pace, the results were enlightening. We discovered bottlenecks in our code that hadn’t been obvious before, and we identified areas where our infrastructure could be improved.
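One way to read "more slowly" is as a guard that limits how often failures are injected and refuses to act when the fleet is already thin. The sketch below is an assumption about what such pacing could look like, not a record of what we ran; `KillGate`, `min_healthy`, and `cooldown_seconds` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class KillGate:
    """Decides whether another failure may be injected right now."""

    min_healthy: int          # never drop below this many healthy instances
    cooldown_seconds: float   # minimum gap between two kills
    last_kill_at: Optional[float] = None

    def allow(self, healthy_count: int, now: float) -> bool:
        # Killing one instance must still leave min_healthy running.
        if healthy_count - 1 < self.min_healthy:
            return False
        # Give the system time to recover before the next injected failure.
        if self.last_kill_at is not None and now - self.last_kill_at < self.cooldown_seconds:
            return False
        return True

    def record_kill(self, now: float) -> None:
        self.last_kill_at = now
```

A caller would check `gate.allow(healthy_count, time.time())` before each kill and call `gate.record_kill(...)` afterwards. Passing `now` in explicitly, rather than reading the clock inside the class, keeps the pacing logic testable without sleeping.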

This experience taught me a valuable lesson about resilience testing. It wasn’t just about writing code; it was about understanding the failure modes of your application and preparing for them. The NoSQL hype had us thinking about scalability, but I realized that reliability was equally important.

Looking back, those early days of DevOps were a bit like trying to navigate a ship in fog—uncertain, but exhilarating. We were still figuring out how to balance automation with human oversight, and the tools we had weren’t always up to the task. But every day brought new challenges, and I was right where I wanted to be.

That’s why, even today, when faced with complex systems and unpredictable outcomes, I think back to those early days of chaos testing. It’s not just about the technical challenge; it’s also about embracing the unknown and learning from failure. The journey might be bumpy, but it’s worth it if you’re building something that will withstand the test of time.

Until next time, Brandon