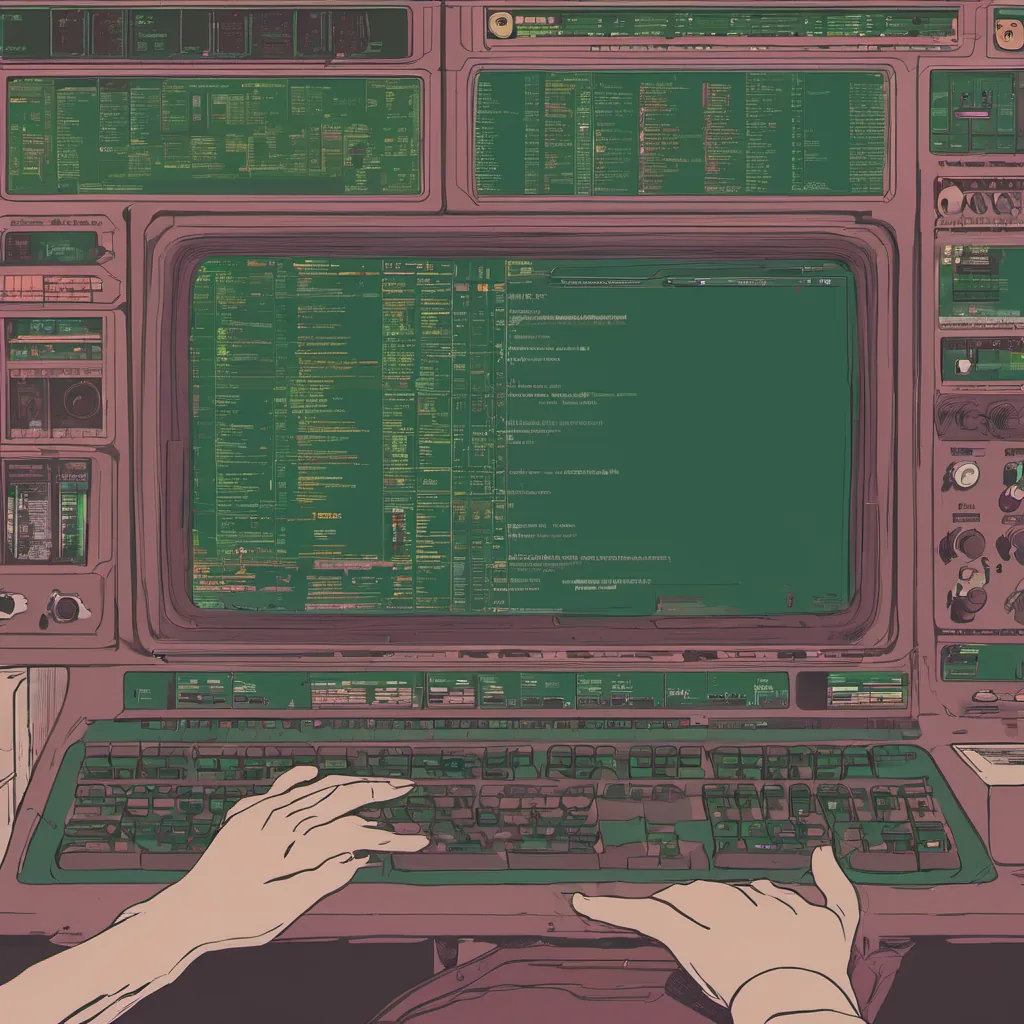

$ cat post/how-we-fought-the-aws-outage-of-'07.md

How We Fought the AWS Outage of '07

It’s been a while since we lived through that infamous AWS outage back in 2007. It was one of those moments when everything seemed to go wrong at once and you catch yourself thinking, “Is this really happening?” It was no ordinary Tuesday; it felt like the world was coming to an end.

The Setup

Back then, our company had just moved its infrastructure from a colocation (colo) data center to AWS. We were excited about the potential of cloud computing and the benefits it promised: elasticity, pay-as-you-go pricing, and reduced management overhead. But as with any new tech stack, there are always growing pains.

The Morning of the Outage

I woke up on a particularly crisp August morning in 2007, just like every other day. My alarm went off at 6 AM, but my mind kept drifting back to the night before. I had a nagging feeling that something wasn’t quite right. Maybe it was the extra coffee I had poured that morning, or perhaps the way the sun felt on my skin.

I logged into the AWS console and checked the status of our applications. They were all running smoothly, but somehow, I couldn’t shake off the sense of impending doom. “Just trust me,” I thought, trying to convince myself that everything would be fine.

The Moment

By 10 AM, things started to unravel. One by one, our services began to fail. First came the website: “Service Unavailable” errors filled my browser. Then it was our email system, and finally, the database went down like a ton of bricks. Our applications were crashing left and right.

I sprang into action, calling in backup engineers and updating the team over chat. “Everyone, this is real,” I wrote, trying to stay calm while my heart raced. We needed a plan, and we needed it fast.

The Battle

Our primary database was down, which meant no transactions could be processed. We were losing customers, and our support team was getting calls every five minutes. It was chaos, but we had to keep pushing through.

I started with the basics: making sure all of our application instances were up and running. I went through each one manually, checking logs for errors and restarting services where necessary. But something was still off; no matter what I did, the services were not recovering.

Around noon it hit me: the problem wasn’t AWS itself but a misconfiguration on our end. One of the load balancers had an incorrect timeout setting, which caused requests to time out and fail. We hadn’t properly tested this setup in our staging environment before going live.
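For what it’s worth, here is a minimal sketch of the kind of pre-deploy check that could have flagged a setting like this in staging. It is not the code we ran. The names (`TimeoutConfig`, `check_timeouts`) and the 2x safety margin are hypothetical, and the sketch assumes the bad value was a load balancer timeout shorter than the backend needed to respond.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TimeoutConfig:
    """Hypothetical timeout settings, all in seconds."""
    lb_idle_timeout: float       # how long the load balancer waits on a backend
    backend_p99_latency: float   # slowest response time we expect from the app
    safety_margin: float = 2.0   # assumed multiplier, not a recommendation


def check_timeouts(cfg: TimeoutConfig) -> list[str]:
    """Return a list of human-readable problems; an empty list means the config passes."""
    problems = []
    if cfg.lb_idle_timeout <= 0:
        problems.append("lb_idle_timeout must be positive")
    # The load balancer should wait comfortably longer than the slowest backend reply.
    required = cfg.backend_p99_latency * cfg.safety_margin
    if cfg.lb_idle_timeout < required:
        problems.append(
            f"lb_idle_timeout {cfg.lb_idle_timeout}s is below the required {required}s"
        )
    return problems


if __name__ == "__main__":
    # Example values are made up for illustration.
    for problem in check_timeouts(TimeoutConfig(lb_idle_timeout=1, backend_p99_latency=5)):
        print("CONFIG ERROR:", problem)
```

Run as a gate before a release, a check like this turns a silent bad value into a failed deploy instead of a production incident.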

The Resolution

After we fixed the config, things came back online slowly but surely. By 3 PM, everything was up and running again, albeit with a few hiccups here and there. We spent the rest of the day cleaning up the remaining issues and making sure nothing else went wrong.

Reflecting on that day, I realized how much work goes into maintaining a reliable system. AWS provided us with powerful tools, but we still needed to be vigilant about our own infrastructure. This experience reinforced my belief in the importance of redundancy, thorough testing, and having a solid disaster recovery plan.

Aftermath

The aftermath was a mix of relief and reflection. We held an internal debriefing session where everyone shared their experiences and what they thought went wrong. It was humbling to hear how some people had handled the situation better than others, but it also gave us valuable lessons moving forward.

In the weeks that followed, we tightened up our processes, added more monitoring, and made sure every single team member understood our architecture and potential failure points. That AWS outage in August 2007 may have been a nightmare to live through, but it ultimately taught us invaluable lessons about resilience and preparedness.
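To give a flavor of what “more monitoring” can look like, here is a small health-check sweep. It is a sketch under assumptions, not our actual tooling: it assumes every service exposes an HTTP health URL, and the function names (`http_probe`, `sweep`, `failing`) and the example URLs are made up for illustration.

```python
import http.client
import urllib.error
import urllib.request
from typing import Callable, Dict, List


def http_probe(url: str, timeout: float = 3.0) -> bool:
    """Return True if the URL answers with a 2xx status within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # Covers HTTP error statuses, refused connections, timeouts, and bad URLs.
        return False


def sweep(endpoints: Dict[str, str],
          probe: Callable[[str], bool] = http_probe) -> Dict[str, bool]:
    """Probe every named endpoint and report which ones are healthy."""
    return {name: probe(url) for name, url in endpoints.items()}


def failing(results: Dict[str, bool]) -> List[str]:
    """Return the names of unhealthy services, sorted for stable output."""
    return sorted(name for name, ok in results.items() if not ok)


if __name__ == "__main__":
    # Hypothetical endpoints; replace with real health URLs.
    services = {
        "website": "http://localhost:8080/health",
        "api": "http://localhost:8081/health",
    }
    down = failing(sweep(services))
    print("all healthy" if not down else "DOWN: " + ", ".join(down))
```

The probe is passed in as a parameter so the sweep can be exercised with a fake probe in tests, without touching the network.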

Lessons Learned

- Test, Test, Test: Make sure you thoroughly test your configurations before going live.

- Redundancy is Key: Always have backup systems in place.

- Communication Matters: Keep lines of communication open during an outage.

- Learn from Mistakes: Use incidents like this to improve and grow.

That day taught me a lot about the real-world challenges of managing cloud infrastructure, and I’m grateful for it. As we move forward in our tech journey, these reminders keep us grounded and focused on what truly matters: keeping our systems up and running even when the unexpected happens.