
The AI Copilot Dilemma

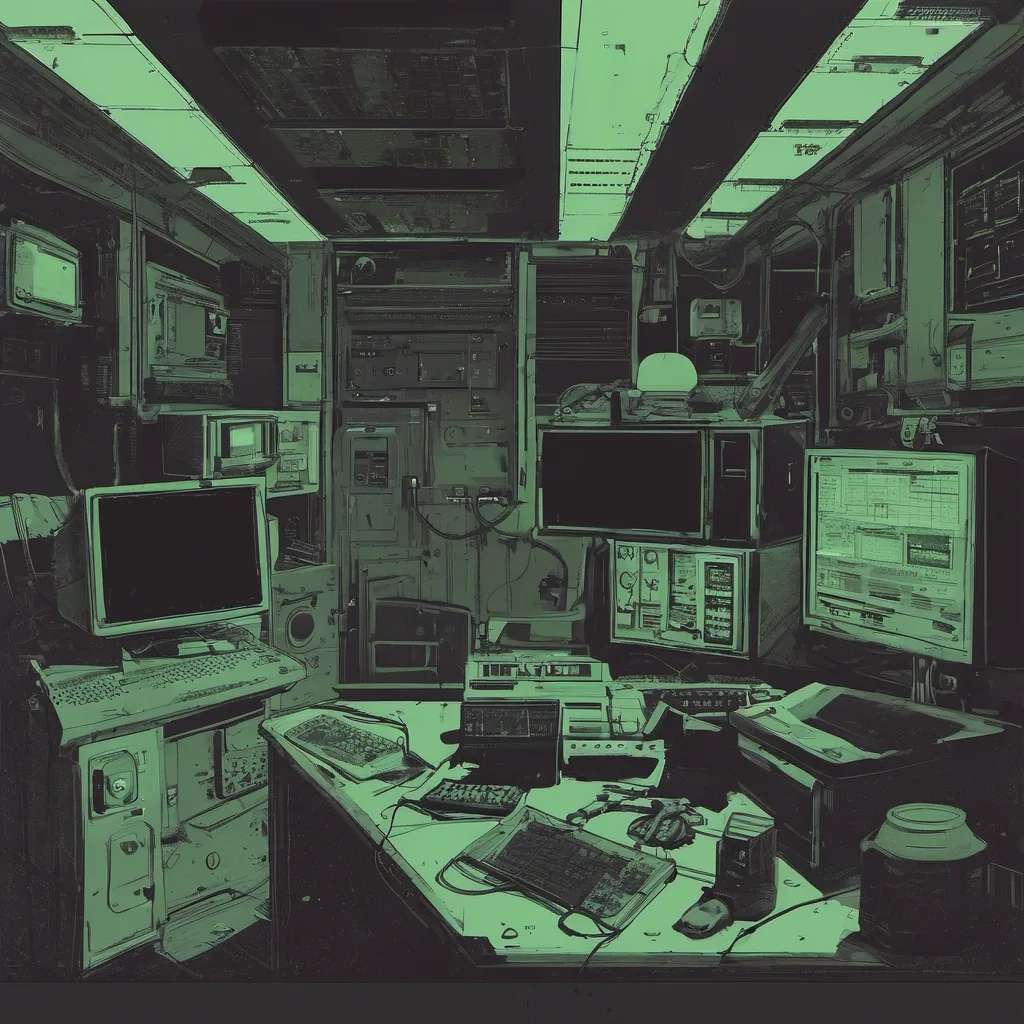

May 26, 2025. Another day in the world where AI is an integral part of my daily work. I’ve been managing a platform that’s built around LLM-assisted operations for the past few years, and it’s been both exhilarating and humbling.

Today, as I sat at my desk, staring at yet another error log from our Kubernetes cluster, I found myself thinking about how much has changed since 2013. Claude 4 hit the scene just last week, a moment that felt like science fiction had come true but was also a bit overwhelming in its complexity.

I’m part of a platform team that owns our AI infrastructure pipeline—essentially, we’re responsible for making sure all the shiny new tools don’t break things. Recently, I’ve been grappling with how to effectively integrate Claude 4 into our operations without turning it into a black box. It’s like having a copilot that can offer suggestions, but sometimes you just need to know why something is going wrong.

One of the big challenges has been figuring out how to debug AI issues when they arise. In traditional ops, I could step through code line by line or use debugging tools to understand what was happening at each layer. But with a hosted model, it’s often a matter of adjusting prompts, context, and sampling settings and hoping for the best. It feels like we’re in the early days of understanding how to debug these things properly.
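One small step toward making this debuggable is to keep a record of exactly what the model was shown each time it made a suggestion. The following is a minimal sketch, assuming a plain JSON Lines file as the log; the `SuggestionRecord` schema, its field names, and both helper functions are hypothetical and not part of any real tool.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class SuggestionRecord:
    """One copilot suggestion plus what the model was shown (hypothetical schema)."""

    prompt: str
    context_sources: list[str]  # e.g. which logs or manifests were included
    suggestion: str
    timestamp: float = field(default_factory=time.time)


def append_record(path: str, record: SuggestionRecord) -> None:
    """Append the record as a single JSON line so the log is easy to grep."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")


def load_records(path: str) -> list[SuggestionRecord]:
    """Read every record back, skipping blank lines."""
    with open(path, encoding="utf-8") as fh:
        return [SuggestionRecord(**json.loads(line)) for line in fh if line.strip()]
```

With a log like this, a bad suggestion can at least be traced back to the inputs that produced it, which is the closest thing to a stack trace available here.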

Last week, we had an outage that seemed straightforward—some network service went down, and our LLM-assisted ops suggested some changes that actually exacerbated the issue. I spent hours trying to figure out what was going wrong, eventually realizing that the AI’s recommendations weren’t taking into account the full context of our system’s dependencies.
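For illustration only, here is a rough sketch of one possible guardrail for this kind of failure: before applying a suggested change to a service, work out which other services depend on it and escalate to a human if too many do. The service names, the `DEPENDS_ON` map, and the `max_blast_radius` threshold are all made up for this sketch.

```python
from collections import deque

# Hypothetical dependency map: each service lists the services it depends on.
DEPENDS_ON = {
    "checkout": ["payments", "dns-resolver"],
    "payments": ["dns-resolver"],
    "search": ["cache"],
    "cache": [],
    "dns-resolver": [],
}


def affected_by(target: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every service that directly or transitively depends on target."""
    # Invert the edges so we can ask "who depends on this service?"
    dependents: dict[str, list[str]] = {name: [] for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)

    # Breadth-first walk up the inverted graph.
    seen: set[str] = set()
    queue = deque([target])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    seen.discard(target)  # a cycle could otherwise include the target itself
    return seen


def needs_human_review(
    target: str, depends_on: dict[str, list[str]], max_blast_radius: int = 1
) -> bool:
    """Flag a proposed change when more services depend on the target than allowed."""
    return len(affected_by(target, depends_on)) > max_blast_radius
```

In this toy map, a change to `dns-resolver` touches both `checkout` and `payments`, so it would be flagged, while a change to `cache` only affects `search` and would pass. The same dependency list could also be handed to the model as context, though the check is more trustworthy when it runs outside the model.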

On a lighter note (or perhaps not), there was this huge discussion around Redis being open-sourced again. The community is still abuzz about it, and I can see why; Redis is one of those tools where having control over its source code could be a game-changer for some teams. Personally, though, I’m more interested in how our team will integrate it into our caching strategies without causing any disruption.

Yesterday, we started toying with Wasm + containers. It’s fascinating to see how these two technologies are converging, but also a bit scary because they’re both still evolving rapidly. We ran into some performance bottlenecks trying to get them integrated seamlessly, and I couldn’t help but think about the irony of using an AI-powered tool to optimize our ops when it ended up causing us more headaches.

Speaking of ironies, I spent part of today arguing with a colleague about whether we should adopt multi-cloud as the default. They were all for it, citing resilience benefits, while I was skeptical because of the additional complexity it introduces. I mean, sure, it’s great in theory, but when every cloud provider has its own quirks and APIs, managing everything becomes a real headache.

On a personal note, I’ve been trying to stay on top of all these new developments by reading articles on Hacker News. From the resurgence of Redis to discussions about gene editing treatments, it’s clear that tech is moving at an unprecedented pace. But amidst all this change, there are still fundamental principles like simplicity and reliability that don’t go away.

As I write this, I’m sitting in a quiet corner of our office, with my headphones on, trying to debug another issue. The AI copilot has given me some suggestions, but I know the best way forward is to keep things simple—no matter how tempting it might be to rely entirely on automation and fancy tools.

It’s been an interesting journey so far, and I’m looking forward to seeing what the next few years bring. For now, I’ll just focus on making sure our platform stays up and running smoothly while I grapple with the AI copilot dilemma one line of code at a time.
