
the rollback succeeded / what the stack trace never showed / I wrote the postmortem

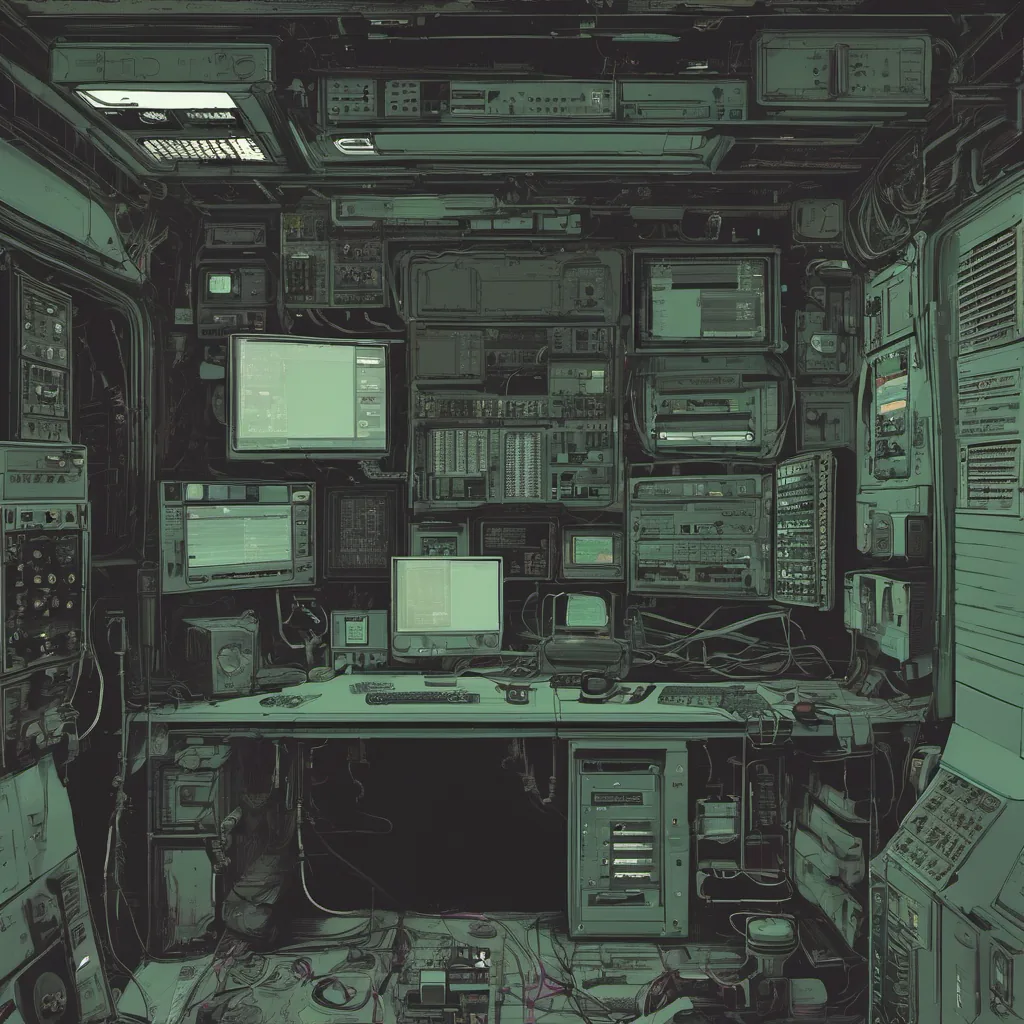

Title: A Tale of Two Servers: Debugging Chaos at Night

March 26, 2012. The date felt like a milestone for DevOps, but it was just another day at the office—well, sort of.

Today I had an unexpected run-in with one of our servers in production. You know, those silent, sneaky bugs that creep up on you when you’re not looking. It wasn’t the first time something like this had happened, but today was different because it involved two servers and a bit of an adventure.

The Setup

We were using Puppet for configuration management, which was all the rage back then. Our stack consisted of Apache, Nginx, and various custom applications—all under the watchful eye of our beloved Nagios monitoring system. But sometimes, even with all these tools, Murphy’s Law still rears its ugly head.

The Incident

Around 3 AM, my pager went off, and I knew exactly what it meant: a server was acting up. After a few minutes of cursory checks via SSH, I realized that the problem wasn’t isolated to one server. It seemed like two of our web servers had suddenly stopped responding to requests.

I quickly logged into both servers and started running diagnostics. The first thing I did was check Apache’s error logs for any clues. Nothing jumped out at me, so I moved on to Nginx. That’s when things got interesting.

The Hype

Hype is a funny thing. Back then, everyone was talking about NoSQL databases and Heroku. But for us, the reality of ops work meant dealing with the actual infrastructure every day. And while I was following all these trends, they didn’t always align perfectly with our needs.

The chaos engineering techniques being pioneered by Netflix were fascinating but also a bit overwhelming. We weren’t big on injecting random failures into production, but we did need to ensure that our systems could handle unexpected conditions gracefully.

The Debugging

As I dug deeper into the Nginx logs, something caught my eye: a 502 Bad Gateway error, the status Nginx returns when it is acting as a proxy and gets an invalid response from the upstream server. It was rare enough that I remembered seeing it before, and that’s when it hit me: the configuration file. We had recently updated our Puppet manifests, but it seemed that not all servers had picked up the changes.
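For illustration, here is a minimal sketch of that kind of log check. It assumes the access log uses Nginx's default `combined` format; the function name `count_502s` and the script layout are hypothetical, not something from our actual tooling.

```python
import re
import sys
from collections import Counter

# Assumption: Nginx's default "combined" access log format.
# Adjust the pattern if your log_format directive differs.
LINE_RE = re.compile(
    r'^(?P<addr>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+)'
)


def count_502s(lines):
    """Count 502 responses per request path (hypothetical helper)."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if match is None or match.group("status") != "502":
            continue
        # The request field looks like "GET /path HTTP/1.1".
        parts = match.group("request").split()
        path = parts[1] if len(parts) >= 2 else "(unparsed)"
        counts[path] += 1
    return counts


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python count_502s.py /path/to/access.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as handle:
        for path, hits in count_502s(handle).most_common():
            print(f"{hits:6d}  {path}")
```

Grouping by path is only one choice. If the 502s cluster on paths that are proxied to a single backend, that points at the backend or its configuration rather than at Nginx itself.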

I checked the Puppet reports on our puppetmaster and saw that both affected nodes were reporting failures. The cause was a race condition in one of our custom modules, where we hadn’t properly handled edge cases during state transitions. The fix was simple: update the module and rerun Puppet on those servers.
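After a rerun, one way to confirm that every node ended up with the same rendered configuration is to compare checksums of the file across nodes. The sketch below is a generic illustration, not a Puppet feature: the function names are hypothetical, it expects the config text to have been collected already (for example over SSH), and it assumes the most common version is the intended one, which is not guaranteed.

```python
import hashlib
from collections import defaultdict


def group_by_checksum(configs):
    """Group node names by the SHA-256 digest of their config text."""
    groups = defaultdict(list)
    for node, text in configs.items():
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        groups[digest].append(node)
    return dict(groups)


def find_drifted(configs):
    """Return nodes whose config differs from the most common version.

    Assumption: the majority version is the intended one. If most nodes
    are stale, this heuristic flags the wrong side.
    """
    groups = group_by_checksum(configs)
    if len(groups) <= 1:
        return []
    majority = max(groups.values(), key=len)
    drifted = []
    for nodes in groups.values():
        if nodes is not majority:
            drifted.extend(nodes)
    return sorted(drifted)
```

Calling `find_drifted` with a mapping of node name to config text returns the nodes worth a closer look. An empty list means every node rendered an identical file.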

The Resolution

After a few more hours of fine-tuning (and some serious coffee), both servers were back online, and everything was running smoothly again. I took a deep breath, feeling relieved but also humbled by the experience. Ops work is a constant dance between innovation and pragmatism.

Reflections

This incident highlighted the importance of thoroughly testing configuration changes before they roll out to production, something that’s often overlooked when you’re dealing with complex systems like ours. While DevOps tools like Puppet are invaluable for managing configurations, they can also introduce new challenges if not handled carefully.

As I lay down to sleep, I thought about how far we’ve come since those early days. The tech landscape has changed dramatically, but the core principles of robustness and reliability remain constant. We may have moved on from NoSQL fever to more mature database solutions, but the lessons learned that night stay with me.

In the grand scheme of things, this wasn’t a groundbreaking event. But it’s these small victories and setbacks that make being an engineer such an interesting career path—full of challenges, learning experiences, and occasional late-night adventures.