a segfault at three / a port scan echoes back now / it was in the logs

Title: A Day in the Life of a Platform Engineer: December 26, 2016

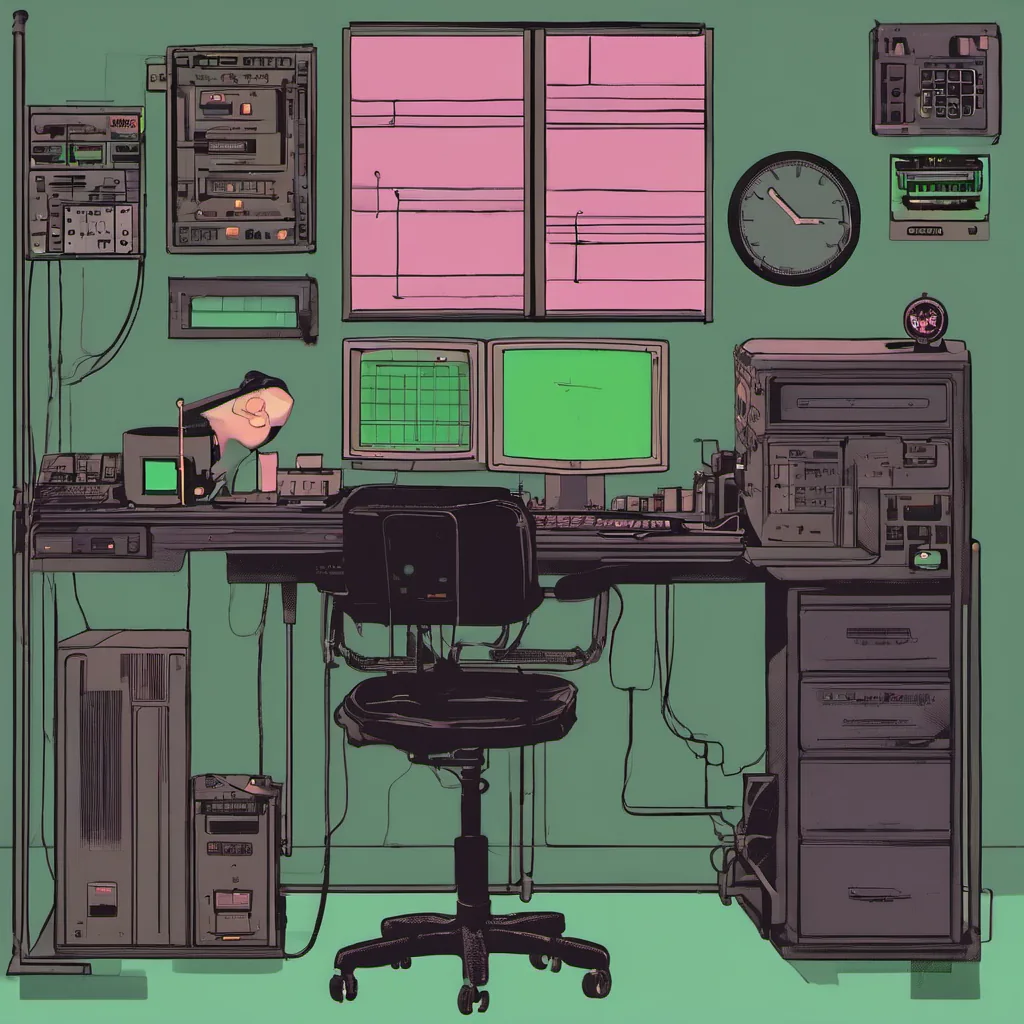

December 26, 2016. I woke up early and poured myself a cup of coffee, staring at my laptop screen as if it held the answers to all my problems. The world outside was still waking up, but in our ops room, the noise of servers and the occasional ping from Slack were already drowning out any semblance of peace.

Today’s task was straightforward: refactor our monitoring stack. We’d been using Nagios for years, but everyone was talking about Prometheus + Grafana as the next big thing. Time to take a look under the hood.

I started by going through our current setup. Nagios had served us well, but it was showing its age. The infrastructure was sprawling, and managing alerts across multiple services was becoming cumbersome. I logged into one of our monitoring boxes to poke around. It wasn’t pretty: scripts were strewn about haphazardly, and the nagios.cfg file was a labyrinthine mess.

As I sat there, sifting through the config files, I couldn’t help but think about the emerging world outside. Amazon Go was starting to make waves with its cashierless store technology, and cat-proofing a cat feeding machine sounded like something out of a sci-fi novel. Meanwhile, inside our company, people were buzzing about Kubernetes, Helm, and Envoy. The term GitOps had yet to catch on, but the idea that code could manage infrastructure was tantalizing.

I decided to start small. I wanted to create a proof-of-concept with Prometheus and Grafana before making any big changes. After setting up a few test servers, I began to understand why everyone was excited. Prometheus’s flexibility and ease of setup were impressive. The data model made sense—metrics as time series—and Grafana’s dashboard capabilities were almost magical.
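To make the "metrics as time series" idea concrete, here is a minimal sketch in Python. It is only a mental model, not how Prometheus stores data internally: each series is identified by a metric name plus a set of labels, and holds timestamped samples. The names `SeriesStore`, `series_key`, and the example metric are hypothetical and chosen for illustration.

```python
from collections import defaultdict


def series_key(name, labels):
    """Identify a series by metric name plus its sorted label pairs."""
    return (name, tuple(sorted(labels.items())))


class SeriesStore:
    """Toy in-memory store: one list of (timestamp, value) samples per series."""

    def __init__(self):
        self._series = defaultdict(list)

    def append(self, name, labels, timestamp, value):
        self._series[series_key(name, labels)].append((timestamp, value))

    def samples(self, name, labels):
        # Return a copy so callers cannot mutate the stored samples.
        return list(self._series.get(series_key(name, labels), []))


store = SeriesStore()
# Same metric name, different labels: two distinct series.
store.append("http_requests_total", {"job": "api", "code": "200"}, 1482739200, 1027)
store.append("http_requests_total", {"job": "api", "code": "500"}, 1482739200, 3)
store.append("http_requests_total", {"job": "api", "code": "200"}, 1482739215, 1041)
```

The point of the sketch is that changing any label value yields a separate series, which is what makes slicing by label in a dashboard feel so natural.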

But there’s no such thing as a free lunch in ops. We had legacy services that needed to stay on Nagios for now, so I set up both systems side by side. This meant writing custom scripts and plugins to expose metrics from our existing services in a format Prometheus could scrape. It was like trying to teach an old dog new tricks.
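A bridging script of this kind could look something like the sketch below. It is not our actual code; it assumes the standard Nagios plugin exit codes (0 OK, 1 WARNING, 2 CRITICAL, 3 UNKNOWN) and renders one check result in the Prometheus text exposition format. The metric name `nagios_check_state` and the function names are hypothetical.

```python
# Standard Nagios plugin exit codes and their meanings.
NAGIOS_STATES = {0: "ok", 1: "warning", 2: "critical", 3: "unknown"}


def escape_label_value(value):
    """Escape backslash, double quote, and newline for a label value."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_check(service, exit_code):
    """Render one plugin result as Prometheus text-format lines."""
    # Treat any out-of-range exit code as UNKNOWN (3).
    code = exit_code if exit_code in NAGIOS_STATES else 3
    lines = [
        "# HELP nagios_check_state Nagios plugin exit code "
        "(0=ok, 1=warning, 2=critical, 3=unknown).",
        "# TYPE nagios_check_state gauge",
        f'nagios_check_state{{service="{escape_label_value(service)}"}} {code}',
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example: a disk check that exited CRITICAL.
    print(render_check("disk_root", 2), end="")
```

Output like this can then be served over HTTP for Prometheus to scrape, or written to a file for a collector to pick up; which route fits depends on the service.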

Around midday, the team joined me in the ops room to discuss the switch. Reactions were mixed: some were excited, others hesitant. The biggest concern was alerting. With Nagios, we relied heavily on email notifications for critical issues. How would Prometheus handle this?

I proposed using Alertmanager to centralize and route alerts. This would give us more control over which alerts went where and when. It took some convincing, but the team eventually agreed to give it a try.
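The routing idea I pitched can be sketched as a toy model in Python. This is not Alertmanager's implementation or its configuration format (Alertmanager uses a routing tree defined in YAML); it only illustrates label-based, first-match routing with a fallback. The receiver names and the `Route` and `pick_receiver` helpers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Route:
    """A receiver plus the label values an alert must carry to reach it."""
    receiver: str
    match: dict = field(default_factory=dict)


def pick_receiver(labels, routes, default="email-ops"):
    """Return the first route whose labels all match, else the default."""
    for route in routes:
        if all(labels.get(key) == value for key, value in route.match.items()):
            return route.receiver
    return default


ROUTES = [
    Route("pager", {"severity": "critical"}),
    Route("chat", {"severity": "warning"}),
]

# A critical database alert goes to the pager; anything unmatched
# falls back to the email list we already used with Nagios.
critical = pick_receiver({"severity": "critical", "service": "db"}, ROUTES)
fallback = pick_receiver({"severity": "info"}, ROUTES)
```

Keeping email as the default receiver was the compromise that made the hesitant half of the team comfortable: nothing gets dropped, it just gets routed more deliberately.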

As the day wore on, I tackled one issue after another. There were bugs in our custom scripts, services that refused to play nicely with Prometheus, and even a few server restarts that left me cursing under my breath. By late afternoon, we had a working prototype with most of our services monitored by both Nagios and Prometheus.

It was a long day, but it felt like progress. We were moving towards a more modern monitoring stack, one that could scale better and provide us with richer insights. As I wrapped up for the evening, I couldn’t help but feel proud of what we had accomplished. The future might be uncertain, but today, at least, we had taken an important step forward.

Tomorrow would bring its own set of challenges, but for now, it was time to go home and enjoy some well-deserved downtime. Who knew what kind of side projects or hacks the internet had in store?

That’s a day in my life as a platform engineer in December 2016. The tech world was changing fast, and I felt lucky to be part of that change.