Debugging the Dream: A Night With MySQL

April 26, 2004. Just a few weeks ago, I joined this new startup with big plans and bigger ambitions. The team was excited about our mission to bring social networking to the masses, but as we ramped up, our tech stack started to show its weaknesses. Tonight was one of those nights when everything went sideways.

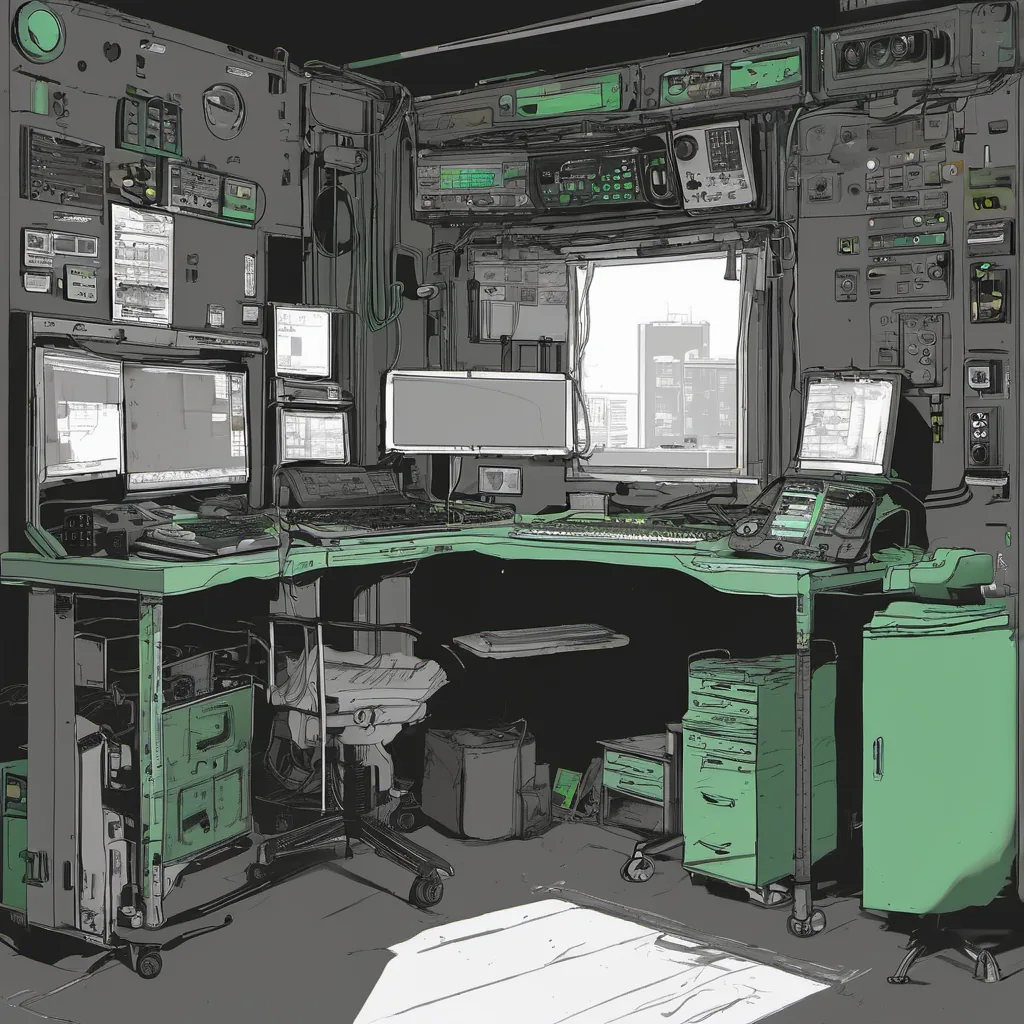

We were using MySQL as our primary database, running on a cluster of Linux servers. Our application was written in PHP, with plenty of Python scripts for background tasks. The site had been growing steadily since launch, and we were now handling thousands of simultaneous users at peak. But today’s load test showed that we were hitting a wall: response times were spiking, and database queries were grinding to a halt.

I spent the evening digging into the problem. I started by profiling our most resource-intensive queries with EXPLAIN and SHOW STATUS. It looked like we had made poor indexing choices on a few critical tables, but that alone couldn’t account for the massive performance hit during peak load. That’s when I remembered a conversation from a few weeks back about how MySQL handles high concurrency.
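A minimal sketch of that kind of check: compare the query plan before and after adding an index. So that it runs anywhere, it uses Python's built-in sqlite3 module and SQLite's EXPLAIN QUERY PLAN as a stand-in for MySQL's EXPLAIN. The `messages` table, its columns, and the index name are hypothetical, not taken from the real schema.

```python
import sqlite3

# In-memory SQLite database as a stand-in for MySQL (assumption for illustration).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE messages (id INTEGER PRIMARY KEY, user_id INTEGER, body TEXT)"
)
conn.executemany(
    "INSERT INTO messages (user_id, body) VALUES (?, ?)",
    [(i % 100, "hi") for i in range(1000)],
)


def plan(sql):
    """Return the planner's description of how it would run the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    # The last column of each row holds the human-readable detail.
    return " | ".join(row[-1] for row in rows)


query = "SELECT body FROM messages WHERE user_id = 42"

before = plan(query)  # no index on user_id yet: expect a full table scan
conn.execute("CREATE INDEX idx_messages_user_id ON messages (user_id)")
after = plan(query)   # the planner can now search via the new index

print("before:", before)
print("after: ", after)
```

The exact wording of the plan differs between SQLite versions and looks nothing like MySQL's tabular EXPLAIN output, but the workflow is the same: find the queries that scan whole tables, add an index, and confirm the plan changes.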

I decided to take a closer look at our transaction logs and saw that deadlocks were starting to appear more frequently as we approached our load limits. It was a classic case of “works great in your dev environment, doesn’t scale.” We had implemented some basic locking mechanisms but hadn’t thought through the implications for high concurrency.
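One common way to cope with deadlocks at the application level is to retry the whole transaction after a short, randomized pause. The sketch below assumes a hypothetical `DeadlockError` that the database layer would raise when MySQL reports error 1213 (its deadlock error code); the function names and retry parameters are illustrative only.

```python
import random
import time

ER_LOCK_DEADLOCK = 1213  # MySQL error code for a detected deadlock


class DeadlockError(Exception):
    """Hypothetical exception the DB layer raises on error 1213."""


def run_with_retry(txn, attempts=3, base_delay=0.01, sleep=time.sleep):
    """Call txn() and retry it if it fails with a deadlock.

    txn must be safe to re-run from the start, because the database
    rolls back the transaction it picked as the deadlock victim.
    """
    for attempt in range(1, attempts + 1):
        try:
            return txn()
        except DeadlockError:
            if attempt == attempts:
                raise  # give up and let the caller see the failure
            # Exponential backoff with jitter so competing workers
            # do not all retry at the same instant.
            delay = base_delay * (2 ** (attempt - 1)) * (1 + random.random())
            sleep(delay)
```

Retrying treats the symptom rather than the cause. Touching rows and tables in a consistent order across code paths, and keeping transactions short, reduces how often deadlocks happen in the first place.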

I spent hours tweaking our application code to reduce lock contention and optimize queries. I even went so far as to implement a custom solution using MySQL’s GET_LOCK function to manage critical sections of our code more efficiently. It was an ugly hack, but it seemed to work for now.
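To give a sense of the shape of such a hack, here is a minimal sketch that wraps MySQL's GET_LOCK and RELEASE_LOCK in a Python context manager. It assumes a DB-API cursor from a MySQL driver that uses `%s` placeholders; the `named_lock` helper and the lock name in the usage comment are hypothetical.

```python
from contextlib import contextmanager


@contextmanager
def named_lock(cursor, name, timeout=5):
    """Hold a MySQL named lock for the duration of a with-block.

    cursor is assumed to be a DB-API cursor on an open MySQL connection.
    GET_LOCK returns 1 on success, 0 on timeout, and NULL on error.
    """
    cursor.execute("SELECT GET_LOCK(%s, %s)", (name, timeout))
    row = cursor.fetchone()
    if not row or row[0] != 1:
        raise TimeoutError(f"could not acquire lock {name!r}")
    try:
        yield
    finally:
        # Release explicitly; the server would also drop the lock
        # when this connection closes.
        cursor.execute("SELECT RELEASE_LOCK(%s)", (name,))
        cursor.fetchone()


# Usage (hypothetical lock name):
# with named_lock(cursor, "rebuild_friend_counts", timeout=2):
#     ...  # critical section that only one worker should run at a time
```

Named locks are advisory: they only protect code paths that ask for the same lock name, and they belong to the connection that acquired them, so the critical section has to run on that same connection.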

But locking wasn’t the whole story; we were also running up against memory and CPU limits on the servers. I decided to take a look at the Xen hypervisor, which had recently started gaining traction. We could potentially use Xen to create more resource-isolated environments and improve our overall stability and performance.

As I was setting up Xen, I realized that our entire database architecture was still quite rudimentary. We were using replication but hadn’t really thought about failover mechanisms or load balancing. This was a critical oversight that we needed to address quickly.

I spent the rest of the night brainstorming with my team about how to refactor our infrastructure. We talked about moving away from a database setup with a single point of failure and toward more robust replication and sharding strategies. We also discussed putting a lightweight caching layer like Memcached in front of our MySQL instances to take some of the pressure off them.
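The caching idea can be sketched as a cache-aside lookup: check the cache first and fall back to the database only on a miss. Everything here is illustrative. `DictCache` is an in-memory stand-in with `get`/`set` methods loosely modeled on what a Memcached client offers, and `get_profile` and `load_from_db` are hypothetical names.

```python
class DictCache:
    """In-memory stand-in for a Memcached client (assumption for illustration)."""

    def __init__(self):
        self._data = {}

    def get(self, key):
        return self._data.get(key)

    def set(self, key, value, ttl=0):
        # A real cache would expire the entry after ttl seconds;
        # this stand-in ignores ttl and keeps entries forever.
        self._data[key] = value


def get_profile(user_id, cache, load_from_db, ttl=60):
    """Cache-aside read: serve from cache, hit the database only on a miss."""
    key = f"profile:{user_id}"
    cached = cache.get(key)
    if cached is not None:
        return cached
    profile = load_from_db(user_id)  # the expensive MySQL query in real life
    if profile is not None:
        cache.set(key, profile, ttl)
    return profile
```

The trade-off is staleness: a cached profile can lag behind the database until it expires, so writes need to either delete the cached key or accept a short window of old data.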

By the time morning rolled around, I was exhausted but energized. The site was still flaky, but we had taken significant steps towards a more scalable architecture. It was clear that this wasn’t a problem we could solve overnight, but with persistence and careful planning, we could turn things around.

In the end, it wasn’t just about writing better code or implementing new tools; it was about understanding the limitations of our existing stack and being willing to rethink everything. That’s what I love about tech: it never stops changing, and every day brings a new challenge. Today, I learned that when you’re debugging the dream, sometimes you have to get your hands dirty.
