$ cat post/man-page-at-two-am-/-a-webhook-fired-into-void-/-the-merge-was-final.md

man page at two AM / a webhook fired into void / the merge was final

Title: Navigating Kubernetes Complexity in 2019: A Tale of Two Problems

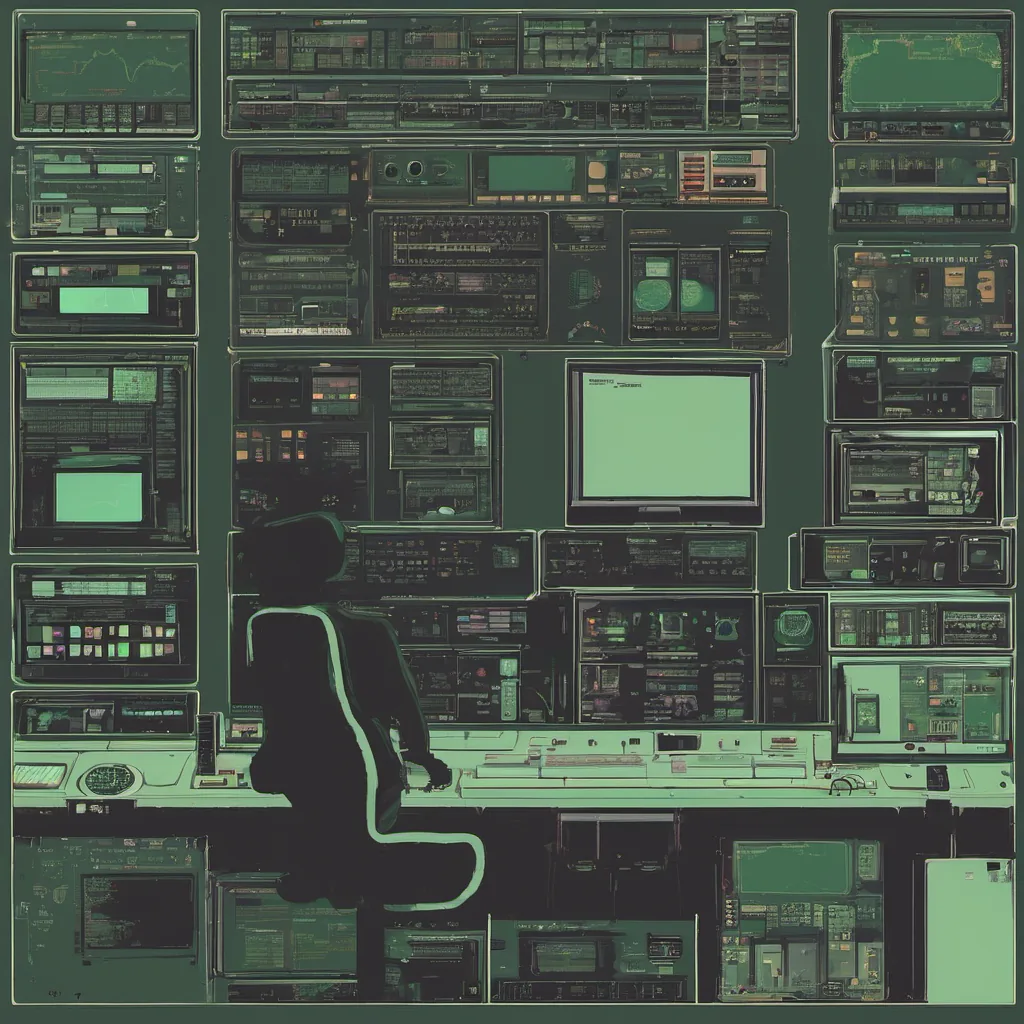

November 25, 2019. Just another day as an engineer trying to make sense of the chaos that is Kubernetes. I spent most of today debugging a cluster issue and arguing with myself about how much more complex it all seems to get.

Today’s problem started innocently enough: a simple service wasn’t responding. After a few minutes, I realized that it was a DNS resolution issue. The pod wasn’t able to resolve the service name in its DNS search path, which is always a fun one to debug.
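For my own notes, here is a rough sketch of how a short name gets expanded against the search path before it is looked up. It follows the `search` and `ndots` rules from `resolv.conf`. The search domains and the service name `my-svc` are assumptions for illustration, not values from our cluster, and the function only models the order of lookups without sending any queries.

```python
def candidate_names(name, search, ndots=5):
    """Return the fully qualified names a stub resolver would try, in order.

    Simplified model of resolv.conf `search` / `options ndots` handling.
    """
    # A trailing dot marks the name as absolute: no search-path expansion.
    if name.endswith("."):
        return [name]

    absolute = name + "."
    expanded = [f"{name}.{domain}." for domain in search]

    # With at least `ndots` dots, the name is tried as-is first.
    if name.count(".") >= ndots:
        return [absolute] + expanded

    # Otherwise the search domains are tried first, then the bare name.
    return expanded + [absolute]


# Assumed search path for a pod in the "default" namespace (illustrative).
SEARCH = ["default.svc.cluster.local", "svc.cluster.local", "cluster.local"]

if __name__ == "__main__":
    for fqdn in candidate_names("my-svc", SEARCH):
        print(fqdn)
```

One thing this makes visible: with `ndots:5`, even an external name like `api.example.com` has fewer than five dots, so every search domain is tried before the name itself.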

I’ve had my share of DNS issues before, from Pods unable to reach other Services to Services with misconfigured selectors. But this one was different. It hit me: Kubernetes is getting more complex, and I’m not sure if that’s a good thing or just a necessary evil.

As the day wore on, I found myself in a meeting discussing how our platform should handle multi-cluster environments. The complexity of managing multiple clusters using tools like Kubespray, Ansible, and custom scripts was overwhelming. The thought of having to manage all this while ensuring high availability and consistency made me feel like we were building a Rube Goldberg machine.

But then, amidst the chaos, something caught my eye: eBPF. A lightbulb flickered in my head. Maybe there’s hope yet. eBPF seemed promising for debugging network issues, but I was skeptical about its broad applicability. The learning curve is steep, and it’s still a relatively new tool that requires a deep understanding of the Linux kernel.

Back at my desk, I dove into some research on eBPF and realized that despite its complexity, it could be our savior in managing Kubernetes. It offers a low-level way to tap into the networking stack and perform detailed analysis without changing kernel source or loading kernel modules. The prospect of writing small BPF programs was daunting but exciting.

That night, as I lay down to sleep, I wrestled with my feelings about the state of Kubernetes. On one hand, it’s a powerful tool that can manage thousands of containers across multiple clusters with ease. On the other hand, its complexity is a double-edged sword. Too many moving parts, too much configuration, and too many ways things could go wrong.

Tomorrow, I have another meeting to attend, this time about GitOps tools like ArgoCD and Flux. The thought of pushing code changes that automatically reconcile state across clusters gives me some hope. But the more I think about it, the more I realize we need a consistent approach for managing our infrastructure at scale.
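To get my head around the idea before the meeting, here is a toy sketch of what "reconcile" means: compare the desired state (what is in Git) with the actual state (what is in the cluster) and work out the difference. The dictionaries and the names `plan` and `reconcile` are hypothetical. This is not how ArgoCD or Flux are implemented, and deleting resources that are missing from Git is just one possible policy.

```python
def plan(desired, actual):
    """Compute the actions needed to make `actual` match `desired`."""
    actions = []
    for name, spec in desired.items():
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    # Anything running that is not declared is removed (assumed policy).
    for name in actual:
        if name not in desired:
            actions.append(("delete", name))
    return actions


def reconcile(desired, actual):
    """Apply the plan to a copy of `actual` and return the new state."""
    result = dict(actual)
    for verb, name in plan(desired, actual):
        if verb == "delete":
            del result[name]
        else:
            result[name] = desired[name]
    return result


if __name__ == "__main__":
    # Hypothetical states: resource name -> replica count.
    desired = {"web": 3, "api": 2}
    actual = {"web": 1, "worker": 4}
    print(plan(desired, actual))
    print(reconcile(desired, actual))
```

Running the same reconcile again against the new state produces an empty plan, which is the property that makes the loop safe to repeat.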

In the meantime, I’ll be spending my weekends learning more about eBPF and experimenting with how to integrate it into our monitoring and debugging processes. Maybe it will help us tame this beast that is Kubernetes.

And as for the Hacker News stories? They seem far removed from the day-to-day struggles of platform engineering. But then again, who knows what they might inspire down the line. Slack’s new WYSIWYG input box… if only our documentation tools were as intuitive.

For now, I’ll focus on making tomorrow a bit better than today. Complexity fatigue is real, but so is the thrill of solving hard problems.

This was my reality in 2019—fighting the good fight while wondering how much more we could take.