Life After Y2K: Debugging Apache and Learning from the Bust

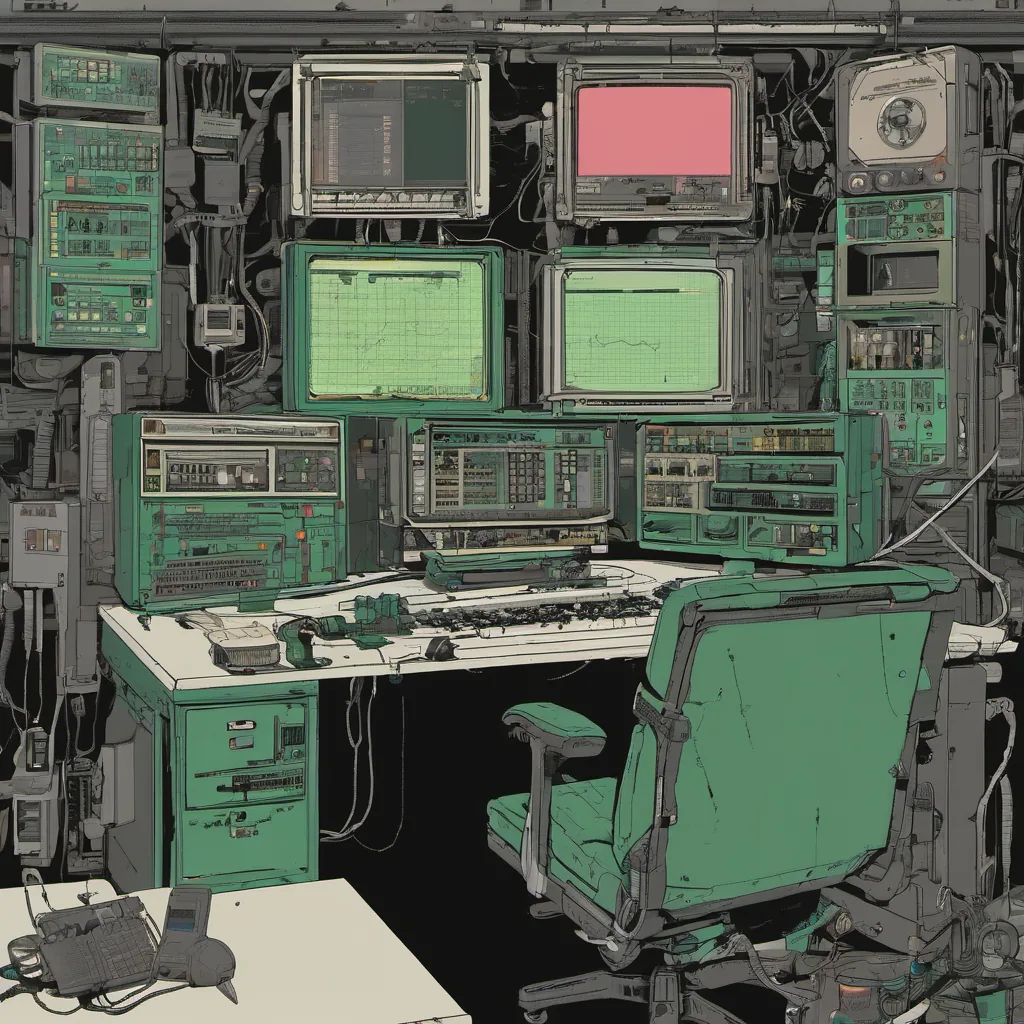

November 25, 2002. It feels like just yesterday, but at the time I was deep in post-dot-com-bust chaos. The bubble had burst, leaving behind a trail of bankruptcies, layoffs, and a general air of uncertainty in tech circles. For me, though, it was also the time when Linux on the desktop started to seriously challenge Windows, and Apache was everywhere.

Today, I found myself knee-deep in an Apache configuration nightmare. Our web servers were underperforming, and our logs were filled with errors. It’s a common enough issue, but the frustration of not immediately finding the root cause was eating at me. The logs hinted at a race condition between two modules, but every time I tried to reproduce it, it vanished into thin air.

I think back to Y2K, when we all scrambled to make sure our systems would handle dates properly. Back then, everyone was on high alert, and the pressure to ensure everything worked was immense. Now, after the dot-com crash, that same level of vigilance felt a bit misplaced. But I couldn’t afford to let my guard down.

I spent hours staring at the logs, trying different configurations, and running stress tests. Finally, I noticed something strange: the timestamps in the log files were inconsistent. Could it be a clock issue? After digging into our system clocks, I found that one of our servers was running 12 hours behind. That discrepancy had led to a subtle bug where certain transactions were being processed incorrectly due to timing issues.
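For the curious, here is a minimal sketch of the kind of check that could surface a skew like this. It is written in Python purely for illustration and is not a record of what we actually ran. It assumes Apache-style access-log timestamps (for example `25/Nov/2002:14:03:12 -0500`) and assumes you can pair up log entries for the same event on two servers, such as a health-check request that hits both. The function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from statistics import median

# Timestamp layout used in Apache access logs, e.g. "25/Nov/2002:14:03:12 -0500".
# Note: %b relies on English month abbreviations (the default C locale).
APACHE_TIME_FORMAT = "%d/%b/%Y:%H:%M:%S %z"


def parse_apache_time(stamp: str) -> datetime:
    """Parse an Apache-style log timestamp and normalize it to UTC."""
    return datetime.strptime(stamp, APACHE_TIME_FORMAT).astimezone(timezone.utc)


def estimate_skew(reference_stamps, candidate_stamps) -> timedelta:
    """Estimate how far the candidate server's clock is from the reference.

    Both lists are assumed to describe the same events in the same order
    (a hypothetical pairing, e.g. a shared health-check request).
    A negative result means the candidate clock is behind the reference.
    """
    if not reference_stamps or len(reference_stamps) != len(candidate_stamps):
        raise ValueError("need two non-empty lists of equal length")
    deltas = [
        (parse_apache_time(c) - parse_apache_time(r)).total_seconds()
        for r, c in zip(reference_stamps, candidate_stamps)
    ]
    # The median is less sensitive than the mean to a few delayed requests.
    return timedelta(seconds=median(deltas))
```

Anything beyond a second or two of estimated skew is worth a look at the server's clock before chasing more exotic explanations.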

This discovery was a humbling reminder that no matter how robust your systems are, the smallest details can still trip you up. Debugging this issue required stepping back and looking at things from a different angle. I spent the next few days refactoring our logging code to handle clock differences more gracefully. It wasn’t glamorous work, but it was necessary.
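One way to make logs more tolerant of this sort of problem is to stamp every entry in UTC and include the host name, so entries from different machines can be lined up without guessing at time zones. The sketch below uses Python's standard `logging` module as an illustration only; `UtcFormatter` and `build_formatter` are hypothetical names, and this is not the code we wrote at the time. It does not correct a clock that is simply wrong (that still needs proper time synchronization), but it removes local-time ambiguity from the picture.

```python
import logging
import time


class UtcFormatter(logging.Formatter):
    """Formatter that renders record times in UTC instead of local time."""

    # logging.Formatter calls self.converter(record.created) to build asctime.
    converter = time.gmtime


def build_formatter(host: str) -> logging.Formatter:
    """Build a formatter that prefixes each line with a UTC time and the host."""
    safe_host = host.replace("%", "%%")  # keep '%' in host names literal
    return UtcFormatter(
        fmt="%(asctime)s " + safe_host + " %(levelname)s %(message)s",
        datefmt="%Y-%m-%dT%H:%M:%SZ",
    )


def make_logger(name: str, host: str) -> logging.Logger:
    """Return a logger that writes UTC-stamped lines to stderr."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.setFormatter(build_formatter(host))
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

Calling `make_logger("app", "web01").info("request handled")` would then emit a line that starts with an ISO-style UTC timestamp followed by `web01`.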

Around the same time, VMware was making waves in the server virtualization space. I remember my team arguing about whether we should move some of our development environments to VMs. There were concerns about performance and stability, but the idea of being able to quickly spin up new environments or replicate production scenarios was too compelling to ignore. Eventually, we decided to give it a try on a couple of our less critical machines.

It’s interesting how rapidly technology moves. Just a few years earlier, VMware was still considered an experimental tool. Now, it had matured enough for us to seriously consider adopting it. This decision would have long-term implications for our infrastructure, and we were glad to be among the early adopters.

As I write this, I can’t help but think about how far we’ve come since the heady days of 1998, when everyone was talking about Netscape’s market cap. The dot-com bubble may have burst, but the lessons learned during that time (about reliability, performance, and the importance of robust logging) still ring true.

And so, as I finish up for the day, I look forward to what tomorrow will bring. Whether it’s another Apache bug or a new challenge in virtualization, I’m excited to tackle it head-on. The tech world may be uncertain, but there’s always something to learn and improve upon.

It was a typical day in November 2002, filled with the mundane yet essential tasks of an engineer: debugging, arguing, learning. But looking back, those days shaped my understanding of how to approach challenges, no matter how daunting they might seem.