$ cat post/march-25,-2013---debugging-the-devops-nightmare.md

March 25, 2013 - Debugging the DevOps Nightmare

March 25, 2013. It was a typical Monday at my startup, but little did I know that this day would mark the beginning of one of the most challenging weeks in our server ops history.

We had just adopted Docker containers as part of an infrastructure overhaul, riding the new container hype. We were excited to finally move away from monolithic services toward microservices. But with that excitement came some naivety: we underestimated how complex it would be to orchestrate those containers across multiple machines.

Our first challenge was service discovery. CoreOS was hot back then with its etcd and fleet tools, so naturally we jumped on board. We thought setting them up would be a breeze. Boy, were we wrong.

The first part of the day went smoothly: we got Docker containers running across our servers. But as soon as I tried to deploy my application, I ran into problems. Service discovery wasn’t working as expected, and network configuration problems meant our applications couldn’t reliably talk to each other. It turned out that the way we had set up etcd and fleet was the root of the problem: our services couldn’t consistently find one another.
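
The failure is easier to reason about with the underlying pattern in view. etcd lets clients write keys with a time-to-live, and a common approach to etcd-backed discovery is for each instance to register its address under a TTL and keep refreshing it, so entries for dead instances expire on their own. The sketch below is a toy, in-memory model of that pattern only; it does not talk to etcd or mimic its API, and `ServiceRegistry`, `FakeClock`, and the addresses are hypothetical.

```python
import time
from typing import Callable, Dict, List, Tuple


class ServiceRegistry:
    """Toy in-memory model of TTL-based service discovery (hypothetical, not etcd).

    Each instance registers its address with a TTL and must keep refreshing it;
    instances that stop refreshing silently drop out of lookups.
    """

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        # (service, instance_id) -> (address, expiry timestamp)
        self._entries: Dict[Tuple[str, str], Tuple[str, float]] = {}

    def register(self, service: str, instance_id: str, address: str, ttl: float) -> None:
        """Register or refresh an instance; it expires `ttl` seconds from now."""
        self._entries[(service, instance_id)] = (address, self._clock() + ttl)

    def lookup(self, service: str) -> List[str]:
        """Return live addresses for `service`, purging any expired entries."""
        now = self._clock()
        live = []
        for key, (address, expiry) in list(self._entries.items()):
            if expiry <= now:
                del self._entries[key]  # stale: this instance stopped heartbeating
            elif key[0] == service:
                live.append(address)
        return sorted(live)


class FakeClock:
    """Manually advanced clock so expiry can be demonstrated deterministically."""

    def __init__(self) -> None:
        self.now = 0.0

    def __call__(self) -> float:
        return self.now


if __name__ == "__main__":
    clock = FakeClock()
    reg = ServiceRegistry(clock)
    reg.register("web", "web-1", "10.0.0.5:8080", ttl=30)
    reg.register("web", "web-2", "10.0.0.6:8080", ttl=30)
    clock.now = 20
    reg.register("web", "web-1", "10.0.0.5:8080", ttl=30)  # only web-1 heartbeats
    clock.now = 40
    print(reg.lookup("web"))  # ['10.0.0.5:8080']
```

The point of the model is that discovery is only as good as the heartbeats: if an instance registers the wrong address (for example, a container-internal IP other hosts can’t route to) or stops refreshing, its peers either get an unreachable address or nothing at all.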

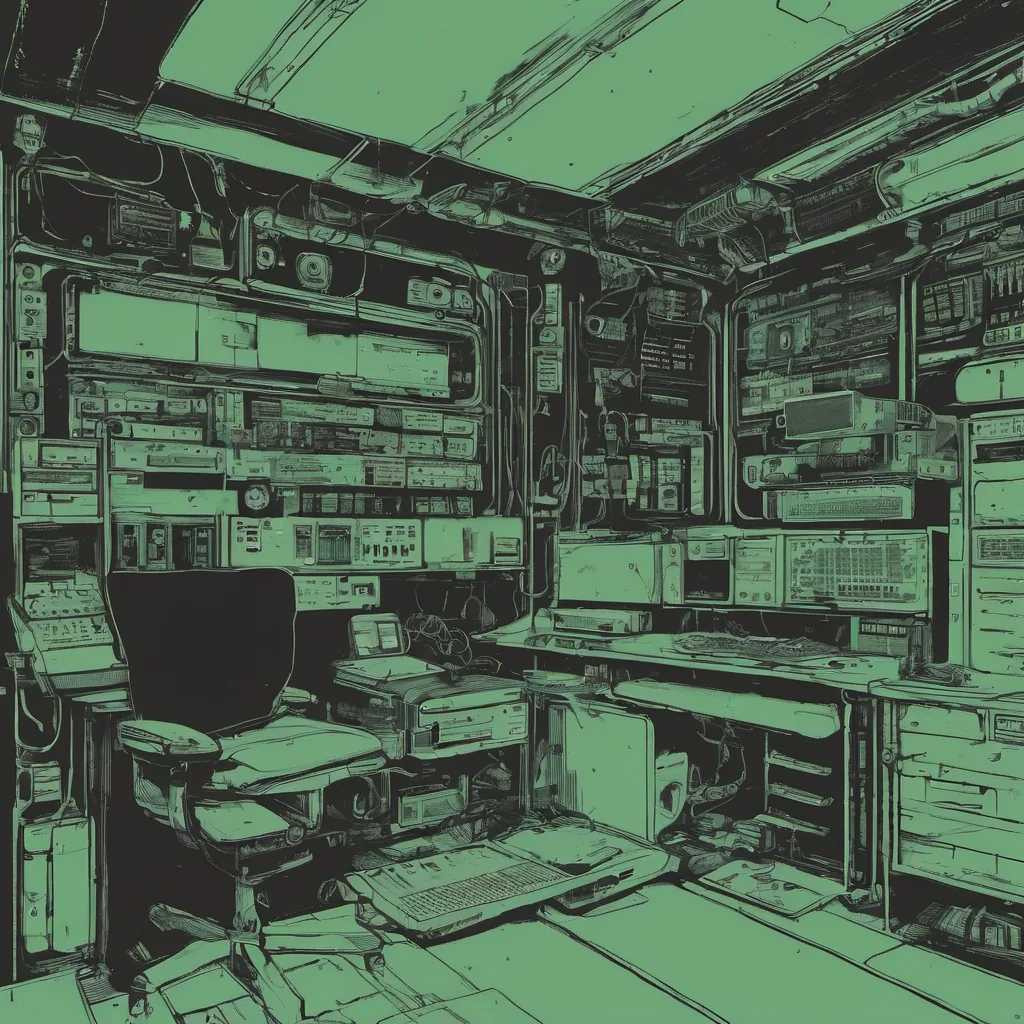

I spent hours debugging this, but the logs were confusing, and there was little documentation on setting up service discovery for Docker on CoreOS. I remember banging my head against the wall while staring at `journalctl -u fleet` output, hoping for a clue.

After a particularly frustrating afternoon, I finally got it working by tweaking our configuration files and adjusting the network settings. The applications started communicating correctly, but I was left with a lingering sense of dissatisfaction. This new stack was supposed to put us ahead of the game, yet we had spent half the day just getting the basics to work.

The next day brought more challenges. Our Kubernetes setup, which handled orchestration, behaved unexpectedly during deployments. We were running a very early version that was riddled with bugs and needed a lot of manual intervention. Every time I deployed new code, a different issue seemed to crop up, from pod misconfigurations to network connectivity problems.

On top of all this, our application didn’t follow the 12-factor app principles very closely. Because we hadn’t fully embraced those practices, our deployment scripts were a mess, and I kept writing one-off custom scripts just to get everything working. It was like patching together a quilt from mismatched pieces.
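
One 12-factor principle that speaks directly to messy deployment scripts is factor III, "Config": settings that vary between deploys belong in environment variables rather than in code or per-environment scripts. Here is a minimal Python sketch of that idea; `AppConfig`, `load_config`, and the variable names `DATABASE_URL`, `PORT`, and `DEBUG` are hypothetical and not taken from our actual setup.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class AppConfig:
    """Deploy-specific settings, read from the environment (12-factor, factor III)."""
    database_url: str
    port: int
    debug: bool


def load_config(env: Mapping[str, str] = os.environ) -> AppConfig:
    """Build AppConfig from environment variables (names are hypothetical examples).

    A missing DATABASE_URL fails fast instead of falling back to a baked-in value,
    so a misconfigured deploy breaks loudly at startup.
    """
    try:
        database_url = env["DATABASE_URL"]
    except KeyError:
        raise RuntimeError("DATABASE_URL must be set in the environment") from None
    port = int(env.get("PORT", "8080"))
    debug = env.get("DEBUG", "false").strip().lower() in {"1", "true", "yes"}
    return AppConfig(database_url=database_url, port=port, debug=debug)


if __name__ == "__main__":
    # Passing a plain dict stands in for a real deploy environment.
    print(load_config({"DATABASE_URL": "postgres://db.internal/app", "PORT": "5000"}))
    # AppConfig(database_url='postgres://db.internal/app', port=5000, debug=False)
```

Taking the environment as a parameter (defaulting to `os.environ`) keeps the same image deployable everywhere: staging and production differ only in the variables the orchestrator injects, not in custom scripts.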

By the end of the week, I was exhausted. We had our services running, but at the cost of hours of debugging and frustration. The stack we had chosen was cutting-edge, and it came with its own set of problems.

Looking back, this experience taught me the value of proper planning, thorough testing, and following established best practices. We should have taken the time to understand these tools before diving in headfirst.

In the months that followed, we refined our setup and eventually arrived at a more stable, reliable infrastructure. But those first few weeks were a real learning experience, both painful and rewarding.

As for the wider tech world, it was a genuinely exciting time. Docker was just starting to gain traction, Kubernetes was on the horizon, and microservices were becoming all the rage. It felt like we were on the cusp of something big, but there were still many kinks to iron out.

So here’s to March 25, 2013: the day we got our feet wet with Docker and learned that the path forward isn’t always as smooth as we hope.