the old datacenter / I ssh to ghosts of boxes / the merge was final

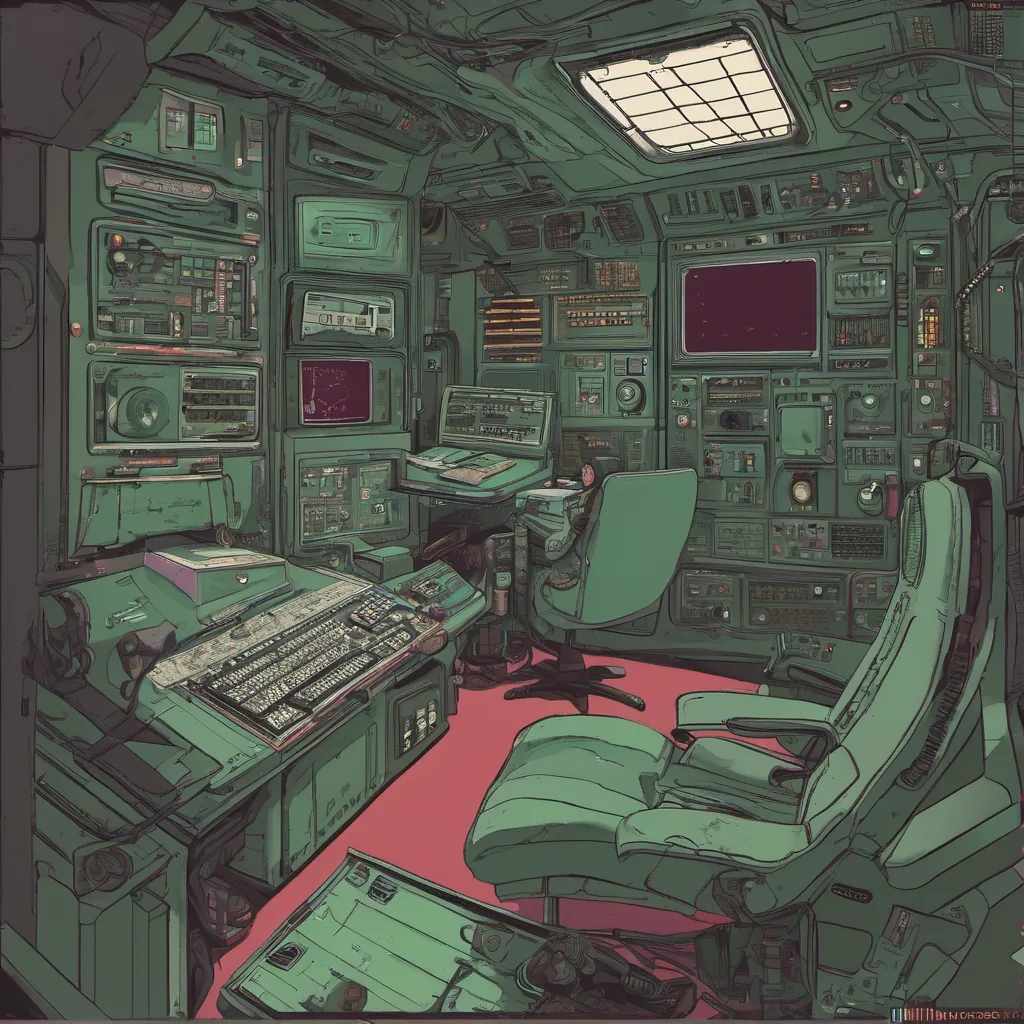

Title: When Logs Were a Chore

October 24, 2005. I can’t say why that date stands out in the grand scheme of things, but it was the day I finally got centralized logging working on our production servers.

I still remember the days before log rotation was automated. We were using good ol’ syslog for our application logs. Syslog is great; it’s simple and gets the job done, right? Not quite. Our application ran a few dozen services, each with its own set of logs, and piecing together what was happening in production when things went south was a mess.

We had an issue where our application seemed to be crashing every other week, but we couldn’t figure out why. The logs were scattered across multiple machines and not rotated properly. It took hours to find the relevant lines, let alone correlate them with user complaints or performance metrics. We were constantly firefighting, and it was wearing us down.

One day, I decided enough was enough. I started looking into centralized logging solutions. At that time, Graylog2 (which later became Graylog) was gaining some traction in our community. It promised to make log management easier by centralizing logs from multiple sources and providing a web interface for searching and analyzing them. The idea seemed promising.

I set up a proof-of-concept on one of the development servers. It went smoothly; I could see all the application logs in Graylog’s nice UI. But when I tried to implement it on our production servers, I hit roadblock after roadblock.

The first roadblock involved log rotation and capture. Our application had custom logging behavior that didn’t play well with the default syslog configuration, and we needed a way to capture the extra lines it wrote without breaking our existing setup. After much head-scratching and some trial and error, we got it working by having rsyslog capture the additional logs and forward them to Graylog.
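
The post doesn’t record the actual rsyslog configuration, so here is only a minimal Python sketch of the application side, under stated assumptions: each record is sent to a syslog daemon over UDP (assumed to be `127.0.0.1:514`, facility `local0`), and rsyslog is configured separately to forward to Graylog. `OneLineFormatter` and `make_syslog_logger` are hypothetical names, and folding multi-line entries with ` | ` is an assumed convention for keeping the “extra lines” together as one event.

```python
import logging
import logging.handlers


class OneLineFormatter(logging.Formatter):
    """Fold multi-line records (e.g. tracebacks) into a single line.

    Hypothetical convention: assumes the downstream pipeline treats each
    syslog message as one event and that ' | ' is an acceptable separator.
    """

    def format(self, record):
        text = super().format(record)
        # Drop empty lines and join the rest so one record = one line.
        return " | ".join(line for line in text.splitlines() if line)


def make_syslog_logger(name, host="127.0.0.1", port=514):
    """Build a logger that sends records to a syslog daemon over UDP.

    Assumes rsyslog (or another syslog daemon) listens on host:port and is
    configured separately to forward messages to the central log server.
    """
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    handler = logging.handlers.SysLogHandler(
        address=(host, port),  # a (host, port) tuple means UDP by default
        facility=logging.handlers.SysLogHandler.LOG_LOCAL0,
    )
    handler.setFormatter(OneLineFormatter(name + ": %(levelname)s %(message)s"))
    logger.addHandler(handler)
    return logger
```

Sending to the local daemon rather than straight to the central server keeps the application unaware of where its logs end up, so the forwarding destination can change without touching application code.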

Next came performance. Our application produced a lot of log data, and Graylog struggled with it: loading a single day’s worth of logs often took several minutes. I spent hours tuning the Elasticsearch configuration, tweaking queries, and even caching some results in memory to speed things up.
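
Nothing in the post says how the in-memory caching worked. As one hypothetical illustration, a small time-to-live cache in front of a slow search call might look like this; `QueryCache` and the `fetch` callable are invented names standing in for whatever actually ran the query against the search backend.

```python
import time


class QueryCache:
    """A tiny in-memory cache for search results with a time-to-live.

    Hypothetical sketch: `fetch` stands in for the function that actually
    runs a query against the (slow) search backend.
    """

    def __init__(self, fetch, ttl_seconds=60.0, clock=time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # query -> (expiry time, result)

    def get(self, query):
        now = self._clock()
        entry = self._entries.get(query)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: skip the slow backend call
        result = self._fetch(query)
        self._entries[query] = (now + self._ttl, result)
        return result
```

The injectable `clock` makes expiry easy to test. The sketch never evicts entries, so a real deployment would also need a size limit.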

Finally, there was the nagging worry about security. We were logging sensitive information, and we had to make sure Graylog wasn’t exposing any data it shouldn’t. We put a proxy server between our application servers and Graylog to scrub unnecessary details before they reached the central logs. This added another layer of complexity, but it made us feel better about storing potentially sensitive information.
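
The post doesn’t say what the proxy scrubbed or how. Here is a minimal Python sketch of the per-line scrubbing step such a relay might apply, assuming sensitive data shows up as `password=`/`token=`-style key-value pairs or as bare 13 to 16 digit card numbers. Both patterns and the `scrub` function are hypothetical examples, and the relay itself (receiving and re-sending log traffic) is not shown.

```python
import re

# Hypothetical patterns: what counts as sensitive depends entirely on the
# application; these two are illustrative only.
_KEY_VALUE = re.compile(r"(?i)\b(password|passwd|secret|token)=\S+")
_CARD_NUMBER = re.compile(r"\b\d{13,16}\b")


def scrub(line):
    """Mask sensitive values in one log line before forwarding it."""
    # Keep the key name (original case) but hide its value.
    line = _KEY_VALUE.sub(lambda m: m.group(1) + "=[REDACTED]", line)
    # Replace long bare digit runs that look like card numbers.
    line = _CARD_NUMBER.sub("[CARD]", line)
    return line
```

Because the patterns only catch formats anticipated in advance, the list has to grow alongside the application’s log formats.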

By the end of the week, we had a working setup for centralized logging. It was far from perfect, but it was a huge improvement over our old system. We could finally see what was happening in production with just a few clicks. Bugs that used to take hours to track down were now resolved within minutes.

Looking back, implementing this solution felt like the start of a new era for us. Sysadmins were moving from being glorified support desk technicians to developers who wrote scripts and tools to automate and optimize operations. Python and Perl were still our go-to languages for automation, but we were also starting to adopt purpose-built tools like Graylog.

That day, I felt proud that we had taken a step forward in how we managed our infrastructure. Logging wasn’t just about capturing data; it was about having the tools to understand and act on that data when needed. It’s funny how much of an impact something as simple as centralized logging can have on daily operations.

Now, years later, I look back at those early days with a mix of nostalgia and gratitude. The challenges we faced back then helped shape our approach to DevOps today. Logging has come a long way, but the principles remain the same: get your data in one place so you can make informed decisions when the going gets tough.

That’s how I feel about those early days of centralized logging. It wasn’t glamorous, but it was a significant step forward for us as engineers and operators.