$ cat post/man-page-at-two-am-/-the-proxy-swallowed-the-error-/-i-left-a-comment.md

man page at two AM / the proxy swallowed the error / I left a comment

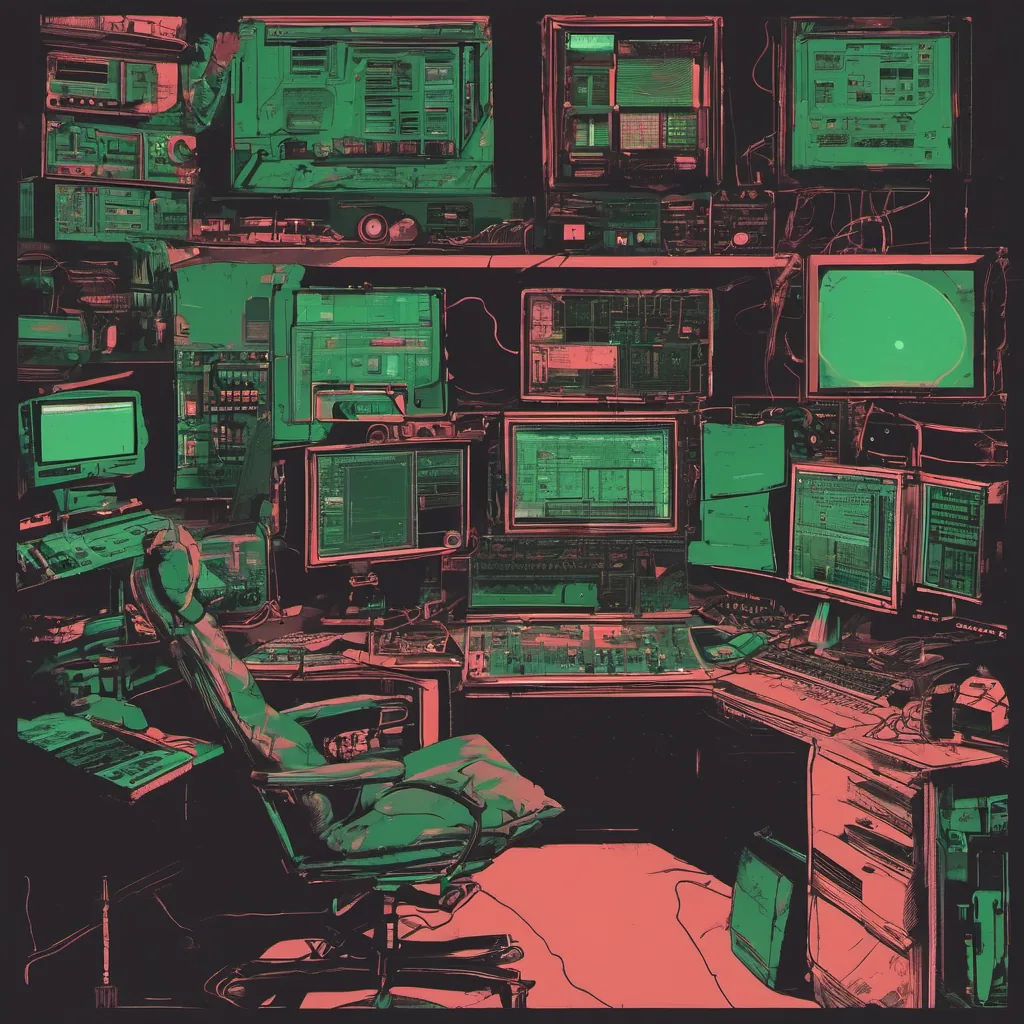

Containerizing Our Legacy: A Journey Through Hell

February 24, 2014. I remember it like it was yesterday. It was a time when everyone had Docker on their lips, and the term “microservices” wasn’t just a buzzword but something you actually started to consider when you looked at your monolithic application.

It’s funny how quickly things change. Just a few months earlier, we had still been debating whether containerization was worth the effort. Now it felt like we had to choose between going all-in with Docker and being left behind.

We were working on an internal tool at my company that handled a critical part of our business logic. It was written in Java, running on a single server with a custom configuration file that was 1500 lines long and filled with magic numbers. In other words, it was the kind of codebase that makes grown men cry—or at least me.

Our goal for February was to containerize this tool. We had heard that Docker made deployment and scaling a breeze. But reality always has a way of complicating things. Here’s how we navigated those complexities:

Day One: The Setup

We started by setting up the Docker environment on our servers. It was surprisingly easy, given how well documented Docker was becoming. We pulled down a base image and ran some tests to ensure everything worked as expected.

But when we tried to integrate it with our internal monitoring systems, we hit a snag. The custom configuration file meant that our monitoring script couldn’t parse the environment variables properly. Back to the drawing board.

Day Two: Debugging

We spent most of the day trying to figure out how to pass in the necessary environment variables for the application to run correctly. We experimented with volume mounts and entrypoint scripts, but nothing seemed to work perfectly.
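To give a flavor of the kind of check an entrypoint script can do before launching the application, here is a minimal sketch. It is written in Python for brevity (the tool itself was Java), and every variable name in it is hypothetical; it is not the script we actually ran.

```python
import os

# Hypothetical variable names; the real tool's settings are not listed in this post.
REQUIRED_VARS = ("APP_DB_URL", "APP_QUEUE_HOST", "APP_LOG_LEVEL")


def missing_vars(required, environ=None):
    """Return the required names that are unset or empty, in the order given."""
    env = os.environ if environ is None else environ
    return [name for name in required if not env.get(name)]


def check_environment(required=REQUIRED_VARS, environ=None):
    """Raise one readable error that lists every missing variable at once."""
    missing = missing_vars(required, environ)
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
```

Calling `check_environment()` at the top of an entrypoint makes the container fail immediately with a list of what is missing, rather than starting up and breaking later. It does nothing for the underlying problem, though: a 1500-line configuration file does not translate into a manageable set of variables.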

It was frustrating because we knew this tool needed to be containerized quickly, yet every change felt like a step backward. The team argued about whether it made sense to refactor the codebase first or just try to make do with what we had.

Day Three: A Breakthrough

Finally, on the third day, we hit upon an idea. Instead of trying to pass in all 1500 lines of configuration through environment variables, why not modify our application to read a JSON file from a volume mount? It was a hacky solution, but it worked.
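The pattern looks roughly like the sketch below. It is in Python for brevity rather than the Java we were actually working in, and the variable name `APP_CONFIG_PATH` and the path `/etc/app/config.json` are assumptions made up for illustration.

```python
import json
import os

# Hypothetical names: neither the variable nor the mount path comes from the original tool.
CONFIG_PATH_VAR = "APP_CONFIG_PATH"
DEFAULT_CONFIG_PATH = "/etc/app/config.json"


def config_path(environ=None):
    """Pick the config file location: one optional variable, else the default mount path."""
    env = os.environ if environ is None else environ
    return env.get(CONFIG_PATH_VAR, DEFAULT_CONFIG_PATH)


def load_config(path):
    """Read the mounted JSON file and return its top-level object as a dict."""
    with open(path, encoding="utf-8") as handle:
        config = json.load(handle)
    if not isinstance(config, dict):
        raise ValueError(f"{path}: expected a JSON object at the top level")
    return config
```

With this shape, the image stays the same across servers and only the mounted file differs, for example via `docker run -v /srv/app/config.json:/etc/app/config.json:ro ...` (host path hypothetical). At most one environment variable is needed, to point at the file.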

We spent the rest of the week tweaking and optimizing this setup. By Friday, we had a working Docker image that could be deployed across multiple servers without issues. The feeling of accomplishment was immense—especially after all the headaches.

Lessons Learned

This experience taught us several valuable lessons:

- Understand Your Application: Don’t assume your application can easily fit into containers. Be prepared to make significant changes.

- Choose Your Tools Wisely: Docker simplified a lot, but there were still challenges in setting it up with our existing tools and processes.

- Iterate Quickly: Don’t get bogged down by perfectionism. Sometimes, a quick hack is better than nothing at all.

Looking Forward

As we wrapped up the project, I couldn’t help but think about where this journey would take us. The microservices trend was just starting to pick up momentum, and it felt like containers were going to be a key part of our future. It was both exciting and daunting.

For now, though, we had crossed another milestone. And that’s what counts—progress, even if it’s slow and painful.

That’s the story of containerizing our legacy tool. A mix of triumphs and tribulations, but ultimately a valuable lesson in adaptability and perseverance.