
January 23, 2012 - A Day in the Life of an Ops Guy

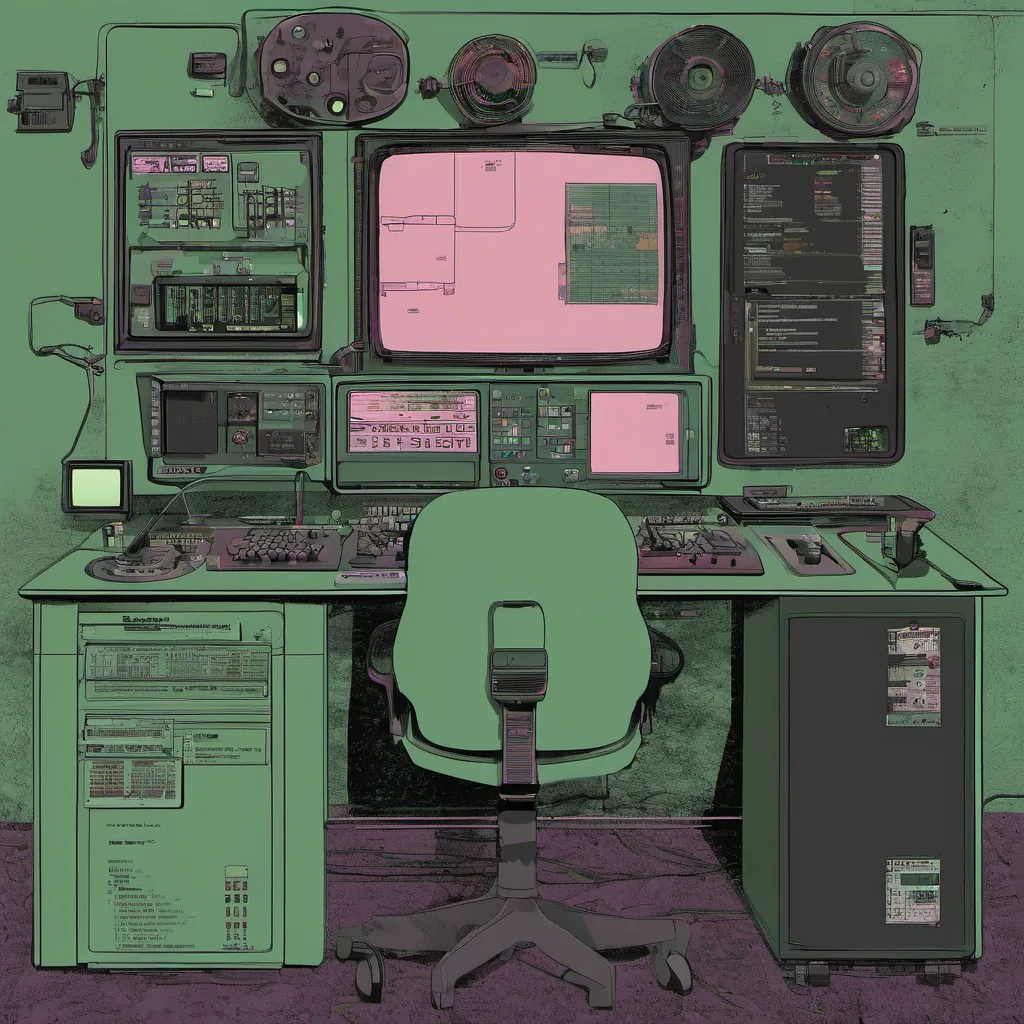

January 23, 2012 was another typical Monday. The sun was just starting to rise over San Francisco as I made my way into the office. The day promised a mix of configuration management battles and debugging nightmares. I had a feeling it would be one of those days where the line between chaos engineering and ops chaos blurs.

I arrived at my desk and fired up my terminal. The first thing on my to-do list was to review the latest Chef runs on our staging environment. We were in the thick of a rollout, and every commit was being watched closely. Today, though, something had gone wrong: a few servers reported failed Chef runs with cryptic error messages.

I started debugging by running `chef-client -l debug` on one of the affected nodes. The logs were filled with errors about missing dependencies for a new package we had just rolled out. It looked like a classic case of an outdated repository cache. After a bit of digging, I found that our CI server wasn’t updating the package repositories after each build.
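For illustration, a post-build refresh step could look something like the sketch below. This is not the script from that day. It assumes Debian-style hosts where `apt-get -q update` refreshes the package index (a yum-based setup would need a different command), and the names `REFRESH_COMMAND`, `refresh_package_cache`, and `main` are hypothetical.

```python
import subprocess
from typing import Callable, List, Sequence

# Assumption: Debian/Ubuntu hosts. Swap the command for other package managers.
REFRESH_COMMAND: List[str] = ["apt-get", "-q", "update"]


def refresh_package_cache(
    run: Callable[[Sequence[str]], int] = subprocess.call,
    retries: int = 3,
) -> bool:
    """Run the refresh command, retrying while it exits non-zero.

    `run` is injectable so the logic can be tested without touching
    the real package manager.
    """
    for _attempt in range(retries):
        if run(REFRESH_COMMAND) == 0:
            return True
    return False


def main() -> int:
    """Return a process exit code: 0 on success, 1 if every attempt failed."""
    return 0 if refresh_package_cache() else 1
```

Wired into a CI job as a post-build step (for example by calling `sys.exit(main())` from a small wrapper), a non-zero exit code makes a stale cache fail the build loudly instead of surfacing later as a broken Chef run. The real command usually needs root privileges.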

I whipped up a small script to refresh the package repositories after each build and pushed it to our Jenkins instance. While waiting for the next build to complete, I turned my attention to another issue: a critical service was down on one of our production clusters. The logs indicated an unexpected restart of our database service. A quick glance at the recent code changes showed nothing obvious.

The real culprit turned out to be a configuration change in the monitoring script that hadn’t been picked up during testing. It was a classic case of too little automated testing and monitoring coverage letting a human error turn into an outage. I quickly drafted a PR to fix the issue and added unit tests to catch similar mistakes in the future.
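The kind of test I have in mind can be sketched roughly as follows. Everything here is hypothetical: the config keys, the allowed actions, and the rule that a restart needs at least three consecutive failures are stand-ins for illustration, not our actual monitoring setup.

```python
from typing import Dict, List

# Hypothetical schema, for illustration only.
REQUIRED_KEYS = {"service", "check_interval_seconds", "failure_threshold", "on_failure"}
ALLOWED_ACTIONS = {"alert", "restart"}
MIN_FAILURES_BEFORE_RESTART = 3


def validate_monitor_config(config: Dict[str, object]) -> List[str]:
    """Return a list of problems found; an empty list means the config passes."""
    problems: List[str] = []

    # Report missing keys in a stable (sorted) order.
    for key in sorted(REQUIRED_KEYS - set(config)):
        problems.append(f"missing key: {key}")

    action = config.get("on_failure")
    if action is not None and action not in ALLOWED_ACTIONS:
        problems.append(f"unknown on_failure action: {action}")

    # Guard against a single failed check restarting a service.
    threshold = config.get("failure_threshold")
    if (
        action == "restart"
        and isinstance(threshold, int)
        and threshold < MIN_FAILURES_BEFORE_RESTART
    ):
        problems.append(
            f"restart requires failure_threshold >= {MIN_FAILURES_BEFORE_RESTART}"
        )
    return problems
```

A unit test then just asserts that the checked-in config produces an empty list, so a risky change fails in CI before it ever reaches a production cluster.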

After addressing these immediate issues, I decided it was time for a break. As I walked out of my cubicle, I passed by the Ops team’s war room where they were planning their next Chaos Engineering experiment with Netflix. The idea of intentionally breaking systems to understand failure modes resonated with me; it felt like a good way to validate our stability efforts.

On my walk back to my desk, I ran into my colleague, Sarah, who was excitedly discussing the upcoming release of Bootstrap 2.0. She had been redesigning one of our web applications and wanted some feedback. While Bootstrap’s new features were impressive, I reminded her that the real challenge lay in maintaining consistent styles across multiple environments. We quickly jumped into a side discussion about how to balance responsiveness with performance, which led to several good ideas.

Back at my desk, I started drafting a blog post on the lessons learned from the day’s issues: the importance of automated testing and monitoring, the need for robust CI/CD pipelines, and the value of continuous improvement. As I typed away, I couldn’t help but chuckle at how much had changed since the last time I wrote about Ops in 2010.

Looking back, it was clear that DevOps wasn’t just a buzzword; it was a way to drive better collaboration between development and operations teams. Today’s challenges were still there, but with each problem we solved, our processes became more resilient. The tech landscape was rapidly evolving, and I found myself excited about the journey ahead.

As the day drew to a close, I couldn’t shake the feeling that this era of rapid innovation would continue to push us out of our comfort zones. But hey, that’s why we love being ops guys: we are constantly learning and adapting to new challenges.