$ cat post/life-on-the-lamp-stack:-january-2006.md

Life on the LAMP Stack: January 2006

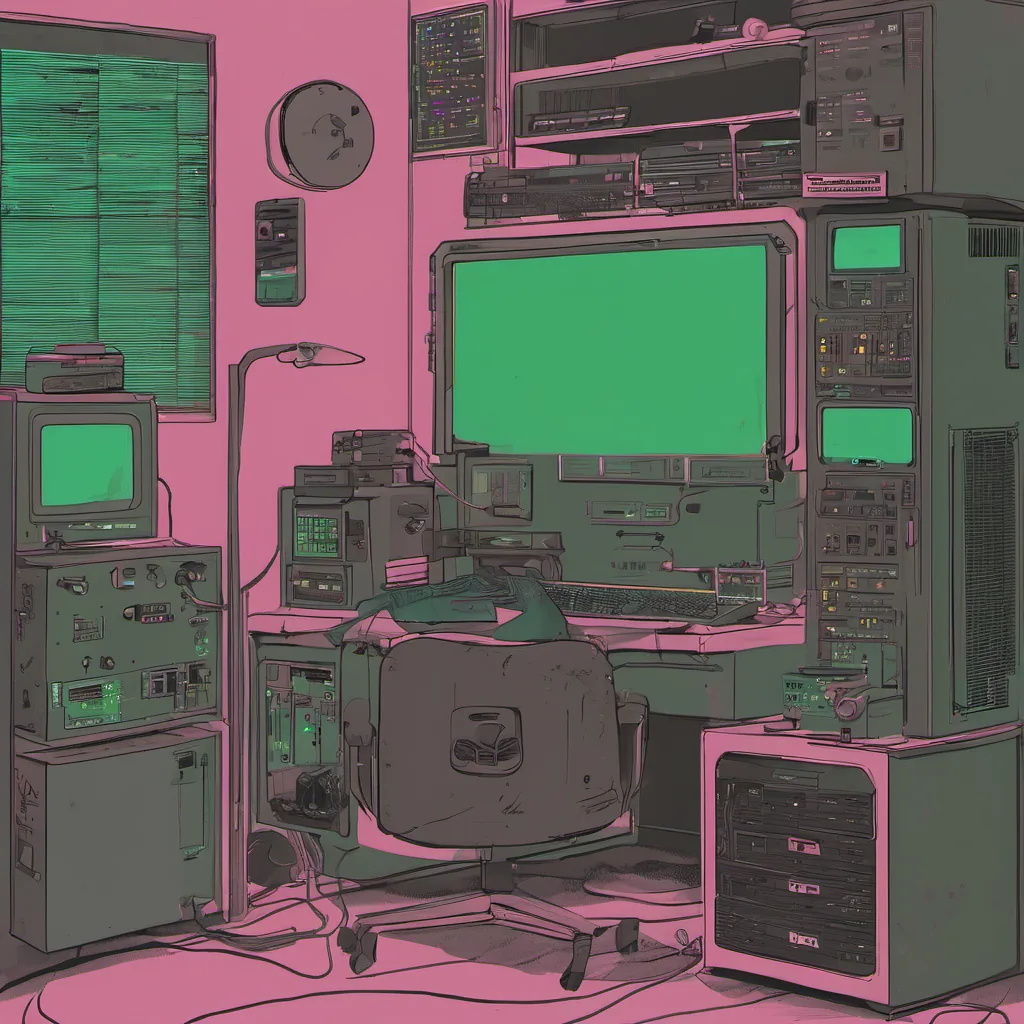

January 23rd, 2006. The year was young, and I felt like it was a banner month for my career at an early-stage startup. We had just shipped another round of features, and our user base was steadily growing, though we still needed more robust infrastructure to handle the load.

The server room was as quiet as ever, but that day’s debugging session brought me back to reality. The site was choking under a sudden surge in traffic from an unexplained source, probably an automated script or a link-farming bot. I had to figure out how to scale our application and database without breaking too many things.

The Setup

We were running on the LAMP stack, a combination of Linux, Apache, MySQL, and PHP that had become the de facto standard for web development back then. This was my playground, but it wasn’t always the easiest environment to work in. We relied heavily on cron jobs and shell scripts to handle background tasks, and we were constantly fighting against slow database queries.

Debugging the Surge

The first step was to find out where the traffic was coming from. Our logs showed a stream of requests hitting the site in irregular bursts. I pulled up top in one terminal window while running tail -f /var/log/apache2/access.log in another. The top output showed MySQL under heavy load, which pointed to the database queries as the bottleneck.
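A minimal sketch of that kind of log triage, in Python: count requests per client address to see who is sending the traffic. It assumes the Apache common or combined log format. The function name top_clients, the regular expression, and the sample lines are illustrative, not the tooling we actually used.

```python
import re
from collections import Counter

# Start of an Apache common/combined log line:
# client address, identity, user, [timestamp], "request", status.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" (?P<status>\d{3})'
)

# Made-up sample entries (documentation-only IP ranges), not real traffic.
SAMPLE_LINES = [
    '192.0.2.10 - - [23/Jan/2006:10:00:01 +0000] "GET /index.php HTTP/1.1" 200 5120',
    '198.51.100.7 - - [23/Jan/2006:10:00:02 +0000] "GET /about.php HTTP/1.1" 200 2048',
    '192.0.2.10 - - [23/Jan/2006:10:00:02 +0000] "GET /index.php HTTP/1.1" 200 5120',
    '192.0.2.10 - - [23/Jan/2006:10:00:03 +0000] "GET /search.php?q=a HTTP/1.1" 200 9001',
    'not a log line',
]


def top_clients(lines, n=5):
    """Return the n client addresses with the most requests, busiest first."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match:  # skip anything that does not look like an access-log entry
            counts[match.group("ip")] += 1
    return counts.most_common(n)


if __name__ == "__main__":
    for ip, hits in top_clients(SAMPLE_LINES):
        print(f"{hits:6d}  {ip}")
```

Any iterable of lines works as input, so an open file handle on the access log can be passed in place of the sample list.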

I started by adding logging around a few key queries, using PHP’s built-in debug_backtrace() to record which code path had issued each one. This showed me which parts of our application were putting the most stress on the database. I then ran those queries through EXPLAIN in MySQL to see how they were being executed, for example whether a query used an index or scanned the whole table, and where they could be optimized.
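The same two ideas, recording the call site of each query and then asking the database how it plans to run it, can be sketched in Python. This is an analogy rather than our PHP code: the standard traceback module stands in for debug_backtrace(), and an in-memory SQLite database with its EXPLAIN QUERY PLAN statement stands in for MySQL and EXPLAIN (the output format differs between the two). The users table, the index name, and every function name here are hypothetical.

```python
import sqlite3
import time
import traceback


def timed_query(conn, sql, params=()):
    """Run a query; return its rows plus a record of who called it and how long it took."""
    # The last stack entry is this function, so the one before it is the caller.
    caller = traceback.extract_stack()[-2]
    start = time.perf_counter()
    rows = conn.execute(sql, params).fetchall()
    elapsed_ms = (time.perf_counter() - start) * 1000
    record = {
        "file": caller.filename,
        "line": caller.lineno,
        "function": caller.name,
        "sql": sql,
        "ms": elapsed_ms,
    }
    return rows, record


def explain(conn, sql, params=()):
    """Return SQLite's description of how it would execute the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]  # the last column is the readable detail


def build_demo_db():
    """Create a throwaway in-memory table with 1000 made-up users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
    conn.executemany(
        "INSERT INTO users (email) VALUES (?)",
        [(f"user{i}@example.com",) for i in range(1000)],
    )
    return conn


def find_user(conn, email):
    """Example call site whose name should show up in the query record."""
    return timed_query(conn, "SELECT id FROM users WHERE email = ?", (email,))


if __name__ == "__main__":
    conn = build_demo_db()
    query = "SELECT id FROM users WHERE email = ?"
    args = ("user42@example.com",)
    rows, record = find_user(conn, args[0])
    print(record["function"], record["line"], f'{record["ms"]:.3f} ms')
    print("before index:", explain(conn, query, args))
    conn.execute("CREATE INDEX idx_users_email ON users (email)")
    print("after index: ", explain(conn, query, args))
```

Comparing the plan before and after creating the index is the point of the exercise: the first plan reports a scan of the table, the second a search that uses the index.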

The Scripting Mess

As we dug deeper, it became clear that a lot of our automation scripts needed some TLC. We had a mix of shell scripts and Python scripts running cron jobs, but they were all over the place in terms of reliability and performance. Some of them were written by interns who had since graduated or moved on to other companies. They left behind a trail of undocumented hacks and spaghetti code.

I took the opportunity to clean things up. I wrote bash scripts that used sed and awk to parse log files, and refactored the Python scripts into smaller, more maintainable modules. It was hard work, but the site ran more smoothly afterward, and we had a clearer picture of where to focus our optimization efforts.
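As an illustration of what "modular" means for a cron-driven script, here is a small Python sketch, not one of our actual jobs. It splits a log report into parse, summarize, and format steps, and has main return an exit code so cron can tell success from failure. The script name status_report.py and all function names are made up, and it assumes the status code is the ninth whitespace-separated field, as in the Apache common and combined log formats.

```python
import sys
from collections import Counter


def parse_status(line):
    """Return the HTTP status code from one access-log line, or None if malformed."""
    fields = line.split()
    # Ninth whitespace-separated field in the common/combined log format.
    if len(fields) >= 9 and fields[8].isdigit():
        return int(fields[8])
    return None


def summarize(lines):
    """Count how often each status code appears, ignoring malformed lines."""
    counts = Counter()
    for line in lines:
        status = parse_status(line)
        if status is not None:
            counts[status] += 1
    return counts


def format_report(counts):
    """Render one 'status count' pair per line, sorted by status code."""
    return "\n".join(f"{status} {count}" for status, count in sorted(counts.items()))


def main(argv):
    """Entry point: return 0 on success, 1 on read errors, 2 on bad usage."""
    if len(argv) != 2:
        print("usage: status_report.py ACCESS_LOG", file=sys.stderr)
        return 2
    try:
        with open(argv[1], encoding="utf-8", errors="replace") as handle:
            counts = summarize(handle)
    except OSError as exc:
        print(f"cannot read {argv[1]}: {exc}", file=sys.stderr)
        return 1
    print(format_report(counts))
    return 0

# A script run from cron would end with: sys.exit(main(sys.argv))
```

Each step can be tested on its own, which is most of what the refactoring bought us. The rough awk counterpart of the whole thing is awk '{print $9}' access.log | sort | uniq -c.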

Scaling Up

With the database queries under control, I turned my attention to scaling the application itself. We decided to use Xen for virtualization, which allowed us to spin up more instances if needed. However, setting this up required careful planning to ensure that we didn’t overload our network or cause any downtime during migration.

We set up a staging environment where changes could be tested before going live, and gradually moved some of the production load onto the new Xen instances. The process was iterative, but the site’s performance improved significantly as a result.

Lessons Learned

This month taught me that while the LAMP stack was robust, it wasn’t without its pitfalls. Debugging complex issues often required a combination of technical knowledge and patience. Scaling our application meant not only dealing with database queries but also improving our infrastructure management practices.

The sysadmin role continues to evolve, moving away from simple script writing toward more strategic thinking about architecture and automation. I’m excited to see where this journey takes us in the next few years, especially as virtualization tools like Xen take on a bigger role in our toolchain. Docker and Kubernetes, which later reshaped this kind of work, were still years away.

For now, it’s back to debugging and optimizing—another day on the LAMP stack!