$ cat post/the-monolith-ran-/-the-secret-was-in-the-env-/-the-daemon-still-hums.md

the monolith ran / the secret was in the env / the daemon still hums

Title: February 23, 2015 - Kubernetes Is a Noisy Neighbor

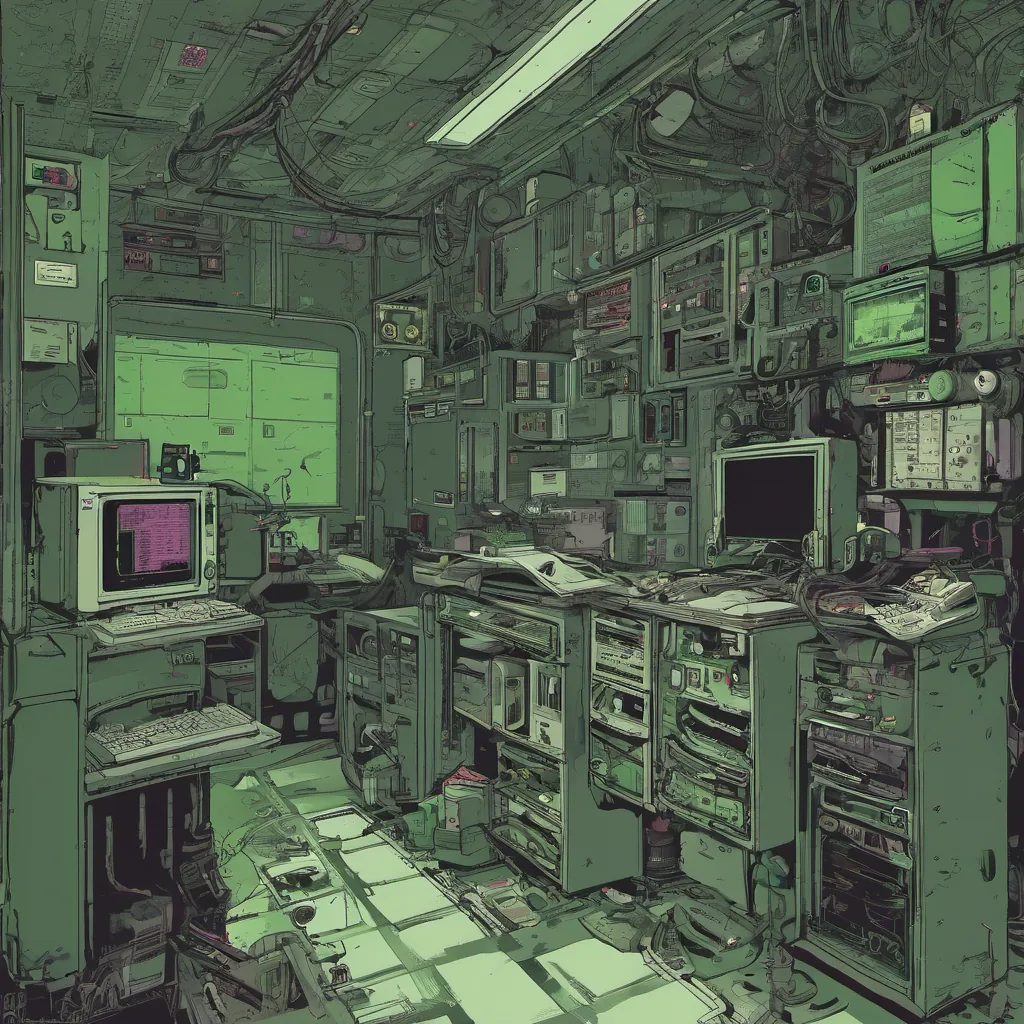

February 23rd, 2015. I sat in my office staring at a cluster of servers and the few dozen pods running on them, trying to figure out why our application kept crashing. On paper the setup was simple: a new deployment of Docker containers managed by Kubernetes. In practice, something wasn't working right.

A Brief Background

Docker had only reached 1.0 the previous June, and Kubernetes itself was still months away from its own 1.0 release. Microservices were still finding their footing. Many of us were convinced containerization was the future, but the best practices for running containers in production weren't well established yet. At my company, we were dipping our toes into this new world, using Kubernetes as the orchestrator.

The Problem

The application I was working on handled a lot of user data, so reliability and performance were critical. We decided to move our monolithic app to microservices with Docker containers, and Kubernetes seemed like the perfect tool for the job. However, things didn’t go exactly as planned.

When we deployed the new service, it went up and down like a yo-yo. Some pods would start fine, but others would crash almost immediately. The logs were cryptic, filled with errors that didn’t give us any clear clues about what was going wrong. I spent hours staring at the screen, trying to figure out if this was an issue with Kubernetes or our application.

The Investigation

I started with the logs, looking for obvious problems such as containers hitting their resource limits. Nothing jumped out at me. Then I took a closer look at how resources were actually being allocated within the pods themselves, and that's when it clicked: the cgroups (control groups) enforcing those allocations weren't behaving the way we had assumed.

The Real Culprit

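Kubernetes relies on cgroups, configured through the container runtime, to limit the CPU and memory each container can use. We had several containers running in the same pod, and the limits were not being applied the way we expected. We had pictured CPU and memory as a budget for the pod that would be split evenly among its containers. That is not how it works. Requests and limits belong to individual containers, and a pod's total is just the sum of what its containers declare. A container with no limit of its own can take whatever the node has free.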
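A minimal sketch of that per-container model follows. It represents a pod spec as a plain Python dict whose field names mirror the Kubernetes pod spec (`containers`, `resources`, `limits`). The container names and numbers are hypothetical. CPU is given in millicores and memory in MiB so the sketch can skip quantity parsing. Each container is capped only by its own limit. The pod-level figure is simply the sum of those limits, and it is undefined as soon as any container is unbounded.

```python
from typing import Dict, Optional

# Hypothetical pod: only "api" declares limits (CPU in millicores, memory in MiB).
pod_spec = {
    "containers": [
        {"name": "api", "resources": {"limits": {"cpu": 1000, "memory": 512}}},
        {"name": "worker", "resources": {}},
        {"name": "cache", "resources": {}},
    ]
}


def container_limit(container: dict, resource: str) -> Optional[int]:
    """The container's own limit, or None if it declared none (unbounded)."""
    return container.get("resources", {}).get("limits", {}).get(resource)


def effective_limits(pod: dict, resource: str) -> Dict[str, Optional[int]]:
    """Each container is capped only by its own limit; nothing divides a pod budget."""
    return {c["name"]: container_limit(c, resource) for c in pod["containers"]}


def pod_total(pod: dict, resource: str) -> Optional[int]:
    """Pod-level limit = sum of container limits, or None if any container is unbounded."""
    limits = list(effective_limits(pod, resource).values())
    if any(v is None for v in limits):
        return None
    return sum(limits)


print(effective_limits(pod_spec, "cpu"))  # {'api': 1000, 'worker': None, 'cache': None}
print(pod_total(pod_spec, "memory"))      # None
```

Under this model, "worker" and "cache" have no ceiling at all. They are not each handed a third of some pod budget.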

The result was one container hogging CPU and memory while the others starved. Services that were supposed to run side by side were either throttled to a crawl or crashed outright for lack of resources. Our understanding of cgroups, and of how Kubernetes used them, had not been as complete as we thought.
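The next sketch shows why one container can win so decisively. It is a simplified model of CPU contention, not the kernel's actual CFS scheduler. Under contention, cgroup `cpu.shares` divide CPU time in proportion to each group's shares, and any time a satisfied container leaves unused is handed to the others. The request-to-shares conversion (1000m maps to 1024 shares, with a floor of 2) mirrors kubelet's conversion as we understand it. The container names, demands, and 2-CPU node are hypothetical.

```python
from typing import Dict


def milli_cpu_to_shares(milli_cpu: int) -> int:
    """CPU request in millicores -> cgroup cpu.shares (1000m -> 1024, floor of 2)."""
    return max(2, milli_cpu * 1024 // 1000)


def share_cpu(capacity: float, demand: Dict[str, float],
              shares: Dict[str, int]) -> Dict[str, float]:
    """Water-filling approximation of proportional, work-conserving CPU sharing."""
    alloc = {name: 0.0 for name in demand}
    active = {name for name, d in demand.items() if d > 0}
    remaining = capacity
    while active and remaining > 1e-9:
        total = sum(shares[n] for n in active)
        # Offer what is left in proportion to each active container's shares.
        offer = {n: remaining * shares[n] / total for n in active}
        satisfied = {n for n in active if demand[n] - alloc[n] <= offer[n]}
        if not satisfied:
            # Everyone still wants more than their slice: hand out the slices and stop.
            for n in active:
                alloc[n] += offer[n]
            break
        # Containers needing less than their slice take only what they need;
        # the leftover is redistributed among the rest on the next pass.
        for n in satisfied:
            remaining -= demand[n] - alloc[n]
            alloc[n] = demand[n]
        active -= satisfied
    return alloc


# Hypothetical demand, in CPUs each container would use if it could.
demand = {"api": 4.0, "worker": 1.0, "cache": 0.5}

# Only "api" declared a CPU request; the others fall back to the 2-share minimum.
no_requests = {"api": milli_cpu_to_shares(1000), "worker": milli_cpu_to_shares(0),
               "cache": milli_cpu_to_shares(0)}
# Every container declares an explicit request.
explicit = {"api": milli_cpu_to_shares(1000), "worker": milli_cpu_to_shares(500),
            "cache": milli_cpu_to_shares(250)}

print({n: round(c, 3) for n, c in share_cpu(2.0, demand, no_requests).items()})
print({n: round(c, 3) for n, c in share_cpu(2.0, demand, explicit).items()})
```

With no requests declared, the one container that asked for CPU takes almost the entire node. With explicit requests, each container receives at least what it asked for. Memory does not behave this way, because it is not divided in proportion to anything. A container that exceeds its memory limit, or a node that runs out of memory, ends in an OOM kill. That is one plausible route to the outright crashes.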

Solution

To fix this, we declared explicit requests and limits for every container in the pod. That gave each container a predictable share of CPU and memory instead of leaving it to compete for whatever was left over.
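A small pre-deploy check in this spirit might look like the sketch below. It is hypothetical throughout. The pod is a plain dict mirroring the Kubernetes pod spec, the container names and values are invented, and `parse_cpu`/`parse_memory` are illustrative helpers. They handle only a subset of Kubernetes quantity syntax: millicores such as `500m`, whole or fractional CPUs, and the `Ki`/`Mi`/`Gi` memory suffixes.

```python
from typing import Callable, Dict, List

_BINARY_SUFFIXES = {"Ki": 1024, "Mi": 1024 ** 2, "Gi": 1024 ** 3}


def parse_cpu(quantity: str) -> int:
    """'500m' -> 500 millicores; '2' -> 2000; '0.5' -> 500."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def parse_memory(quantity: str) -> int:
    """'256Mi' -> bytes; plain integers are taken as bytes."""
    for suffix, factor in _BINARY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)


PARSERS: Dict[str, Callable[[str], int]] = {"cpu": parse_cpu, "memory": parse_memory}


def check_pod(pod: dict) -> List[str]:
    """Flag containers with undeclared CPU/memory requests or limits, or request > limit."""
    problems = []
    for container in pod.get("containers", []):
        name = container["name"]
        resources = container.get("resources", {})
        requests = resources.get("requests", {})
        limits = resources.get("limits", {})
        for resource, parse in PARSERS.items():
            if resource not in requests:
                problems.append(f"{name}: missing requests.{resource}")
            if resource not in limits:
                problems.append(f"{name}: missing limits.{resource}")
            if (resource in requests and resource in limits
                    and parse(requests[resource]) > parse(limits[resource])):
                problems.append(f"{name}: requests.{resource} exceeds limits.{resource}")
    return problems


pod = {
    "containers": [
        {"name": "api", "resources": {
            "requests": {"cpu": "500m", "memory": "256Mi"},
            "limits": {"cpu": "1", "memory": "512Mi"}}},
        {"name": "worker", "resources": {
            "requests": {"cpu": "250m"},
            "limits": {"cpu": "200m", "memory": "256Mi"}}},
    ]
}

for problem in check_pod(pod):
    print(problem)
```

This check is deliberately stricter than Kubernetes itself. Kubernetes fills in a missing request from the limit and rejects a request larger than its limit at validation time. Flagging both cases here keeps every container's share spelled out in the spec.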

It was a frustrating debugging session, but it taught me how much it matters to understand both your application and the infrastructure underneath it. I wasn't thrilled with how noisy the pods had been, but the experience solidified my belief in containerization. It also reinforced the need to stay on top of the nuances that come with running it.

Looking Back

In retrospect, this was a pivotal moment as we transitioned from monolithic applications to microservices. It highlighted both the benefits and challenges of using Kubernetes for deployment. As I write this, Kubernetes has matured significantly, but the lessons learned then are still relevant today—understanding the details of your infrastructure can make all the difference.

February 23rd, 2015, was just another day in tech, filled with debugging sessions and learning experiences. But it’s days like these that remind us why we love what we do—the thrill of solving problems and improving our systems one container at a time.