$ cat post/cold-bare-metal-hum-/-a-crontab-from-two-thousand-two-/-the-pod-restarted.md

cold bare metal hum / a crontab from two thousand two / the pod restarted

Title: Debugging Heroku’s PostgreSQL Hangs on February 23, 2009

On this day in 2009, I was part of a small team at Heroku responsible for the stability and performance of our PostgreSQL database service. The tech world was still buzzing about GitHub, cloud computing taking off on AWS, and the iPhone SDK. Meanwhile, we were knee-deep in real ops work.

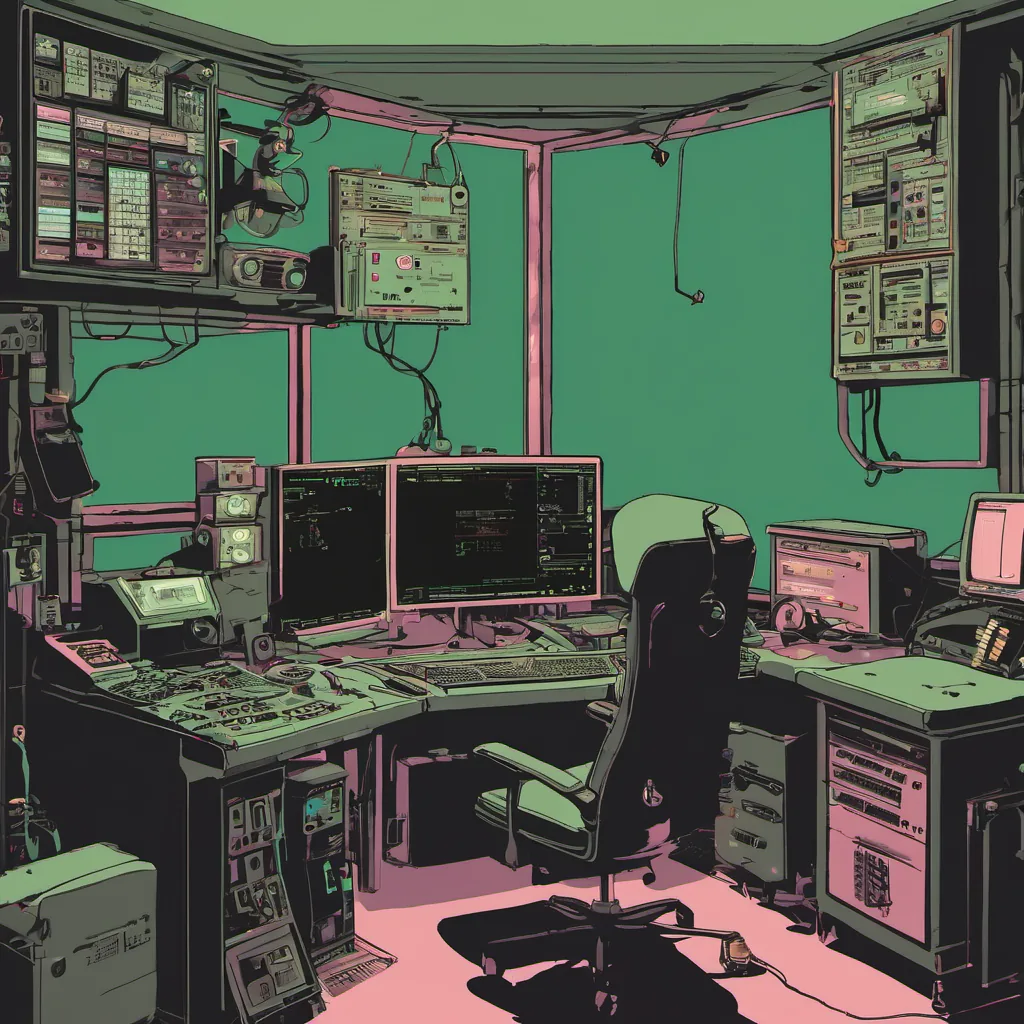

We had just received reports that some customers’ databases would hang indefinitely on simple queries. It was a Monday afternoon, and our stress levels were already high because the economic crash was hitting tech hiring hard. As I sat down at my desk to debug the issue, I couldn’t shake the feeling that this was going to be a long week.

The first thing we did was set up a reproducible test case using one of the affected customers’ databases. After some trial and error, we managed to reproduce the exact same hang in our staging environment. We then started digging through logs and code, looking for patterns or anomalies.

One of my colleagues suggested we use strace to trace the system calls PostgreSQL was making during the hang. I was skeptical but willing to try anything that might give us some insight. After a few hours, something finally caught my eye: repeated calls to write(2) and read(2). It looked like a bottleneck in our file descriptor handling.
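For readers who want to try this kind of triage, here is a minimal Python sketch that tallies syscall names from saved strace output (captured, for example, with `strace -f -p <pid> -o trace.txt`). It is not the tooling we used at the time. The `count_syscalls` helper and the sample lines are invented for illustration, and the regex only assumes the common strace line shapes: an optional pid prefix, an optional timestamp, then `name(`.

```python
import re
from collections import Counter

# Matches the syscall name at the start of a strace line. Allows an optional
# pid prefix ("[pid 4242] " or a bare "4242 ") and an optional -t/-tt style
# timestamp. This is an assumption about typical strace output, not a full parser.
SYSCALL_RE = re.compile(
    r"^(?:\[pid\s+\d+\]\s+|\d+\s+)?"           # optional pid prefix
    r"(?:\d{2}:\d{2}:\d{2}(?:\.\d+)?\s+)?"     # optional timestamp
    r"(\w+)\("                                  # syscall name
)


def count_syscalls(lines):
    """Return a Counter of syscall names found in an iterable of strace lines."""
    counts = Counter()
    for line in lines:
        match = SYSCALL_RE.match(line)
        if match:  # signal and exit lines such as "--- SIGALRM ... ---" are skipped
            counts[match.group(1)] += 1
    return counts


# Hypothetical sample lines, NOT taken from the original incident.
SAMPLE_TRACE = [
    'read(9, 0x7ffc0000, 8192) = -1 EAGAIN (Resource temporarily unavailable)',
    'write(9, "T", 1) = 1',
    '[pid 4242] read(9, 0x7ffc0000, 8192) = -1 EAGAIN (Resource temporarily unavailable)',
    '4242 12:00:01.000123 read(9, 0x7ffc0000, 8192) = 0',
    '12:00:02 poll([{fd=9, events=POLLIN}], 1, 1000) = 0 (Timeout)',
    '--- SIGALRM {si_signo=SIGALRM, si_code=SI_KERNEL} ---',
]

if __name__ == "__main__":
    for name, count in count_syscalls(SAMPLE_TRACE).most_common():
        print(f"{name:10s} {count}")
```

A lopsided tally (one or two calls dominating the trace) is only a hint about where to look next. It does not prove where the bottleneck is.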

We decided to add more logging around those areas of code to get a better picture of what was happening. As we poked at the logs, it became clear that the issue wasn’t with PostgreSQL itself but rather with how Heroku’s infrastructure was interacting with the database. Specifically, we were hitting limits on our socket buffers and I/O handling.

To address this, we had to change both our infrastructure and the way customers were using PostgreSQL. We increased the buffer sizes and optimized the way data was written back to the filesystem. These tweaks required a careful balance between performance and stability, since any change could introduce new issues.
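To make "increasing the buffer sizes" concrete, here is a small Python sketch of one way to inspect and request larger socket buffers through the standard `SO_SNDBUF` and `SO_RCVBUF` options. It is an assumption that per-socket options are the right illustration: the actual change may well have been made at the kernel or configuration level. The `describe_buffers` and `request_buffers` helpers and the 256 KiB figure are hypothetical.

```python
import socket


def describe_buffers(sock):
    """Return the kernel's current send and receive buffer sizes for a socket."""
    return {
        "send": sock.getsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF),
        "recv": sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF),
    }


def request_buffers(sock, size):
    """Ask the kernel for buffers of `size` bytes and return what it granted.

    The kernel treats the value as a request: it may clamp it to a
    system-wide maximum, and Linux reports back double the requested size
    to account for bookkeeping overhead.
    """
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF, size)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, size)
    return describe_buffers(sock)


if __name__ == "__main__":
    # An unconnected TCP socket is enough to inspect the defaults.
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        print("before:", describe_buffers(sock))
        print("after: ", request_buffers(sock, 256 * 1024))  # hypothetical size
```

Reading the values back after setting them matters, because the granted size is often not the requested one.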

After several hours of tweaking and testing, we finally had a fix that seemed to work consistently. We deployed it to production and monitored the logs closely over the next few days. The initial results were promising, with no more reports of hangs.

Reflecting on this experience, I realized how much you can learn from real-world debugging sessions like these. It was a reminder that even when things seem stable, there’s always room for improvement. And while it might not sound exciting compared to the latest tech news, solving day-to-day operational issues is what makes our services run smoothly.

Looking back, this episode also highlighted the importance of having robust testing and monitoring in place. Without those tools, the fix would have taken much longer or, even worse, could have introduced new problems down the line.

So here’s to another successful (if slightly stressful) day in tech ops! Who knew debugging database hangs would be such an adventure?

That’s how I spent February 23, 2009. Not exactly glamorous, but definitely fulfilling.