$ cat post/container-wars:-a-docker-developer's-perspective.md

Container Wars: A Docker Developer's Perspective

September 21, 2015 was just another day in the life of a tech developer until I saw that headline. “Amazon Web Services (AWS) in Plain English” had rocketed to the top of Hacker News with over 1,400 points. AWS! The elephant in the cloud hosting space, and suddenly it’s front-page news? Was there something new?

Turns out, Docker was starting to gain some serious traction. For a while now, I’d been skeptical about containers. After all, weren’t virtual machines (VMs) supposed to be the answer, with their stronger isolation and better resource management? But with the rise of microservices and DevOps, containerization seemed like a natural fit for modern app development.

I was working on an internal project that needed to scale quickly. The team had decided to go with Docker because it promised faster development cycles and easier deployment. We’d been using VMs up until this point, but lightweight containers and the ability to run multiple apps on one machine made us feel like we were at the forefront of something big.

The first few days were smooth sailing—Docker seemed magical as we spun up new environments quickly. But soon enough, reality set in. The application started acting strange, and I found myself debugging what felt like a million moving parts. It was as if Docker had opened Pandora’s box. Every time I thought I understood how it worked, something would break.

One day, the logs from our container were just gibberish. We were using a custom image that we had built, and now it was crashing on every request. After hours of digging through code and configs, I realized the issue wasn’t in my app’s logic at all; it was in how we ran it under Docker. Some environment variables the app relied on weren’t being set inside the container, so the app was misinterpreting some of its data.
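A fail-fast check at startup is one way to catch this kind of problem before it turns into gibberish logs. The following is a minimal Python sketch, not the project's actual code: `load_required_env`, `MissingEnvError`, and the variable names in the usage note are hypothetical, and it assumes that an empty value should be treated the same as a missing one.

```python
import os


class MissingEnvError(RuntimeError):
    """Raised when a required environment variable is unset or empty."""


def load_required_env(names, environ=None):
    """Return a dict of the requested variables, or raise if any is missing.

    `environ` defaults to os.environ; passing a plain dict makes the
    function easy to exercise without touching the real environment.
    """
    env = os.environ if environ is None else environ
    # Treat empty strings as missing (an assumption of this sketch).
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise MissingEnvError(
            "missing environment variables: " + ", ".join(sorted(missing))
        )
    return {name: env[name] for name in names}
```

Calling something like `load_required_env(["APP_DATABASE_URL", "APP_LOCALE"])` as the first step of startup makes the container exit with a readable error instead of limping along on bad defaults. The values themselves can be supplied with `docker run -e NAME=value` or `docker run --env-file`.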

This led us into an interesting discussion about best practices with Docker. Should we be using Docker Compose for managing dependencies? How do we deal with volume mounts and ensure consistent environments across machines? The SRE book, Google’s recent Kubernetes 1.0 release, and the rise of Mesos/Marathon all added to the confusion.

We ended up deciding on a hybrid approach: use Docker as our packaging solution but stick with VMs for deployment. It wasn’t the most elegant solution, but it worked. We stopped arguing about whether Docker was the future and started thinking about how we could make it work in our environment.

Meanwhile, Node v4.0.0 had just hit the scene, adding a lot of exciting new features that made me wonder why I hadn’t switched to JavaScript earlier. I mean, who knew asynchronous programming would become this big thing? The idea of React Native also intrigued me—maybe we could use it for some future mobile projects.

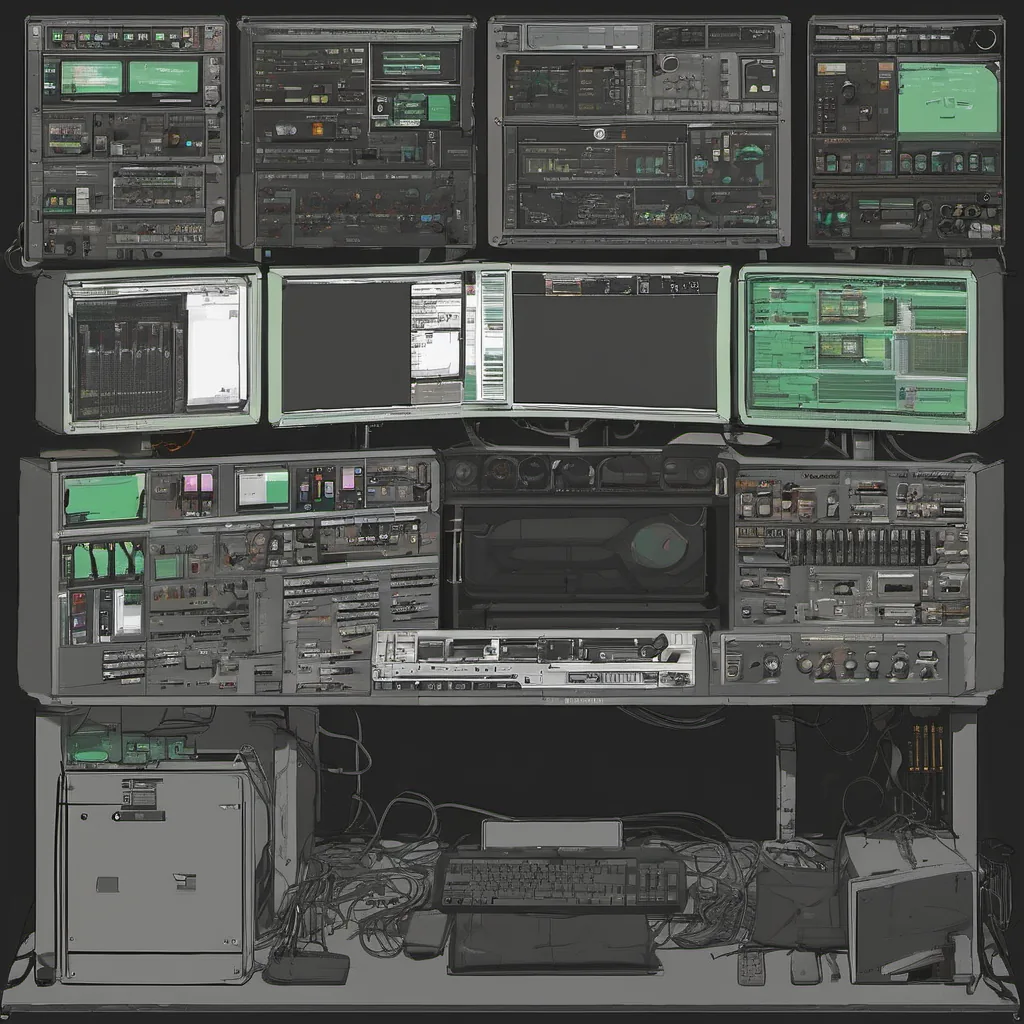

But back to Docker—it’s still around, and it’s not going away anytime soon. Whether you love or hate containers, they’re here to stay, and so are the debates surrounding them. As I type this, I’m sitting in front of a machine running several Docker containers—some for development, others for testing—and thinking about how far we’ve come since those early days.

In the end, it’s not about which technology is better; it’s about finding what works best for your team and your projects. Whether I’m using VMs or containers, at least now I know to expect a few debugging sessions in my future.

That’s where I was back then, mired in Docker hell but excited about the possibilities. Containers were just one piece of the puzzle in our ongoing quest to build better software.