
a segfault at three / a rollback took the data too / the deploy receipt

Title: May 21, 2012 – A Day in the Life of an Ops Guy

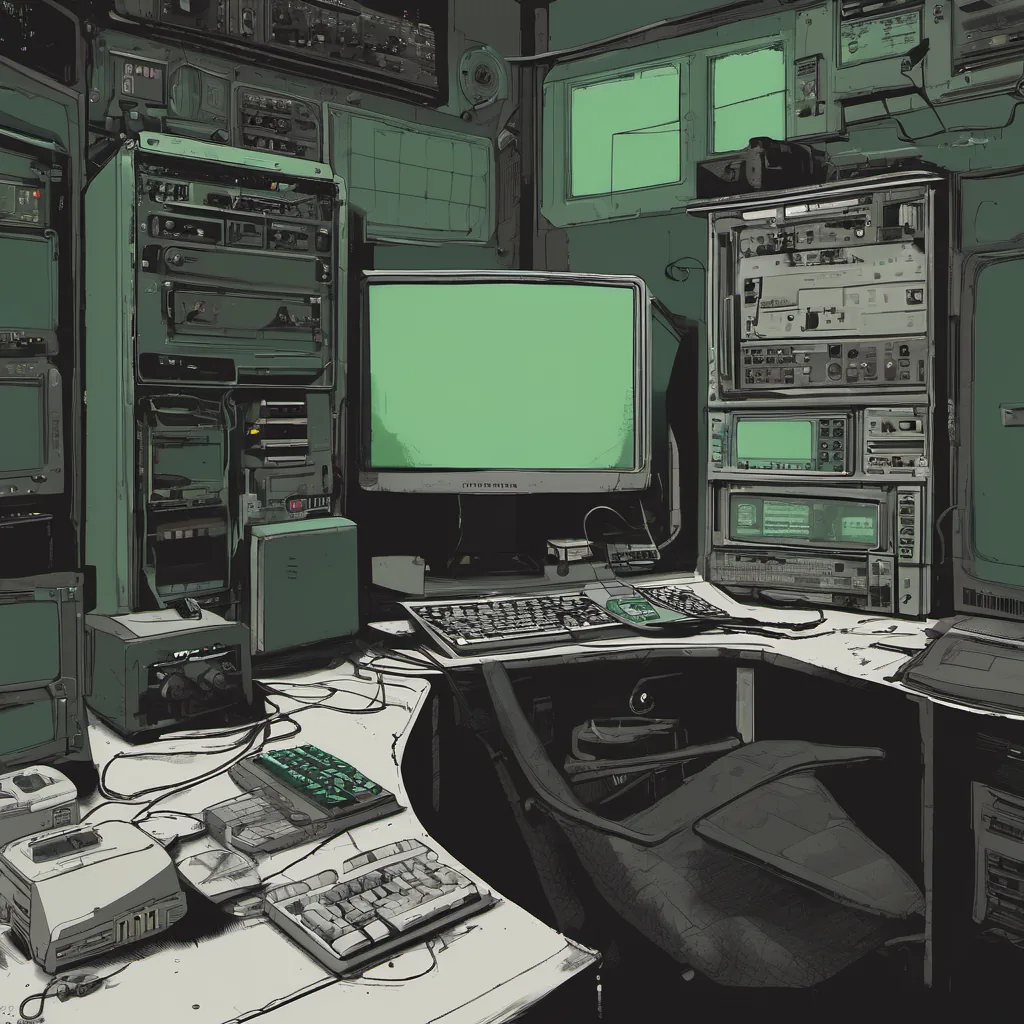

May 21, 2012 was another Monday with a normal start. The sun was barely peeking through my window at 6 AM, but that's when I had to be up. We didn't have fancy espresso machines back then, just a trusty Keurig and a box of Folgers. The day started off like any other: make some coffee, read the Hacker News headlines, and dive into my inbox.

The Morning

Hacker News was buzzing with tech news. Plagiarism cases were heating up, and Oracle v. Google was still making waves. But none of that mattered once I got a call at 7:30 AM: one of our apps was flaking out in production. The logs showed nothing unusual, but something just wasn't right.

I hopped onto my laptop and started digging through our monitoring tools: New Relic for application performance, Logstash + Graylog for log analysis, and Nagios for infrastructure alerts. Our stack was Rails running on top of a custom-built server framework that I had helped build back in 2010.

The Debugging Marathon

After an hour of poking around, I noticed something strange: our application was timing out on certain pages, but only during peak hours. This wasn't typical for us. Usually our caching and load balancers absorbed spikes smoothly. This time, the issue was sneakier.

I enabled debugging on the server side and deployed a new version with more verbose logging. The logs started filling up as users hit our site around lunchtime. Around 12 PM, I noticed something peculiar: intermittent network timeouts were coming from specific IP addresses. It looked like some connections were being dropped somewhere between those clients and our servers.
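The pattern that stood out ("specific IPs, only at peak hours") is the kind of thing a quick tally over verbose logs surfaces. Here is a minimal Python sketch of that tally. The log line format, the regex, and the function name `timeouts_by_ip_and_hour` are hypothetical, assumed purely for illustration; they are not our actual Rails log format.

```python
import re
from collections import Counter
from typing import Iterable

# Hypothetical log format, assumed for illustration only:
#   2012-05-21T12:03:44 client=203.0.113.7 status=timeout path=/reports
LINE_RE = re.compile(
    r"^(?P<date>\d{4}-\d{2}-\d{2})T(?P<hour>\d{2}):\d{2}:\d{2}\s+"
    r"client=(?P<ip>\S+)\s+status=(?P<status>\S+)"
)


def timeouts_by_ip_and_hour(lines: Iterable[str]) -> Counter:
    """Count timeout entries per (client IP, hour of day)."""
    counts: Counter = Counter()
    for line in lines:
        m = LINE_RE.match(line)
        if m is None:
            continue  # skip lines that do not match the assumed format
        if m.group("status") == "timeout":
            counts[(m.group("ip"), int(m.group("hour")))] += 1
    return counts


if __name__ == "__main__":
    # Made-up sample lines using documentation IP ranges.
    sample = [
        "2012-05-21T09:15:02 client=198.51.100.4 status=ok path=/",
        "2012-05-21T12:03:44 client=203.0.113.7 status=timeout path=/reports",
        "2012-05-21T12:04:10 client=203.0.113.7 status=timeout path=/reports",
        "2012-05-21T12:05:31 client=198.51.100.4 status=ok path=/reports",
        "2012-05-21T12:06:55 client=203.0.113.9 status=timeout path=/search",
    ]
    for (ip, hour), n in timeouts_by_ip_and_hour(sample).most_common():
        print(f"{ip} hour={hour:02d} timeouts={n}")
```

Grouping by (IP, hour) rather than by IP alone is what makes a problem that only shows up at peak hours stand out from background noise.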

The Search for the Root Cause

I jumped into our network monitoring tool, PRTG (Paessler Router Traffic Grapher), which gave me an overview of our network performance. I saw a spike in latency from one of the upstream servers. It seemed like something was going on with their network infrastructure.
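A latency spike like that is obvious on a graph, and the underlying idea fits in a few lines: compare each sample against a baseline. The Python sketch below is not PRTG's own alerting logic; the function `latency_spikes`, the 3x factor, and the sample round-trip times are all assumptions made up for illustration.

```python
from statistics import median
from typing import List, Sequence, Tuple


def latency_spikes(
    samples_ms: Sequence[float], factor: float = 3.0
) -> List[Tuple[int, float]]:
    """Return (index, value) for samples above `factor` times the median latency."""
    if not samples_ms:
        return []
    # Median as the baseline: a few large spikes barely move it.
    baseline = median(samples_ms)
    threshold = baseline * factor
    return [(i, v) for i, v in enumerate(samples_ms) if v > threshold]


if __name__ == "__main__":
    # Made-up round-trip times in milliseconds for one upstream hop.
    rtts = [21.0, 19.5, 22.3, 20.1, 95.4, 130.2, 20.8, 18.9]
    print(latency_spikes(rtts))  # [(4, 95.4), (5, 130.2)]
```

Using the median instead of the mean is the key design choice here: with a mean baseline, the spikes themselves inflate the threshold and can hide each other.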

I reached out to our network guy, Sam, who was on call that day. He had been dealing with bandwidth issues for the past couple of weeks because of a new project we were running. But this wasn't a standard load increase; it looked like there might be misconfigured routers or switches somewhere along the upstream path.

The Fix

After more digging and some coordination with the provider, we found that one of our upstream servers had a misconfiguration that was causing packet loss during peak hours. Once the configuration was fixed, our application's performance improved immediately. The timeouts disappeared, and everything worked as expected.

This experience highlighted how critical it is to have good network monitoring tools and well-defined upstream relationships. We learned to be more proactive about coordinating with our external providers and setting up better communication channels.

Lessons Learned

Debugging production issues can be frustrating, but it's also rewarding. It's easy to get stuck in the weeds, and stepping back to look at the bigger picture often shows you what's really going on. In this case, it wasn't an app bug or an infrastructure failure on our side: it was a misconfiguration at our upstream provider.

It also reinforced the importance of having robust monitoring and logging practices in place. Without them, we would have been left scratching our heads for much longer.

The Day’s Wrap-Up

As I closed out my day with a cup of cold coffee (it's what it takes to make it through these long days), I couldn't help but think about how quickly the tech world was moving. DevOps practices were gaining traction, and tools like Chef and Puppet were dominating the config management wars. Heroku, which Salesforce had acquired in late 2010, was a sign of the shifting cloud landscape.

But for now, my job was to keep our systems running smoothly, and that meant being prepared for the unexpected. And that’s what I did today.

That’s how a typical day went down on May 21, 2012. Hope you found it interesting!