$ cat post/the-pager-went-off-/-i-rm-minus-rf-once-/-i-saved-the-core-dump.md

the pager went off / I rm minus rf once / I saved the core dump

Title: Managing AI Context in a Post-Hype World

April 21, 2025. It’s been a while since I last wrote about the ops world, and much has changed since then, especially in how we handle AI context across our platforms. Let me take you through a day in my life and some of the challenges we faced this month.

The Early Morning

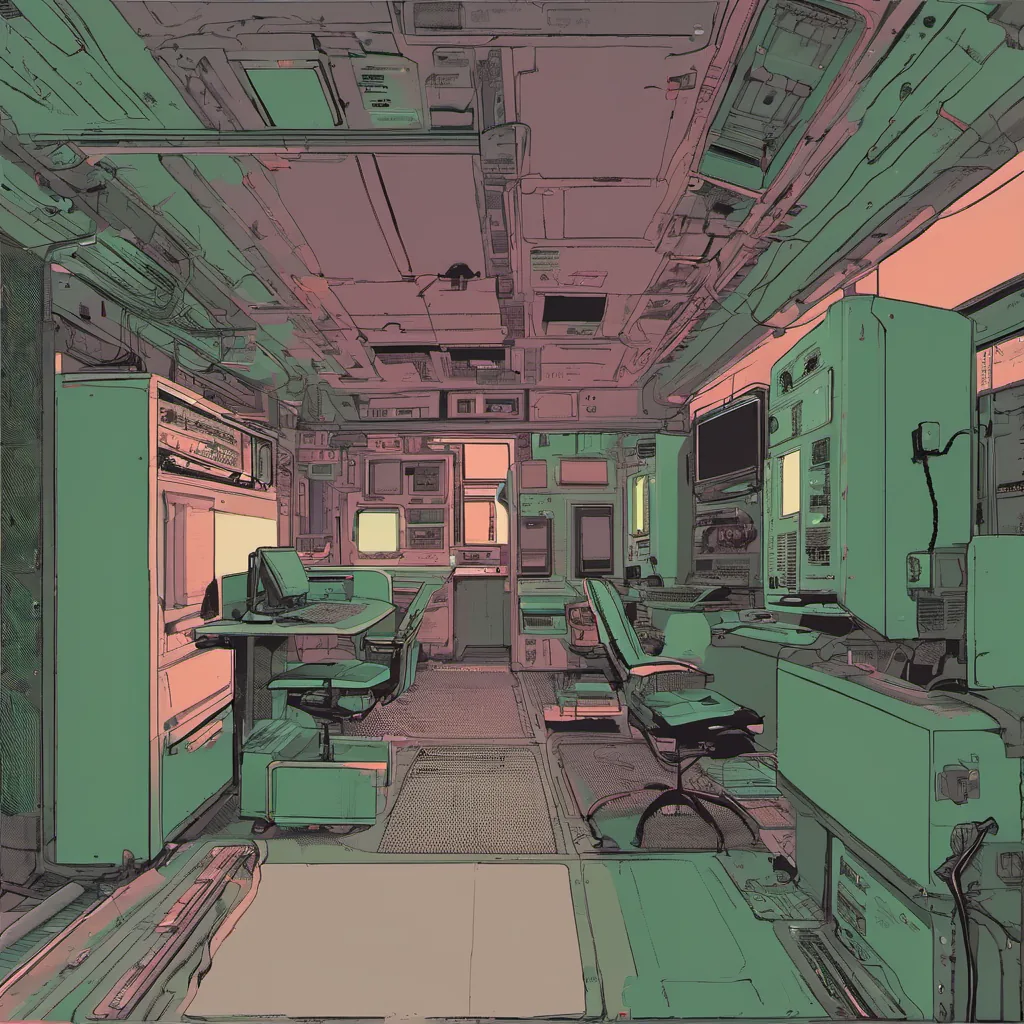

The alarm went off earlier than usual today, while it’s still dark outside. I guess the era of remote work has blurred the line between wake-up time and the start of my workday. As I sip a cold cup of coffee, I realize that the first task at hand is debugging an issue with our AI copilot. It’s not just any copilot; this one is integrated into our infrastructure layer, helping us manage complex service dependencies.

The Copilot Blues

The copilot has been working wonders in production for months now, but today it flagged a warning that something might be off. I open the dashboard and see that it predicts a potential outage in one of our key services. After reviewing the logs, I notice some strange patterns: more noise than usual. This isn’t just an occasional hiccup; it looks like a deeper issue that could affect user experience.

Digging into the Problem

I start by looking at the eBPF tracing data we’ve been collecting for months. eBPF has proven to be a game-changer, letting us monitor and debug system behavior in near real time with low overhead. As I sift through the traces, I realize that the copilot’s predictions are being skewed by some recent changes to our service load-balancing algorithm.

A Nasty Surprise

Just as I’m about to wrap up my analysis, a colleague joins me over video from her home office. She brings an update: “Hey, we just got a call from security. They found out that the copilot is logging user data without proper consent.” This is a serious breach of trust and of our privacy policies. The copilot was supposed to assist with ops; it was never meant to log personal information.

A Teachable Moment

After discussing it with the team, we realize that while eBPF has been incredibly useful for monitoring, its integration with the copilot raises security concerns. We need to ensure that any data logged by these tools is strictly controlled and complies with our policies. This incident highlights a critical aspect of managing AI context: it’s not just about the tools but also about the data they handle.

Moving Forward

We decide to implement stricter data handling protocols for all AI-assisted tools. We’ll start with auditing existing integrations, ensuring that no unauthorized data is being logged. Additionally, we plan to conduct regular training sessions on security best practices for our engineers who work closely with these systems.
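To make the audit idea concrete, here is a minimal sketch in Python of what a first pass could look like. It is not our actual tooling: the regex patterns, the `redact` and `audit_lines` helpers, and the `RedactingFilter` class are all hypothetical names I made up for illustration, and a real audit would rely on the organization’s own definition of personal data rather than two regexes.

```python
import logging
import re

# Hypothetical patterns for illustration only; real PII rules would be broader.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def redact(text: str) -> str:
    """Replace email addresses and IPv4 addresses with placeholders."""
    text = EMAIL_RE.sub("[REDACTED_EMAIL]", text)
    return IPV4_RE.sub("[REDACTED_IP]", text)


def audit_lines(lines):
    """Return 1-based numbers of lines that contain something redact() would change."""
    return [i for i, line in enumerate(lines, start=1) if redact(line) != line]


class RedactingFilter(logging.Filter):
    """Logging filter that scrubs the formatted message before it is emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format the message first so values passed as args are scrubbed too.
        record.msg = redact(record.getMessage())
        record.args = ()
        return True  # keep the record, just with redacted content


if __name__ == "__main__":
    handler = logging.StreamHandler()
    handler.addFilter(RedactingFilter())
    log = logging.getLogger("copilot-audit-demo")  # hypothetical logger name
    log.addHandler(handler)
    log.setLevel(logging.INFO)
    log.info("login by %s from %s", "alice@example.com", "10.0.0.5")
```

The `audit_lines` helper is for scanning logs that already exist, while the filter is for stopping new leaks at the source. Attaching the filter to the handler rather than the logger means it also covers records that propagate up from child loggers.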

The Day’s End

As the sun sets over my city, I take a moment to reflect on today. Debugging issues in AI-assisted tools is no easy feat, especially when they’re deeply integrated into our infrastructure. But what’s clear is that we’re entering an era where managing AI context will be as critical as writing clean code or optimizing performance.

In the post-hype world of 2025, AI isn’t just a buzzword; it’s something we need to manage with care and responsibility. Today was just one small step, but every step counts in building a safer and more reliable tech stack for everyone.

That’s my day in ops. What did you get up to today?