$ cat post/a-segfault-at-three-/-we-ran-it-on-bare-metal-once-/-it-was-in-the-logs.md

a segfault at three / we ran it on bare metal once / it was in the logs

Title: Y2K Echoes: Debugging a Real Linux Kernel Crash

November 20, 2000. The tech world is still reeling from the dot-com bust, but the Y2K bug has left a lasting echo in everyone’s mind. I remember the nights spent poring over code and configuration files, making sure our servers would not fail at midnight on January 1st. It was a tense time, with everyone fearing the worst.

Today, we’re dealing with something entirely different: a bug in our own infrastructure. Specifically, it’s a kernel panic on one of our critical servers running a heavily customized Linux distribution. This is no ordinary bug; it’s the kind that hits when you least expect it, and it’s happening now, of all times.

The server is part of our core storage system, responsible for serving terabytes of data to our users. It’s not just any server; this one handles our most critical transactions. The panic log shows a page fault in kernel space, followed by an undefined instruction exception. To someone who has been through Y2K, messages like these are both comforting and terrifying. At least we know the system is still telling us what’s wrong.

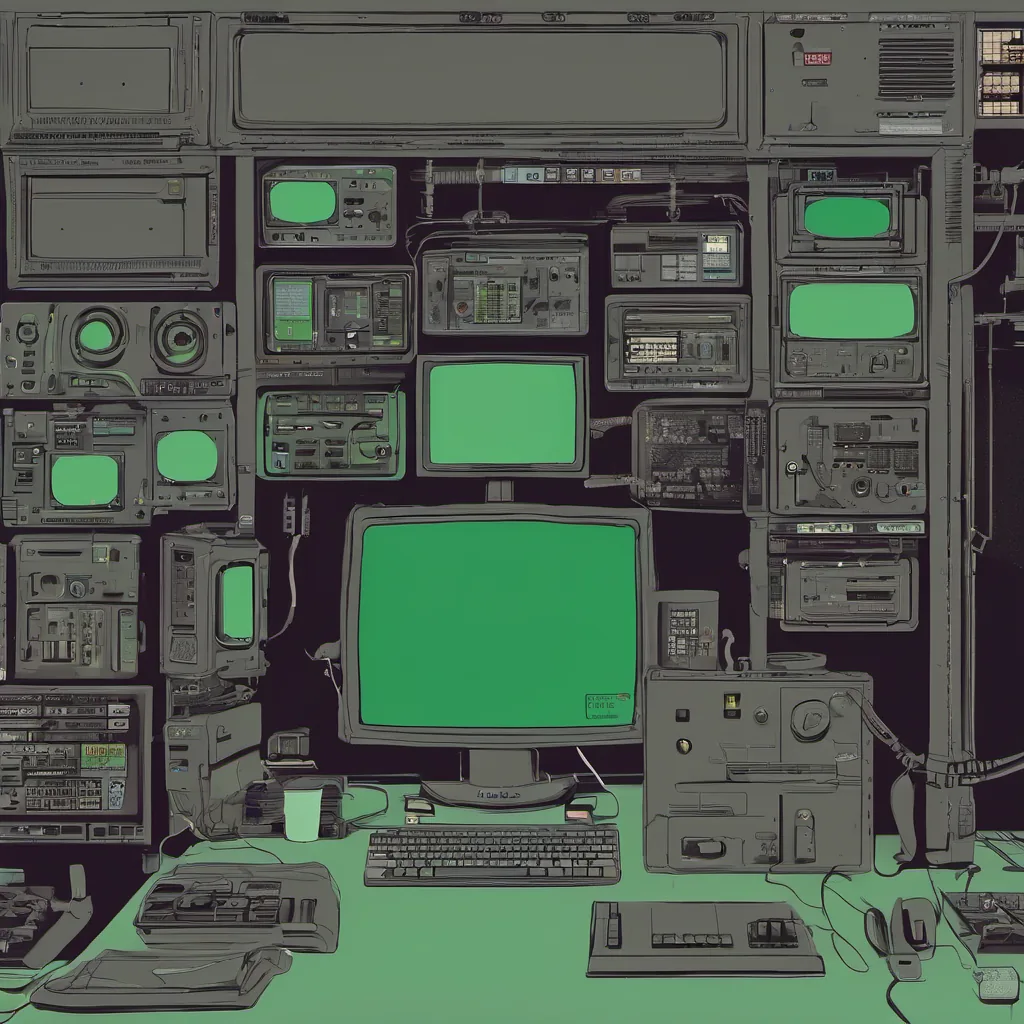

I sit down at my desk with a cup of coffee that went cold hours ago. The log buffer tells me the server crashed while handling an I/O request from one of our applications. The application has been running smoothly for months, but now it’s hitting some kind of kernel bug. I boot into single-user mode and start debugging step by step.

The first thing to do is check the module versions and make sure nothing was built against the wrong kernel. I load all the modules, cross-reference their symbols against the running kernel, and then run dmesg again to see if any new information pops up. Nothing stands out. I move on to the system call trace and the backtrace.
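Turning the raw addresses in a backtrace into function names is mostly a lookup against the kernel's System.map, which is roughly what a tool like ksymoops automates. The sketch below is a minimal illustration in Python, not the procedure used on this server: the sample map, the oops excerpt, and the symbol `cache_writeback` are all made up, and the `[<address>]` bracket format is an assumption about how the trace is printed.

```python
import bisect
import re


def load_system_map(text):
    """Parse System.map-style lines ('c0130000 T sys_read') into sorted (address, name) pairs."""
    symbols = []
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 3:
            symbols.append((int(parts[0], 16), parts[2]))
    symbols.sort()
    return symbols


def resolve(symbols, address):
    """Return 'name+0xoffset' for the nearest symbol at or below address, or None."""
    addresses = [addr for addr, _ in symbols]
    i = bisect.bisect_right(addresses, address) - 1
    if i < 0:
        return None  # address lies below every known symbol
    base, name = symbols[i]
    return f"{name}+{address - base:#x}"


def trace_addresses(oops_text):
    """Extract bracketed hex addresses such as [<c0130112>] from an oops excerpt."""
    return [int(m, 16) for m in re.findall(r"\[<([0-9a-fA-F]+)>\]", oops_text)]


# Illustrative data only: these addresses and names are invented for the example.
SAMPLE_MAP = """\
c0130000 T sys_read
c0130400 T sys_write
c0140000 t cache_writeback
"""
SAMPLE_OOPS = "EIP:    0010:[<c0140024>]\nCall Trace: [<c0130112>] [<c0130480>]"

if __name__ == "__main__":
    symbols = load_system_map(SAMPLE_MAP)
    for address in trace_addresses(SAMPLE_OOPS):
        print(f"{address:08x}  {resolve(symbols, address)}")
```

The lookup uses a binary search over sorted addresses because each trace address falls somewhere inside a function, so the match is the closest symbol at or below it rather than an exact hit.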

The trace shows a system call coming in from user space and never returning properly. This is where things get interesting. The call in question is read(), which shouldn’t be able to cause this kind of trouble on its own. After reading through the kernel source for the functions involved, I have a suspect: our custom buffer management code, possibly a race condition, though nothing I can prove yet.

Before chasing that any further, I want to rule out the hardware. Maybe it’s not a software bug after all. I check the SMART status of the disks, confirm that there are no pending reallocations, and verify that the RAID arrays aren’t under stress. Everything checks out on the hardware front.

The next step is to revert recent code changes one by one, hoping that something will reveal itself as the cause. I spend hours going through each commit, comparing function signatures, and trying to spot any differences in behavior. By now, it’s late, and my eyes are starting to feel heavy.
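Reverting changes one at a time is linear in the number of changes. When the crash reproduces reliably, a binary search over the change list is one way to shorten the hunt. The sketch below shows that alternative, not what was done that night; `find_first_bad` and `crashes_with` are hypothetical names, and it assumes the build with zero changes applied is good, the build with all of them is bad, and the crash persists once the faulty change is in.

```python
def find_first_bad(num_changes, crashes_with):
    """Return the 1-based position of the change that introduced the crash.

    crashes_with(n) must report whether a build with the first n changes
    applied crashes. Assumes crashes_with(0) is False and
    crashes_with(num_changes) is True.
    """
    good, bad = 0, num_changes  # invariant: 'good' changes are fine, 'bad' changes crash
    while bad - good > 1:
        mid = (good + bad) // 2
        if crashes_with(mid):
            bad = mid
        else:
            good = mid
    return bad


if __name__ == "__main__":
    # Stand-in for "build, boot, and run the workload": change 9 of 12 is the culprit.
    print(find_first_bad(12, lambda n: n >= 9))
```

With twelve changes this needs four test boots instead of up to twelve, which matters when every test means a rebuild and a reboot.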

Finally, around 3 AM, I find a piece of code that looks suspicious. It’s in our custom buffer management logic, in the part that handles write-back caching. Just a week ago I tried to speed up the write path by skipping a few checks that looked redundant. In my eagerness to make it faster, I may have introduced a race condition.

I recompile with debugging symbols and set breakpoints in that function. After stepping through the code, I see where the problem lies: when the system call is interrupted during the write-back process, there’s no proper handling of the context switch, leading to an undefined instruction exception.

With this insight, fixing it becomes a straightforward task of adding proper synchronization primitives around the critical sections. I apply the fix, reboot the server, and cross my fingers.
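The shape of that fix can be shown with a user-space analogy. The sketch below is a toy write-back cache in Python, not the kernel patch itself: `WriteBackCache` and `hammer` are hypothetical names, a dictionary stands in for the disk, and a single `threading.Lock` plays the role of whatever primitive guards the real critical sections.

```python
import threading


class WriteBackCache:
    """Toy write-back cache: writes are buffered as dirty blocks and flushed later."""

    def __init__(self):
        self._lock = threading.Lock()
        self._dirty = {}   # block id -> data not yet written back
        self.backing = {}  # stands in for the disk

    def write(self, block, data):
        # Critical section: a writer must never interleave with flush().
        with self._lock:
            self._dirty[block] = data

    def read(self, block):
        with self._lock:
            # Pending data takes priority over what is already on "disk".
            if block in self._dirty:
                return self._dirty[block]
            return self.backing.get(block)

    def flush(self):
        with self._lock:
            # Swap the dirty map out while holding the lock, so a write that
            # arrives next lands in the fresh map instead of being lost.
            pending, self._dirty = self._dirty, {}
            self.backing.update(pending)
        return len(pending)


def hammer(cache, writers=4, blocks_per_writer=500):
    """Run several writers against a continuously flushing thread."""
    stop = threading.Event()

    def writer(wid):
        for i in range(blocks_per_writer):
            cache.write((wid, i), i)

    def flusher():
        while not stop.is_set():
            cache.flush()

    flush_thread = threading.Thread(target=flusher)
    threads = [threading.Thread(target=writer, args=(w,)) for w in range(writers)]
    flush_thread.start()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    stop.set()
    flush_thread.join()
    cache.flush()  # write back whatever is still pending
    return len(cache.backing)


if __name__ == "__main__":
    print(hammer(WriteBackCache()), "blocks written back")
```

The point of the design is that every path touching the dirty map takes the same lock, including the fast path. Dropping the lock from `write()` to save time is the kind of shortcut that turns a check that looks redundant into a lost update.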

A few moments later, the server comes back up without crashing. I watch as dmesg prints out the normal boot messages, and then I log in to verify that everything is working correctly. The application starts communicating with the storage system again, and there are no more crashes or panics reported.

This experience reminds me of so many nights during Y2K when we were frantically trying to ensure nothing would fail. It’s a stark reminder that while technology has advanced, some core principles remain the same: always keep an eye on your systems, be prepared for unexpected issues, and never rush into optimizations without thoroughly testing them first.

As I sit here reflecting, I’m grateful for another day where the system didn’t fail us, even if it was just because of a small bug that required careful debugging. Y2K may have passed, but the importance of robust systems and good engineering practices remains evergreen.