$ cat post/the-kernel-panicked-/-i-git-bisect-to-old-code-/-the-signal-was-nine.md

the kernel panicked / I git bisect to old code / the signal was nine

Title: Kubernetes Complexity Fatigue and the Cost of Conformity

May 20th, 2019. The sun is setting over my local mountains, and I can’t shake the feeling that every day is a battle against Kubernetes complexity. It’s not that the platform itself has changed; it’s more about what we’ve built on top of it.

Today, we launched our new internal developer portal called “Backstage.” It’s meant to be a single pane of glass for all our microservices, and it’s mostly working well, but there are growing pains. We’ve started down the path of making every application conform to a standard template, with a bunch of mandatory annotations and labels. This is supposed to make everything more manageable, right? But boy, does it come at a cost.

I spent most of today debugging a mysterious issue where our Prometheus metrics just weren’t showing up on Backstage. Turns out, one of the services was mislabeling its metric names, which caused a validation failure in our scraping job. Once I fixed that, everything started working again. But why did it take me so long to figure this out? It’s because of all the complexity we’ve added just to get things “correct.”
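For what it’s worth, a check like the one below would have caught this in seconds. It’s only a minimal sketch, not our actual scraping job: it assumes the failure boils down to names that don’t match the classic metric-name pattern from the Prometheus data model, and the sample names are made up for illustration.

```python
import re

# Classic metric-name pattern from the Prometheus data model docs.
METRIC_NAME_RE = re.compile(r"[a-zA-Z_:][a-zA-Z0-9_:]*")


def invalid_metric_names(names):
    """Return the names that do not fully match the metric-name pattern."""
    return [name for name in names if not METRIC_NAME_RE.fullmatch(name)]


if __name__ == "__main__":
    # Hypothetical metric names, not taken from a real service.
    sample = ["http_requests_total", "checkout-latency-seconds", "2xx_responses"]
    for name in invalid_metric_names(sample):
        print(f"invalid metric name: {name}")
```

Running something like this in CI against whatever a service exposes would at least move the failure from “dashboard is empty” to “build is red,” which is a much shorter debugging session.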

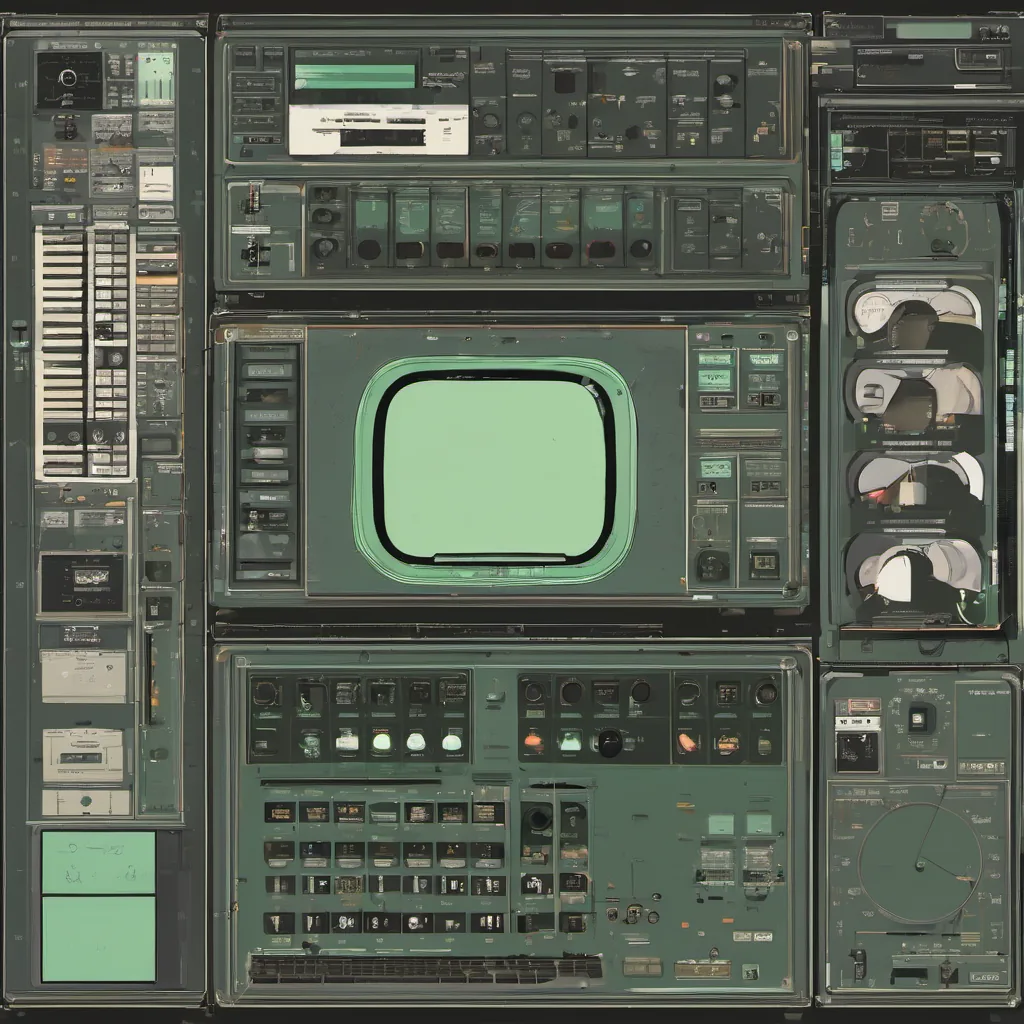

In the old days, when we were all running pods on bare metal and writing bash scripts to manage them, there was a certain freedom. Now, every service needs an environment variable for IMAGE_PULL_SECRETS, some extra labels for Kubernetes networking, and a dozen annotations that are only meaningful if you look them up in our internal documentation.
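To give a sense of what “conforming” means in practice, here is a rough sketch of the kind of check every manifest has to pass. The required keys are placeholders I picked for illustration (`app.kubernetes.io/name` is a real recommended Kubernetes label; `team` and the `example.com/...` annotations are hypothetical), and the manifest is assumed to be already parsed into a plain dict.

```python
# Hypothetical required keys; a real template would define its own.
REQUIRED_LABELS = {"app.kubernetes.io/name", "team"}
REQUIRED_ANNOTATIONS = {"example.com/owner", "example.com/runbook"}


def missing_metadata(manifest):
    """Report required labels and annotations absent from a parsed manifest."""
    metadata = manifest.get("metadata") or {}
    labels = metadata.get("labels") or {}
    annotations = metadata.get("annotations") or {}
    return {
        "labels": sorted(REQUIRED_LABELS - set(labels)),
        "annotations": sorted(REQUIRED_ANNOTATIONS - set(annotations)),
    }


if __name__ == "__main__":
    # Made-up Deployment metadata with one label and one annotation missing.
    manifest = {
        "kind": "Deployment",
        "metadata": {
            "name": "checkout",
            "labels": {"app.kubernetes.io/name": "checkout"},
            "annotations": {"example.com/owner": "payments"},
        },
    }
    print(missing_metadata(manifest))
```

The check itself is trivial. The pain is that the list of required keys keeps growing, and each one only makes sense with the internal docs open in another tab.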

It’s like we’ve built this giant Rube Goldberg machine just to make sure everything conforms, but the machine is so complex that it starts breaking at even the smallest of changes. And the irony is, I started down this path because I thought it would save time and effort. But now, every new service feels like starting a new project.

The other thing that’s on my mind is the rise of SRE roles. We’ve hired more Site Reliability Engineers, and they’re doing great work, but there’s an underlying tension. Our DevOps team is still very much about making sure everyone has the tools they need to deploy and run their services. Now, we have SREs who are focused on monitoring, logging, and ensuring stability. It’s like two parallel universes that occasionally collide.

Then, today, I read a few Hacker News stories that really hit home. The one about switching from Chrome to Firefox made me think about how quickly things can change in tech. And “DigitalOcean Killed Our Company” was particularly resonant. It’s a stark reminder that even companies we thought were stable and reliable are not immune to the whims of the cloud.

In my day job, I’m constantly arguing with myself about whether it’s worth spending time on these microservices or if we should just go back to simpler architectures. The complexity fatigue is real, but so is the need for robust, scalable systems that can handle our growing user base.

As I sit here sipping some cold beer, I realize how much of my day was spent fighting Kubernetes’ quirks and how little time I actually had to think about innovation or new technology. eBPF is gaining traction, and it feels like a breath of fresh air, but we’re not there yet.

Tomorrow, back to the struggle. But for now, let’s enjoy the sunset and the quiet of my backyard.

P.S.: If anyone has tips on how to make Kubernetes less painful or recommendations for better ways to manage microservices, I’m all ears!