$ cat post/cron-job-i-forgot-/-the-pipeline-hung-on-step-three-/-the-pod-restarted.md

cron job I forgot / the pipeline hung on step three / the pod restarted

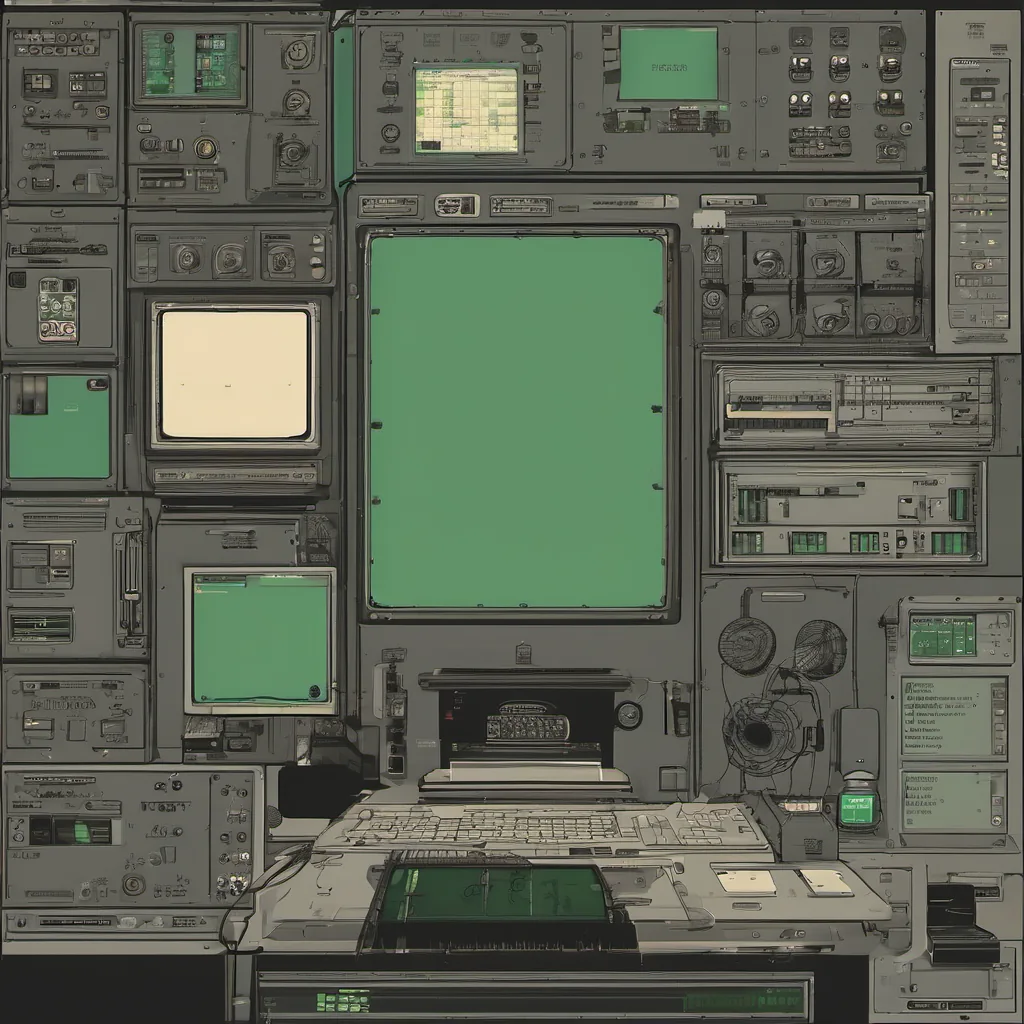

Title: Kubernetes Cluster Management Woes: A Journey Through Complexity

July 20, 2020 - It’s been a rollercoaster month. Just when I thought the pandemic had brought the world to a standstill, something else hit us: a cluster of chaos in my Kubernetes setup.

You see, we’ve been trying to scale up our internal developer portal using Backstage and everything that comes with it: Armo, Argo CD, and Flux for GitOps. The idea is fantastic: one place for all your developer tools, a single source of truth, and automation galore. But oh boy, have I had my hands full.

One particularly trying week was when the cluster started acting up. Pods were getting stuck in a mysterious “ContainerCreating” state, and it wasn’t immediately clear why. After hours of kubectl commands and digging into logs, I finally traced it back to a networking issue. Turns out, some changes we made to our network policies had unintentionally blocked certain pod-to-pod communication.
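For anyone facing the same hunt, here is a minimal sketch of how the first step (finding which pods are stuck) could be scripted. It is not what we actually ran. It assumes you have saved the output of `kubectl get pods --all-namespaces -o json` and relies on the waiting reason that Kubernetes reports under `status.containerStatuses`. The sample data, the pod names, and the function name `stuck_in_container_creating` are all made up for illustration.

```python
import json

# Hypothetical, trimmed sample shaped like `kubectl get pods -o json` output.
SAMPLE = """
{
  "items": [
    {
      "metadata": {"namespace": "portal", "name": "backstage-7d9f"},
      "status": {
        "phase": "Pending",
        "containerStatuses": [
          {"name": "backstage",
           "state": {"waiting": {"reason": "ContainerCreating"}}}
        ]
      }
    },
    {
      "metadata": {"namespace": "portal", "name": "catalog-5c2b"},
      "status": {
        "phase": "Running",
        "containerStatuses": [
          {"name": "catalog",
           "state": {"running": {"startedAt": "2020-07-13T09:00:00Z"}}}
        ]
      }
    }
  ]
}
"""


def stuck_in_container_creating(pod_list):
    """Return (namespace, name) for each pod that has at least one
    container waiting with reason "ContainerCreating"."""
    stuck = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        statuses = pod.get("status", {}).get("containerStatuses", [])
        for container in statuses:
            waiting = container.get("state", {}).get("waiting")
            if waiting and waiting.get("reason") == "ContainerCreating":
                stuck.append((meta.get("namespace", "default"),
                              meta.get("name", "")))
                break  # one stuck container is enough to flag the pod
    return stuck


if __name__ == "__main__":
    for namespace, name in stuck_in_container_creating(json.loads(SAMPLE)):
        print(f"{namespace}/{name}")
```

This only tells you which pods are stuck, not why. The events shown by `kubectl describe pod` are usually the next place to look.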

This was just the tip of the iceberg. As the complexity of our Kubernetes setup grew, my hairline receded, though not as fast as my frustration rose. The sheer number of options and choices (Helm charts, operator patterns, Custom Resource Definitions (CRDs), etcd clusters) can be overwhelming. It’s like trying to navigate a dense forest with no map, except this forest is constantly growing.

On top of that, there was an ongoing debate at work about whether eBPF should become part of our stack for better performance and observability. While it sounds promising, the learning curve is steep, and integrating it correctly in such a complex environment can be tricky. It’s like trying to teach your old dog new tricks when he’s already got so many.

The other day, I had a particularly heated discussion with a colleague about SRE vs Platform Engineering roles. We were both passionate but disagreed on how much automation is enough and where the line between developer responsibility and platform engineering should be drawn. It’s funny—just as we started to agree on one point, some new piece of infrastructure went down, and the whole conversation shifted.

And then there was that infamous Hacker News post about Apple keeping its 30% cut even after a refund. While it’s easy to get sidetracked by these kinds of discussions, it’s important to stay focused on what we’re building here—reliable systems for our developers so they can do their best work.

Looking back at the month, I’ve learned that with great complexity comes great responsibility. We need to keep refining our practices, asking the hard questions, and not being afraid to change course when necessary. After all, our goal is to make sure that every developer can focus on what they do best—writing code—and not spend their time troubleshooting infrastructure issues.

So here’s to another month of Kubernetes challenges, internal debates, and personal growth. May the next month bring more clarity and less frustration!

That’s where I was on July 20, 2020, trying to wrangle a complex cluster while navigating the shifting landscape of platform engineering tools and practices. If only we could bottle some of that self-deprecation and turn it into productivity juice!