Kubernetes Complexity Fatigue and the Long Tail of Tech Debt

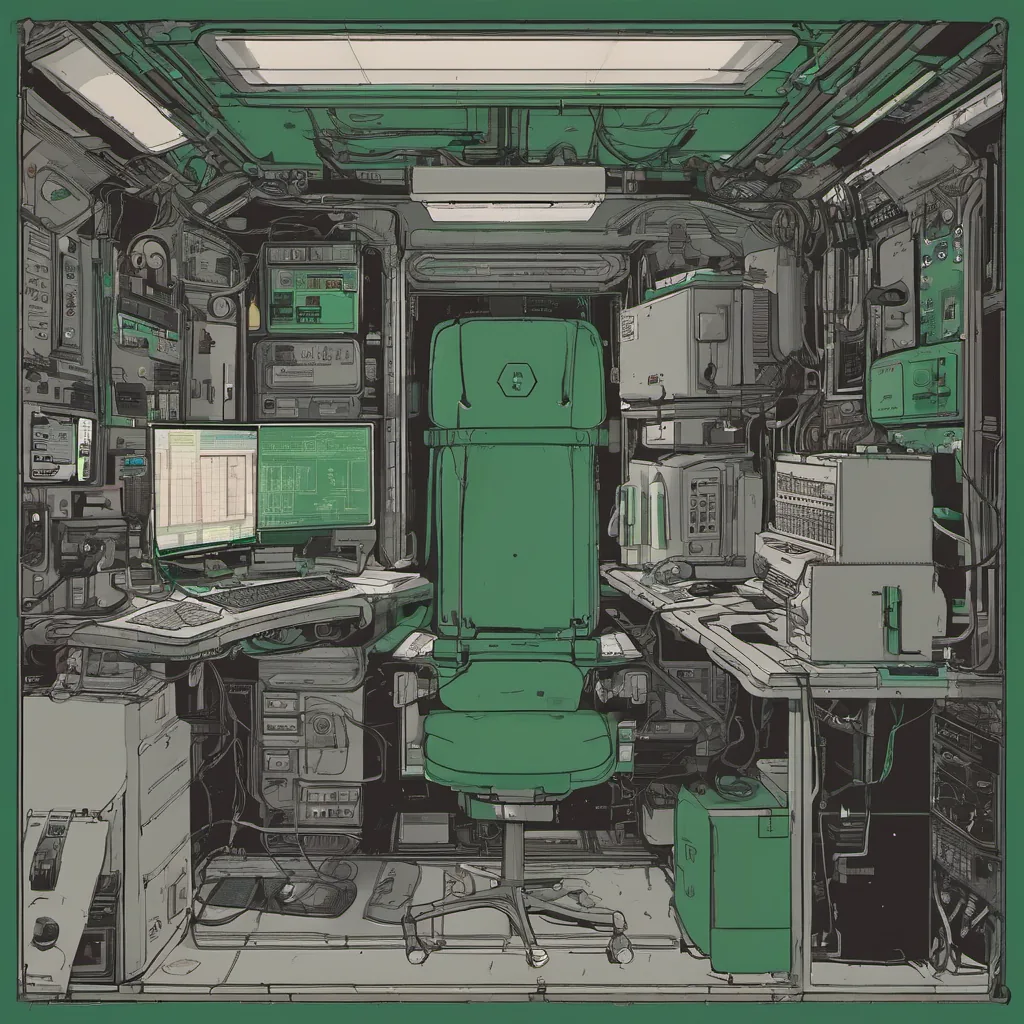

December 20, 2021. It’s a quiet Monday afternoon, and I’m sitting in my home office with the winter sun streaming through the windows. The calendar says it’s 2021, but after all these months of remote work, it feels like time has frozen. This is an era defined by the formalization of platform engineering, the rise of internal developer portals (like Backstage), the proliferation of SRE roles, and infrastructure scaling driven by a global pandemic. Yet as much as the world around me moves forward, we’re still dealing with the lingering effects of choices made in earlier times.

I’ve been wrestling with Kubernetes complexity fatigue. It’s not just about managing deployments or scaling services; it’s about understanding the nuanced interactions between multiple layers and the sheer number of ways things can go wrong. We’re at a point where the initial excitement of containerization has given way to a more sobering reality: the architecture we built on top of Kubernetes is complex, fragile, and riddled with tech debt.

A few weeks ago, I spent an entire weekend debugging an issue in our production cluster. A service that should have been simple turned out to be a tangled web of dependencies, misconfigured resource requests, and unoptimized eBPF programs. It was like unraveling a mystery: every fix exposed another problem, and by the end of it I felt exhausted but somehow more knowledgeable about all the ways things can go wrong.

This week, we were hit with an AWS us-east-1 outage, which reminded me that no matter how robust our infrastructure is, external factors still have a significant impact. The sheer volume of comments on HN about this outage shows the shared frustration—everyone seems to be holding their breath and hoping for stability in what feels like a continuously shifting landscape.

I recently had an argument with one of my team members about whether we should invest more time into maintaining our internal developer portal (Backstage). My stance is that while it’s important, it’s not as critical right now as ensuring the reliability of our existing systems. The back-and-forth felt like a microcosm of broader industry discussions—do we prioritize building shiny new tools or focus on the fundamentals?

Evidently, I’m not alone in feeling this way. On HN, there’s a thread titled “Are most of us developers lying about how much work we do?” that resonated with me. It’s easy to get lost in the day-to-day minutiae and forget what truly matters. I found myself reflecting on all the bugs I’ve spent countless hours fixing, many small, some significant. Is all that bug fixing a sign of progress, or just more tech debt being paid down?

The “Log4j RCE Found” thread brought home the importance of security practices. We’ve had to scramble to patch our systems and educate ourselves on what’s at risk. It’s a stark reminder that while we might be good at building things, we’re often not as good at securing them.

In the midst of all this, I can’t help but think about the report that TikTok’s streaming software is an illegal fork of OBS. It’s humbling to see how far copying code without understanding its licensing implications can go. Maybe it’s time for more mentorship and guidance in our team around these kinds of issues.

As we look back on 2021, I find myself wishing for a simpler time when Kubernetes felt like the future of everything. But as I close this blog post, I’m left with a sense that complexity is here to stay—and so are the challenges it brings. The next few years will be about finding balance—between building and maintaining, between innovation and reliability.

Until next time, keep your wits about you and don’t forget why you started doing tech in the first place.