
man page at two AM / the alert fired at three AM / the secret rotated

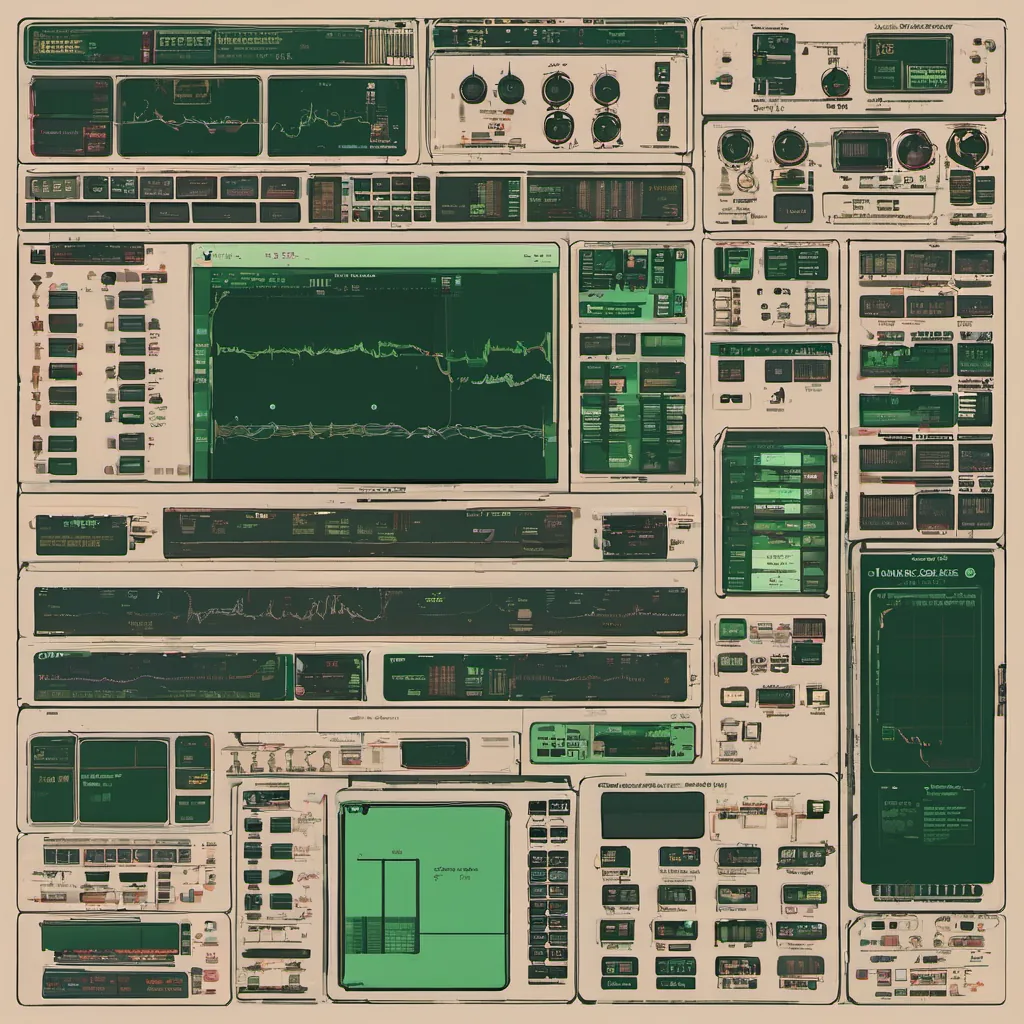

Container Hell: A Real-Time Debugging Adventure

April 2015 was a wild month. The tech world was abuzz with the latest developments in microservices and containerization. Docker was spreading like wildfire, and everyone seemed to be talking about Kubernetes and Mesos. I remember thinking, “OK, time for me to catch up,” as my team dove into these new technologies.

We were using a mix of containers and Mesos at work, trying to figure out the best way to scale our infrastructure while maintaining stability. We had built a few simple microservices and were running them in Docker containers managed by Marathon. But boy, oh boy, did we run into some issues.

One Friday afternoon, as I was going through my usual checklist of things that could go wrong, something didn’t feel right. Our service was acting up, and the logs weren’t giving me any clues. It felt like container hell: one of those unpredictable, frustrating situations where everything seems to be working fine until it all falls apart.

I decided to step into one of our containers and look at the system state with top and ps. What I found was disheartening: CPU usage was sky-high, yet no process in the listing obviously accounted for it. It was like a ghost process, silently eating up resources. I tried some basic profiling commands, but nothing pinpointed the issue.
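A snapshot from top can miss processes that start, burn CPU, and exit between refreshes, which is one plausible way a frequently scheduled job ends up looking like a ghost. Below is a minimal Python sketch of one way to catch that kind of churn: poll `/proc` at a short interval and count newly appeared PIDs by command name. It assumes a Linux `/proc` filesystem and a Python interpreter inside the container (many slim images ship without one), and the function names are hypothetical, not taken from any tool mentioned here.

```python
import os
import time


def list_pids() -> set[int]:
    """Return the PIDs of all processes currently listed under /proc."""
    return {int(name) for name in os.listdir("/proc") if name.isdigit()}


def read_comm(pid: int) -> str:
    """Return a process's short command name, or '?' if it is already gone."""
    try:
        with open(f"/proc/{pid}/comm") as f:
            return f.read().strip()
    except OSError:
        # The process exited between listing /proc and opening its files.
        return "?"


def watch_for_churn(duration: float = 5.0, interval: float = 0.05) -> dict[str, int]:
    """Count processes that appear during the watch window, keyed by command name.

    A process that starts, burns CPU, and exits between two top refreshes never
    shows up in a snapshot; polling /proc often and diffing PID sets reliably
    catches it only if it lives longer than `interval`.
    """
    seen = list_pids()
    counts: dict[str, int] = {}
    deadline = time.monotonic() + duration
    while time.monotonic() < deadline:
        current = list_pids()
        for pid in current - seen:
            name = read_comm(pid)
            counts[name] = counts.get(name, 0) + 1
        # Diff against the latest poll only, so a recycled PID counts as new.
        seen = current
        time.sleep(interval)
    return counts
```

Calling something like `watch_for_churn(duration=60)` covers a full minute, so a job that fires every minute gets at least one chance to show up; an unfamiliar command name with a steady count is a good lead. Anything shorter-lived than the polling interval can still slip through.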

As the day dragged on, I spent more time debugging than I care to admit, most of it combing through the container’s environment and configuration files in the hope of spotting something odd. Finally, I found it: a rogue background process causing the high CPU usage. It turned out to be a cron job we had forgotten about, scheduled to run every minute.

Debugging this issue felt like being stuck in quicksand—every time I thought I had a handle on it, something else would come up. But eventually, after some late-night debugging sessions and a few cups of coffee (or more accurately, tea), we got it sorted out.

The process wasn’t just about solving the immediate problem; it was also about ensuring that such issues wouldn’t crop up again. We reviewed our deployment scripts and added checks to ensure that all cron jobs were properly vetted before being deployed. It was a humbling reminder of how quickly things can go wrong in dynamic environments like containers.
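A minimal sketch of one such pre-deploy check, in Python and entirely hypothetical (the names `every_minute_jobs` and `main` are illustrative only): scan crontab text and flag any entry whose minute field is `*` or `*/1`, meaning it fires every minute of the hours it matches. It assumes the five-field user crontab format; system files under `/etc/cron.d` add a user column and would need a small tweak.

```python
import re

# User crontab lines: minute hour day-of-month month day-of-week command.
# Lines such as MAILTO=ops@example.com set variables and are not jobs.
ENV_ASSIGNMENT = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*\s*=")


def every_minute_jobs(crontab_text: str) -> list[str]:
    """Return the commands of entries whose minute field fires every minute."""
    flagged = []
    for raw in crontab_text.splitlines():
        line = raw.strip()
        # Skip blank lines, comments, and environment variable assignments.
        if not line or line.startswith("#") or ENV_ASSIGNMENT.match(line):
            continue
        # Shortcuts like @daily or @reboot never run every minute.
        if line.startswith("@"):
            continue
        # maxsplit=5 keeps the command, spaces and all, in the last field.
        fields = line.split(None, 5)
        if len(fields) < 6:
            raise ValueError(f"malformed crontab line: {raw!r}")
        minute, command = fields[0], fields[5]
        if minute in ("*", "*/1"):
            flagged.append(command)
    return flagged


def main(paths: list[str]) -> int:
    """Report every-minute jobs in the given files; return 1 if any were found."""
    status = 0
    for path in paths:
        with open(path) as f:
            for command in every_minute_jobs(f.read()):
                print(f"{path}: runs every minute: {command}")
                status = 1
    return status
```

A deployment script could call `main()` on the crontab files in a release and treat a return value of 1 as a failure, forcing someone to confirm that a job really needs to run that often before it ships.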

This experience highlighted for me the importance of thorough testing and robust monitoring. Containers are incredibly powerful, but they demand meticulous attention to detail. I also learned that sometimes the best way to debug is not to read the logs or the code but to step into the container itself and explore its environment.

Looking back, this episode makes me appreciate how far we’ve come with tools like Kubernetes and Prometheus, which provide better visibility into and control over our containers. It’s also a reminder that, as much as I love bleeding-edge technology, old-fashioned methods can still be incredibly valuable when things go wrong.

In the end, it was another good learning experience, one that helped me grow both personally and professionally. The tech world is always evolving, but the core principles of debugging and problem-solving remain timeless.