$ cat post/the-old-datacenter-/-the-proxy-swallowed-the-error-/-i-blamed-the-sidecar.md

the old datacenter / the proxy swallowed the error / I blamed the sidecar

Title: March 19, 2001: The Year the Dot-coms Blew Up and I Learned to Love Bash

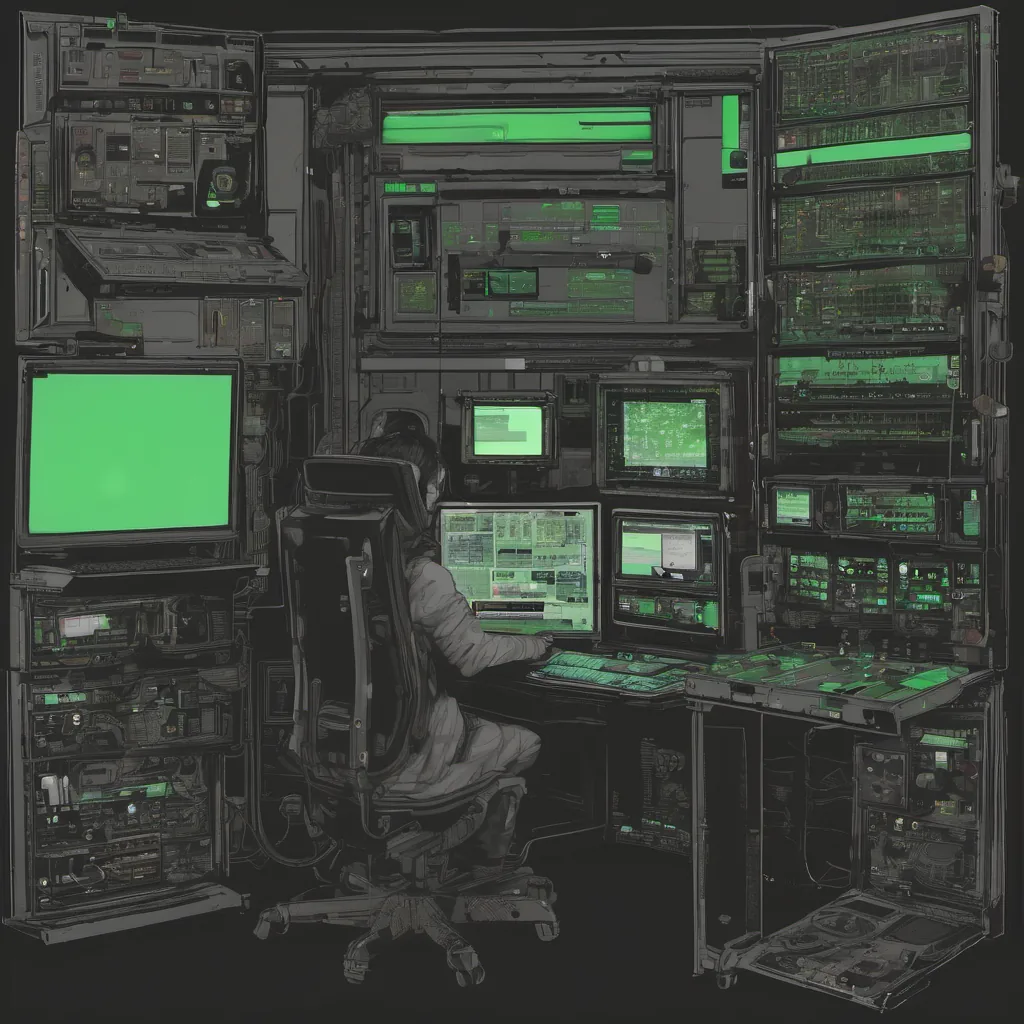

March 19, 2001. The air was thick with the scent of Y2K dodged and dot-com dreams crushed. Back then, my workdays were a mix of writing shell scripts in bash, debugging Apache configurations, and wrestling with broken Sendmail setups on a variety of Linux servers. It’s hard to believe it’s been over 20 years since I faced those challenges.

The tech world was on edge. Dot-coms were shutting down left and right, laying off thousands. The once-ubiquitous optimism had turned to panic in the server room. I found myself arguing with colleagues about whether we should keep running our applications on Linux or revert to Windows servers for stability (a discussion that still echoes today in different guises).

One day, a critical service went down and stayed down. It was one of those mornings when you show up groggy, fill your coffee mug, and find it still sitting on the desk, ice-cold, at 2 PM. The culprit? A simple bash script gone rogue.

We had a nightly cron job that generated reports and sent them out by email. It worked flawlessly until the night it ran out of memory. Bash scripts can be tricky beasts, especially when you’re working with large datasets or complex logic. The script was trying to process hundreds of thousands of records in a single pass, with no error handling to catch the failure.

The logs were a mess, full of memory-exhaustion errors and references to core dumps. Debugging it was like trying to untangle a ball of yarn. I spent hours staring at the code, adding `set -x` around the suspect sections to trace execution and see where things went wrong. It wasn’t pretty, but it did help.
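For anyone who hasn’t used it, here is a minimal sketch of that kind of tracing. It is not the original script: `trace_to_file` and `count_records` are hypothetical names I made up for illustration. The idea is to set `PS4` so each traced command is prefixed with its line number, and to send the trace to its own file so it doesn’t drown the regular logs.

```bash
#!/usr/bin/env bash
# Illustrative only: trace_to_file and count_records are hypothetical helpers.

# Run a command with xtrace enabled, appending the trace to a file.
# The subshell keeps set -x, PS4, and the stderr redirect from leaking
# into the rest of the script.
trace_to_file() {
    local trace_log=$1
    shift
    (
        PS4='+ line ${LINENO}: '   # prefix printed before every traced command
        exec 2>>"$trace_log"       # xtrace writes to stderr
        set -x
        "$@"
    )
}

# Stand-in for one step of a report job: count the records in a file.
count_records() {
    local total
    total=$(wc -l < "$1")
    echo "records: $((total))"
}
```

Calling `trace_to_file /tmp/trace.log count_records records.txt` prints the normal output as usual and leaves a line-by-line trace in `/tmp/trace.log`.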

I remember sitting there, frustration building as the clock ticked past 8 AM. The team was getting antsy, waiting for the script to finish so we could get a report on what had gone wrong. I knew if this happened again, it would be bad news—both for my reputation and the trust in our automation.

That’s when I realized I needed a better way to handle these large datasets. I started thinking about processing the records in chunks, or using background processes, with proper error handling around each step. Bash isn’t perfect for every task, but with a few tweaks, it can do wonders.

In the end, I rewrote the script, added logging, and made sure it ran in smaller, more manageable chunks. It worked like a charm, and we haven’t had issues since. The team was relieved, and my boss gave me a pat on the back. Little did I know that years later, this experience would still come up in discussions about automation best practices.
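The shape of that rewrite can be sketched roughly as follows. Treat it as an illustration under assumptions, not the script we actually ran: the chunk size, the log format, the `LOG_FILE` default, and the `process_chunk` placeholder are all invented here. It uses `split -l` to break the input into fixed-size pieces, processes one piece at a time, and logs the outcome of each.

```bash
#!/usr/bin/env bash
# Sketch only: names, chunk size, and log format are assumptions.

LOG_FILE=${LOG_FILE:-./report-job.log}

# Append a timestamped message to the log file.
log() {
    printf '%s %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$*" >> "$LOG_FILE"
}

# Placeholder for the real per-chunk work (here it only reads the chunk).
process_chunk() {
    wc -l < "$1" > /dev/null
}

# Split the input into chunks of at most chunk_size lines and process
# them one by one. Returns non-zero if any chunk failed.
process_in_chunks() {
    local input=$1 chunk_size=${2:-10000}
    local workdir chunk failed=0

    workdir=$(mktemp -d) || return 1

    # split names its output files chunk.aa, chunk.ab, ...
    if ! split -l "$chunk_size" "$input" "$workdir/chunk."; then
        log "FAILED to split $input"
        rm -rf "$workdir"
        return 1
    fi

    for chunk in "$workdir"/chunk.*; do
        [ -e "$chunk" ] || continue   # empty input produces no chunks
        if process_chunk "$chunk"; then
            log "ok ${chunk##*/}"
        else
            log "FAILED ${chunk##*/}"
            failed=$((failed + 1))
        fi
    done

    rm -rf "$workdir"
    log "done, $failed chunk(s) failed"
    [ "$failed" -eq 0 ]
}
```

A failed chunk is logged and counted instead of killing the whole run, so one bad batch of records no longer takes the entire nightly job down with it.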

That day taught me several things:

- Always have a plan B for your scripts.

- Logging is crucial for debugging.

- Bash can do complex tasks but has its limits.
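To make the first two lessons concrete, here is a small sketch of a "plan B" wrapper. Everything in it is hypothetical: `run_with_fallback`, `generate_full_report`, and `send_failure_notice` are names invented for this example. It runs a primary step, and if that step fails it logs the exit status and runs a fallback instead of failing silently.

```bash
#!/usr/bin/env bash
# Illustrative only: all function names below are hypothetical.

# Run the primary command; if it fails, log a warning and run the fallback.
# The return status is that of whichever command ran last.
run_with_fallback() {
    local primary=$1 fallback=$2 status=0
    "$primary" || status=$?
    if [ "$status" -eq 0 ]; then
        return 0
    fi
    echo "WARN: $primary exited with status $status, running $fallback" >&2
    "$fallback"
}

# Stand-in for the real job; it simulates a failure.
generate_full_report() {
    return 1
}

# Plan B: tell someone the report did not go out.
send_failure_notice() {
    echo "report failed, see log"
}
```

With these stand-ins, `run_with_fallback generate_full_report send_failure_notice` writes a warning to stderr and then prints the failure notice.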

As I look back on 2001, I remember those chaotic days vividly. The dot-com bust was real, and it forced us to rethink many of our assumptions about technology and business. But amidst the chaos, we learned valuable lessons that still resonate today. And bash scripts? They’re still a part of my daily toolkit, albeit with more robust error handling now.

Looking back, March 19, 2001, was just another day in ops, but it taught me to love bash and appreciate its power for simple tasks, as long as I don’t let it get out of hand.