$ cat post/green-text-on-black-glass-/-a-crontab-from-two-thousand-two-/-we-were-on-call-then.md

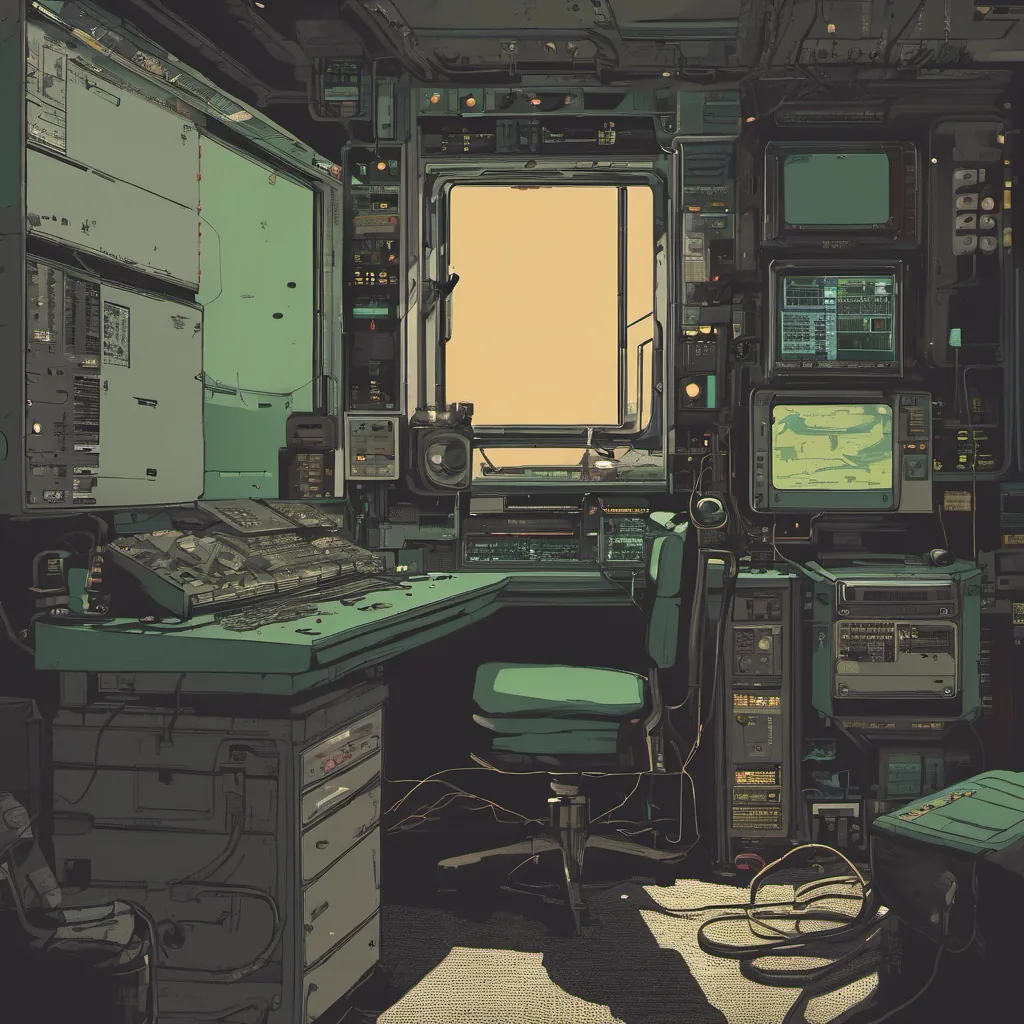

green text on black glass / a crontab from two thousand two / we were on call then

Title: Kubernetes Complexity Fatigue Hits Home

July 19, 2021, felt like the day I finally hit my personal wall with Kubernetes. It was a moment of clarity: even after years of working in this space, there are still challenges and trade-offs that can bring you to your knees.

I’ve always been an advocate for embracing complexity. After all, technology is often about solving complex problems. But sometimes it just gets to be too much. Over the past few months, I found myself arguing with team members, struggling with setup scripts, and even questioning whether we should have moved to a containerized architecture in the first place.

One of our internal projects had started running into some serious issues. We were trying to scale out, on Kubernetes, an application that was originally designed for small, single-node setups. The deployment scripts worked fine for a few nodes, but as soon as we hit 10+ nodes, things began to fall apart. Logs got harder to follow, monitoring metrics became less reliable, and troubleshooting bottlenecks felt like solving a Rubik’s Cube blindfolded.

The problem seemed to stem from two main areas: networking and storage. Our application relied heavily on local state, which didn’t play well with the Kubernetes networking model once requests were being spread across instances on different nodes. On top of that, our storage layer wasn’t properly configured for a distributed setup, which led to inconsistencies across nodes.
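To make the local-state problem concrete, here is a toy sketch in Python. It is not our application's code: the `Replica` class, the `simulate` function, the dict standing in for an external store, and the strict round-robin balancing are all assumptions made for illustration (real Kubernetes Services don't necessarily balance this way). It only shows why a counter kept in each instance's memory stops making sense once requests land on more than one replica.

```python
from itertools import cycle


class Replica:
    """Hypothetical app instance that keeps a counter in its own memory."""

    def __init__(self, name):
        self.name = name
        self.local_hits = 0  # local state: visible only to this replica

    def handle_local(self):
        # Count the request using this replica's private memory.
        self.local_hits += 1
        return self.local_hits

    def handle_shared(self, store):
        # Count the request in a store that every replica can see.
        store["hits"] = store.get("hits", 0) + 1
        return store["hits"]


def simulate(replica_count, request_count):
    """Send requests round-robin and record what each approach reports."""
    replicas = [Replica(f"pod-{i}") for i in range(replica_count)]
    shared_store = {}  # stand-in for an external database or cache
    balancer = cycle(replicas)  # simplified round-robin load balancing
    local_view, shared_view = [], []
    for _ in range(request_count):
        replica = next(balancer)
        local_view.append(replica.handle_local())
        shared_view.append(replica.handle_shared(shared_store))
    return local_view, shared_view


if __name__ == "__main__":
    local_view, shared_view = simulate(replica_count=3, request_count=6)
    print("local state :", local_view)   # [1, 1, 1, 2, 2, 2]
    print("shared state:", shared_view)  # [1, 2, 3, 4, 5, 6]
```

With a single replica the two lists match, which is roughly why this kind of design looks fine on a single-node setup and only misbehaves after scaling out.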

Debugging these issues felt like a never-ending game of whack-a-mole. I’d fix one thing only to discover another issue lurking just out of sight. It was during this time that I realized the true extent of Kubernetes complexity fatigue: feeling overwhelmed by the sheer number of knobs and dials you need to turn.

That’s when I started exploring alternatives. I’d been hearing a lot about eBPF, but as someone who had spent years wrestling with Kubernetes, I found it hard to shake the feeling that every tool comes with its own set of trade-offs. Still, the idea of a more lightweight and potentially faster solution piqued my interest.

Around this time, ArgoCD had been getting some buzz. I decided to give it a shot as a way to manage our deployments more efficiently. With GitOps becoming more mainstream, I thought maybe it was time to embrace another layer of abstraction. It turned out to be a mixed bag: while ArgoCD simplified certain aspects of the deployment process, it introduced its own set of complexities and required careful configuration.

As I sat there late into the night, staring at my screen trying to untangle yet another Kubernetes mess, something inside me shifted. Maybe it was the realization that sometimes, simplicity is underrated. Or maybe it was just that I needed a break from all the complexity.

Looking back, 2021 didn’t just bring new technologies like ArgoCD and eBPF; it also brought home the reality that even seasoned engineers can hit their limits with Kubernetes. The next step for me will be to explore other solutions while maintaining an open mind towards adopting new tools. After all, in tech, there’s always something new to learn.

In the meantime, I plan to take a break from deep Kubernetes work and focus on some smaller projects that might not require as much overhead. And who knows? Maybe taking it easy will give me the perspective needed to approach Kubernetes with fresh eyes when I return.

Until then, back to the drawing board. But with a bit more appreciation for the simpler solutions hiding in plain sight.