$ cat post/a-shell-i-once-loved-/-a-webhook-fired-into-void-/-config-never-lies.md

a shell I once loved / a webhook fired into void / config never lies

Title: Kubernetes Complexity Fatigue: When 40 Pods Is Too Many

August 19, 2019. The world felt different today.

It’s been a while since I’ve wrestled with a problem that feels as real and tangible as this one did when it first hit me in mid-2018. Back then, we had just moved our platform at work to Kubernetes. It was like a breath of fresh air, a promise of better tooling and more robust infrastructure. But now, here I am, knee-deep in a sea of pods, each one a potential source of pain.

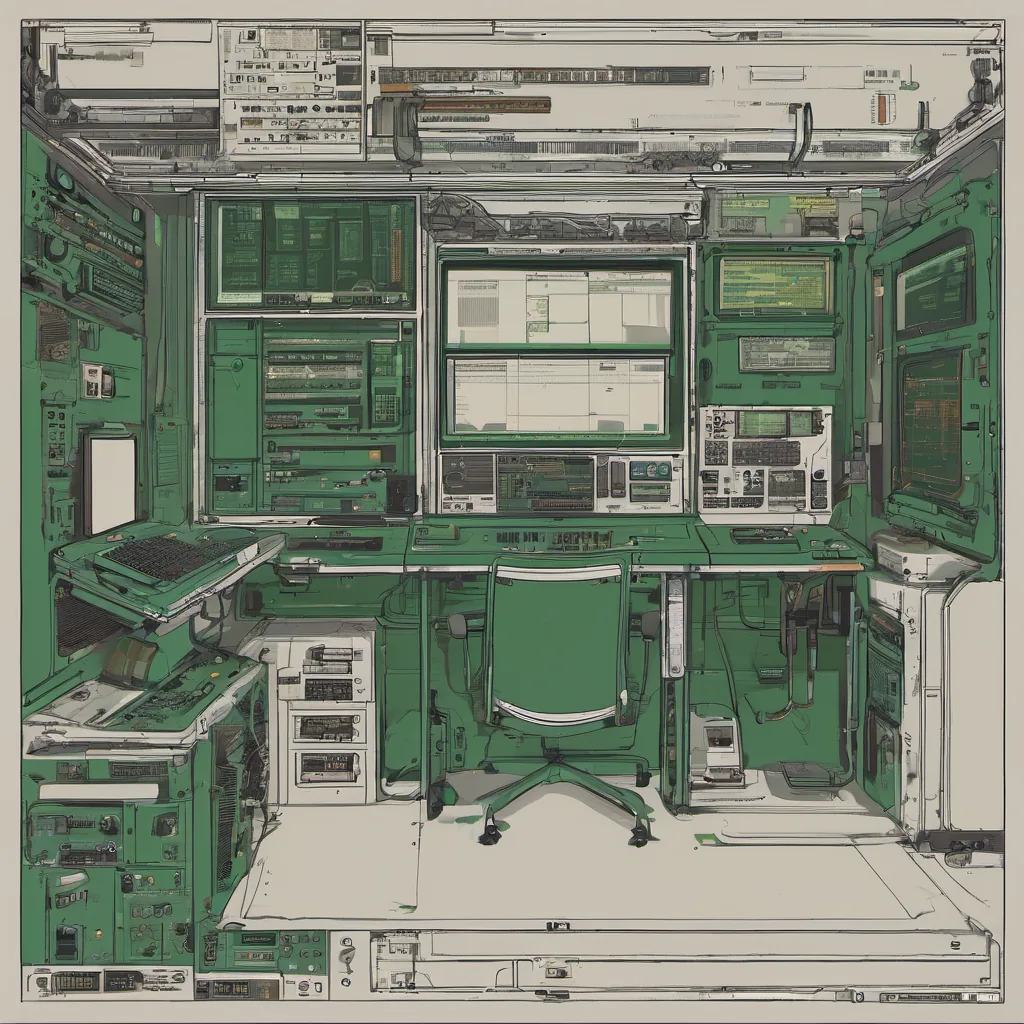

It all started innocently enough. We had a few dozen services running on Kubernetes, each with its own set of deployments, rolling updates, and secrets management. The platform team was handling it with relative ease—after all, we were the experts! But as the days turned into weeks, then months, I found myself staring at the console more and more often.

The problem wasn’t just that there were too many pods; it was the complexity of managing them. Every pod had its own set of configurations, secrets, and dependencies. We started to see issues pop up regularly: pods crashing, hanging, or coming up misconfigured. It felt like every change we made to one part of our cluster would inevitably cause a ripple effect somewhere else.

One particular day, I found myself arguing with a colleague about the right way to manage our deployment pipeline. He was advocating for a more complex approach that involved multiple steps and layers of abstraction. I argued back, saying we needed something simpler and easier to understand. “We’re not just making it hard,” he said, “we’re building a fortress.” And then it hit me—maybe we were.

I vividly remember the day I realized we had crossed a line. We had adopted Kubernetes as a tool for automation, but by then it felt like we were over-complicating everything. The platform team was spending more time debugging and maintaining infrastructure than building new features. It was as if our application had morphed into an intricate web of interdependent pods, each one a potential point of failure.

That’s when I decided to take action. We needed to simplify things. My plan? Start with the basics: clean up our deployment processes, reduce the number of pods, and standardize as much as possible. It was like decluttering my desk after years of accumulation—every step felt liberating but also daunting.

The first thing we did was create a new set of guidelines for deploying services on Kubernetes. We decided to limit the number of pods per application to 40—a manageable number that would allow us to focus on quality over quantity. It might seem arbitrary, but in practice, it worked wonders. Suddenly, our cluster became more predictable, and issues were easier to pinpoint.
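A guideline like this is easier to keep when something checks it for you. Here is a minimal sketch of such a check, not the tooling we actually ran: it assumes manifests have already been parsed into plain dictionaries, that services are grouped by a hypothetical `app` label, and the function names are made up for illustration.

```python
from collections import defaultdict

MAX_PODS_PER_APP = 40  # the cap from the guideline above


def pods_per_app(manifests, app_label="app"):
    """Sum desired replicas per application across workload manifests.

    `manifests` is a list of already-parsed manifest dicts. Grouping by an
    `app` label is an assumption; adjust it to your own labeling scheme.
    """
    totals = defaultdict(int)
    for manifest in manifests:
        # Only count kinds that create pods through spec.replicas.
        if manifest.get("kind") not in {"Deployment", "StatefulSet"}:
            continue
        metadata = manifest.get("metadata", {})
        # Fall back to the object name when the label is missing.
        app = metadata.get("labels", {}).get(app_label, metadata.get("name", "unknown"))
        # Kubernetes defaults spec.replicas to 1 when it is omitted.
        totals[app] += manifest.get("spec", {}).get("replicas", 1)
    return dict(totals)


def over_cap(manifests, cap=MAX_PODS_PER_APP):
    """Return only the applications whose desired pod count exceeds the cap."""
    return {app: count for app, count in pods_per_app(manifests).items() if count > cap}
```

Something along these lines could run in CI against the manifests in a pull request, failing the build whenever `over_cap` returns a non-empty result. It only looks at desired replica counts, so autoscalers and one-off pods are outside its view.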

We also started using GitOps tools like Argo CD and Flux to manage our deployments. With our Kubernetes manifests under version control in Git, a rollback usually came down to reverting a commit. The same tools helped us keep environments consistent, which reduced the risk of configuration drift.
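To make "configuration drift" concrete, here is a simplified sketch of the underlying idea: compare what the versioned manifest says with what is actually running. This is not how Argo CD or Flux compute their diffs; the `find_drift` helper and the dictionary shapes are assumptions for illustration only.

```python
def find_drift(desired, live, path=""):
    """Return a sorted list of dotted paths where `live` differs from `desired`.

    Only keys present in `desired` are compared, so fields the cluster adds
    on its own (status, defaulted values) are not reported as drift.
    """
    drift = []
    for key, want in desired.items():
        here = f"{path}.{key}" if path else key
        if key not in live:
            # The desired field is missing from the running object.
            drift.append(here)
        elif isinstance(want, dict) and isinstance(live[key], dict):
            # Recurse into nested sections such as spec or template.
            drift.extend(find_drift(want, live[key], here))
        elif want != live[key]:
            drift.append(here)
    return sorted(drift)


# Hypothetical example: someone scaled the deployment by hand.
desired = {"spec": {"replicas": 3, "template": {"image": "app:1.2"}}}
live = {"spec": {"replicas": 5, "template": {"image": "app:1.2"}}, "status": {"ready": 5}}
print(find_drift(desired, live))
```

Treating the versioned manifest as the source of truth is the design choice that matters here: anything that differs in the cluster is considered drift to be corrected, not a change to be preserved.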

But it wasn’t just about technology; it was about culture too. We had to make sure everyone on the team understood why we were doing things this way and that simplicity wasn’t an excuse for mediocrity. Instead, it was a conscious choice to focus on quality and maintainability.

Looking back, I realize that Kubernetes complexity fatigue isn’t just about managing a cluster of pods; it’s about finding the right balance between flexibility and ease of use. We learned that sometimes, less is more. And while it took us a while to get there, we emerged stronger for it.

In the end, our journey from 40+ pods to a simpler, more manageable infrastructure taught me that in tech, as in life, simplicity often wins out over complexity. It’s not always easy, but it’s worth striving for.