first loop I ever wrote / a timeout with no fallback / I left a comment

Title: Debugging a Network Storm on Halloween

October 31st was just around the corner, and I found myself in the middle of a network storm that could have come straight out of a spooky tale. It started innocently enough, with a few strange log entries in our monitoring system, but before long my colleagues and I were knee-deep in troubleshooting what turned out to be a cascading failure across several of our key services.

We had recently moved from Chef to Puppet for our infrastructure automation, part of our ongoing shift toward DevOps. The transition had been going smoothly until that Friday, when everything went haywire. Our monitoring tools started alerting on timeouts and connection failures between two critical clusters, and we quickly found that one cluster was seeing higher-than-normal latency and packet loss.

“Could it be a routing issue?” I asked myself as I dug through the network configuration. “Or could it be Puppet’s fault? Maybe we missed something in our migration scripts.” The tension grew as more services began to report issues. It felt like a classic case of Murphy’s Law: something small and seemingly innocuous had cascaded into a much larger problem.

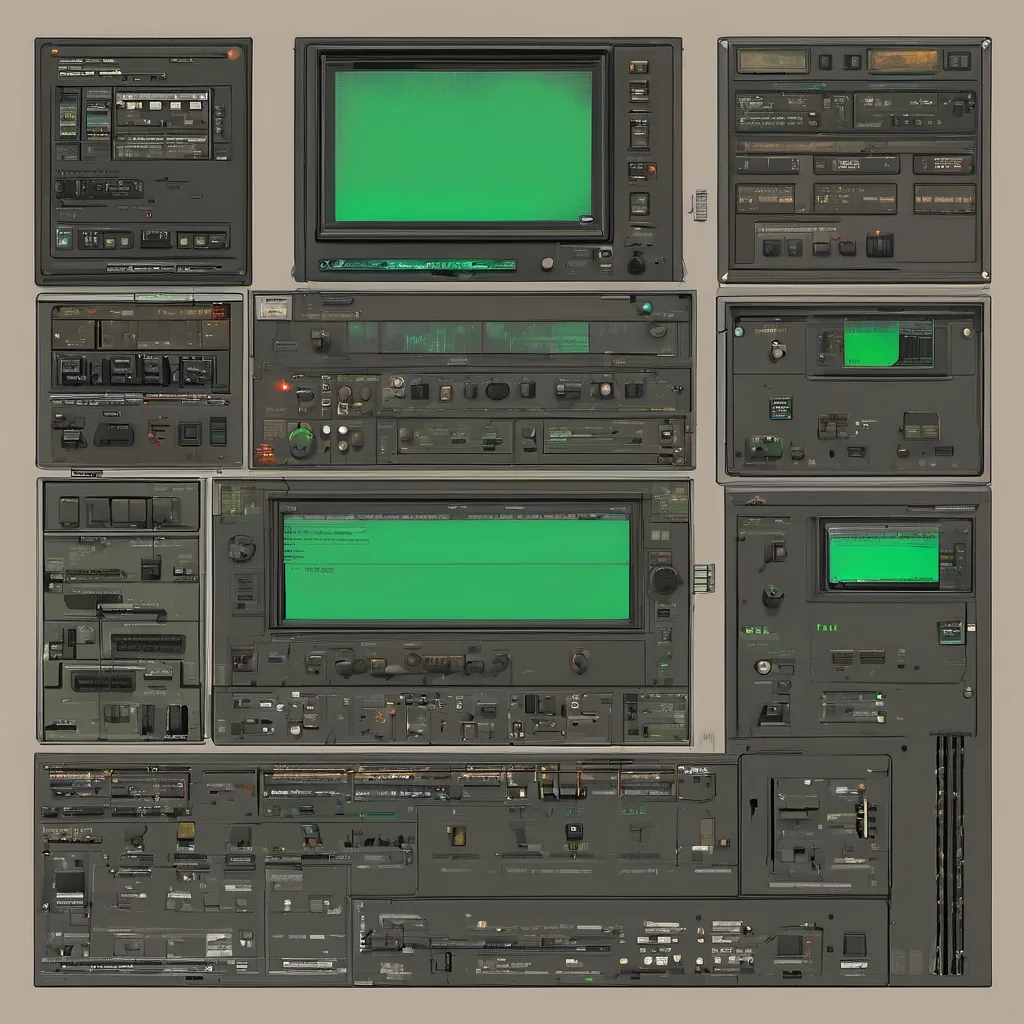

I pulled up the latest version of the network diagram I had hastily drawn on my tablet (this was before we had fully embraced proper diagramming tools). The architecture looked fine, but something was off. I called over a colleague who had been with us longer than I had to review it. “We should double-check our route maps,” he suggested. “Sometimes a simple misconfiguration can cause this.”

Together, we started tracing the flow of traffic between the clusters, looking for any discrepancies in routing and firewall rules. After what felt like hours of intense debugging, we finally found the culprit: a recent change to one of our BGP (Border Gateway Protocol) configurations had inadvertently introduced a routing loop. Packets caught in a loop like that bounce between routers until their TTL expires, which would explain the latency and packet loss we were seeing.
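
To make the idea of a loop concrete, here is a minimal Python sketch of the kind of check we were doing by hand: follow the next hop from router to router for one destination and see whether you ever come back to a router you have already visited. The router names and the `next_hops` table are hypothetical, made up for illustration; this is not our actual topology or tooling.

```python
from typing import Dict, List, Optional

# Hypothetical next-hop table for a single destination prefix.
# Each router maps to the neighbor it forwards to; None means the
# router delivers the traffic locally.
NextHops = Dict[str, Optional[str]]


def find_forwarding_loop(next_hops: NextHops, start: str) -> Optional[List[str]]:
    """Follow next hops from `start`; return the looping routers, or None."""
    path: List[str] = []
    position: Dict[str, int] = {}
    current: Optional[str] = start
    while current is not None:
        if current in position:
            # We are back at a router already on the path: a loop.
            return path[position[current]:]
        position[current] = len(path)
        path.append(current)
        # A router missing from the table is treated as a dead end.
        current = next_hops.get(current)
    return None


healthy = {"edge-a": "core-1", "core-1": "edge-b", "edge-b": None}
broken = {"edge-a": "core-1", "core-1": "core-2", "core-2": "core-1", "edge-b": None}

print(find_forwarding_loop(healthy, "edge-a"))  # None
print(find_forwarding_loop(broken, "edge-a"))   # ['core-1', 'core-2']
```

In the broken table, traffic entering at `edge-a` never reaches `edge-b`; it just circulates between the two core routers.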

As soon as I spotted it, relief washed over me, followed by the realization that this was exactly why we needed robust testing and rollback plans in place. “We need to revert this immediately,” I said, and I started the rollback through our GitOps workflow. The change was reverted, and within minutes the network traffic stabilized.

But the incident did more than just get resolved; it highlighted a few key lessons for us as an organization:

- Automation Tools Aren’t Always Bulletproof: While Puppet had helped streamline some of our infrastructure management, we still needed to be vigilant about configuration drift and human error.

- Testing and Validation Are Non-Negotiable: Even with a GitOps workflow and automated testing in place, every change should come with rigorous validation steps.

- Communication and Collaboration Matter: In a crisis like this, quick decision-making and clear communication were crucial. Our ability to work together as a team paid off.
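
On the configuration drift point, the basic check is simple enough to sketch: compare what you have declared against what is actually running and report every difference. The Python below is a rough illustration under my own assumptions, with invented setting names and values; it is not how Puppet works internally and not the tooling we used.

```python
from typing import Any, Dict, List


def find_drift(declared: Dict[str, Any], observed: Dict[str, Any]) -> List[str]:
    """Return readable differences between declared and observed settings."""
    findings: List[str] = []
    for key in sorted(declared.keys() | observed.keys()):
        if key not in observed:
            findings.append(f"{key}: declared but missing on the device")
        elif key not in declared:
            findings.append(f"{key}: present on the device but not declared")
        elif declared[key] != observed[key]:
            findings.append(
                f"{key}: declared {declared[key]!r}, observed {observed[key]!r}"
            )
    return findings


# Hypothetical settings; the keys and AS numbers are made up for illustration.
declared = {
    "bgp.local_as": 64512,
    "bgp.neighbor.10.0.0.2.remote_as": 64513,
}
observed = {
    "bgp.local_as": 64512,
    "bgp.neighbor.10.0.0.2.remote_as": 64514,
    "bgp.neighbor.10.0.0.9.remote_as": 64515,
}

for finding in find_drift(declared, observed):
    print(finding)
```

Run on a schedule or as a gate before a change is applied, a check like this turns silent drift into something a person actually has to look at.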

Reflecting on the day, I couldn’t help but think about how industry events outside our immediate control, like the launch of OpenStack or the excitement around AWS re:Invent, could sometimes distract us from the everyday challenges of running production systems. Yet it was precisely these kinds of real-world problems that pushed us to keep improving.

The ghostly figures of Halloween were fading into morning light as I logged off my computer, satisfied knowing we had weathered another storm. Tomorrow would bring new challenges, but for now, the network was stable, and our spirits were high.