$ cat post/the-monolith-ran-/-a-kernel-i-compiled-myself-/-i-strace-the-memory.md

the monolith ran / a kernel I compiled myself / I strace the memory

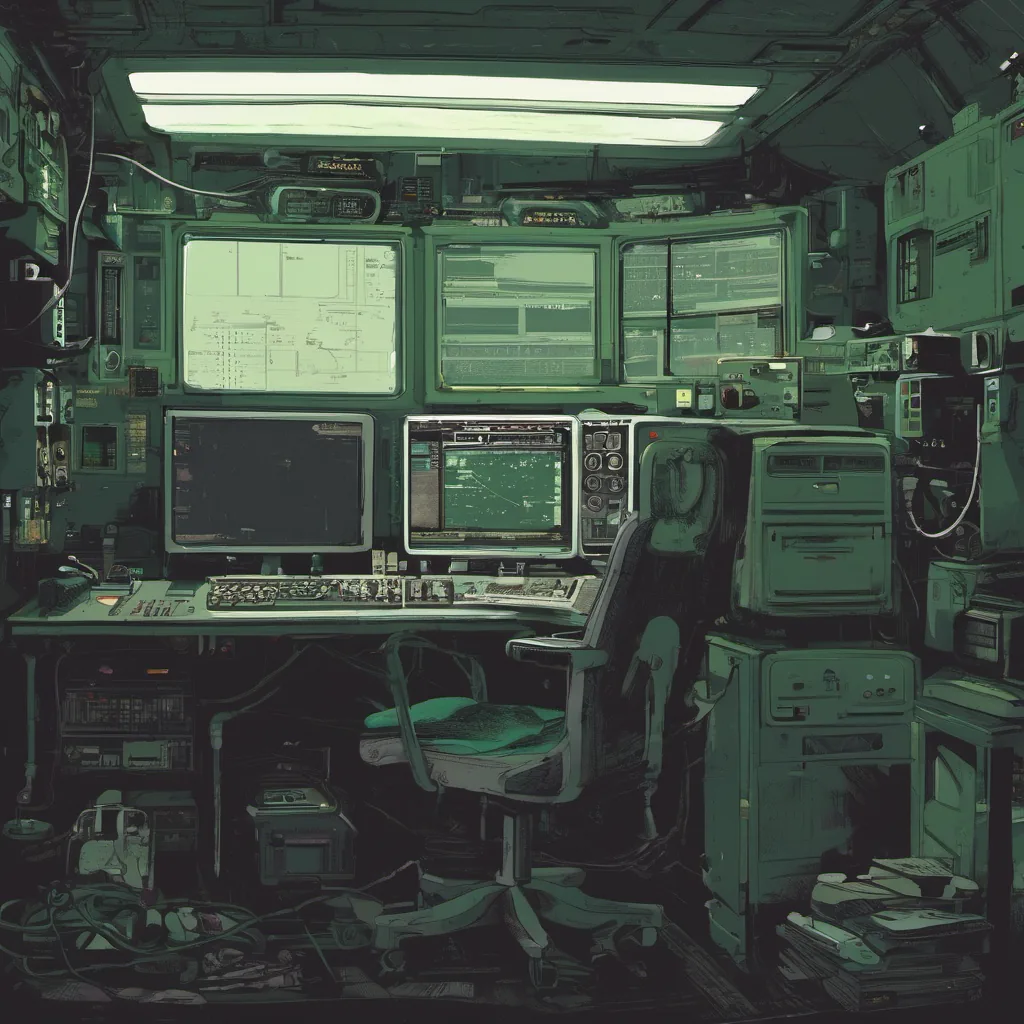

Debugging a Distributed System’s Nightmares

October 18, 2004. I remember it well. The air was thick with the excitement of what web technology could do and the tension of late nights trying to make sense of it all.

The Setup: A Wild Web 2.0 World

Back in ’04, the web was just starting its transformation into a more dynamic, interactive space. We were building a platform for a startup that was aiming to be one of those new wave sites (think early Digg or Reddit, but with a twist). The tech stack? LAMP (Linux, Apache, MySQL, PHP). It felt like the whole world was running on it.

The Nightly Battle: Distributed Systems in Chaos

One night, something went horribly wrong. Our platform had been working fine up until then, handling user interactions and serving content without a hitch. But that night, everything decided to throw a tantrum. User sessions and database queries were both timing out, and the server logs were filling up with error messages.

I started by looking at our Apache access and error logs. The first thing I noticed was a spike in 504 Gateway Timeout responses, which meant something acting as a gateway or proxy wasn’t getting an answer from the server behind it within the allotted time. That pointed towards an issue either on our end or with one of the services we were relying on.
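Spotting a spike like that by eye gets old quickly, so here is a minimal sketch of how it could be counted. It assumes the access log uses Apache's common or combined log format; the function name `count_status_per_minute` and the sample lines are made up for illustration and are not from the original incident.

```python
import re
from collections import Counter

# Common/combined log format: host ident user [time] "request" status bytes ...
LOG_LINE = re.compile(r'\[(?P<ts>[^\]]+)\] "[^"]*" (?P<status>\d{3}) ')


def count_status_per_minute(lines, status="504"):
    """Return a Counter mapping 'dd/Mon/yyyy:HH:MM' to hits with the given status."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and match.group("status") == status:
            # Timestamp looks like 18/Oct/2004:23:41:07 -0700; keep it up to the minute.
            minute = match.group("ts")[:17]
            counts[minute] += 1
    return counts


# Invented sample lines, only here to show the expected shape of the input.
sample = [
    '10.0.0.1 - - [18/Oct/2004:23:41:07 -0700] "GET /story/1 HTTP/1.1" 504 312',
    '10.0.0.2 - - [18/Oct/2004:23:41:19 -0700] "GET /story/2 HTTP/1.1" 200 5120',
    '10.0.0.3 - - [18/Oct/2004:23:41:55 -0700] "POST /vote HTTP/1.1" 504 312',
    '10.0.0.1 - - [18/Oct/2004:23:42:03 -0700] "GET / HTTP/1.1" 504 312',
]

per_minute = count_status_per_minute(sample)
for minute, hits in sorted(per_minute.items()):
    print(minute, hits)
```

Against a real log you would pass an open file object instead of `sample`. Bucketing by minute is an arbitrary choice; a coarser or finer bucket may suit a different traffic level.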

The Investigation: A Web of Dependencies

To figure out what was going wrong, I had to dive deep into our system architecture. We had a few small services communicating over HTTP (what we would call microservices today) and a caching layer to improve performance. But as it turns out, the caching layer wasn’t working as expected.

I decided to use strace on one of the problematic servers to see where exactly things were failing. The output showed that the cache requests were hanging indefinitely, which was odd because everything else in our logs looked normal. After a few hours of tracing and debugging, I realized we had a race condition with how our caching layer was handling concurrent writes.
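For readers who have not run into this class of bug, here is a generic sketch of a race on concurrent cache writes. It is not our caching layer, and it shows the simplest symptom (lost updates) rather than the hang we saw. The classes `NaiveCache` and `LockedCache` and the helper `hammer` are hypothetical names invented for this example.

```python
import threading


class NaiveCache:
    """Read-modify-write with no coordination; concurrent writers can lose updates."""

    def __init__(self):
        self._data = {}

    def increment(self, key):
        current = self._data.get(key, 0)  # read
        # Another thread may write the same key between the read and the write.
        self._data[key] = current + 1     # write

    def get(self, key):
        return self._data.get(key, 0)


class LockedCache(NaiveCache):
    """Same interface, but the whole read-modify-write happens under one lock."""

    def __init__(self):
        super().__init__()
        self._lock = threading.Lock()

    def increment(self, key):
        with self._lock:
            super().increment(key)


def hammer(cache, key, writers=8, writes_each=10_000):
    """Run several writer threads against one key and return the final value."""

    def worker():
        for _ in range(writes_each):
            cache.increment(key)

    threads = [threading.Thread(target=worker) for _ in range(writers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return cache.get(key)


if __name__ == "__main__":
    print("naive :", hammer(NaiveCache(), "votes"))
    print("locked:", hammer(LockedCache(), "votes"))
```

The locked version always ends at `writers * writes_each`. The naive version may or may not come up short on any given run, which is exactly what makes this kind of bug so hard to catch from logs alone.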

The Fix: A Python Script to the Rescue

To understand the problem better, I first wrote a quick Perl script to simulate the cache behavior under stress. That helped me understand the issue, but once I went back to the production logs, it was clear that a simulation wasn’t going to resolve anything in production. The solution had to be something more robust and automated.

I sat down with my team and we decided to use a Python script to monitor cache writes and ensure they completed before moving on to the next task. We set up a cron job that would run this script every few minutes, checking for any hanging processes. If it found one, it would restart the service responsible for the cache.
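The original script is long gone, so what follows is only a sketch of the idea under stated assumptions: something records when each cache write starts, a write counts as hung after a fixed number of seconds, and the cache service restarts through an init script. The 30-second threshold, the `/etc/init.d/cache-service` path, and the function names are all hypothetical.

```python
import subprocess
import time

MAX_WRITE_SECONDS = 30  # assumed threshold, not the value we actually used
RESTART_CMD = ["/etc/init.d/cache-service", "restart"]  # hypothetical service name


def find_hung_writes(in_flight, now, max_age=MAX_WRITE_SECONDS):
    """in_flight maps a write id to the epoch time it started.

    Returns the ids of writes that have been running longer than max_age.
    """
    return sorted(wid for wid, started in in_flight.items() if now - started > max_age)


def check_once(in_flight, now=None, restart=None):
    """One watchdog pass: restart the cache service if any write looks hung."""
    now = time.time() if now is None else now
    if restart is None:
        # Default action; injectable so the logic can be tested without a real service.
        restart = lambda: subprocess.run(RESTART_CMD, check=True)
    hung = find_hung_writes(in_flight, now)
    if hung:
        restart()
    return hung
```

A cron entry along the lines of `*/5 * * * *` would run a wrapper around `check_once` every five minutes, which matches "every few minutes" loosely. Restarting on any hung write is a blunt instrument; it treats the symptom and leaves the underlying race in place.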

The Aftermath: Lessons Learned

After deploying this fix, things stabilized significantly. But I couldn’t shake off the feeling of having barely scratched the surface. We realized that our approach to distributed systems and caching was still naive. The web was evolving so quickly; we needed to keep up with new tools and techniques.

That experience taught me a few key lessons:

- Distributed systems are complex, and race conditions can be tricky: ours only surfaced under concurrent writes, while everything else in the logs looked normal.

- Automation is your friend, but it needs to be well-designed and tested.

- Always have a plan B. In our case, that meant having a Python script ready to monitor the system.

The Evolving Sysadmin Role

Looking back at this time, I see how much the sysadmin role was changing. We were transitioning from simple web server administrators to platform engineers who had to deal with complex distributed systems. The tools and technologies were evolving rapidly, but so too were our responsibilities.

Fast forward a decade or two, and things have changed even more dramatically. But that night of debugging taught me some valuable lessons about resilience, the importance of automation, and the need for constant learning in this ever-evolving field.

This was just one of many nights, but it stands out because of how much it highlighted both the challenges and the excitement of working with new technology at a time when everything felt like it could change overnight.