$ cat post/the-dns-lied-/-the-orchestrator-chose-wrong-/-the-signal-was-nine.md

the DNS lied / the orchestrator chose wrong / the signal was nine

Title: Debugging Digg’s Early Days

April 18, 2005. I remember this day like it was yesterday. It wasn’t a particularly momentous event in the grand scheme of things—just another day in the trenches of a young engineering team dealing with the joys and pains of building something for the masses.

At the time, Digg was still young: a social news site that let users submit links and vote on them. The concept was simple, but implementing it was anything but. Perl was our primary language, with Python as a side dish. The Xen hypervisor gave us some breathing room by letting us run several services in separate virtual machines on shared hardware, which felt like a luxury compared to the monolithic servers we had before.

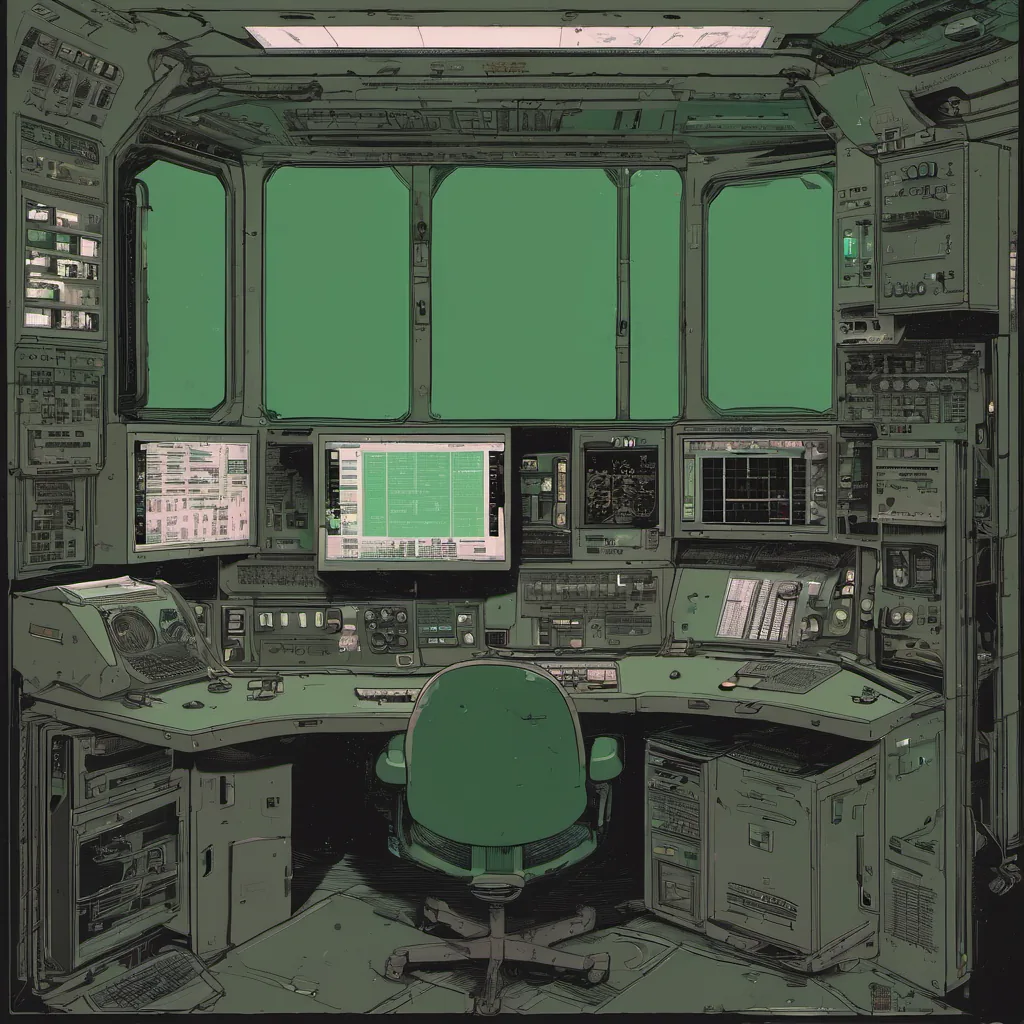

That night, I found myself staring at a wall of log files. The site was down, and nothing in the logs pointed to an obvious cause. It turned into one of those late-night debugging sessions that feel never-ending until you find something so trivial it almost makes you laugh.

The issue? A simple misconfiguration in Apache. Yes, you read that right: a configuration error. I had been working on the front end, trying to optimize some pages, and accidentally changed a virtual host setting. That one tweak sent every request to the wrong place and took the site down. It was a classic case of “works on my machine.”
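The exact directive isn’t the point here, but as a hypothetical illustration: assume the slip was a missing or duplicated `ServerName` in name-based virtual hosts, where Apache hands a request to the first vhost on that address and port whose name matches. `apachectl configtest` only checks syntax, so a config like that parses cleanly and still routes traffic to the wrong site. A tiny pre-deploy check in Python could flag it. `check_vhosts` is a made-up helper, and the parsing is deliberately naive: it ignores `Include` files and `ServerAlias`.

```python
import re
from collections import Counter

# Each <VirtualHost addr> ... </VirtualHost> block; DOTALL lets ".*?" span lines.
VHOST_RE = re.compile(r"<VirtualHost\s+([^>]+)>(.*?)</VirtualHost>", re.IGNORECASE | re.DOTALL)
# A ServerName directive at the start of a line inside a block.
SERVERNAME_RE = re.compile(r"^[ \t]*ServerName[ \t]+(\S+)", re.IGNORECASE | re.MULTILINE)


def check_vhosts(config_text):
    """Return problems: vhosts with no ServerName, and names repeated on one address.

    Hypothetical helper; it does not follow Include directives or look at ServerAlias.
    """
    problems = []
    names = []
    for index, match in enumerate(VHOST_RE.finditer(config_text), start=1):
        address, body = match.group(1).strip(), match.group(2)
        found = SERVERNAME_RE.findall(body)
        if not found:
            problems.append(f"vhost #{index} ({address}) has no ServerName")
        names.extend((address, name.lower()) for name in found)
    # The same name on the same address: only the first block ever matches,
    # so the later one silently never receives that traffic.
    for (address, name), count in Counter(names).items():
        if count > 1:
            problems.append(f"ServerName {name} is declared {count} times for {address}")
    return problems


# Made-up config: the second block reuses the production ServerName.
sample = """
<VirtualHost *:80>
    ServerName www.example.com
    DocumentRoot /var/www/site
</VirtualHost>
<VirtualHost *:80>
    ServerName www.example.com
    DocumentRoot /var/www/staging
</VirtualHost>
"""

for problem in check_vhosts(sample):
    print(problem)  # prints: ServerName www.example.com is declared 2 times for *:80
```

Keying duplicates by address as well as name keeps legitimate setups, such as the same site on `*:80` and `*:443`, from being flagged.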

The team quickly sprang into action. We pointed traffic at our staging environment, which hadn’t picked up the change, and worked through the issue while trying not to panic too much. By morning it was fixed, but the experience left us thinking hard about how important good logging practices are.

It was a humbling reminder that even the most trivial change can have a big impact. After the fix, we spent some time discussing ways to improve our deployment and rollback strategies to minimize downtime. We started looking at tools like Apache’s mod_rewrite for better URL handling, but also realized we needed a more robust monitoring system to catch problems like this before they became catastrophes.
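One cheap form of that monitoring is an external check that polls the homepage and raises an alarm after several consecutive failures. What follows is a minimal sketch using only Python’s standard library, not what the team actually built. `check_url`, `watch`, and the alert-by-`print` are illustrative assumptions.

```python
import http.client
import time
import urllib.error
import urllib.request


def check_url(url, timeout=5.0):
    """Fetch url once and return (healthy, detail); only a 2xx response counts as healthy."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 300, f"HTTP {response.status}"
    except urllib.error.HTTPError as exc:  # the server answered, but with 4xx/5xx
        return False, f"HTTP {exc.code}"
    except (OSError, http.client.HTTPException) as exc:  # refused, DNS failure, timeout, garbled reply
        return False, f"error: {exc}"


def watch(url, interval=60.0, failures_before_alert=3, max_checks=None):
    """Poll url every `interval` seconds and alert once per outage.

    The alert is just a print here; a real setup would page or email someone.
    Returns the number of consecutive failures when polling stops.
    """
    consecutive = 0
    checks = 0
    while max_checks is None or checks < max_checks:
        healthy, detail = check_url(url)
        checks += 1
        consecutive = 0 if healthy else consecutive + 1
        # "==" rather than ">=" so one outage produces one alert, not one per poll.
        if consecutive == failures_before_alert:
            print(f"ALERT: {url} failed {consecutive} checks in a row ({detail})")
        if max_checks is None or checks < max_checks:
            time.sleep(interval)
    return consecutive
```

Calling `watch("http://www.example.com/")` (a placeholder URL) polls once a minute until interrupted. Requiring a few failures in a row keeps a single slow response from waking anyone up.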

One of the most interesting discussions started when someone suggested writing some of our logging scripts in Python. The argument was that, with open-source stacks built on tools like MySQL (we were still on 4.1, I think) on the rise, we could start building more sophisticated monitoring systems. That sparked a debate about whether Python or Perl should be our go-to scripting language going forward.
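For a sense of what such a Python logging script might look like, here is a minimal sketch. It assumes Apache’s standard common/combined access-log format, and `summarize` is a hypothetical name. It tallies HTTP status codes and reports the share of 5xx responses, the kind of number a monitoring job could alert on.

```python
import re
from collections import Counter

# Apache common/combined format: host ident user [time] "request" status bytes ...
LOG_RE = re.compile(r'^(\S+) \S+ \S+ \[([^\]]+)\] "([^"]*)" (\d{3}) (\S+)')


def summarize(lines):
    """Count HTTP status codes and return (counts, share of 5xx responses).

    Lines that don't look like common/combined log entries are skipped.
    """
    counts = Counter()
    for line in lines:
        match = LOG_RE.match(line)
        if match:
            counts[int(match.group(4))] += 1
    total = sum(counts.values())
    server_errors = sum(n for status, n in counts.items() if 500 <= status < 600)
    return counts, (server_errors / total if total else 0.0)


# Made-up sample lines, not real traffic.
sample_log = [
    '10.0.0.1 - - [01/Jan/2005:03:12:01 -0800] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '10.0.0.2 - - [01/Jan/2005:03:12:02 -0800] "GET /story/1 HTTP/1.1" 500 312 "-" "Mozilla/5.0"',
    '10.0.0.3 - - [01/Jan/2005:03:12:03 -0800] "GET /story/2 HTTP/1.1" 404 209 "-" "Mozilla/5.0"',
    "not a log line",
]
counts, error_share = summarize(sample_log)
print(dict(counts), f"{error_share:.2f}")  # prints: {200: 1, 500: 1, 404: 1} 0.33
```

Running it over the last few minutes of the access log and alerting when the 5xx share jumps would be a simple first step; the log path and threshold would be site-specific.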

In the end, we decided to stick with Perl for most things but stay open to Python where it made sense. Newer dynamic languages like Python were gaining ground, and we wanted to stay current while still playing to our existing expertise.

That evening, as I walked out of the office after a long day, I felt both relieved and frustrated: relieved because the site was back up and running smoothly, frustrated because I knew this wouldn’t be the last time something like this happened. But I also felt proud that we had recovered quickly and learned from it.

Looking back, those early days at Digg were a whirlwind of learning and growing. We started small but had big ambitions, and every day brought new challenges and opportunities. It was clear then, as it is now, that the sysadmin role was evolving rapidly—more scripting, more automation, less firefighting. But it was all part of the journey.

This post captures a slice of life from early Digg development, focusing on practical challenges faced by an engineering team during a pivotal time in the rise of Web 2.0 platforms and open-source technologies.