$ cat post/a-merge-conflict-stays-/-i-watched-the-memory-climb-slow-/-the-daemon-still-hums.md

a merge conflict stays / I watched the memory climb slow / the daemon still hums

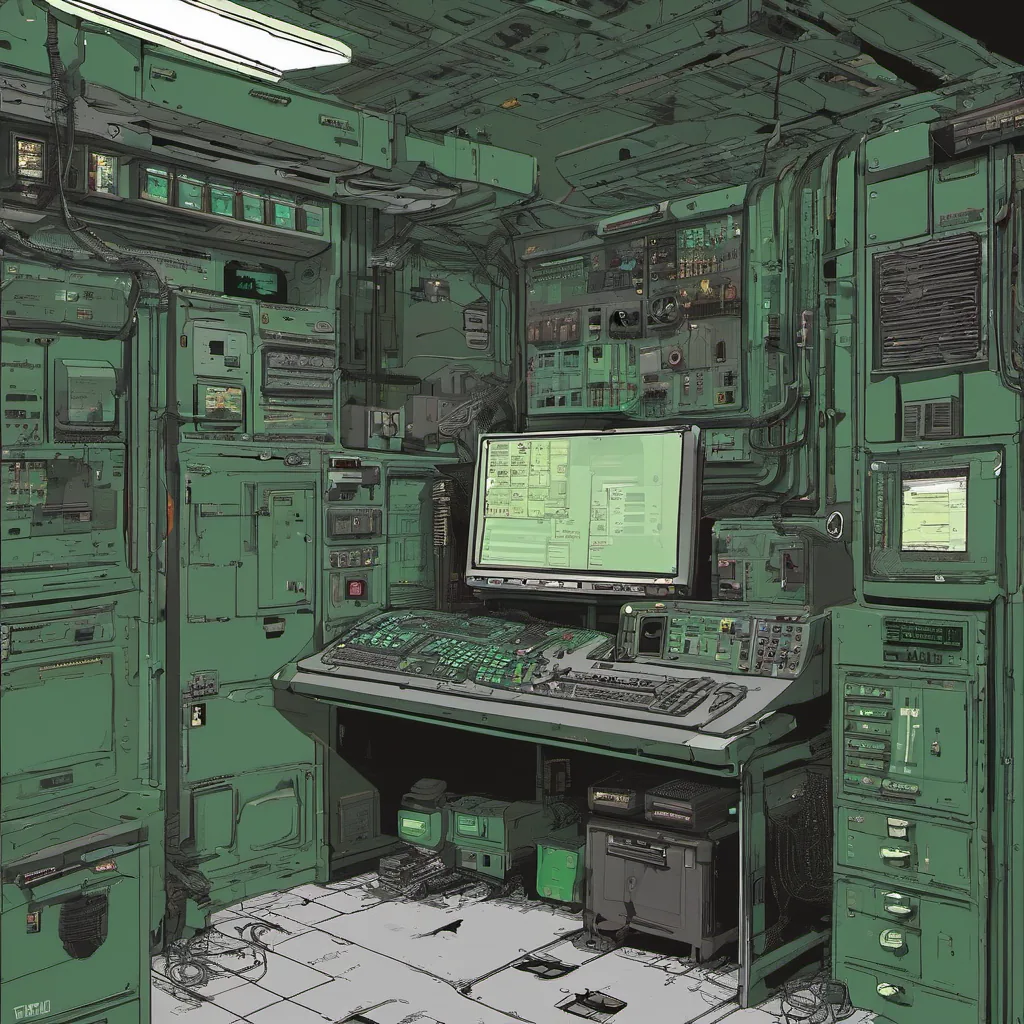

Title: Debugging AI Copilots in Real Time

November 17, 2025. I can’t believe it’s already here. A decade ago, AI copilots were still more science fiction than reality. Now they’re everywhere: assisting engineers, managing infrastructure, even handling our mundane tasks. The technology has come a long way, and so have my opinions on its reliability.

Today, I spent most of my morning debugging an issue that would’ve been a nightmare just five years ago. Our platform team had recently integrated an AI copilot into the build process for one of our critical services. It was supposed to streamline the deployment pipeline by predicting potential issues before they became actual ones. But it wasn’t cooperating.

The issue began when I noticed a latency spike in one of our microservices. The logs looked normal, but something wasn’t right. I fired up my terminal and ran `top` on the instance, only to see the AI copilot’s process using 90% of the CPU. My best guess was an overzealous prediction model running a detailed analysis in the foreground when it should have been backgrounded.

I opened the copilot’s logs and saw that it had generated a massive amount of telemetry data, which was being processed on the fly rather than in batches. The system wasn’t designed to handle that load during normal operations, which explained the latency spike. I knew we needed to change how these models interact with our infrastructure.
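To make the difference between on-the-fly processing and batching concrete, here is a minimal Python sketch. It is not the copilot’s actual code: `TelemetryBatcher`, `sink`, `max_batch`, and `max_age_s` are hypothetical names, and the thresholds are placeholders. The idea is simply to buffer events and hand them off in one go, either when enough have piled up or when the oldest one has waited too long.

```python
import time
from typing import Any, Callable, List, Optional


class TelemetryBatcher:
    """Buffer telemetry events and pass them to `sink` in batches.

    Hypothetical illustration: names and default thresholds are assumptions,
    not taken from any real copilot agent.
    """

    def __init__(
        self,
        sink: Callable[[List[Any]], None],
        max_batch: int = 100,
        max_age_s: float = 5.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._sink = sink
        self._max_batch = max_batch
        self._max_age_s = max_age_s
        self._clock = clock  # injectable so the age rule can be tested
        self._buffer: List[Any] = []
        self._oldest: Optional[float] = None  # arrival time of first buffered event

    def add(self, event: Any) -> None:
        """Queue one event; flush if the batch is full or too old."""
        if not self._buffer:
            self._oldest = self._clock()
        self._buffer.append(event)
        too_big = len(self._buffer) >= self._max_batch
        too_old = self._clock() - self._oldest >= self._max_age_s
        if too_big or too_old:
            self.flush()

    def flush(self) -> None:
        """Send whatever is buffered to the sink as a single batch."""
        if not self._buffer:
            return
        batch, self._buffer = self._buffer, []
        self._oldest = None
        self._sink(batch)


if __name__ == "__main__":
    batcher = TelemetryBatcher(sink=lambda b: print(f"processing {len(b)} events"),
                               max_batch=3)
    for i in range(7):
        batcher.add({"seq": i})
    batcher.flush()  # drain the remainder on shutdown
```

Note that the age check only runs when a new event arrives, so a real agent would probably also call `flush()` from a timer or on shutdown. The expensive work in `sink` then runs once per batch instead of once per event.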

After a bit of brainstorming, we decided to use eBPF (extended Berkeley Packet Filter) to give the copilot a more lightweight way to interact with the system without hogging the CPU. We rewrote parts of the copilot’s agent in C, using eBPF hooks to minimize overhead during high-demand periods.

The refactor was a success, but it exposed some challenges we hadn’t anticipated. We still lacked fine-grained control over what data the AI could access and how often it could run its predictions. We needed better policies around model execution so that our services stayed responsive even under heavy load.
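One way to picture such a policy is a gate that every prediction run has to pass through. The Python sketch below is only an illustration under stated assumptions, not the policy we shipped: `PredictionGate`, `rate_per_s`, `burst`, `max_load`, and `load_fn` are hypothetical names. It combines a token bucket, which caps how often predictions run, with a load check, which skips runs while the host is busy.

```python
import time
from typing import Callable


class PredictionGate:
    """Decide whether a prediction run is allowed right now.

    Hypothetical illustration: a token bucket limits run frequency, and a
    caller-supplied load function blocks runs while the host is busy.
    """

    def __init__(
        self,
        rate_per_s: float,
        burst: int,
        max_load: float,
        load_fn: Callable[[], float],
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._rate = rate_per_s          # tokens added per second
        self._capacity = float(burst)    # maximum tokens that can accumulate
        self._tokens = float(burst)      # start with a full bucket
        self._max_load = max_load        # refuse runs above this load value
        self._load_fn = load_fn
        self._clock = clock
        self._last = clock()

    def allow(self) -> bool:
        """Return True and consume a token if a run may start now."""
        now = self._clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        elapsed = now - self._last
        self._tokens = min(self._capacity, self._tokens + elapsed * self._rate)
        self._last = now

        if self._load_fn() > self._max_load:
            return False  # host is busy; keep the token for later
        if self._tokens < 1.0:
            return False  # ran too often recently
        self._tokens -= 1.0
        return True
```

On a Unix host, `load_fn` could be something like `lambda: os.getloadavg()[0]` (the one-minute load average), and the agent would call `gate.allow()` before each prediction and simply skip or defer the run when it returns `False`. Keeping the load source injectable means the same gate works with whatever signal fits best, such as request latency or queue depth.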

On a related note, I attended Cloudflare’s outage post-mortem today. It wasn’t the detailed walk-through of their systems I had expected; it was more of a reflection on the broader implications of AI-native tooling in production environments. The Cloudflare team had to intervene manually and throttle some AI-driven features during the incident, which reinforced my belief that no matter how smart these tools are, human oversight is still necessary.

As we wrap up the day, I’m left thinking about how much has changed since those early days of AI copilots. They’ve evolved from experimental side projects into critical, almost indispensable components of our infrastructure. But with great power comes great responsibility, especially when it comes to balancing efficiency and reliability.

Tomorrow is another day, and there’s always more to learn. For now, I’m hitting the pillow knowing that even if AI copilots can’t do everything for us, we can still use them to make our jobs easier and more efficient.

Peace, Brandon