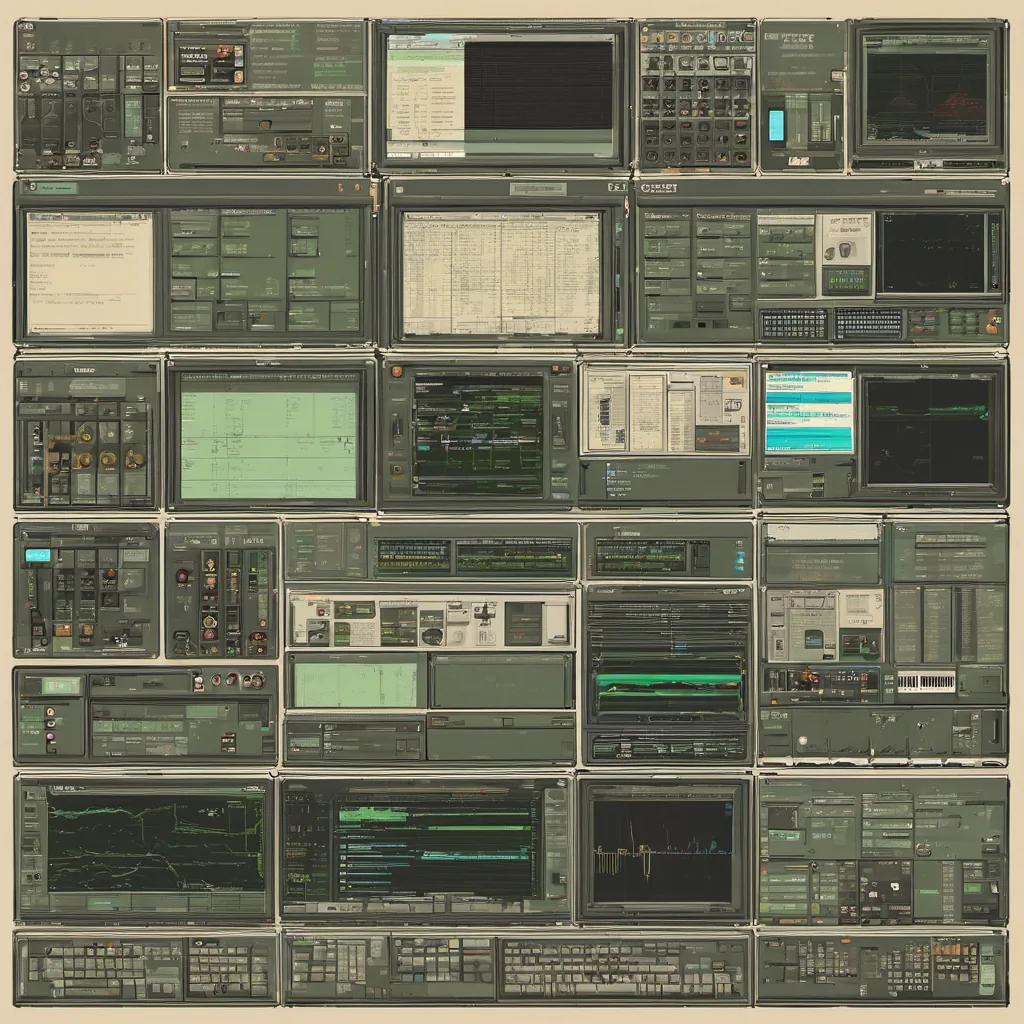

Debugging the DevOps Dilemma: When LLMs Start to Code

October 16, 2023. It’s been almost a year since ChatGPT launched, and the AI/LLM infrastructure explosion that followed has really hit its stride. As platform engineers, we’re all trying to navigate this new reality and figure out how these magical tools fit into our day-to-day work.

The Setup

A few weeks ago, I found myself in the middle of a debate with my team. We were evaluating how to integrate some LLM capabilities into our existing CI/CD pipeline. The goal was simple: use a hosted LLM from a provider such as Anthropic or Stability AI to automatically generate and test code snippets for certain tasks, like API integration tests or even initial skeleton code for new features.

The idea seemed promising. After all, these models are trained on massive datasets and can write decent Python or Java with minimal guidance. But as always in tech, there were caveats.

The Experiment

We decided to give it a shot. I set up one of Anthropic’s models through their API and wrote a script that took natural language prompts from our team and turned them into code snippets. The first tests looked promising: code came back quickly and usually followed the prompt accurately.
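
To make that concrete, here is a minimal sketch of what a prompt-to-snippet script could look like. It is not our actual script. The `complete` callable is a hypothetical stand-in for whatever call your provider's SDK exposes, and the prompt wording, `build_prompt`, `extract_code`, and `fake_complete` are all made up for illustration.

```python
import re
from typing import Callable

# Built this way so the example can itself sit inside a fenced block.
FENCE = "`" * 3


def build_prompt(task: str) -> str:
    """Wrap a plain-language task in instructions for the model (wording is illustrative)."""
    return (
        "Write Python code for the task below. "
        "Reply with a single fenced code block and nothing else.\n\n"
        f"Task: {task}\n"
    )


def extract_code(reply: str) -> str:
    """Return the body of the first fenced block, or the whole reply if there is none."""
    pattern = re.compile(FENCE + r"[\w+-]*\n(.*?)" + FENCE, re.DOTALL)
    match = pattern.search(reply)
    return (match.group(1) if match else reply).strip()


def generate_snippet(task: str, complete: Callable[[str], str]) -> str:
    """Send the task to the model via `complete` and pull the code out of the reply."""
    return extract_code(complete(build_prompt(task)))


def fake_complete(prompt: str) -> str:
    """Hypothetical stand-in for a real API call, so the sketch runs offline."""
    return f"Here you go:\n{FENCE}python\nprint('hello')\n{FENCE}\n"


if __name__ == "__main__":
    print(generate_snippet("print a greeting", fake_complete))
```

Passing the model call in as a function keeps the script independent of any one provider, and it means the prompt and parsing logic can be tested without spending a single API call.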

But then, as we moved forward, issues started cropping up. One common problem was context. Each request only sees what is sent along with it, so the LLMs didn’t remember what had previously been discussed or coded, which led to redundant work and confusion. Another issue was code quality: the generated snippets were syntactically correct, but they often lacked good practices like error handling or sensible variable names.
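
One way to soften the context problem is to carry earlier exchanges forward in each new prompt. The sketch below shows the idea under some loud assumptions: `PromptContext` is a hypothetical helper, the budget is counted in characters for simplicity (real model limits are in tokens and vary by model), and the prompt wording is invented.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class PromptContext:
    """Keeps the most recent exchanges so each new request can include them."""

    # Assumed budget in characters; real limits are in tokens and depend on the model.
    max_chars: int = 4000
    _turns: deque = field(default_factory=deque)

    def add(self, task: str, code: str) -> None:
        """Remember a finished exchange, dropping the oldest ones if over budget."""
        self._turns.append((task, code))
        while len(self._turns) > 1 and len(self.render()) > self.max_chars:
            self._turns.popleft()

    def render(self) -> str:
        """Format the remembered exchanges as plain text."""
        return "\n\n".join(
            f"Earlier task: {task}\nCode we already have:\n{code}"
            for task, code in self._turns
        )

    def build_prompt(self, task: str) -> str:
        """Prefix the new task with whatever history is still in the window."""
        history = self.render()
        if not history:
            return f"New task: {task}"
        return f"{history}\n\nNew task: {task}\nReuse the code above where it applies."
```

A rolling window like this is crude. It forgets the oldest work first, whether or not that work is still relevant, but it at least stops the model from starting from zero on every request.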

Debugging the Debugger

The biggest challenge came when we tried to run these generated snippets in our CI/CD pipeline. We encountered a whole new set of issues related to environment setup and dependencies. The LLMs had no way of knowing which packages and versions our backend actually used, so they fell back on whatever was common in their training data, leading to many “not found” errors.
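
A cheap guard against that class of failure is to check a snippet's imports before running it. Here is a rough sketch using only the standard library. The helper names `imported_modules` and `missing_modules` are hypothetical, and the check only confirms that a top-level module can be found in the current environment. It says nothing about versions or about names imported from inside a module.

```python
import ast
import importlib.util


def imported_modules(source: str) -> set[str]:
    """Collect the top-level module names that the source imports."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # level == 0 skips relative imports such as "from . import x".
            names.add(node.module.split(".")[0])
    return names


def missing_modules(source: str) -> list[str]:
    """Return imported modules that cannot be found in the current environment."""
    return sorted(
        name
        for name in imported_modules(source)
        if importlib.util.find_spec(name) is None
    )


if __name__ == "__main__":
    snippet = "import json\nimport some_package_we_do_not_have\n"
    print(missing_modules(snippet))
```

Run as an early pipeline step, a check like this turns a confusing runtime failure into a clear message about which module is absent, and the list could be sent back to the model with a request to stick to what is installed.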

We hit a few walls trying to debug these issues, but eventually, we figured out some workarounds. For example, we added a step to the pipeline where the generated code was manually reviewed and adjusted before being executed. This worked, but it felt like we were losing part of the automation advantage.

The Financial View

On top of this, there was the FinOps aspect. We had to ensure that integrating these LLMs didn’t blow up our cloud bill. Every API call and every generated snippet adds cost, so we had to keep track of how much we were using and look for ways to cut the calls we didn’t need.
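
The arithmetic behind that is simple enough to sketch. The example below assumes the provider bills input and output tokens at separate per-1,000-token rates, which is a common model but not something to take from this post. `Pricing`, `call_cost`, and `BudgetTracker` are hypothetical names, and any rates you plug in should come from your provider's current price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    # Placeholder rates in USD per 1,000 tokens, not any provider's real prices.
    input_per_1k: float
    output_per_1k: float


def call_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one call, assuming input and output tokens are billed separately."""
    return (
        input_tokens / 1000 * pricing.input_per_1k
        + output_tokens / 1000 * pricing.output_per_1k
    )


class BudgetTracker:
    """Adds up the estimated cost of each call against a fixed budget."""

    def __init__(self, pricing: Pricing, budget_usd: float) -> None:
        self.pricing = pricing
        self.budget_usd = budget_usd
        self.spent_usd = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> float:
        """Add one call to the running total and return its estimated cost."""
        cost = call_cost(self.pricing, input_tokens, output_tokens)
        self.spent_usd += cost
        return cost

    @property
    def over_budget(self) -> bool:
        return self.spent_usd > self.budget_usd
```

With made-up rates of 0.01 and 0.03 USD per 1,000 tokens, a call with 2,000 input tokens and 500 output tokens comes to 2 × 0.01 + 0.5 × 0.03 = 0.035 USD. That looks tiny until a pipeline makes that call on every commit.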

The Lesson

By the end of it all, I realized that while LLMs are incredibly powerful tools, they aren’t a silver bullet just yet. They can help with initial brainstorming or quick code snippets, but they need human oversight for more complex tasks. And let’s not forget about the costs—every extra API call and running environment adds up.

Moving Forward

For now, we’ve decided to use LLMs as part of our developer experience toolkit rather than a full-fledged production tool. The team is using them for quick prototyping and idea generation, but all final code still goes through manual review and testing in the pipeline.

As for FinOps, we’re keeping a close eye on costs and looking into ways to optimize. Maybe there are some new tools or best practices that can help us manage these expenses better.

In conclusion, as much as I love the idea of AI writing my code for me, it’s clear that we need to approach this with caution and a bit of pragmatism. It’s one more tool in our belt, but it won’t replace us anytime soon.

This was just another day in tech, full of challenges and learning experiences. The future is here, but so are the questions about how best to integrate these new tools into our workflows.