Debugging a Real-Time AI Copilot Mishap

March 16, 2026. I’m sitting in my office at the end of another long day, with my copilot (AI assistant) running quietly alongside me. The tech landscape is ever-changing, but one thing remains constant: bugs happen.

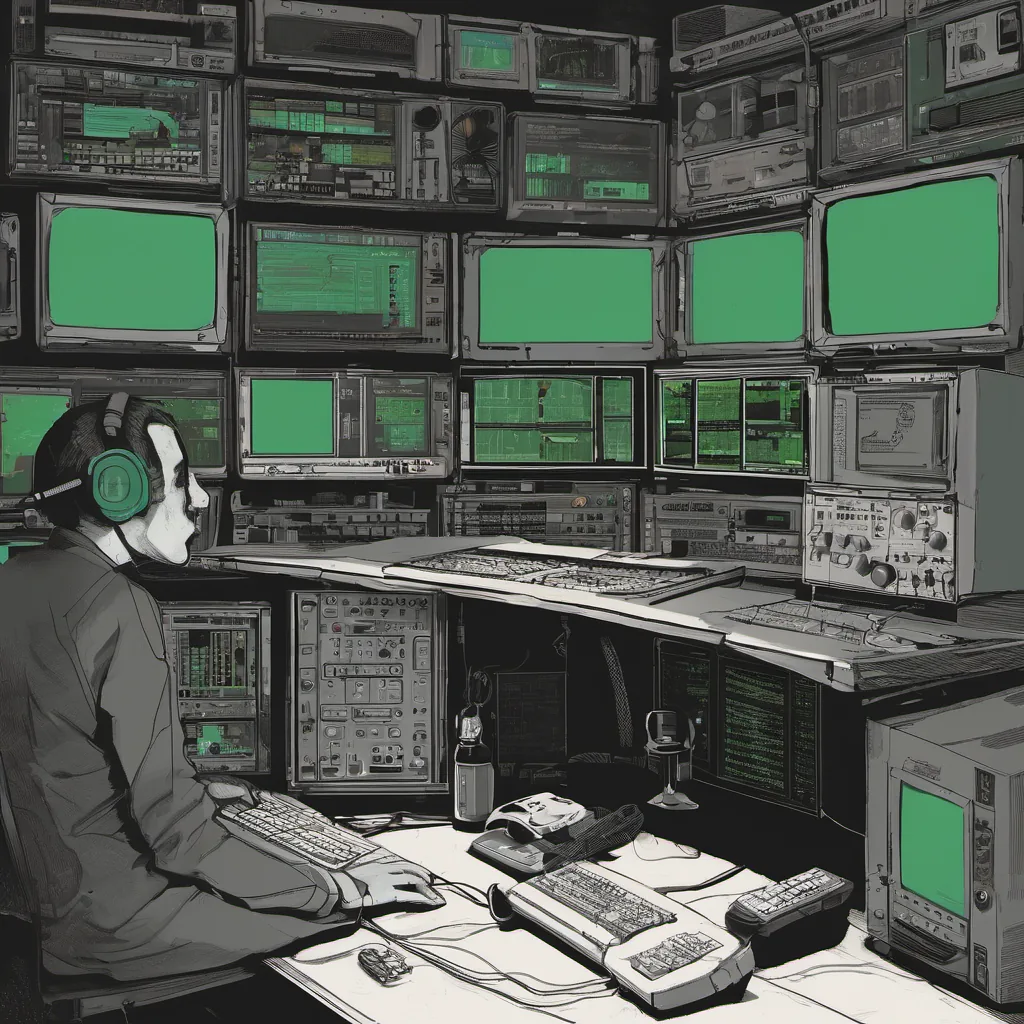

Today turned eventful when a colleague came to me with an urgent issue: our internal platform for AI-native tooling had suddenly started producing incorrect results during real-time operations. Specifically, the copilot’s code-change suggestions were leading developers down a rabbit hole of bugs instead of helping them. I took a deep breath and headed over to the ops console, my trusty eBPF tools at hand.

Upon investigation, it became clear that something had gone wrong in our AI infra pipeline. The models were trained on clean datasets, but somehow the real-time data was getting mangled before reaching the copilot’s inference engine. I dove into the logs and saw a pattern: certain types of code changes were consistently receiving incorrect suggestions.

First, I reran some basic tests on my local setup, using the same data that had caused the issue. Surprisingly, everything worked as expected. This pointed to a problem in our production environment rather than a bug in training or model inference itself.

I decided to leverage eBPF for deeper tracing and debugging. I wrote a small probe to intercept network requests between the copilot’s backend and its data source, and it quickly became apparent that some packets were being corrupted before reaching their intended destination. The culprit? It turned out to be an edge case in our Wasm runtime environment.
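One way to make this kind of corruption explicit, rather than inferring it from traces, is to frame each payload with a length and a checksum at the sender and verify both at the receiver. The sketch below is not the eBPF probe itself. The wire format and the names `frame_payload`, `verify_frame`, and `CorruptFrame` are assumptions made up for illustration.

```python
import struct
import zlib

# Hypothetical wire format: 4-byte big-endian length, 4-byte CRC32, then payload.
HEADER = struct.Struct(">II")


class CorruptFrame(ValueError):
    """Raised when a frame fails its length or checksum check."""


def frame_payload(payload: bytes) -> bytes:
    """Prefix the payload with its length and CRC32 so the receiver can verify it."""
    return HEADER.pack(len(payload), zlib.crc32(payload)) + payload


def verify_frame(frame: bytes) -> bytes:
    """Return the payload if the frame is intact, otherwise raise CorruptFrame."""
    if len(frame) < HEADER.size:
        raise CorruptFrame("frame shorter than header")
    length, checksum = HEADER.unpack_from(frame)
    payload = frame[HEADER.size:]
    if len(payload) != length:
        raise CorruptFrame(f"expected {length} bytes, got {len(payload)}")
    if zlib.crc32(payload) != checksum:
        raise CorruptFrame("checksum mismatch")
    return payload
```

With a check like this on the receiving side, a mangled payload fails loudly at the boundary instead of quietly reaching the inference engine.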

Wasm had recently been integrated as part of our multi-cloud strategy, allowing us to run consistent code across different cloud providers. However, the way we had configured it for real-time data processing was causing some buffer overflows and packet loss. Once I identified this issue, fixing it was a matter of tweaking some Wasm module settings and reconfiguring our network stacks.
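The general failure mode here, a fixed-size buffer being overrun by real-time data, can be illustrated with a bounded buffer that refuses a write it cannot hold in full, so the caller can back off instead of losing bytes. This is a Python sketch of the idea only. The actual Wasm module settings are not shown in this post, and `BoundedBuffer` and its capacity are hypothetical.

```python
class BoundedBuffer:
    """Fixed-capacity byte buffer that rejects writes it cannot hold in full."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._capacity = capacity
        self._data = bytearray()

    def free(self) -> int:
        """Number of bytes that can still be written."""
        return self._capacity - len(self._data)

    def try_write(self, chunk: bytes) -> bool:
        """Append chunk only if it fits entirely; return False so the caller can retry later."""
        if len(chunk) > self.free():
            return False
        self._data += chunk
        return True

    def read(self, n: int) -> bytes:
        """Remove and return up to n bytes from the front of the buffer."""
        out = bytes(self._data[:n])
        del self._data[:n]
        return out
```

The design choice is to surface backpressure to the producer: a rejected write is visible and retryable, whereas a silent truncation looks like packet loss further down the pipeline.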

As I made these changes, I couldn’t help but think about Tony Hoare’s legacy. He introduced the null reference in ALGOL W in 1965 and later called it his “billion-dollar mistake,” a design decision whose consequences are still felt across the field. Here we were, dealing with a similar class of problem in a more modern context, but with tooling that is newer and less well understood.

The fix went live at 2 AM, and by morning, the copilot was back to its usual helpful self. It was a good reminder of how much can go wrong when integrating complex systems, especially when AI is involved. The integration of copilots into our workflow has been transformative, but it’s also introduced new layers of complexity.

This incident also highlighted the importance of rigorous testing and monitoring in production environments. As we continue to rely more on AI-native tooling, these kinds of edge cases will become even more critical to address. I’ll definitely be implementing a more robust system for cross-cloud consistency checks moving forward.
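A cross-cloud consistency check could start as simply as running the same sample through each environment and comparing output digests. The following is a minimal sketch under that assumption, not the system I will end up building. `find_divergent` and the runner names in the usage note are hypothetical.

```python
import hashlib
from collections import Counter
from typing import Callable, Dict, List


def find_divergent(
    runners: Dict[str, Callable[[bytes], bytes]], sample: bytes
) -> List[str]:
    """Run one sample through every environment and return the names of those
    whose output digest differs from the most common digest."""
    digests = {
        name: hashlib.sha256(run(sample)).hexdigest()
        for name, run in runners.items()
    }
    if not digests:
        return []
    # On a tie, Counter keeps the digest that was seen first.
    majority_digest, _ = Counter(digests.values()).most_common(1)[0]
    return sorted(name for name, d in digests.items() if d != majority_digest)
```

For example, with three hypothetical runners `cloud_a`, `cloud_b`, and `cloud_c`, where only `cloud_c` truncates its output, the function returns `["cloud_c"]`. Majority voting is a simplification: it cannot tell which side is correct when exactly two environments disagree.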

As I reflect on this day, I’m grateful for the opportunity to work with such advanced tools and technologies. At the same time, it serves as a reminder that no matter how much automation we implement, the basics of solid engineering practice still hold true: test, monitor, debug, and iterate. The journey isn’t over by any means, but for now, things are back on track.

That’s where I left off at the end of March 16, 2026, with a bit more confidence in my systems and a reminder to keep learning every day.