$ cat post/the-floppy-disk-spun-/-we-ran-it-until-it-melted-/-the-merge-was-final.md

the floppy disk spun / we ran it until it melted / the merge was final

Title: March 16, 2015 - Dockerizing Our Way to a New Era

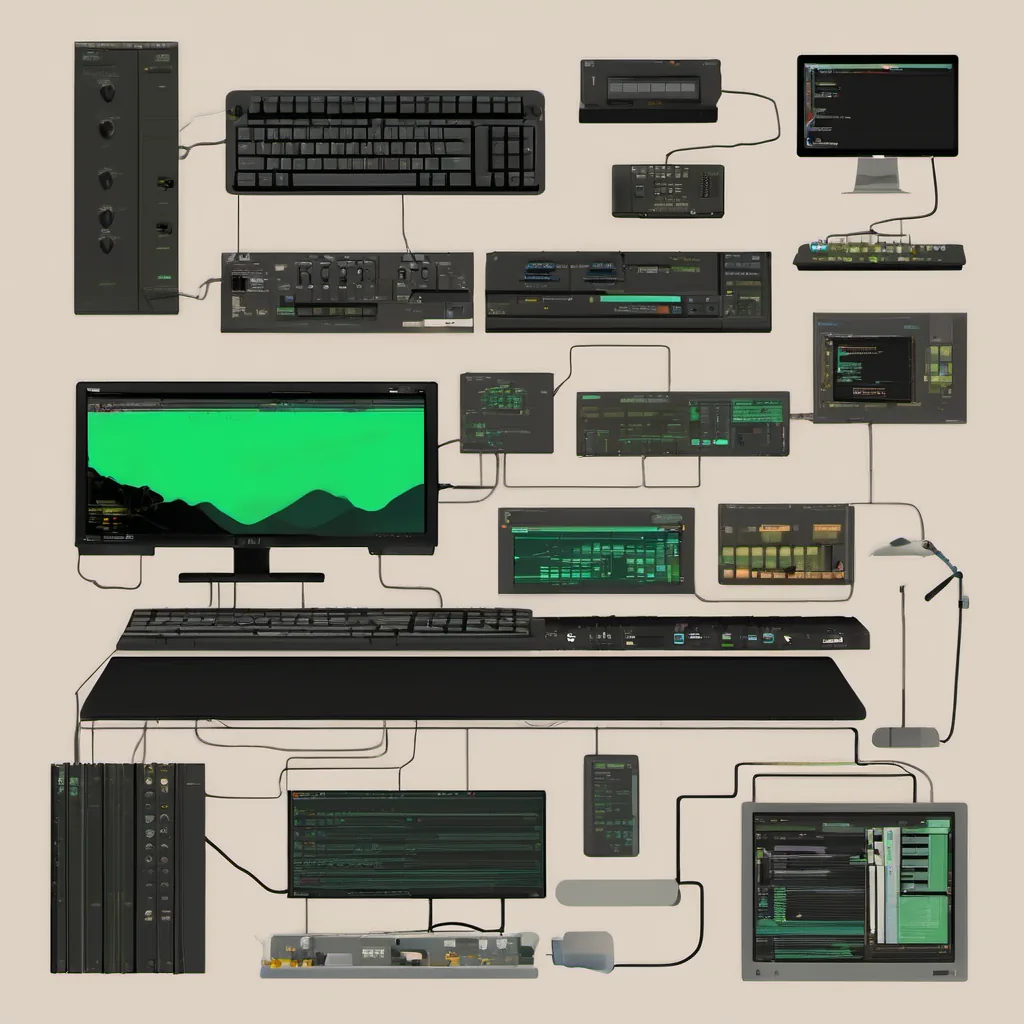

Today is a big day for our team. It’s March 16, 2015, and I’ve just finished moving our application into Docker containers. Until now we’ve relied on VMs and manual deployment processes, and it feels like we’re finally stepping into the future.

The Journey So Far

For the past few years, my team and I have been running a variety of services on virtual machines (VMs). Each service lived in its own VM, which was spun up when needed and shut down when not. We had our own internal tooling for deployment, monitoring, and scaling. But as we scaled up, it became clear that this model wasn’t sustainable.

We started to look at Docker and containerization as a solution. Docker promised isolation without the overhead of VMs and easy portability across different environments. Plus, Kubernetes was making waves, so the time seemed right to make the leap.

The Decision

After some research and planning, we decided to go all in with Docker. We spent weeks setting up our infrastructure, writing Dockerfiles for each service, and creating a robust deployment pipeline. The biggest challenge was ensuring that all services could run on the same platform without conflicts. We had a mix of Python, Java, Ruby, and Node.js applications, which added complexity.

Challenges Along the Way

One day during testing, one of our services kept crashing shortly after starting in a container. I spent hours debugging, pulling out my hair (figuratively), trying to figure out why. It turned out that the service depended on environment variables that were never set inside the container. Once I fixed that, everything worked smoothly.
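A minimal sketch of how this kind of failure can be surfaced earlier, assuming a Python service. The helper `require_env` and the exception `MissingEnvError` are hypothetical names for illustration, not code from the services described here. The idea is to check every required variable at startup and fail with one clear message instead of crashing later.

```python
import os


class MissingEnvError(RuntimeError):
    """Raised when one or more required environment variables are absent."""


def require_env(names, environ=None):
    """Return the requested variables as a dict, or raise listing all that are missing.

    `environ` defaults to the process environment; passing a plain dict
    makes the check easy to exercise in tests.
    """
    environ = os.environ if environ is None else environ
    # Treat empty strings as missing, since an empty value is rarely intended.
    missing = [name for name in names if not environ.get(name)]
    if missing:
        raise MissingEnvError(
            "missing environment variables: " + ", ".join(missing)
        )
    return {name: environ[name] for name in names}
```

Called once at startup with the names a service needs (for example a made-up `DATABASE_URL`), this turns a confusing crash into a single readable error in the container logs. The values themselves can be supplied with `docker run -e NAME=value` or `--env-file`.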

Another challenge was making sure our monitoring and logging solutions played nicely with Docker. We ended up using Prometheus for metrics and ELK (Elasticsearch, Logstash, Kibana) for logs. Integrating these with Docker took some work, but it ultimately made our debugging process much easier.
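As an illustration of the logging side, here is a rough sketch of one common pattern: have the service write one JSON object per line to stdout, which Docker captures as the container's log stream and which a JSON-aware log pipeline can parse without custom patterns. This is an assumption about how such a setup could look, not a description of the actual pipeline; `JsonFormatter` and `build_logger` are hypothetical names.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object on one line."""

    def format(self, record):
        payload = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Include the traceback as a string so it stays on the same JSON line.
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name, stream=None):
    """Create a logger that writes JSON lines to stdout (or to the given stream)."""
    handler = logging.StreamHandler(sys.stdout if stream is None else stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]  # replace any existing handlers to avoid duplicates
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger
```

Writing to stdout rather than to files inside the container keeps the service unaware of where its logs end up, so the collection side can change without touching application code.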

The Big Day

Today is the day we finally switched all our services over to Docker containers. It was a moment of mixed feelings: exhilarating to see everything running smoothly, but also a bit scary to think about what might go wrong. We had a solid plan in place for rolling out changes and handling issues, but there’s always that nagging feeling of “what if.”

We did a full rollout across all our environments (dev, staging, production). The initial results are encouraging: faster deployment times, easier scaling, and less overhead. Our developers are happier because the development environment now closely mirrors production.

Lessons Learned

This transition taught us several valuable lessons:

- Documentation is key: Every service got its own Dockerfile along with detailed setup instructions.

- Testing is non-negotiable: We wrote a suite of integration tests to ensure that services worked as expected in a containerized environment.

- Monitoring and logging are essential: Without proper monitoring and logging, any issues would have been much harder to track down.
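To make the testing lesson a bit more concrete, here is a small sketch of one building block such an integration suite might use: waiting until a containerized service accepts TCP connections before running assertions against it. The post does not show the actual tests, so `wait_for_port` and its parameters are hypothetical.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # A successful connect means something is listening; close it right away.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

A test would typically start the container, call `wait_for_port` with the published port, and only then exercise the service. An open port only shows that the process is listening, not that it is healthy, so real suites usually follow this with an application-level check.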

Looking Forward

Now that we’ve made the switch, we’re looking forward to diving deeper into Kubernetes for orchestration and automation. The future is bright, and I’m excited to see where this journey takes us. Containerization has transformed our workflow, and I can’t wait to see what the next big thing will be.
