$ cat post/ssh-key-accepted-/-the-interrupt-handler-failed-/-the-pod-restarted.md

ssh key accepted / the interrupt handler failed / the pod restarted

Title: Kubernetes Wars and My Unloved Sidecar

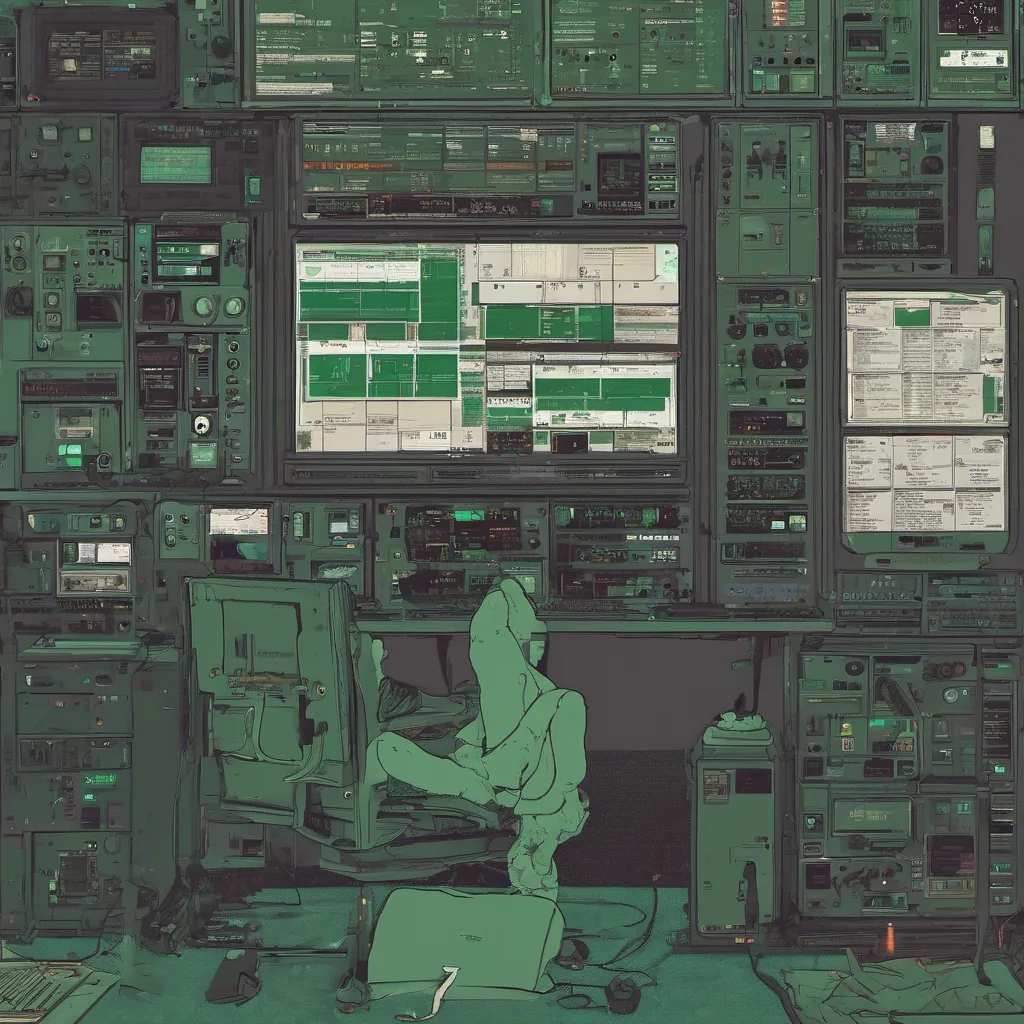

July 16, 2018, was just another day in the container wars. Everyone had a favorite weapon, but one thing was clear: Kubernetes was winning. Helm, Istio, Envoy, and others were emerging like sidekicks to make our lives easier (or harder, depending on your perspective). I found myself knee-deep in Kubernetes clusters, trying to figure out how to get everyone else’s code running without breaking things.

Today, we’re dealing with a cluster that’s acting up. It’s been humming along for months, but suddenly pods are crashing left and right. The first thing you do is dig into the logs, hoping to find a smoking gun. But no dice: everything looks fine. Time to fire up the metrics. Maybe something in Prometheus or Grafana will show us what’s going on.

I start by checking out Prometheus. A few minutes later, I’m staring at a graph that shows my heart rate rising. The node CPU usage is spiking all over the place! I run `kubectl top nodes` and sure enough, one of our worker nodes has hit 100% CPU utilization. That goes a long way toward explaining why things are crashing.
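If you want to script this check rather than eyeball it, here is a minimal sketch in Python. It assumes the usual five-column table that `kubectl top nodes` prints; the node names and numbers in `SAMPLE` are made up for illustration, and `parse_top_nodes` and `hot_nodes` are hypothetical helper names, not part of any tool.

```python
def parse_top_nodes(output: str) -> list:
    """Parse the table printed by `kubectl top nodes` into a list of dicts.

    Simplification: rows where metrics are missing (shown as "<unknown>")
    are not handled and would raise a ValueError.
    """
    lines = [line for line in output.strip().splitlines() if line.strip()]
    rows = []
    for line in lines[1:]:  # the first line is the column header
        name, cpu_cores, cpu_pct, memory, mem_pct = line.split()
        rows.append({
            "name": name,
            "cpu_cores": cpu_cores,
            "cpu_percent": int(cpu_pct.rstrip("%")),
            "memory": memory,
            "memory_percent": int(mem_pct.rstrip("%")),
        })
    return rows


def hot_nodes(output: str, threshold: int = 90) -> list:
    """Return the names of nodes whose CPU% is at or above the threshold."""
    return [
        row["name"]
        for row in parse_top_nodes(output)
        if row["cpu_percent"] >= threshold
    ]


# Made-up sample output, for illustration only.
SAMPLE = """\
NAME       CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
worker-1   412m         20%    2210Mi          58%
worker-2   2000m        100%   3105Mi          81%
worker-3   655m         32%    1890Mi          49%
"""

if __name__ == "__main__":
    print(hot_nodes(SAMPLE))
```

With the sample above, `hot_nodes(SAMPLE)` returns `['worker-2']`. In practice you would feed it the captured output of the real command instead of a hard-coded string.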

But where’s the culprit? I grep through the logs of the pods on that node, looking for anything suspicious, but they’re clean as can be. Time to get granular. I start with `kubectl describe pod` to gather more information about the containers and their resources. The resource limits are set to reasonable values: 100m CPU and 256Mi memory each. But there are no explicit requests. Kubernetes fills a missing request in from the limit, so on paper nothing here should let one container take as much of the node as it wants.
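One detail worth pinning down here: when a container sets a limit for a resource but no request, Kubernetes uses the limit as the request. The sketch below models that rule and converts the quantity strings into plain numbers. The function names and the `container` dict are hypothetical; the dict only mirrors the shape of a container entry in a pod spec, and the parsers cover just the common suffixes.

```python
# Multipliers for the common Kubernetes memory suffixes.
_BINARY = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}
_DECIMAL = {"k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12}


def parse_cpu(quantity: str) -> float:
    """Convert a CPU quantity to cores: '100m' -> 0.1, '2' -> 2.0."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity: str) -> int:
    """Convert a memory quantity to bytes: '256Mi' -> 268435456."""
    for suffix, factor in {**_BINARY, **_DECIMAL}.items():
        if quantity.endswith(suffix):
            return int(float(quantity[: -len(suffix)]) * factor)
    return int(quantity)  # no suffix means plain bytes


def effective_requests(container: dict) -> dict:
    """Return the requests a container ends up with.

    Any resource that has a limit but no explicit request gets the limit
    as its request. Admission-time defaults (for example from a
    LimitRange) are ignored in this sketch.
    """
    resources = container.get("resources", {})
    requests = dict(resources.get("requests", {}))
    for name, value in resources.get("limits", {}).items():
        requests.setdefault(name, value)
    return requests


# Hypothetical container entry matching the values in this post.
container = {
    "name": "app",
    "resources": {"limits": {"cpu": "100m", "memory": "256Mi"}},
}

if __name__ == "__main__":
    reqs = effective_requests(container)
    print(reqs, parse_cpu(reqs["cpu"]), parse_memory(reqs["memory"]))
```

For the container above, the effective requests come out as 100m CPU (0.1 cores) and 256Mi memory (268435456 bytes), the same as the limits.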

This is where Istio comes in. I decide to deploy a sidecar with Envoy to see if it can give us some more insight. Sidecars are the new kids on the block—everyone’s talking about them but not everyone’s using them yet. My colleague, Alex, tells me he heard they can help debug resource issues like this. I’m skeptical but willing to give it a shot.

I fire up my terminal and start with istioctl commands. It takes some fiddling, but eventually I get Envoy sidecars running on the problematic pods. Now we have more detailed telemetry for each pod to line up against the resource graphs. After an hour or so of tweaking and waiting, I finally see something that catches my eye: a spike in CPU usage just before one of the pods crashes.
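Spotting "a spike just before a crash" by eye works, but it can also be expressed in a few lines. This is a rough sketch that assumes you have exported samples of a cumulative CPU counter (cAdvisor exposes one as `container_cpu_usage_seconds_total`) along with the time of a crash. The post does not say which metric was actually on the graph, so treat the metric choice, the helper names, the threshold, and the sample values as assumptions.

```python
def cpu_rates(samples):
    """Turn cumulative CPU-seconds samples into per-interval usage in cores.

    `samples` is a list of (timestamp_seconds, cumulative_cpu_seconds).
    Each returned pair is (end_of_interval_timestamp, average_cores).
    Intervals with a counter reset or non-increasing time are skipped.
    """
    rates = []
    for (t0, c0), (t1, c1) in zip(samples, samples[1:]):
        if t1 <= t0 or c1 < c0:
            continue
        rates.append((t1, (c1 - c0) / (t1 - t0)))
    return rates


def spikes_before(rates, crash_time, window=60.0, threshold=0.5):
    """Return the (timestamp, cores) pairs at or above `threshold` cores
    that fall within `window` seconds before `crash_time`."""
    return [
        (t, cores)
        for t, cores in rates
        if crash_time - window <= t <= crash_time and cores >= threshold
    ]


# Made-up samples: steady at 0.1 cores, then 0.9 cores before a crash at t=125.
samples = [(0, 0), (30, 3), (60, 6), (90, 33), (120, 60)]

if __name__ == "__main__":
    for t, cores in spikes_before(cpu_rates(samples), crash_time=125):
        print(f"t={t}s cores={cores:.2f}")
```

With these sample values the two intervals ending at 90s and 120s are flagged, both at roughly 0.9 cores.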

With this new insight, it’s clear: a particular piece of code within the container is causing the issue. It’s a long-running background task that wasn’t supposed to affect much but apparently was sucking up all the resources. I run the task locally and can see why—it’s not optimized for production use at all. Time to refactor.
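For the "run it locally" step, a small timing harness is usually enough to tell whether a task is burning CPU or mostly waiting. The sketch below is only an illustration: `profile_task`, `busy_poll`, and `paced_poll` are hypothetical stand-ins, not the actual task from this cluster, and pacing the loop is just one of several possible fixes.

```python
import time


def profile_task(task, *args, **kwargs):
    """Run `task` once and report wall-clock time versus CPU time.

    CPU time comes from time.process_time(), which covers the whole
    process (all threads) and excludes time spent sleeping.
    """
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    result = task(*args, **kwargs)
    cpu = time.process_time() - cpu_start
    wall = time.perf_counter() - wall_start
    return {
        "result": result,
        "wall_seconds": wall,
        "cpu_seconds": cpu,
        "cpu_fraction": cpu / wall if wall > 0 else 0.0,
    }


def busy_poll(iterations):
    """Stand-in for an unoptimized task: loops flat out with no pause."""
    total = 0
    for i in range(iterations):
        total += i * i
    return total


def paced_poll(rounds, pause=0.01):
    """Same work, but sleeps between rounds so the CPU is given back."""
    total = 0
    for i in range(rounds):
        total += i * i
        time.sleep(pause)
    return total


if __name__ == "__main__":
    for name, stats in [
        ("busy", profile_task(busy_poll, 2_000_000)),
        ("paced", profile_task(paced_poll, 20)),
    ]:
        print(f"{name}: wall={stats['wall_seconds']:.3f}s "
              f"cpu={stats['cpu_seconds']:.3f}s "
              f"fraction={stats['cpu_fraction']:.2f}")
```

A CPU fraction close to 1.0 means the task keeps a full core busy for as long as it runs, which is the pattern to look for in a background job that was never meant to compete with the main workload.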

As I sit there, typing out the new code, I realize how often we rush to deploy solutions without fully understanding the problem. Kubernetes makes it so easy to spin up new instances that sometimes we forget to dig deep enough into what’s going on underneath. With a sidecar like Envoy, we have more visibility than ever, but it’s still up to us to use that information wisely.

This cluster is just one of many I manage, and these kinds of issues are common. But each time, there’s something new to learn. Today, it was about the importance of resource requests and how sidecars can provide valuable insights. Tomorrow, who knows? Maybe it will be something else entirely.

For now, though, I’m relieved. The cluster is stable again, and we’re back on track. Kubernetes, Helm, Istio, Envoy—every tool has its place. And while the learning curve is steep, dealing with issues like this keeps me grounded and reminds me why I love this job: there’s always something new to figure out.
